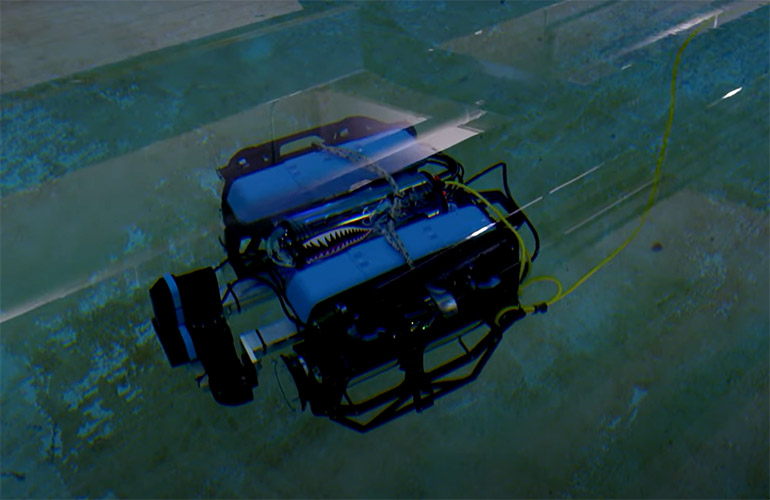

Stevens Institute of Technology’s BlueROV uses perception and mapping capabilities to operate underwater without GPS, lidar, or radar. Source: American Society of Mechanical Engineers

While defense spending is the source of many innovations in robotics and artificial intelligence, government policy usually takes a while to catch up with technological developments. Given all the attention on generative AI this year, October’s executive order on AI safety and security was “encouraging,” observed Dr. Brendan Englot, director of the Stevens Institute for Artificial Intelligence.

“There’s really very little regulation at this point, so it’s important to set commonsense priorities,” he told The Robot Report. “It’s a measured approach between unrestrained innovation for profit versus some AI experts wanting to halt all development.”

AI order covers cybersecurity, privacy, and national security

The executive order sets standards for AI testing, company information sharing with the government, and privacy and cybersecurity safeguards. The White House also directed the National Institute of Standards and Technology (NIST) to set “rigorous standards for extensive red-team testing to ensure safety before public release.”

The Biden-Harris administration’s order stated the goals of preventing the use of AI to engineer dangerous biological materials, to commit fraud, and to violate civil rights. In addition to developing “principles and best practices to mitigate the harms and maximize the benefits of AI for workers,” the administration claimed that it will promote U.S. innovation, competitiveness, and responsible government.

It also ordered the Department of Homeland Security to apply the standards to critical infrastructure sectors and to establish an AI Safety and Security Board. In addition, the executive order said the Department of Energy and the Department of Homeland Security must address AI systems’ threats to critical infrastructure and national security. The administration also plans to develop a National Security Memorandum to direct further actions.

“It’s a commonsense set of measures to make AI safer and more trustworthy, and it captured a lot of different perspectives,” said Englot, an assistant professor at the Stevens Institute of Technology in Hoboken, N.J. “For example, it called the general principle of watermarking important. This will help resolve legal disputes over audio, video, and text. It might slow things down a little bit, but the general public stands to benefit.”

Stevens Institute research touches multiple domains

“When I started with AI research, we began with conventional algorithms for robot localization and situational awareness,” recalled Englot. “At the Stevens Institute for Artificial Intelligence [SIAI], we saw how AI and machine learning could help.”

“We incorporated AI in two areas. The first was to enhance perception from limited information coming from sensors,” he said. “For example, machine learning could help an underwater robot with grainy, low-resolution images by building more descriptive, predictive maps so it could navigate more safely.”

“The second was to begin using reinforcement learning for decision making, for planning under uncertainty,” Englot explained. “Mobile robots need to navigate and make good decisions in stochastic, disturbance-filled environments, or where they don’t know the environment.”

Since taking on the director role at the institute, Englot said he has seen work to apply AI to healthcare, finance, and the humanities.

“We’re taking on bigger challenges with multidisciplinary research,” he said. “AI can be used to enhance human decision making.”

Drive to commercialization could limit development paths

Generative AI such as ChatGPT has dominated headlines all year. The recent controversy around Sam Altman’s ouster and subsequent reinstatement as CEO of OpenAI demonstrates that the path to commercialization isn’t as direct as some assume, said Englot.

“There’s never a ‘one-size-fits-all’ model to go with emerging technologies,” he asserted. “Robots have done well in nonprofit and government development, and some have transitioned to commercial applications.”

“Others, not so much. Automated driving, for instance, has been dominated by the commercial sector,” Englot said. “It has some achievements, but it hasn’t fully lived up to its promise yet. The pressures from the push to commercialization are not always a good thing for making technology more capable.”

AI needs more training, says Englot

To compensate for AI “hallucinations,” or false responses to user questions, Englot said AI will be paired with model-based planning, simulation, and optimization frameworks.

“We’ve found that the generalized foundation model for GPT-4 is not as useful for specialized domains where tolerance for error is very low, such as medical diagnosis,” said the Stevens Institute professor. “The degree of hallucination that’s acceptable for a chatbot isn’t acceptable here, so you need specialized training curated by experts.”

“For highly mission-critical applications, such as driving a vehicle, we should realize that generative AI may solve a problem, but it doesn’t understand all the rules, since they’re not hard-coded and it’s inferring from contextual information,” said Englot.

He recommended pairing generative AI with finite element models, computational fluid dynamics, or a well-trained expert in an iterative dialogue. “We’ll eventually arrive at a powerful capability for solving problems and making more accurate predictions,” Englot predicted.

Collaboration to yield advances in design

The combination of generative AI with simulation and domain experts could lead to faster, more innovative designs in the next five years, said Englot.

“We’re already seeing generative AI-enabled Copilot tools in GitHub for creating code; we could soon see them used for modeling parts to be 3D-printed,” he said.

However, using robots to serve as the physical embodiments of AI in human-machine interactions could take more time because of safety concerns, he noted.

“The potential for harm from generative AI right now is limited to specific outputs: images, text, and audio,” Englot said. “Bridging the gap between AI and systems that can walk around and have physical consequences will take some engineering.”

Stevens Institute AI director still bullish on robotics

Generative AI and robotics are “a wide-open area of research right now,” said Englot. “Everyone is trying to understand what’s possible, the extent to which we can generalize, and how to generate data for these foundational models.”

While there is an embarrassment of riches on the Web for text-based models, robotics AI developers must draw from benchmark data sets, simulation tools, and the occasional physical resource such as Google’s “arm farm.” There’s also the question of how generalizable data is across tasks, since humanoid robots are very different from drones, Englot said.

Legged robots such as the one Disney demonstrated at IROS, which was trained through reinforcement learning to walk “with personality,” show that progress is being made.

Boston Dynamics spent years designing, prototyping, and testing actuators to get to more efficient all-electric models, he said.

“Now, the AI component has come in by virtue of other companies replicating [Boston Dynamics’] success,” said Englot. “As with Unitree, ANYbotics, and Ghost Robotics trying to optimize the technology, AI is taking us to new levels of robustness.”

“But it’s more than locomotion. We’re a long way from integrating state-of-the-art perception, navigation, and manipulation and getting costs down,” he added. “The DARPA Subterranean Challenge was a great example of solutions to such challenges of mobile manipulation. The Stevens Institute is conducting research on reliable underwater mobile manipulation funded by the USDA for sustainable offshore energy infrastructure and aquaculture.”
