[ad_1]

Image by pch.vector on Freepik

With the widespread adoption of deep studying, progress in Automated Speech Recognition (ASR) accuracy has accelerated in the previous few years. Researchers have been fast to assert human parity on particular measures. However is Speech Recognition actually a solved downside in the present day? If not, what we concentrate on measuring in the present day goes to affect the path ASR takes subsequent.

ASR methods have for a very long time centered on word error rate (WER) to measure accuracy. This metric is sensible. WER measures what number of phrases the system will get unsuitable for each hundred phrases it acknowledges. As a result of system efficiency can range considerably relying on the situation, audio high quality, accent, and many others., it’s laborious to place a single quantity as the top objective. (After all, 0% could be good). So, as a substitute, we now have sometimes used WER to check two methods. And because the system began to get rather a lot higher, we began evaluating its WER with that of people. Human parity was initially considered a distant objective, then deep studying accelerated issues and we ended up “attaining” that rather a lot sooner.

Human judges are first requested to transcribe audio. To generate transcription references, totally different judges do a number of passes of listening to the audio and modifying to get extra correct transcriptions. Transcription is taken into account clear when a number of judges attain a consensus. Human baseline WER is measured by evaluating a brand new choose’s transcription to that of the reference transcription. One choose can nonetheless be incorrect at instances, when in comparison with a reference, actually, sometimes getting one out of twenty phrases incorrect. That is equal to a WER of 5%.

To say human parity, we sometimes examine the system towards one choose. An attention-grabbing facet of that is that ASR methods do attempt to do a number of passes themselves. Then is it honest to check towards one-person efficiency? It’s certainly honest as a result of we assume that the individual additionally had sufficient time to replay the audio as wanted when transcribing, successfully doing a number of passes.

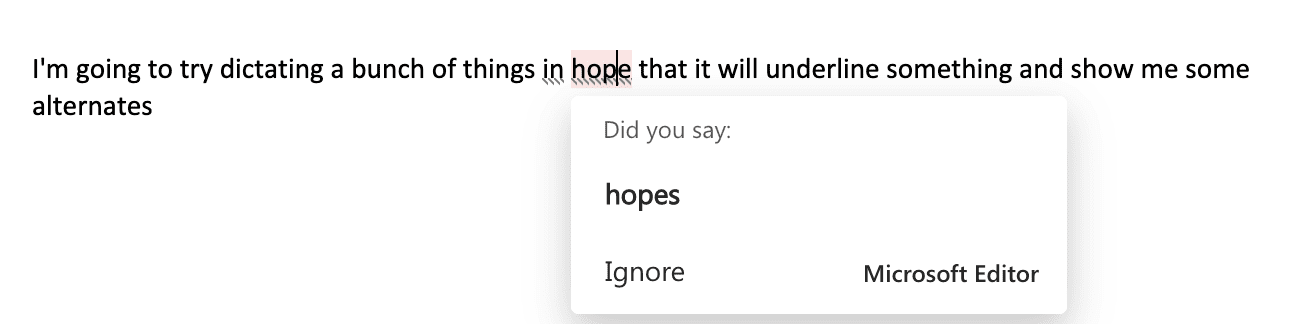

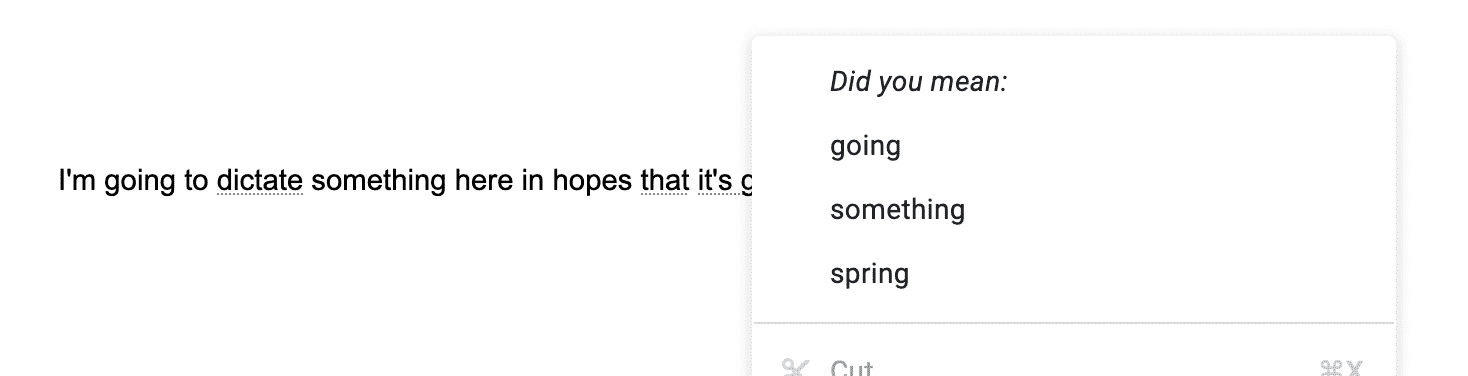

As soon as ASR reached human parity (En-US) for sure Speech Recognition duties, it rapidly turned obvious that for formally written type, getting one phrase unsuitable out of each twenty phrases continues to be a horrible expertise. A technique dictation merchandise have tried tackling this problem is by making an attempt to determine which acknowledged phrases are low confidence and surfacing viable alternate options for them. We will observe this ‘correction’ expertise in lots of business merchandise in the present day, together with Microsoft Workplace Dictation and Google Docs (Fig. 1 and Fig. 2). Nonetheless, there was one other evident problem with WER.

Determine 1: Microsoft Phrase Dictation displaying alternates for dictated textual content

Determine 2: Google Docs displaying alternates for dictated textual content

To maintain issues easy, historically WER wasn’t calculated on the ultimate written type textual content. One might argue that the first job of the ASR system was simply to acknowledge the phrases accurately and never format entities like date, time, foreign money, e-mail, and many others. accurately. So as a substitute of correctly formatted textual content, the spoken type model was used for WER calculation. It canceled out any particular formatting variations, punctuation, capitalization, and many others., and purely centered on the spoken phrases. That is an appropriate assumption if the use case is voice search, the place job completion issues way more than textual content formatting. Looking with a voice may very well be made to work with simply the ‘spoken type’. Nonetheless, with a distinct use case like voice assistant, issues began altering. Now the spoken type “wake me up at eight thirty-seven am” was a lot tougher to deal with in comparison with the written type “wake me up at 8:37 am”. The written type right here is less complicated for the assistant to parse and switch into motion.

Voice search and voice assistants are examples of what we name one-shot dictation use circumstances. Because the ASR system turned extra dependable with one-shot dictation use circumstances, consideration turned to dictation and dialog situations. These are each long-form speech recognition duties. It was straightforward to disregard punctuation for voice search or voice assistant, however for any long-form dictation or dialog, not having the ability to punctuate is a dealbreaker. Since automated punctuation fashions weren’t nearly as good but, one route that dictation took was to help ‘specific punctuation’. You would explicitly say ‘interval’ or ‘query mark’ and the system would do the suitable factor. It gave management to the customers and ‘unblocked’ them to make use of dictation for writing emails or paperwork. Different facets like capitalization or disfluency dealing with additionally began gaining significance for dictation situations. If we continued to depend on WER as the first metric, we might be falsely portray the image that our system has solved it. Our metric wanted to evolve with the evolving use circumstances for Speech Recognition.

An apparent successor of Phrase Error Charge (WER) is Token Error Charge (TER). For TER, we attempt to think about all facets of the written type like capitalization, punctuation, dysfluency, and many others., and attempt to calculate a single metric identical to WER (see Desk 1). On the identical units the place ASR had reached human parity on WER when re-evaluated with TER, ASR misplaced the human parity battle once more. Our goalpost has moved, however this time it does really feel like an actual goalpost as a result of the end result goes to be a lot nearer to the written type that’s broadly used.

| Recognition | Reference | Metric | |

| Spoken type | wake me pat eight thirty 5 a m | wake me up at eight twenty 5 a m | WER = 3/9 = 33.3% |

| Written type | Wake me pat 8:35 AM. | Wake me up at 8:25 AM. | TER = 3/7 = 42.9%

(Punctuation is counted individually) |

Desk 1: How does spoken type vs written type affect metrics computation?

TER is an efficient general metric to take a look at, nevertheless it hides inside all of the essential particulars. There at the moment are many classes contributing to this one quantity, and it isn’t about simply getting the phrases proper. So, this metric by itself is much less actionable. To make it extra actionable, we have to work out which class is contributing probably the most to it and determine to concentrate on bettering that. There’s a class imbalance problem as nicely. Lower than 2% of all tokens would comprise any numbers and number-related codecs like time, foreign money, date, and many others. Even when we get this class utterly unsuitable it might nonetheless have a restricted affect on TER relying on the TER baseline. However getting this 2% of the circumstances even unsuitable half the time could be a horrible expertise for the customers. For that reason, the TER metric alone can not information our analysis investments. I’d argue that we have to work out vital classes for our customers and measure and enhance extra focused metrics like category-F1.

Aha! That is a very powerful query for ASR. WER, TER, or category-F1 are all metrics for scientists to validate their progress, however nonetheless could be fairly far off from what’s vital for the customers. So, what’s vital? To reply this query, we’ll have to return to why customers want an ASR system within the first place. This, in fact, relies on the situation. Let’s take the case of dictation first. The only real function of dictation is to have the ability to change typing. Don’t we have already got a metric for that? Phrases per minute (WPM) is already a well-established metric to measure typing effectivity. I’d argue that is the right metric for Dictation. If dictation customers can obtain a lot greater WPM by dictating than typing, the ASR system has achieved its job. After all, WPM right here takes nicely into consideration how customers want to return and proper issues that may sluggish them down. There could be some errors which might be a must-fix for customers, after which there are some errors which might be acceptable. This naturally assigns the next weight to what issues and will even disagree with TER which supplies equal weight to all errors. Lovely!

The assembly transcription case is sort of totally different from dictation, in its goal. Dictation is a human2machine use-case and assembly transcription is a human2human use-case. WPM stops being the suitable metric. Nonetheless, if there’s a function to producing transcriptions, the suitable metric lies round that function. As an illustration, the objective of a broadcast assembly is likely to be to generate human-readable transcriptions on the finish, so what number of edits a human annotator must make on prime of the machine-generated transcript turns into a metric. That is like TER, besides it doesn’t matter if some sections are misrecognized, what issues is that the result’s coherent and flows nicely.

One other function may very well be to extract actionable insights from transcriptions or generate summaries. These are tougher to measure to attach again to recognition accuracy. Nonetheless, human interactions can nonetheless be measured, and a job completion or engagement-type metric is extra appropriate.

The metrics we mentioned to this point could be categorized as ‘on-line’ metrics and ‘offline’ metrics, as summarized in Desk 2. In an excellent world, they transfer collectively. I’d argue that whereas offline metrics may very well be an excellent indicator of potential enhancements, ‘on-line’ metrics are the actual measure of success. When improved ASR fashions are prepared, it is very important first measure the suitable offline metrics. Transport them is just half the job achieved. The actual check is handed when these fashions enhance the ‘on-line’ metrics for the shoppers.

| Offline Metrics | On-line Metrics | |

|---|---|---|

| Voice Search | Spoken-form Phrase Error Charge (WER) | Profitable Click on on related search outcome |

| Voice Assistant | Token Error Charge (TER),

Formatting F1 for time, date, cellphone numbers, and many others. |

Job Completion price |

| Voice Typing (Dictation) | Token Error Charge (TER),

Punctuation-F1, Capitalization-F1, |

Phrases per minute (WPM), Edit price,

Person retention/engagement |

| Assembly (Dialog) | Token Error Charge (TER),

Punctuation-F1, Capitalization-F1, Disfluency-F1 |

Transcription edit price,

Person engagement with Transcription UI |

Desk 2: Offline and On-line metrics for Speech Recognition

References

- “Attaining Human Parity in Conversational Speech Recognition – arXiv.” 17 Oct. 2016, https://arxiv.org/abs/1610.05256.

- Phrase Error Charge https://en.wikipedia.org/wiki/Word_error_rate

- F1 rating https://en.wikipedia.org/wiki/F-score

- Phrases per minute (WPM) https://en.wikipedia.org/wiki/Words_per_minute

Piyush Behre is a Principal Utilized Scientist at Microsoft engaged on Speech Recognition/Pure Language Processing. He acquired his Bachelor of Know-how diploma in Laptop Science and Engineering from the Indian Institute of Know-how, Roorkee.

[ad_2]

Source link