Image by Author

I think it’s safe to say 2023 is the year of Large Language Models (LLMs). From the widespread adoption of ChatGPT, which is built on the GPT-3 family of LLMs, to the release of GPT-4 with enhanced reasoning capabilities, it has been a year of milestones in generative AI. And we wake up every day to the release of new applications in the NLP space that leverage ChatGPT’s capabilities to address novel problems.

In this article, we’ll learn about Baize, a recently released open-source chat model.

Baize is an open-source chat model. Cool. But why another chat model?

Well, in a typical session with a chatbot, you don't have a single question that you’re looking for an answer to. Rather, you’ll ask a series of questions that the bot answers. This conversation chain continues in a multi-turn chat until you get your answers or an acceptable solution to your problem.

So if you want to start building your own chat models, such a multi-turn chat corpus isn’t easy to come by. Baize aims to facilitate the generation of such a corpus using ChatGPT and uses it to fine-tune a LLaMA model. This helps you build better chatbots with reduced training time.

Project Baize is funded by the McAuley lab at UC San Diego, and is the result of a collaboration between researchers at UC San Diego, Sun Yat-sen University, and Microsoft Research Asia.

Baize is named after the Chinese mythical creature Baize, which can understand human languages [1]. And understanding human languages is something we’d all like chat models to do, yes? The research paper for Baize was first uploaded to arXiv on April 3, 2023. The model’s weights and code have all been made available on GitHub solely for research purposes. So now is a great time to explore this new open-source chat model.

And, yeah, let’s learn more about Baize.

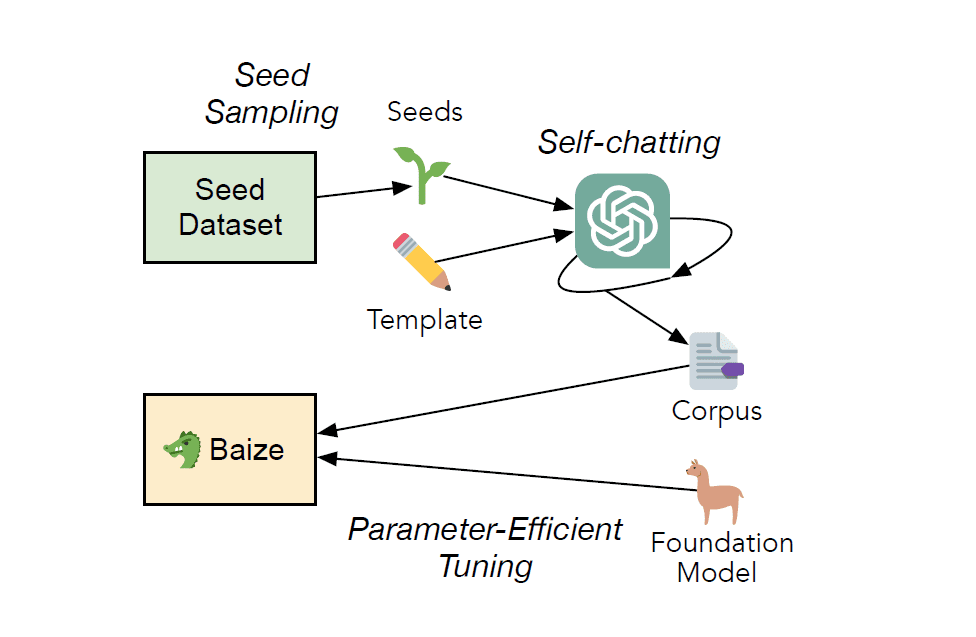

The working of Baize can be (almost) summed up in two key points:

- Generate a large corpus of multi-turn chat data by leveraging ChatGPT

- Use the generated corpus to fine-tune LLaMA

The Pipeline for Training Baize | Image source

Data Collection with ChatGPT Self-Chatting

We mentioned that Baize uses ChatGPT to construct the chat corpus. It does so using a process called self-chatting, in which ChatGPT has a conversation with itself.

A typical chat session requires a human and an AI. The self-chatting process in the data collection pipeline is designed so that ChatGPT has a conversation with itself, producing both sides of the conversation. For the self-chatting process, a template is provided along with the requirements.

The quality of conversations generated by ChatGPT is quite high (we’ve seen this more in our social media feeds than in our own ChatGPT sessions). So we get a high-quality dialogue corpus.
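The self-chatting step can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the template wording, the `[Human]`/`[AI]` markers, and the function names are assumptions, and `fake_generate` stands in for a real call to a chat model so the example runs offline.

```python
import re

# Hypothetical template; the exact wording used by Baize may differ.
TEMPLATE = (
    "The following is a conversation between a human and an AI assistant "
    "about the topic: '{seed}'. Human turns start with [Human] and AI turns "
    "start with [AI]. Complete the transcript in exactly that format.\n"
    "[Human] Hello!\n[AI] Hi! How can I help you?"
)

# Matches one "[Speaker] utterance" segment, stopping at the next marker.
TURN_PATTERN = re.compile(
    r"\[(Human|AI)\]\s*(.*?)(?=\n?\[(?:Human|AI)\]|\Z)", re.S
)


def build_self_chat_prompt(seed):
    """Insert the seed (a question or phrase) into the template."""
    return TEMPLATE.format(seed=seed)


def parse_transcript(text):
    """Split a generated transcript into (speaker, utterance) pairs."""
    return [(m.group(1), m.group(2).strip()) for m in TURN_PATTERN.finditer(text)]


def self_chat(seed, generate):
    """`generate` is any callable that maps a prompt string to a transcript."""
    return parse_transcript(generate(build_self_chat_prompt(seed)))


def fake_generate(prompt):
    # Stand-in for a real chat-model call.
    return (
        "[Human] How do I reverse a list in Python?\n"
        "[AI] You can call my_list.reverse() or use my_list[::-1].\n"
        "[Human] Which one makes a copy?\n"
        "[AI] Slicing with [::-1] returns a new list."
    )


turns = self_chat("How do I reverse a list in Python?", fake_generate)
for speaker, utterance in turns:
    print(f"{speaker}: {utterance}")
```

Because a single model call produces both sides of the conversation, each seed yields a complete multi-turn dialogue that can be stored as one training example.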

Let’s take a look at the data used by Baize:

- There’s a seed that sets the topic for the chat session. It can be a question or a phrase that gives the central idea of the conversation. In the training of Baize, questions from StackOverflow and Quora were used as seeds.

- In the training of Baize, the ChatGPT (gpt-3.5-turbo) model is used in the self-chatting data collection pipeline. The generated corpus has about 115K dialogues, with roughly 55K dialogues coming from each of the above sources.

- In addition, data from Stanford Alpaca was also used.

- Currently, three versions of the model have been released: Baize-7B, Baize-13B, and Baize-30B. (In Baize-XB, XB denotes X billion parameters.)

- The seed can also be sampled from a specific domain. This means we can run the data collection process to construct a domain-specific chat corpus. In this direction, the Baize-Healthcare model is available, trained on the publicly available MedQuAD dataset to create a corpus of about 47K dialogues.
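As a rough illustration of seed sampling, here is a small sketch that assumes the domain questions are already available as a plain list of strings. The function name and the sample questions are hypothetical and do not come from the Baize codebase or the MedQuAD dataset.

```python
import random


def sample_seeds(questions, n, rng_seed=0):
    """Pick up to n distinct, non-empty questions to use as conversation seeds."""
    # Strip whitespace and drop duplicates while preserving order.
    unique = list(dict.fromkeys(q.strip() for q in questions if q.strip()))
    rng = random.Random(rng_seed)  # fixed seed so the sample is reproducible
    return rng.sample(unique, min(n, len(unique)))


# Hypothetical domain-specific questions.
medical_questions = [
    "What are the symptoms of glaucoma?",
    "How is high blood pressure treated?",
    "What causes anemia?",
    "What are the symptoms of glaucoma?",  # duplicate, removed before sampling
]

seeds = sample_seeds(medical_questions, n=2)
print(seeds)
```

Each sampled seed would then be passed to the self-chatting step to produce one dialogue on that topic.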

Fine-Tuning in Low-Resource Settings

The next part is the fine-tuning of the LLaMA model on the generated corpus. Model fine-tuning is typically a resource-intensive task. As tuning all the parameters of a large language model is infeasible under resource constraints, Baize uses Low-Rank Adaptation (LoRA) to fine-tune the LLaMA model.
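To make the low-rank idea concrete, here is a minimal pure-Python sketch. It is not Baize's training code: the layer size (4096, the hidden size of LLaMA-7B), the rank r = 8, and the scaling factor alpha = 16 are illustrative assumptions. LoRA keeps the pretrained weight W frozen and learns two small matrices B (d × r) and A (r × k), so the effective weight is W + (alpha / r) · BA.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, b, a, alpha, r):
    """Return W + (alpha / r) * B @ A, with W frozen and only B, A trainable."""
    scale = alpha / r
    delta = matmul(b, a)  # (d x r) @ (r x k) -> (d x k)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d, k, r):
    """Compare parameter counts: full fine-tuning vs. a rank-r LoRA adapter."""
    return {"full": d * k, "lora": r * (d + k)}


# Tiny 2x2 example with rank r = 1.
w = [[1.0, 0.0], [0.0, 1.0]]
b = [[0.0], [0.0]]   # B starts at zero, so the adapted layer initially equals W
a = [[0.5, -0.5]]
unchanged = lora_effective_weight(w, b, a, alpha=16, r=1)

# Illustrative sizes for one square projection matrix.
counts = trainable_params(d=4096, k=4096, r=8)
print(counts)
```

With these illustrative numbers, one 4096 × 4096 projection has 16,777,216 weights, while the rank-8 adapter trains only 65,536, a 256-fold reduction for that layer.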

In addition, at inference time, there’s a prompt that instructs Baize not to engage in conversations that are unethical or sensitive. This reduces the need for human intervention in moderation.
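A prompt of this kind could be assembled along the following lines. This is a hedged sketch: the guard text, the `[Human]`/`[AI]` role markers, and the function name are assumptions made for illustration, not the exact prompt that Baize ships with.

```python
# Hypothetical guard text and role markers, for illustration only.
GUARD = (
    "The following is a conversation between a human and an AI assistant. "
    "The AI assistant declines to engage with unethical or sensitive topics."
)


def build_inference_prompt(history, user_message, guard=GUARD):
    """Build the full prompt from earlier turns and the new user message.

    history: list of (human_text, ai_text) pairs from earlier turns.
    """
    lines = [guard]
    for human_text, ai_text in history:
        lines.append(f"[Human] {human_text}")
        lines.append(f"[AI] {ai_text}")
    lines.append(f"[Human] {user_message}")
    lines.append("[AI]")  # the model continues generating from here
    return "\n".join(lines)


prompt = build_inference_prompt([("Hi", "Hello! How can I help?")], "What is LoRA?")
print(prompt)
```

Since the guard is plain text at the top of the prompt, it only constrains the model as long as the prompt itself is left unchanged.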

The functional app fetches the LLaMA model and LoRA weights from the HuggingFace Hub.

Next, let’s go over some of the advantages and limitations of Baize.

Advantages

Let’s start by stating some advantages of Baize:

- High availability: You can try out Baize-7B on HuggingFace Spaces or run it locally. Baize isn’t restricted by the number of API calls and alleviates concerns about availability in times of high demand.

- Built-in moderation support: The prompt at inference time that stops the model from engaging in conversations on sensitive and unethical topics is advantageous, as it minimizes the effort needed to moderate conversations.

- Chat corpora generation: As mentioned, Baize can help build large corpora of multi-turn conversations. This can be helpful in training chat models at scale.

- Accessibility in low-resource settings: As mentioned in [1], we can run Baize on a single GPU machine, which makes it accessible in low-resource settings that have limited access to computation resources.

- Domain-specific applications: By carefully sampling the seed from a specific domain, we can have chatbots for domain-specific applications such as healthcare, agriculture, finance, and more.

- Reproducibility and customization: The code is publicly available, and the data collection and training pipeline is reproducible. If you want to collect data from various specific sources to build a custom corpus, you can modify the <code>collection.py</code> script in the project’s codebase.

Limitations

Like all LLM-powered chat apps, Baize has the following limitations:

- Inaccurate information: Just as ChatGPT’s responses are sometimes prone to inaccuracies resulting from outdated training data and contextual nuances, Baize’s responses might also be technically inaccurate at times.

- Difficulty with up-to-date information: The LLaMA model isn’t trained on recent data. This makes it challenging to handle tasks that require up-to-date information for accurate and helpful responses.

- Bias and toxicity: By altering the inference prompt, the model’s behavior of declining to engage in sensitive, unethical conversations can be manipulated.

That’s all for today! To explore more about Baize, be sure to try out the demo on HuggingFace Spaces or run it locally. ChatGPT and GPT-4 have inspired a range of applications in the NLP space.

With novel OpenAI wrappers hitting the developer space almost every day, it can be overwhelming to keep up with these rapid advancements and releases. At the same time, we’re excited to see what the future of generative AI holds.

[1] C Xu, D Guo, N Duan, J McAuley, Baize: An Open-Source Model with Parameter-Efficient Tuning on Self-Chat Data, arXiv, 2023.

[3] Demo on HuggingFace Spaces

Bala Priya C is a technical writer who enjoys creating long-form content. Her areas of interest include math, programming, and data science. She shares her learning with the developer community by authoring tutorials, how-to guides, and more.
