
By Chris Mauck, Jonas Mueller

This article demonstrates how data-centric AI tools can improve a fine-tuned Large Language Model (LLM; a.k.a. Foundation Model). These tools optimize the dataset itself rather than altering the model architecture/hyperparameters: running the exact same fine-tuning code on the improved dataset reduces test-set error by 37% on the politeness classification task studied here. We achieve similar accuracy gains via the same data-centric AI process across 3 state-of-the-art LLMs one can fine-tune via the OpenAI API: Davinci, Ada, and Curie. These are variants of the base LLM underpinning GPT-3/ChatGPT.

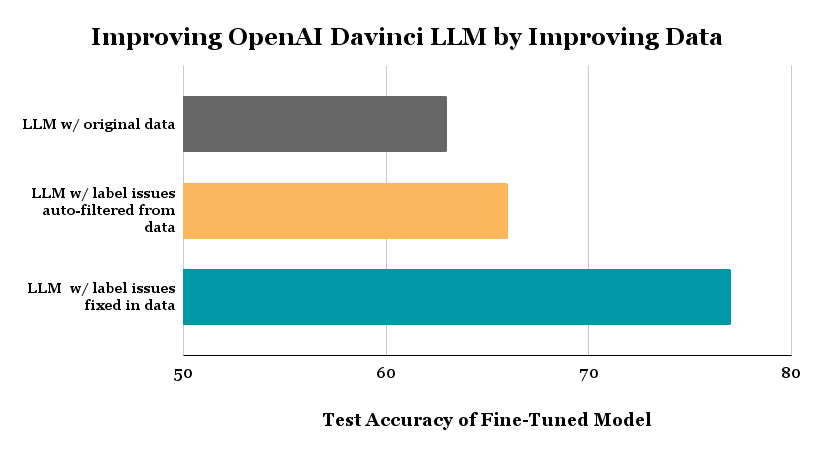

The above plot shows the test accuracy achieved for 3-class politeness classification of text by the same LLM fine-tuning code (fitting Davinci via the OpenAI API) run on 3 different datasets: (1) the original dataset labeled by human annotators, (2) an auto-filtered version of this dataset in which we removed examples automatically estimated to be mislabeled via Confident Learning, (3) a cleaned version of the original data in which we manually fixed the labels of examples estimated to be mislabeled (rather than filtering these examples).

Background

Labeled data powers AI/ML in the enterprise, but real-world datasets have been found to contain between 7-50% annotation errors. Imperfectly-labeled text data hampers the training (and evaluation) of ML models across tasks like intent recognition, entity recognition, and sequence generation. Although pretrained LLMs are equipped with a lot of world knowledge, their performance is adversely affected by noisy training data (as noted by OpenAI). Here we illustrate data-centric techniques to mitigate the effect of label noise without changing any code related to model architecture, hyperparameters, or training. These data quality improvement techniques should thus remain applicable even for future advanced LLMs like GPT-10.

LLMs acquire powerful generative and discriminative capabilities after being pre-trained on most of the text across the internet. However, ensuring the LLM produces reliable outputs for a particular business use-case often requires additional training on actual data from this domain labeled with the desired outputs. This domain-specific training is known as fine-tuning the LLM and can be done via APIs offered by OpenAI. Imperfections in the data annotation process inevitably introduce label errors into this domain-specific training data, posing a challenge for proper fine-tuning and evaluation of the LLM.

Here are quotes from OpenAI on their strategy for training state-of-the-art AI systems:

“Since training data shapes the capabilities of any learned model, data filtering is a powerful tool for limiting undesirable model capabilities.”

“We prioritized filtering out all of the bad data over leaving in all of the good data. This is because we can always fine-tune our model with more data later to teach it new things, but it’s much harder to make the model forget something that it has already learned.”

Clearly dataset quality is a vital consideration. Some organizations like OpenAI manually handle issues in their data to produce the very best models, but this is tons of work! Data-centric AI is an emerging science of algorithms to detect data issues, so you can systematically improve your dataset more easily with automation.

Our LLM in these experiments is the Davinci model from OpenAI, which is their most capable GPT-3 model, upon which ChatGPT is based.

Here we consider a 3-class variant of the Stanford Politeness Dataset, which has text phrases labeled as impolite, neutral, or polite. Because they were annotated by human raters, some of these labels are naturally low-quality.

This article walks through the following steps:

- Use the original data to fine-tune different state-of-the-art LLMs via the OpenAI API: Davinci, Ada, and Curie.

- Establish the baseline accuracy of each fine-tuned model on a test set with high-quality labels (established via consensus and high agreement among many human annotators who rated each test example).

- Use Confident Learning algorithms to automatically identify hundreds of mislabeled examples.

- Remove the data with automatically-flagged label issues from the dataset, and then fine-tune the exact same LLMs on the auto-filtered dataset. This simple step reduces the error in Davinci model predictions by 8%!

- Introduce a no-code solution to efficiently fix the label errors in the dataset, and then fine-tune the exact same LLM on the fixed dataset. This reduces the error in Davinci model predictions by 37%!

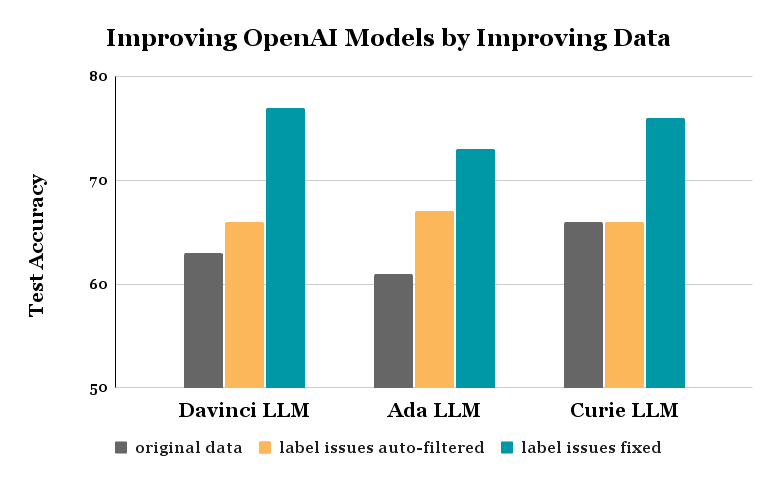

Similar gains are achieved via these same processes for the Ada and Curie models; in all cases, nothing was changed about the model or the fine-tuning code!

Here’s a notebook you can run to reproduce the results demonstrated in this article and understand the code that implements each step.

You can download the train and test sets here: train, test

Our training dataset has 1916 examples, each labeled by a single human annotator, so some of these labels may be unreliable. The test dataset has 480 examples, each labeled by 5 annotators, and we use their consensus label as a high-quality approximation of the true politeness (measuring test accuracy against these consensus labels). To ensure a fair comparison, this test dataset remains fixed throughout our experiments (all label cleaning / dataset modification is done only in the training set). We reformat these CSV files into the jsonl file type required by OpenAI’s fine-tuning API.
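The code later in this article calls a `format_data` helper whose definition is not shown. Here is a minimal sketch of what such a helper might look like. It assumes the training DataFrame has `prompt` (text) and `completion` (label) columns, as the later code suggests, and that the target is the legacy prompt/completion JSONL format with one JSON object per line. The `_prepared.jsonl` files referenced below were presumably produced from these files by OpenAI's legacy `openai tools fine_tunes.prepare_data` command, which this sketch does not cover.

```python
import json


def format_data(df, path):
    """Hypothetical helper: write prompt/completion pairs to a JSONL file.

    `df` is anything indexable by column name (a pandas DataFrame or a dict
    of lists) with "prompt" and "completion" columns. This is an assumed
    reconstruction, not the article's own implementation.
    """
    with open(path, "w", encoding="utf-8") as f:
        for prompt, completion in zip(df["prompt"], df["completion"]):
            # One JSON object per line, as the legacy fine-tuning API expects.
            record = {"prompt": str(prompt), "completion": str(completion)}
            f.write(json.dumps(record) + "\n")
```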

Here’s what our code looks like to fine-tune the Davinci LLM for 3-class classification and evaluate its test accuracy:

```python
!openai api fine_tunes.create -t "train_prepared.jsonl" -v "test_prepared.jsonl" --compute_classification_metrics --classification_n_classes 3 -m davinci --suffix "baseline"

>>> Created fine-tune: ft-9800F2gcVNzyMdTLKcMqAtJ5
```

Once the job completes, we query the fine_tunes.results endpoint to see the test accuracy achieved when fine-tuning this LLM on the original training dataset.

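```python
import pandas as pd

# Download the fine-tuning results (metrics per training step) as CSV.
!openai api fine_tunes.results -i ft-9800F2gcVNzyMdTLKcMqAtJ5 > baseline.csv

# The last row holds the final classification accuracy on the test set.
df = pd.read_csv('baseline.csv')
baseline_acc = df.iloc[-1]['classification/accuracy']
print(f"Fine-tuning Accuracy: {baseline_acc}")

>>> Fine-tuning Accuracy: 0.6312500238418579
```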

Our baseline Davinci LLM achieves a test accuracy of 63% when fine-tuned on the raw training data with possibly noisy labels. Even a state-of-the-art LLM like Davinci produces lackluster results on this classification task. Is that because the data labels are noisy?

Confident Learning is a recently developed suite of algorithms to estimate which data are mislabeled in a classification dataset. These algorithms require out-of-sample predicted class probabilities for all of our training examples, and apply a novel form of calibration to determine when to trust the model over the given label in the data.
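To make the idea of per-class calibration concrete, here is a simplified NumPy sketch of the thresholding step from Confident Learning. Each class gets a threshold equal to the average predicted probability of that class among the examples given that label. An example is flagged when the class it is confidently predicted to belong to differs from its given label (the off-diagonal entries of the "confident joint"). This is an illustration only, not cleanlab's implementation, which additionally calibrates the counts and offers several filtering and ranking options. The function name is hypothetical, and the sketch assumes every class appears at least once among the given labels.

```python
import numpy as np


def flag_with_class_thresholds(labels, pred_probs):
    """Simplified Confident Learning sketch (not cleanlab's implementation).

    labels:     (n,) integer array of given labels in {0, ..., K-1}
    pred_probs: (n, K) out-of-sample predicted class probabilities
    Returns a boolean mask of examples whose given label disagrees with
    the class the model confidently predicts.
    """
    labels = np.asarray(labels)
    pred_probs = np.asarray(pred_probs, dtype=float)
    n_classes = pred_probs.shape[1]
    # Per-class threshold: average self-confidence of examples given that label.
    thresholds = np.array(
        [pred_probs[labels == k, k].mean() for k in range(n_classes)]
    )
    # A class is a confident candidate if its probability reaches its threshold.
    above = pred_probs >= thresholds
    # Among confident candidates, pick the most probable class (others -> -inf).
    masked = np.where(above, pred_probs, -np.inf)
    suggested = masked.argmax(axis=1)
    has_candidate = above.any(axis=1)
    # Flag examples with a confident candidate that differs from the given label.
    return has_candidate & (suggested != labels)
```

For example, with labels `[0, 0, 1, 1, 2]`, an example labeled 1 whose predicted probabilities are `[0.9, 0.05, 0.05]` clears only the class-0 threshold, so it is flagged.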

To obtain these predicted probabilities we:

- Use the OpenAI API to compute embeddings from the Davinci model for all of our training examples. You can download the embeddings here.

- Fit a logistic regression model on the embeddings and labels in the original data. We use 10-fold cross-validation, which lets us produce out-of-sample predicted class probabilities for every example in the training dataset.

```python
import numpy as np
from openai.embeddings_utils import get_embedding  # legacy openai<1.0 helper
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict

# `train` is the training DataFrame with "prompt" (text) and "completion" (label) columns.

# Get embeddings from OpenAI.
embedding_model = "text-similarity-davinci-001"
train["embedding"] = train.prompt.apply(lambda x: get_embedding(x, engine=embedding_model))
embeddings = np.vstack(train["embedding"].values)  # (n_examples, embedding_dim) matrix

# Get out-of-sample predicted class probabilities via cross-validation.
model = LogisticRegression()
labels = train["completion"].values
pred_probs = cross_val_predict(estimator=model, X=embeddings, y=labels, cv=10, method="predict_proba")
```
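`cross_val_predict` hides the detail that makes these probabilities usable by Confident Learning: each example's probabilities come from a model trained only on the other folds. The sketch below spells out that loop in plain NumPy. To stay dependency-free it swaps in a deliberately simple stand-in model (a softmax over negative squared distances to per-class centroids) instead of logistic regression. The function name and the stand-in model are illustrative assumptions, not part of the original code, and the sketch assumes every class appears in each set of training folds.

```python
import numpy as np


def out_of_fold_probs(X, y, n_classes, n_folds=10, seed=0):
    """Sketch of out-of-sample predicted probabilities via manual k-fold.

    Stand-in model (hypothetical, NOT logistic regression): softmax over
    negative squared distances to per-class centroids of the training folds.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    pred_probs = np.zeros((len(y), n_classes))
    for held_out in folds:
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[held_out] = False
        # "Fit": one centroid per class, using only the other folds.
        centroids = np.stack(
            [X[train_mask & (y == k)].mean(axis=0) for k in range(n_classes)]
        )
        # "Predict" on the held-out fold only, so no example scores itself.
        d2 = ((X[held_out][:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        logits = -d2
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        pred_probs[held_out] = probs / probs.sum(axis=1, keepdims=True)
    return pred_probs
```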

The cleanlab package provides an open-source Python implementation of Confident Learning. With one line of code, we can run Confident Learning on the model's predicted probabilities to estimate which examples in our training dataset have label issues.

```python
from cleanlab.filter import find_label_issues

# Get indices of examples estimated to have label issues
# (labels must be integers 0..K-1 matching the columns of pred_probs):
issue_idx = find_label_issues(labels, pred_probs,
                              return_indices_ranked_by='self_confidence')  # sort indices by likelihood of label error
```
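The `return_indices_ranked_by='self_confidence'` option orders the flagged examples by their self-confidence: the out-of-sample predicted probability of the example's given label, lowest (most suspicious) first. Here is a minimal sketch of that ordering step. It assumes a boolean mask of flagged examples is already available, and the function name is hypothetical.

```python
import numpy as np


def rank_by_self_confidence(issue_mask, labels, pred_probs):
    """Order flagged examples from most to least likely mislabeled (sketch).

    Self-confidence = predicted probability of the example's given label;
    a lower value means the model believes that label less.
    """
    labels = np.asarray(labels)
    pred_probs = np.asarray(pred_probs, dtype=float)
    self_conf = pred_probs[np.arange(len(labels)), labels]
    flagged = np.flatnonzero(issue_mask)
    # Ascending self-confidence: the least trusted labels come first.
    return flagged[np.argsort(self_conf[flagged], kind="stable")]
```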

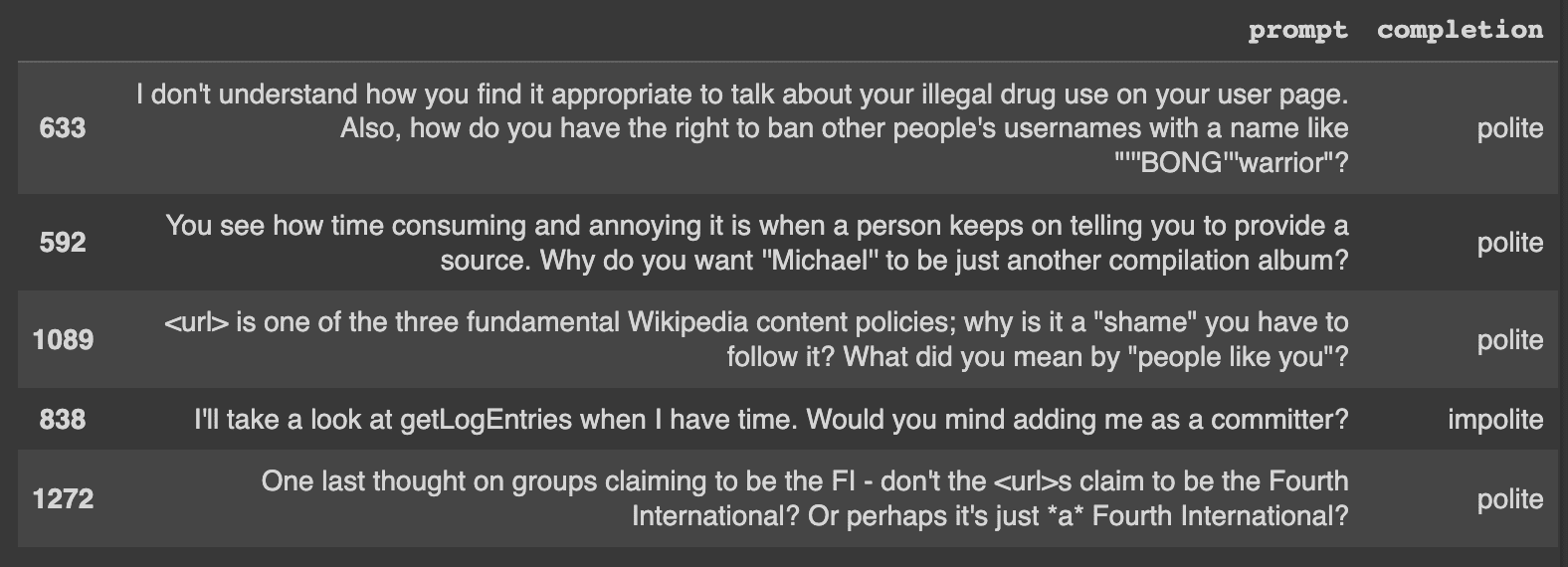

Let’s take a look at a few of the label issues automatically identified in our dataset. Here’s one example that is clearly mislabeled:

- Phrase: I am going to check out getLogEntries when I have time. Would you mind adding me as a committer?

- Label: impolite

Labeling errors like this could be why we are seeing poor model results.

Caption: A few of the top errors that were automatically identified.

Note: find_label_issues is able to determine which of the given labels are potentially incorrect given only the out-of-sample pred_probs.

Now that we have the indices of potentially mislabeled examples (identified via automated techniques), let’s remove these 471 examples from our training dataset. Fine-tuning the exact same Davinci LLM on the filtered dataset achieves a test accuracy of 66% (on the same test data where our original Davinci LLM achieved 63% accuracy). We reduced the model's error rate by 8% using less, but higher-quality, training data!

```python
# Remove data flagged with potential label error.
train_cl = train.drop(issue_idx).reset_index(drop=True)
format_data(train_cl, "train_cl.jsonl")  # format_data: jsonl-writing helper (definition not shown)

# Train a more robust classifier with less erroneous data.
!openai api fine_tunes.create -t "train_cl_prepared.jsonl" -v "test_prepared.jsonl" --compute_classification_metrics --classification_n_classes 3 -m davinci --suffix "dropped"

# Evaluate model on test data.
!openai api fine_tunes.results -i ft-InhTRQGu11gIDlVJUt0LYbEx > autofiltered.csv
df = pd.read_csv('autofiltered.csv')
dropped_acc = df.iloc[-1]['classification/accuracy']

>>> 0.6604166626930237
```
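The 8% figure is a relative reduction in error rate (1 - accuracy), not a difference in accuracy points. Test accuracy rises by only about 3 points, but that removes roughly 8% of the baseline's errors. A small helper (its name is chosen for illustration) makes the arithmetic explicit, using the baseline and auto-filtered accuracies reported above:

```python
def relative_error_reduction(old_acc, new_acc):
    """Fraction of the old error rate (1 - accuracy) removed by the new model."""
    old_err = 1.0 - old_acc
    new_err = 1.0 - new_acc
    return (old_err - new_err) / old_err


# Baseline vs. auto-filtered Davinci test accuracies from the runs above.
print(round(relative_error_reduction(0.6312500238418579, 0.6604166626930237), 3))  # prints 0.079
```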

Instead of handling the auto-detected label issues automatically via filtering, a smarter (yet more complex) way to improve our dataset is to correct the label issues by hand. This simultaneously removes a noisy data point and adds an accurate one, but making such corrections manually is cumbersome. We made these corrections using Cleanlab Studio, an enterprise data correction interface.
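The two strategies differ in what happens to a flagged row. Filtering drops it, while correction keeps it with a reviewed label, so the training set keeps its full size. The sketch below contrasts the two on plain Python lists. Both helpers and the index-to-label `corrections` mapping are hypothetical, because the article does not describe Cleanlab Studio's export format beyond the resulting `train_corrected.csv`.

```python
def filter_examples(texts, labels, issue_idx):
    """Auto-filtering: drop every flagged example (fewer, cleaner rows)."""
    drop = set(issue_idx)
    keep = [i for i in range(len(labels)) if i not in drop]
    return [texts[i] for i in keep], [labels[i] for i in keep]


def correct_examples(texts, labels, corrections):
    """Correction: keep every row, replacing flagged labels with reviewed ones.

    `corrections` maps row index -> corrected label (e.g. chosen by a human reviewer).
    """
    fixed = list(labels)  # copy so the original labels are left untouched
    for i, new_label in corrections.items():
        fixed[i] = new_label
    return list(texts), fixed
```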

After replacing the bad labels we spotted with more suitable ones, we fine-tune the exact same Davinci LLM on the manually-corrected dataset. The resulting model achieves 77% accuracy (on the same test dataset as before), a 37% reduction in error compared with our original version of this model.

```python
# Load in and format data with the manually fixed labels.
train_studio = pd.read_csv('train_corrected.csv')
format_data(train_studio, "train_corrected.jsonl")

# Train a more robust classifier with the fixed data.
!openai api fine_tunes.create -t "train_corrected_prepared.jsonl" -v "test_prepared.jsonl" --compute_classification_metrics --classification_n_classes 3 -m davinci --suffix "corrected"

# Evaluate model on test data.
!openai api fine_tunes.results -i ft-MQbaduYd8UGD2EWBmfpoQpkQ > corrected.csv
df = pd.read_csv('corrected.csv')
corrected_acc = df.iloc[-1]['classification/accuracy']

>>> 0.7729166746139526
```

Note: throughout this entire process, we never changed any code related to model architecture/hyperparameters, training, or data preprocessing! All of the improvement comes strictly from increasing the quality of our training data, which leaves room for additional optimizations on the modeling side.

We repeated this same experiment with two other recent LLMs that OpenAI offers for fine-tuning: Ada and Curie. The resulting improvements look similar to those achieved for the Davinci model.

Data-centric AI is a powerful paradigm for handling noisy data via AI/automated techniques rather than tedious manual effort. There are now tools to help you efficiently find and fix data and label issues to improve any ML model (not just LLMs) for most types of data (not just text, but also images, audio, tabular data, etc.). Such tools can use any ML model to diagnose/fix issues in the data, and the improved data can then be used to train any other ML model. These tools will remain applicable with future advances in ML models like GPT-10, and will only become better at identifying issues when used with more accurate models!

Practice data-centric AI to systematically engineer better data via AI/automation. This frees you to capitalize on your unique domain knowledge rather than fixing general data issues like label errors.

Chris Mauck is a Data Scientist at Cleanlab.
