[ad_1]

TL;DR: Textual content Immediate -> LLM -> Intermediate Illustration (resembling a picture structure) -> Secure Diffusion -> Picture.

Latest developments in text-to-image era with diffusion fashions have yielded exceptional outcomes synthesizing extremely reasonable and various pictures. Nevertheless, regardless of their spectacular capabilities, diffusion fashions, resembling Stable Diffusion, typically wrestle to precisely comply with the prompts when spatial or widespread sense reasoning is required.

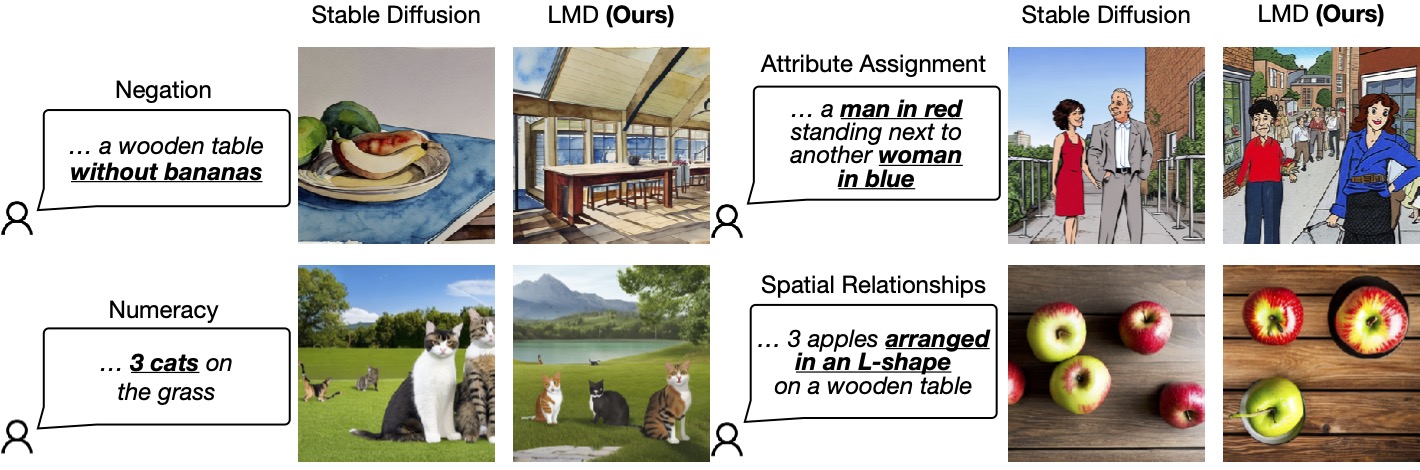

The next determine lists 4 eventualities by which Secure Diffusion falls quick in producing pictures that precisely correspond to the given prompts, specifically negation, numeracy, and attribute project, spatial relationships. In distinction, our methodology, LLM-grounded Diffusion (LMD), delivers a lot better immediate understanding in text-to-image era in these eventualities.

Determine 1: LLM-grounded Diffusion enhances the immediate understanding means of text-to-image diffusion fashions.

One attainable answer to handle this concern is after all to assemble an unlimited multi-modal dataset comprising intricate captions and prepare a big diffusion mannequin with a big language encoder. This strategy comes with important prices: It’s time-consuming and costly to coach each massive language fashions (LLMs) and diffusion fashions.

Our Resolution

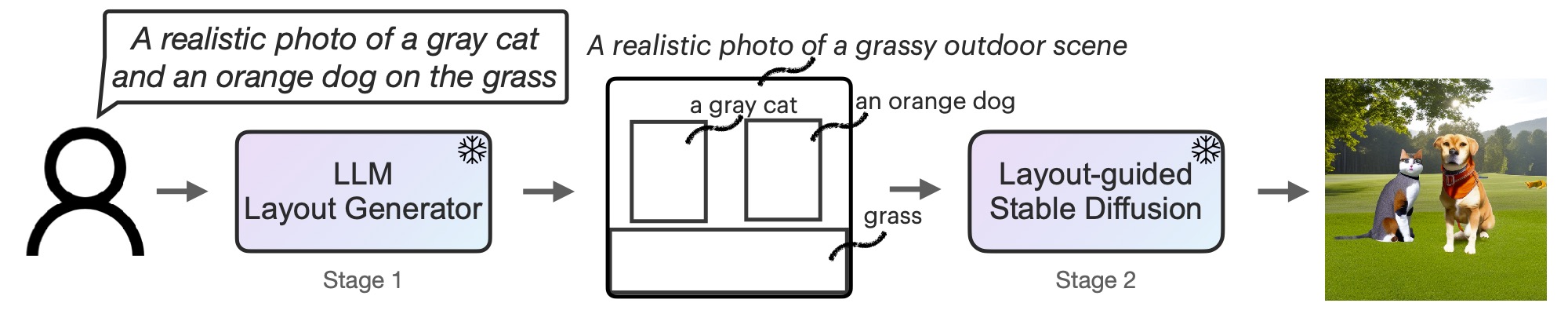

To effectively clear up this drawback with minimal price (i.e., no coaching prices), we as an alternative equip diffusion fashions with enhanced spatial and customary sense reasoning by utilizing off-the-shelf frozen LLMs in a novel two-stage era course of.

First, we adapt an LLM to be a text-guided structure generator by in-context studying. When supplied with a picture immediate, an LLM outputs a scene structure within the type of bounding containers together with corresponding particular person descriptions. Second, we steer a diffusion mannequin with a novel controller to generate pictures conditioned on the structure. Each levels make the most of frozen pretrained fashions with none LLM or diffusion mannequin parameter optimization. We invite readers to read the paper on arXiv for added particulars.

Determine 2: LMD is a text-to-image generative mannequin with a novel two-stage era course of: a text-to-layout generator with an LLM + in-context studying and a novel layout-guided steady diffusion. Each levels are training-free.

LMD’s Extra Capabilities

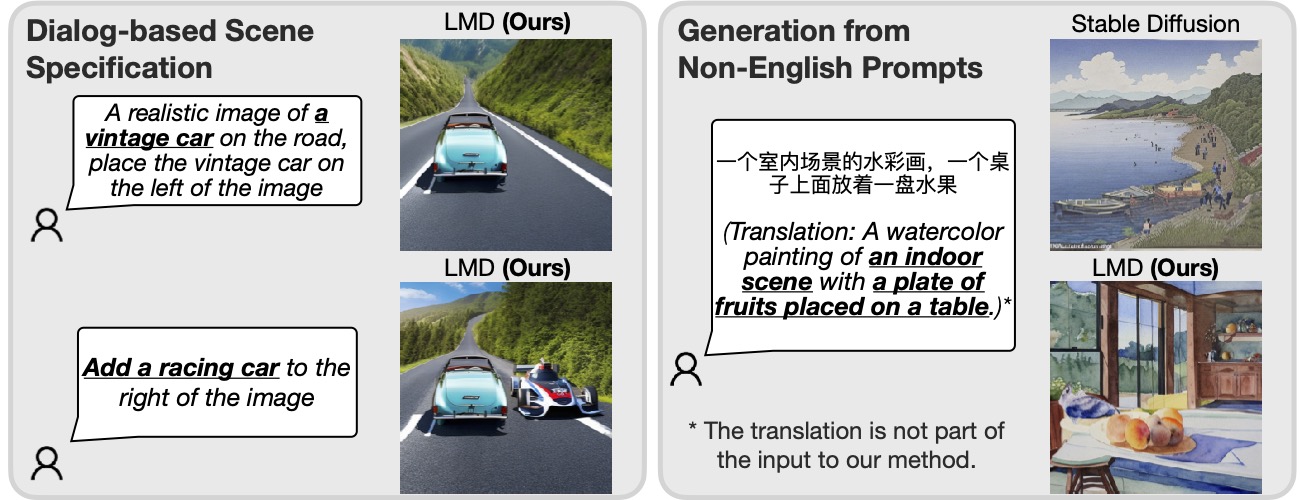

Moreover, LMD naturally permits dialog-based multi-round scene specification, enabling further clarifications and subsequent modifications for every immediate. Moreover, LMD is ready to deal with prompts in a language that’s not well-supported by the underlying diffusion mannequin.

Determine 3: Incorporating an LLM for immediate understanding, our methodology is ready to carry out dialog-based scene specification and era from prompts in a language (Chinese language within the instance above) that the underlying diffusion mannequin doesn’t assist.

Given an LLM that helps multi-round dialog (e.g., GPT-3.5 or GPT-4), LMD permits the consumer to supply further info or clarifications to the LLM by querying the LLM after the primary structure era within the dialog and generate pictures with the up to date structure within the subsequent response from the LLM. For instance, a consumer might request so as to add an object to the scene or change the present objects in location or descriptions (the left half of Determine 3).

Moreover, by giving an instance of a non-English immediate with a structure and background description in English throughout in-context studying, LMD accepts inputs of non-English prompts and can generate layouts, with descriptions of containers and the background in English for subsequent layout-to-image era. As proven in the correct half of Determine 3, this enables era from prompts in a language that the underlying diffusion fashions don’t assist.

Visualizations

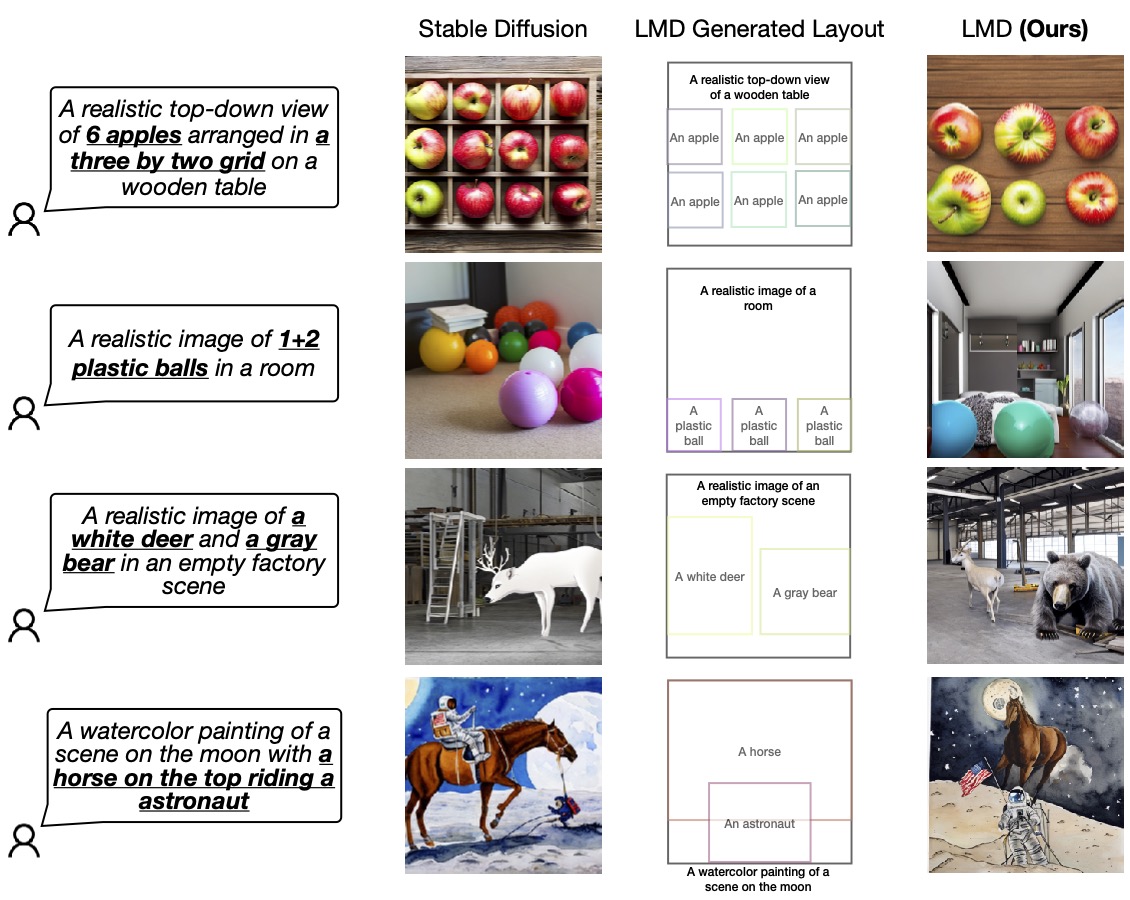

We validate the prevalence of our design by evaluating it with the bottom diffusion mannequin (SD 2.1) that LMD makes use of underneath the hood. We invite readers to our work for extra analysis and comparisons.

Determine 4: LMD outperforms the bottom diffusion mannequin in precisely producing pictures based on prompts that necessitate each language and spatial reasoning. LMD additionally allows counterfactual text-to-image era that the bottom diffusion mannequin isn’t capable of generate (the final row).

For extra particulars about LLM-grounded Diffusion (LMD), visit our website and read the paper on arXiv.

BibTex

If LLM-grounded Diffusion evokes your work, please cite it with:

@article{lian2023llmgrounded,

title={LLM-grounded Diffusion: Enhancing Immediate Understanding of Textual content-to-Picture Diffusion Fashions with Giant Language Fashions},

creator={Lian, Lengthy and Li, Boyi and Yala, Adam and Darrell, Trevor},

journal={arXiv preprint arXiv:2305.13655},

yr={2023}

}

[ad_2]

Source link