
Natural language processing (NLP) continues to grow in popularity, and necessity, as artificial intelligence and deep learning programs grow and thrive in the coming years. Natural language processing with PyTorch is one of the best bets for implementing these programs.

In this guide, we will address some of the obvious questions that may arise when starting to dive into natural language processing, but we will also engage with deeper questions and give you the right steps to get started working on your own NLP programs.

First and foremost, NLP is an applied science. It is a branch of engineering that blends artificial intelligence, computational linguistics, and computer science in order to “understand” natural language, i.e., spoken and written language.

Second, NLP does not mean Machine Learning or Deep Learning. Instead, these artificial intelligence programs need to be taught how to process natural language and then rely on other systems to make use of what is being input into the programs.

While it is simpler to refer to some AI programs as NLP programs, that is not strictly the case. Instead, they are able to make sense of language after being properly trained, but there is an entirely different system and process involved in helping these programs understand natural language.

This is why natural language processing with PyTorch comes in handy. PyTorch is built on Python and has the benefit of having pre-written code, called classes, many of which are well suited to NLP. This makes the entire process quicker and easier for everyone involved.

With these PyTorch classes at the ready and the various other Python libraries at PyTorch’s disposal, it is hard to find a better-suited machine learning framework for natural language processing.

To get started using NLP with PyTorch, you will need to be familiar with Python for coding.


Once you are familiar with Python, you will begin to see plenty of other frameworks that can be used for various deep learning projects. However, natural language processing with PyTorch is optimal because of PyTorch Tensors.

Simply put, tensors allow you to perform computations on a GPU, which can significantly increase the speed and performance of your program for NLP with PyTorch. This means you can train your deep learning program more quickly and put NLP to work on whatever outcome you have in mind.
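As a minimal sketch of what this looks like in practice, the snippet below creates two tensors and multiplies them on a GPU when one is available, falling back to the CPU otherwise. The matrix sizes and random values are our own assumptions, chosen only for illustration.

```python
import torch

# Use a GPU if PyTorch can see one, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Two small example matrices filled with random values (illustrative only).
a = torch.randn(3, 4, device=device)
b = torch.randn(4, 2, device=device)

# The matrix product runs on whichever device holds the tensors.
c = a @ b
print(c.shape, c.device)
```

The same code runs unchanged on either device, which is a large part of what makes tensors convenient for training.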

As mentioned above, there are different PyTorch classes that work well for NLP and related programs. We will break down six of these classes and their use cases to help you get started making the right selection.

1. torch.nn.RNN

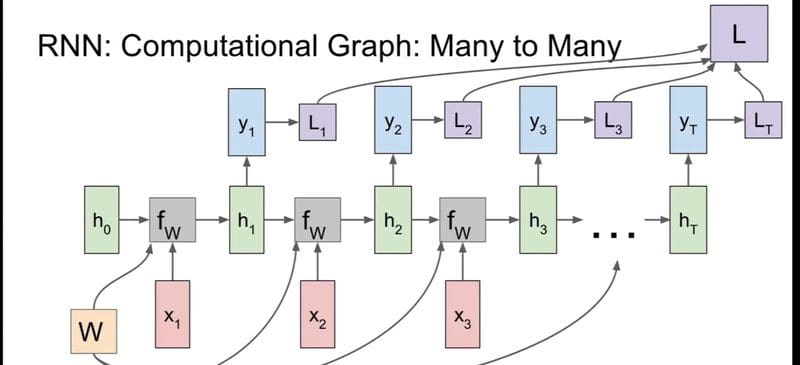

The first three classes we are going to look at are multi-layer classes: each one processes a whole sequence, can stack several recurrent layers, and can optionally represent a bi-directional recurrent neural network. To simplify what this means, a bi-directional network reads the sequence both forward and backward, so the representation at each position draws on earlier (past) as well as later (future) parts of the input. This allows these programs to learn and process natural language inputs and even pick up on deeper language quirks.

torch.nn.RNN many-to-many diagram

The RNN in torch.nn.RNN stands for Recurrent Neural Network, which tells you what to expect from the class. This is the simplest recurrent neural network class in PyTorch to use to get started with natural language processing.
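Here is a small sketch of a two-layer, bi-directional torch.nn.RNN run on random input. The batch size, sequence length, and feature sizes are hypothetical values we picked for the example, not recommendations.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes, chosen only for illustration.
batch_size, seq_len, input_size, hidden_size = 2, 5, 8, 16

rnn = nn.RNN(
    input_size=input_size,
    hidden_size=hidden_size,
    num_layers=2,          # stack two recurrent layers
    bidirectional=True,    # read the sequence forward and backward
    batch_first=True,      # input is (batch, seq_len, features)
)

x = torch.randn(batch_size, seq_len, input_size)
output, h_n = rnn(x)

# One vector per time step; forward and backward halves are concatenated.
print(output.shape)  # torch.Size([2, 5, 32])
# Final hidden state for every layer and direction (2 layers x 2 directions).
print(h_n.shape)     # torch.Size([4, 2, 16])
```

Note that the last dimension of the output is twice the hidden size because the forward and backward passes are concatenated.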

2. torch.nn.LSTM

torch.nn.LSTM is another multi-layer PyTorch class. It has all the same benefits as torch.nn.RNN but with Long Short-Term Memory. Essentially, an LSTM carries a separate cell state, controlled by gates, alongside its hidden state, which helps deep learning programs using this class go beyond connections between neighboring data points and keep track of relationships across entire data sequences.

For natural language processing with PyTorch, torch.nn.LSTM is one of the more common classes used because it can begin to capture connections not only in written or typed data input but also in speech and other vocalizations.

Being able to process more complex data sequences makes it a critical component for programs that aim to use the full potential of natural language processing.
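A minimal sketch of torch.nn.LSTM on random input follows. The sizes are assumptions made for the example; the point is that the class returns a cell state in addition to the hidden state.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes: 8 input features per step, 16 hidden units.
lstm = nn.LSTM(input_size=8, hidden_size=16, batch_first=True)

# A batch of 2 sequences, each 5 steps long.
x = torch.randn(2, 5, 8)

# The LSTM returns the per-step outputs plus a (hidden state, cell state) pair.
output, (h_n, c_n) = lstm(x)

print(output.shape)  # torch.Size([2, 5, 16])
print(h_n.shape)     # torch.Size([1, 2, 16])
print(c_n.shape)     # torch.Size([1, 2, 16])
```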

3. torch.nn.GRU

torch.nn.GRU builds on the RNN and LSTM classes by using Gated Recurrent Units. In short, this means torch.nn.GRU programs have a gated output. They function similarly to torch.nn.LSTM, with gates that let the network simply forget information from earlier in a sequence that does not match or align with the rest of what it has learned.

torch.nn.GRU programs are another great way to get started in NLP with PyTorch because they are simpler but can produce results similar to torch.nn.LSTM in less time. However, they can be less accurate without close monitoring if the program discards information that might be important to its learning.
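One way to see why a GRU is the simpler of the two is to count trainable parameters. The sketch below builds a torch.nn.LSTM and a torch.nn.GRU with the same (hypothetical) sizes; `count_params` is a small helper of our own, not part of PyTorch.

```python
import torch
import torch.nn as nn

def count_params(module):
    """Total number of trainable values in a module (our own helper)."""
    return sum(p.numel() for p in module.parameters())

# Same hypothetical sizes for both layers so the comparison is fair.
lstm = nn.LSTM(input_size=8, hidden_size=16)
gru = nn.GRU(input_size=8, hidden_size=16)

lstm_params = count_params(lstm)
gru_params = count_params(gru)
print(lstm_params, gru_params)  # 1664 1248

# Both accept the same input and produce outputs of the same shape.
x = torch.randn(5, 2, 8)  # (seq_len, batch, features)
gru_out, gru_h = gru(x)
print(gru_out.shape)  # torch.Size([5, 2, 16])
```

The GRU ends up with three quarters of the LSTM's parameters here because it uses three sets of gate weights where the LSTM uses four.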

4. torch.nn.RNNCell

The next three classes are simplified versions of their predecessors, so they all function similarly with different benefits. These classes are all cell-level classes and basically run one time step per call rather than processing an entire sequence at once, so you write the loop over the sequence yourself.

Output results of NLP with PyTorch using words assigned to corresponding images

The going is slower this way, but stepping through the sequence yourself gives you finer control over each step, which some models need. An RNNCell program can still learn from past states, and from future states as well if you add a second pass over the sequence in reverse.
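To make the cell-level idea concrete, here is a rough sketch of driving torch.nn.RNNCell by hand, one time step at a time. The sizes and the zero initial hidden state are assumptions for the example.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes for illustration.
seq_len, batch_size, input_size, hidden_size = 5, 2, 8, 16

cell = nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

x = torch.randn(seq_len, batch_size, input_size)
h = torch.zeros(batch_size, hidden_size)  # initial hidden state

outputs = []
for x_t in x:          # iterate over time steps
    h = cell(x_t, h)   # one step: new hidden state from input and old state
    outputs.append(h)

outputs = torch.stack(outputs)
print(outputs.shape)  # torch.Size([5, 2, 16])
```

Because the loop is yours, you can inspect or modify the hidden state between steps, which the multi-layer classes do not expose.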

5. torch.nn.LSTMCell

torch.nn.LSTMCell functions similarly to the regular torch.nn.LSTM class, with the ability to process sequences, but one time step at a time rather than all at once. As with RNNCell programs, this means the going is slower and less intensive, though the added control over each step can help accuracy in some designs.

Each of these cell-level classes has small differences from its predecessor, but diving too far into the detail of those differences would be well beyond the scope of this guide.
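To show how closely the two are related, the sketch below copies the weights of a single-layer torch.nn.LSTM into a torch.nn.LSTMCell and checks that stepping the cell through a sequence gives the same outputs. The sizes are hypothetical, and this is only a demonstration of the relationship, not a recommended training setup.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
seq_len, batch_size, input_size, hidden_size = 5, 2, 8, 16

lstm = nn.LSTM(input_size, hidden_size)   # expects (seq_len, batch, features)
cell = nn.LSTMCell(input_size, hidden_size)

# Copy the layer-0 parameters of the full LSTM into the cell.
with torch.no_grad():
    cell.weight_ih.copy_(lstm.weight_ih_l0)
    cell.weight_hh.copy_(lstm.weight_hh_l0)
    cell.bias_ih.copy_(lstm.bias_ih_l0)
    cell.bias_hh.copy_(lstm.bias_hh_l0)

x = torch.randn(seq_len, batch_size, input_size)

# Whole sequence in one call.
full_out, _ = lstm(x)

# Same sequence, one step per call, starting from zero states.
h = torch.zeros(batch_size, hidden_size)
c = torch.zeros(batch_size, hidden_size)
steps = []
for x_t in x:
    h, c = cell(x_t, (h, c))
    steps.append(h)
cell_out = torch.stack(steps)

same = torch.allclose(full_out, cell_out, atol=1e-5)
print(same)
```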

6. torch.nn.GRUCell

One of the most interesting classes used in natural language processing with PyTorch is torch.nn.GRUCell. It still maintains the functionality of having gated outputs, which means it can ignore or even forget outlier information while still learning from the rest of the sequence.

This is, arguably, one of the more popular PyTorch classes for those starting out because it has the most potential with the lowest number of requirements for optimal operation.

The main sacrifice here is the time and effort needed to make sure the program is trained properly.
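One situation where a cell-level class is handy is when each step’s input depends on the previous step’s output, as in simple text generation. The sketch below wires a torch.nn.GRUCell between an embedding layer and a linear layer and greedily picks four token ids. The vocabulary size, layer sizes, and start-token id are all assumptions, and the model is untrained, so the ids it produces are meaningless.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes for illustration.
vocab_size, embed_dim, hidden_size = 10, 8, 16

embed = nn.Embedding(vocab_size, embed_dim)
cell = nn.GRUCell(embed_dim, hidden_size)
to_vocab = nn.Linear(hidden_size, vocab_size)

token = torch.tensor([0])          # assumed start-token id, batch of one
h = torch.zeros(1, hidden_size)    # initial hidden state

generated = []
with torch.no_grad():
    for _ in range(4):
        h = cell(embed(token), h)             # one GRU step
        token = to_vocab(h).argmax(dim=-1)    # greedy choice of next token id
        generated.append(token.item())

print(generated)
```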

There is still much to be said about how to get started with natural language processing with PyTorch, but one more major factor to consider, once you have chosen a PyTorch class that is appropriate for your deep learning model, is deciding how you will implement NLP within your model.

Encoding words into your model is probably one of the most obvious and important steps in building a fully optimized and operational deep learning model with natural language processing. NLP with PyTorch requires some form of word encoding method.
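As a minimal sketch of word encoding, the snippet below maps each word of a made-up sentence to an integer id and looks those ids up in a torch.nn.Embedding table. The sentence and the embedding size of 5 are our own choices for the example.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# A made-up example sentence, split into words.
sentence = "the quick brown fox jumps over the lazy dog".split()

# Build a vocabulary and a word -> integer id lookup.
vocab = sorted(set(sentence))
word_to_ix = {word: i for i, word in enumerate(vocab)}

# One learnable 5-dimensional vector per vocabulary word.
embedding = nn.Embedding(num_embeddings=len(vocab), embedding_dim=5)

ids = torch.tensor([word_to_ix[word] for word in sentence])
vectors = embedding(ids)

print(len(vocab))      # 8
print(vectors.shape)   # torch.Size([9, 5])
```

Both occurrences of "the" map to the same id and therefore to the same vector, which is what lets a model share what it learns about a word across positions.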

There are many ways to have models process individual letters, but the point of creating NLP deep learning models is, presumably, to focus not on individual words and letters but on the semantics and linguistic meanings of those words and phrases. Here are three basic word embedding models for NLP with PyTorch:

- Simple word encoding: training the model to focus on each individual word in a sequence and letting it derive similarities and differences on its own. This is the simplest approach, but it can make it difficult for the model to accurately understand or predict semantics.

- N-Gram language modeling: the model is trained to learn words with respect to the words that precede them in the sequence. This means it can learn how words function in relationship to one another and in sentences as a whole.

- Continuous bag-of-words (CBOW): an expanded version of N-Gram language modeling, in which the deep learning model is trained on a set number of words before and a set number of words after each word in a sequence in order to deeply learn how words function with surrounding words and how they function in their sequence. This is one of the most popular methods among those using natural language processing with PyTorch.
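The CBOW idea can be sketched in a few lines. The example below builds (context, target) pairs with a window of two words on each side, averages the context embeddings, and trains a small classifier to predict the middle word. The `CBOW` class, the toy sentence, the window size, and the training settings are all our own assumptions for illustration, not a tuned implementation.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

text = "we are about to study the idea of a computational process".split()
vocab = sorted(set(text))
word_to_ix = {word: i for i, word in enumerate(vocab)}
WINDOW = 2  # words taken on each side of the target

# Build (context words, target word) pairs.
data = []
for i in range(WINDOW, len(text) - WINDOW):
    context = text[i - WINDOW:i] + text[i + 1:i + WINDOW + 1]
    data.append((context, text[i]))

class CBOW(nn.Module):
    """Hypothetical minimal CBOW model: average context vectors, then classify."""
    def __init__(self, vocab_size, embed_dim):
        super().__init__()
        self.embeddings = nn.Embedding(vocab_size, embed_dim)
        self.linear = nn.Linear(embed_dim, vocab_size)

    def forward(self, context_ids):
        # context_ids: (batch, 2 * WINDOW) -> mean vector: (batch, embed_dim)
        mean_vec = self.embeddings(context_ids).mean(dim=1)
        return self.linear(mean_vec)  # unnormalized scores over the vocabulary

contexts = torch.tensor([[word_to_ix[w] for w in ctx] for ctx, _ in data])
targets = torch.tensor([word_to_ix[t] for _, t in data])

model = CBOW(len(vocab), embed_dim=10)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

losses = []
for epoch in range(50):
    optimizer.zero_grad()
    loss = loss_fn(model(contexts), targets)
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```

After training, the rows of `model.embeddings.weight` are the learned word vectors; on a toy corpus this small they are not meaningful, but the same structure applies to real data.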

Once you have chosen your PyTorch class and a method of embedding words, you are ready to get started using natural language processing in your next deep learning project!

Using natural language processing with PyTorch to create an end result like Siri

Natural language processing is one of the hottest topics in deep learning and AI, with many industries trying to figure out how to use this type of deep learning model for internal and external use.

There is so much more to see and learn, but let us know if you think we missed anything. If you have any questions about how to start implementing NLP or PyTorch, then don’t hesitate to reach out!

What do you think? Are you ready to tackle natural language processing with PyTorch? Feel free to comment below with any questions you have.

Kevin Vu manages the Exxact Corp blog and works with many of its talented authors who write about different aspects of Deep Learning.

Original. Reposted with permission.
