Why Do We Need Encoding?

In machine learning, most algorithms require numeric inputs, especially in many popular Python frameworks. For instance, in scikit-learn, linear regression and neural networks require numerical variables. This means we need to transform categorical variables into numeric ones for these models to understand them. However, this step isn’t always necessary for models such as tree-based ones.

Today, I’m thrilled to introduce three fundamental encoding techniques that are essential for every budding data scientist! Plus, I’ve included a practical tip at the end to help you see these techniques in action. Unless stated otherwise, all code and images are created by the author.

Label Encoding / Ordinal Encoding

Both label encoding and ordinal encoding involve assigning integers to different classes. The distinction lies in whether the categorical variable inherently has an order. For example, responses like ‘strongly agree,’ ‘agree,’ ‘neutral,’ ‘disagree,’ and ‘strongly disagree’ are ordinal because they follow a specific sequence. When a variable doesn’t have such an order, we use label encoding.

Let’s delve into label encoding.

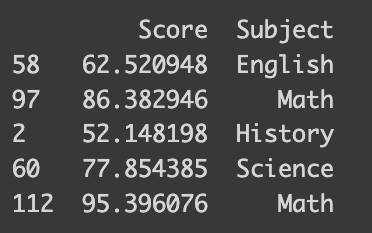

I’ve prepared a synthetic dataset with math test scores and students’ favorite subjects. This dataset is designed to reflect higher scores for students who prefer STEM subjects. The following code shows how it is synthesized.

import random

import numpy as np
import pandas as pd

math_score = [60, 70, 80, 90]  # Mean math score per favorite subject
favorite_subject = ["History", "English", "Science", "Math"]
std_deviation = 5  # Standard deviation of the scores
num_samples = 30  # Number of samples per subject

# Generate 30 samples per subject from a normal distribution
scores = []
subjects = []
for i in range(4):
    scores.extend(np.random.normal(math_score[i], std_deviation, num_samples))
    subjects.extend([favorite_subject[i]] * num_samples)

data = {'Score': scores, 'Subject': subjects}
df_math = pd.DataFrame(data)

# Print five randomly sampled rows; the same indices are reused below
sampled_index = random.sample(range(len(df_math)), 5)
sampled = df_math.iloc[sampled_index]
print(sampled)

You’ll be amazed at how simple it is to encode your data: it takes just a single line of code! You can pass a dictionary that maps each subject name to a number to the `replace` method of the pandas column, as follows.

# Simple way
df_math['Subject_num'] = df_math['Subject'].replace({'History': 0, 'Science': 1, 'English': 2, 'Math': 3})
print(df_math.iloc[sampled_index])

But what if you’re dealing with a vast array of classes, or perhaps you’re looking for a more straightforward approach? That’s where scikit-learn’s `LabelEncoder` class comes in handy. It automatically encodes your classes based on their sorted (alphabetical) order. Note that `LabelEncoder` is designed for target labels and expects a one-dimensional input. For the best experience, I recommend using version 1.4.0, which supports all the encoders we’re discussing.

# Scikit-learn
from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()
# LabelEncoder expects a 1D input, so pass the column as a Series
df_math["Subject_num_scikit"] = le.fit_transform(df_math['Subject'])
print(df_math.iloc[sampled_index])
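To make the alphabetical ordering concrete, here is a minimal sketch on a hand-made list (the `toy_subjects` name and values are assumptions for illustration, not part of the dataset above). It suggests how `LabelEncoder` sorts the classes before numbering them and how `inverse_transform` recovers the original labels.

```python
from sklearn.preprocessing import LabelEncoder

# Hypothetical toy labels, used only for illustration
toy_subjects = ["History", "English", "Science", "Math", "English"]

le_toy = LabelEncoder()
toy_codes = le_toy.fit_transform(toy_subjects)

# classes_ holds the sorted unique labels; a label's code is its position there
print(le_toy.classes_)
print(toy_codes)

# inverse_transform maps the integer codes back to the original strings
recovered = le_toy.inverse_transform(toy_codes)
print(recovered)
```

Because the codes come from the sorted order, they differ from the hand-written dictionary used in the simple approach, which is worth keeping in mind when comparing the two columns.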

However, there’s a catch. Consider this: our dataset doesn’t imply an ordinal relationship between favorite subjects. For instance, ‘History’ is encoded as 0, but that doesn’t mean it’s ‘inferior’ to ‘Math,’ which is encoded as 3. Similarly, the numerical gap between ‘English’ and ‘Science’ is smaller than that between ‘English’ and ‘History,’ but this doesn’t necessarily reflect their relative similarity.

This encoding approach also affects interpretability in some algorithms. For example, in linear regression, each coefficient indicates the expected change in the outcome variable for a one-unit change in a predictor. But how do we interpret a ‘unit change’ in a subject that has been numerically encoded? Let’s put this into perspective with a linear regression on our dataset.

from sklearn.linear_model import LinearRegression

model = LinearRegression()
model.fit(df_math[["Subject_num"]], df_math[["Score"]])
coefficients = model.coef_
print("Coefficients:", coefficients)

How do we interpret the coefficient 8.26 here? The naive reading is that when the label changes by 1 unit, the test score changes by about 8. However, that isn’t true from Science (encoded as 1) to English (encoded as 2), since I synthesized the data so that their mean scores are 80 and 70, respectively. So, we should not interpret the coefficient when there is no meaning in the way we label each class!
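As a rough sanity check on that number, the sketch below fits a straight line through the four group means used to synthesize the data, placed at their label codes from the manual mapping. It is noise-free, so it is only an approximation of the fit on the sampled data; the point is the contrast between the fitted slope and the actual step between two neighboring codes.

```python
import numpy as np

# Label codes from the manual mapping: History=0, Science=1, English=2, Math=3
codes = np.array([0, 1, 2, 3])
# Group means used when synthesizing the scores, in the same order
group_means = np.array([60, 80, 70, 90])

# Least-squares line through the four (code, mean) points
slope, intercept = np.polyfit(codes, group_means, 1)
print("Slope:", slope)

# Actual change in mean score when moving from code 1 (Science) to code 2 (English)
actual_step = group_means[2] - group_means[1]
print("Science -> English:", actual_step)
```

The fitted slope is positive while the real step from Science to English is negative, which is the mismatch described above.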

Now, moving on to ordinal encoding, let’s apply it to another synthetic dataset, this time focusing on height and school categories. I’ve tailored this dataset to reflect average heights for different school levels: 110 cm for kindergarten, 140 cm for elementary school, and so on. Let’s see how this plays out.

import random

import numpy as np
import pandas as pd

# Set the parameters
mean_height = [110, 140, 160, 175, 180]  # Mean height in cm
grade = ["kindergarten", "elementary school", "middle school", "high school", "college"]
std_deviation = 5  # Standard deviation in cm
num_samples = 10  # Number of samples per grade

# Generate 10 samples per grade from a normal distribution
heights = []
grades = []
for i in range(5):
    heights.extend(np.random.normal(mean_height[i], std_deviation, num_samples))
    grades.extend([grade[i]] * num_samples)

data = {'Grade': grades, 'Height': heights}
df = pd.DataFrame(data)

sampled_index = random.sample(range(len(df)), 5)
sampled = df.iloc[sampled_index]
print(sampled)

The `OrdinalEncoder` from scikit-learn’s preprocessing module is a real gem for handling ordinal variables. By default it orders categories by their sorted values, so to preserve the real ordinal structure we pass the ordered list through the `categories` parameter. If you look at `encoder.categories_`, you can check how the variable was encoded.

from sklearn.preprocessing import OrdinalEncoder

# Pass the categories explicitly so the codes follow the school order
encoder = OrdinalEncoder(categories=[grade])
df['Category'] = encoder.fit_transform(df[['Grade']])
print(encoder.categories_)
print(df.iloc[sampled_index])
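To illustrate why the explicit category list matters, here is a small sketch comparing the default behavior with an explicitly ordered encoder. The variable names (`levels`, `X_levels`) are assumptions for illustration; the school levels are the ones used above.

```python
import numpy as np
from sklearn.preprocessing import OrdinalEncoder

levels = ["kindergarten", "elementary school", "middle school", "high school", "college"]
X_levels = np.array(levels, dtype=object).reshape(-1, 1)

# Default: categories are sorted alphabetically, which scrambles the school order
default_codes = OrdinalEncoder().fit_transform(X_levels).ravel()
print(default_codes)

# Explicit: codes follow the order of the list we pass in
explicit_codes = OrdinalEncoder(categories=[levels]).fit_transform(X_levels).ravel()
print(explicit_codes)
```

With the default setting, ‘college’ would receive the lowest code simply because it sorts first, so the numeric order would no longer reflect the level of education.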

When it comes to ordinal categorical variables, interpreting linear regression models becomes more straightforward. The encoding reflects the level of education in numerical order: the higher the education level, the higher its corresponding value.

from sklearn.linear_model import LinearRegression

model = LinearRegression()
model.fit(df[["Category"]], df[["Height"]])
coefficients = model.coef_
print("Coefficients:", coefficients)

height_diff = [mean_height[i] - mean_height[i - 1] for i in range(1, len(mean_height))]
print("Average Height Difference:", sum(height_diff) / len(height_diff))

The model reveals something quite intuitive: a one-unit change in school level corresponds to roughly a 17.5 cm increase in height. This makes good sense given our dataset!
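The close match between the slope and the average step is partly a property of these particular means. A quick noise-free check, again only a sketch on the group means rather than the sampled data, gives 17.5 for both numbers here; for other sets of means a least-squares slope is not guaranteed to equal the average step.

```python
import numpy as np

codes = np.arange(5)  # kindergarten=0 ... college=4
mean_height = np.array([110, 140, 160, 175, 180])  # means used to synthesize the data

# Least-squares slope through the (code, mean height) points
slope, intercept = np.polyfit(codes, mean_height, 1)

# Average difference between consecutive school levels
average_step = np.diff(mean_height).mean()

print("Slope:", slope)
print("Average step:", average_step)
```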

So, let’s wrap up with a quick summary of label/ordinal encoding:

Pros:

– Simplicity: It’s user-friendly and easy to implement.

– Efficiency: This method is light on computational resources and memory, creating just one new numerical feature.

– Ideal for Ordinal Categories: It shines when dealing with categorical variables that have a natural order.

Cons:

– Implied Order: One potential downside is that it can introduce a sense of order where none exists, potentially leading to misinterpretation (like assuming a category labeled ‘3’ is superior to one labeled ‘2’).

– Not Always Suitable: Certain algorithms, such as linear or logistic regression, might incorrectly interpret the encoded numerical values as having ordinal significance.

One-Hot Encoding

Next up, let’s dive into another encoding technique that addresses the interpretability issue: one-hot encoding.

The core issue with label encoding is that it imposes an ordinal structure on variables that don’t inherently have one, by replacing categories with numerical values. One-hot encoding tackles this by creating a separate column for each class. Each of these columns contains binary values indicating whether the row belongs to that class. It’s like pivoting the data to a wider format, for those who are familiar with that concept. To make this clearer, let’s see an example using the math score and subject data. The `OneHotEncoder` from sklearn.preprocessing is perfect for this task.

from sklearn.preprocessing import OneHotEncoder

data = {'Score': scores, 'Subject': subjects}
df_math = pd.DataFrame(data)
y = df_math["Score"]  # Target
x = df_math.drop('Score', axis=1)

# Define encoder
encoder = OneHotEncoder()
x_ohe = encoder.fit_transform(x)
print("Type:", type(x_ohe))

# Convert x_ohe to an array so that it's more compatible
x_ohe = x_ohe.toarray()
print("Dimension:", x_ohe.shape)

# Convert back to a pandas dataframe
x_ohe = pd.DataFrame(x_ohe, columns=encoder.get_feature_names_out())
df_math_ohe = pd.concat([y, x_ohe], axis=1)
sampled_ohe_idx = random.sample(range(len(df_math_ohe)), 5)
print(df_math_ohe.iloc[sampled_ohe_idx])

Now, instead of having a single ‘Subject’ column, our dataset features individual columns for each subject. This effectively eliminates any unintended ordinal structure! However, the process here is a bit more involved, so let me explain.

As with label/ordinal encoding, you first need to define your encoder. But the output of one-hot encoding differs: while label/ordinal encoding returns a numpy array, one-hot encoding by default produces a `scipy.sparse._csr.csr_matrix`. To integrate this with a pandas dataframe, you’ll need to convert it into an array. Then, create a new dataframe with this array and assign column names, which you can get from the encoder’s `get_feature_names_out()` method. Alternatively, you can get a numpy array directly by setting `sparse_output=False` when defining the encoder.

However, in practical applications, you don’t need to go through all these steps. I’ll show you a more streamlined approach using `make_column_transformer` towards the end of our discussion!

Now, let’s proceed with running a linear regression on our one-hot encoded data. This should make the interpretation much easier, right?

model = LinearRegression()
model.fit(x_ohe, y)
coefficients = model.coef_
intercept = model.intercept_
print("Coefficients:", coefficients)
print(encoder.get_feature_names_out())
print("Intercept:", intercept)

But wait, why are the coefficients so tiny, and the intercept so large? What’s going wrong here? This conundrum is a specific issue in linear regression known as perfect multicollinearity. Perfect multicollinearity occurs when one variable in a linear regression model can be perfectly predicted from the others, which in the case of one-hot encoding happens because one class can be inferred if all the other classes are zero. To sidestep this problem, we can drop one of the classes by setting `OneHotEncoder(drop="first")`. Let’s check out the impact of this adjustment.

encoder_with_drop = OneHotEncoder(drop="first")
x_ohe_drop = encoder_with_drop.fit_transform(x)

# If you don't set sparse_output=False, you need to run the following to convert the type
x_ohe_drop = x_ohe_drop.toarray()
x_ohe_drop = pd.DataFrame(x_ohe_drop, columns=encoder_with_drop.get_feature_names_out())

model = LinearRegression()
model.fit(x_ohe_drop, y)
coefficients = model.coef_
intercept = model.intercept_
print("Coefficients:", coefficients)
print(encoder_with_drop.get_feature_names_out())
print("Intercept:", intercept)

Here, the column for English has been dropped, and now the coefficients seem much more reasonable! Plus, they’re easier to interpret. When all the one-hot encoded columns are zero (indicating English as the favorite subject), we predict the test score to be around 71 (in line with our defined mean score of 70 for English). For History, it would be 71 minus 11, which equals 60; for Math, 71 plus 19, which equals 90; and so on.
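One way to see the redundancy behind perfect multicollinearity is to look at the rank of the design matrix. The sketch below builds a tiny hand-made example (the six rows and four classes are assumptions for illustration) and compares the full set of dummy columns plus an intercept with the version where the first class is dropped.

```python
import numpy as np

# Six rows spread over four classes, one-hot encoded by hand
class_ids = np.array([0, 1, 2, 3, 0, 1])
dummies = np.eye(4)[class_ids]
intercept_col = np.ones((len(class_ids), 1))

# Intercept + all four dummy columns: 5 columns, but the dummies sum to the intercept
X_full = np.hstack([intercept_col, dummies])
# Intercept + three dummies (first class dropped): no redundant column left
X_drop = np.hstack([intercept_col, dummies[:, 1:]])

rank_full = np.linalg.matrix_rank(X_full)
rank_drop = np.linalg.matrix_rank(X_drop)
print(X_full.shape[1], rank_full)
print(X_drop.shape[1], rank_drop)
```

The full matrix has more columns than its rank, so the coefficients are not uniquely determined; after dropping one class, the column count and the rank agree.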

However, there’s a significant caveat with one-hot encoding: it can lead to high-dimensional datasets, especially when the variable has many classes. Let’s consider a dataset that contains 1000 rows, each representing a unique product with various features, including a category that spans 199 different types.

# Define 199 categories (for simplicity, these are just numbered)
categories = [f"Category_{i}" for i in range(1, 200)]
manufacturers = ["Manufacturer_A", "Manufacturer_B", "Manufacturer_C"]
satisfied = ["Satisfied", "Not Satisfied"]
n_rows = 1000

# Generate random data
data = {
    "Product_ID": [f"Product_{i}" for i in range(n_rows)],
    "Category": [random.choice(categories) for _ in range(n_rows)],
    "Price": [round(random.uniform(10, 500), 2) for _ in range(n_rows)],
    "Quality": [random.choice(satisfied) for _ in range(n_rows)],
    "Manufacturer": [random.choice(manufacturers) for _ in range(n_rows)],
}

df = pd.DataFrame(data)
print("Dimension before one-hot encoding:", df.shape)
print(df.head())

Note that the dataset’s dimensions are 1000 rows by 5 columns. Now, let’s observe the changes after applying a one-hot encoder.

# Now do one-hot encoding
encoder = OneHotEncoder(sparse_output=False)

# Reshape the 'Category' column to a 2D array as required by the OneHotEncoder
category_array = df['Category'].values.reshape(-1, 1)
one_hot_encoded_array = encoder.fit_transform(category_array)
one_hot_encoded_df = pd.DataFrame(one_hot_encoded_array, columns=encoder.get_feature_names_out(['Category']))
encoded_df = pd.concat([df.drop('Category', axis=1), one_hot_encoded_df], axis=1)
print("Dimension after one-hot encoding:", encoded_df.shape)

After applying one-hot encoding, our dataset’s dimension balloons to 1000×201 in this run (the exact column count depends on which categories the random draw produced), about 40 times wider than before. This increase is a concern, as it demands more memory. Moreover, you’ll notice that most of the values in the newly created columns are zeros, resulting in what we call a sparse dataset. Certain models, especially tree-based ones, struggle with sparse data. Furthermore, other challenges arise when dealing with high-dimensional data, often referred to as the ‘curse of dimensionality.’ Also, since one-hot encoding treats each class as an individual column, we lose any ordinal information. Therefore, if the classes in your variable inherently have a hierarchical order, one-hot encoding might not be your best choice.

How do we tackle these disadvantages? One approach is to use a different encoding method. Alternatively, you can limit the number of classes in the variable. Often, even with many classes, the majority of values for a variable are concentrated in just a few classes. In such cases, treating the minority classes as ‘others’ can be effective. This can be achieved by setting parameters like `min_frequency` or `max_categories` in `OneHotEncoder`. Another strategy for dealing with sparse data involves techniques like feature hashing, which essentially simplifies the representation by mapping multiple categories to a lower-dimensional space using a hash function, or dimension reduction techniques like PCA.

Here’s a quick summary of one-hot encoding:

Pros:

– Prevents Misleading Interpretations: It avoids the risk of models misinterpreting the data as having some kind of order, an issue prevalent in label/ordinal encoding.

– Suitable for Non-Ordinal Features: Ideal for categorical data without an ordinal relationship.

Cons:

– Dimensionality Increase: Leads to a significant increase in the dataset’s dimensionality, which can be problematic, especially for variables with many categories.

– Sparse Matrix: Results in many columns filled with zeros, creating sparse data.

– Not Efficient with High-Cardinality Features: Less effective for variables with a large number of categories.

Target Encoding

Let’s now explore target encoding, a technique particularly effective with high-cardinality data and in models like tree-based algorithms.

The essence of target encoding is to leverage the information in the value of the dependent variable. Its implementation varies depending on the task. In regression, we encode the categorical variable by the mean of the dependent variable for each category. For binary classification, we encode the categorical variable with the probability of the positive class (calculated as the number of rows in that category where the outcome is 1, divided by the total number of rows in the category). In multiclass classification, the categorical variable is encoded based on the probability of belonging to each class, resulting in as many new columns as there are classes in the dependent variable. To clarify, let’s use the same product dataset we employed for one-hot encoding.
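Before turning to scikit-learn, the regression and binary cases can be sketched by hand with a pandas `groupby`. The `toy` frame and its column names are assumptions for illustration, and this plain version applies no smoothing or cross fitting.

```python
import pandas as pd

# Hypothetical toy data, used only for illustration
toy = pd.DataFrame({
    "Category": ["A", "A", "B", "B", "B"],
    "Price": [10.0, 20.0, 30.0, 40.0, 50.0],  # regression target
    "Satisfied": [1, 0, 1, 1, 0],              # binary target
})

# Regression: each category is replaced by the mean target of its rows
price_means = toy.groupby("Category")["Price"].mean()
toy["Category_te_price"] = toy["Category"].map(price_means)

# Binary classification: the mean of a 0/1 target is the share of positives
satisfied_rate = toy.groupby("Category")["Satisfied"].mean()
toy["Category_te_satisfied"] = toy["Category"].map(satisfied_rate)

print(toy)
```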

Let’s begin with target encoding for a regression task. Imagine we want to predict the price of goods and aim to encode the product type. As with the other encoders, we use `TargetEncoder` from sklearn.preprocessing!

from sklearn.preprocessing import TargetEncoder

x = df.drop(["Price"], axis=1)
x_need_encode = df["Category"].to_frame()
y = df["Price"]

# Define encoder
encoder = TargetEncoder()
x_encoded = encoder.fit_transform(x_need_encode, y)

# Encoder with 0 smoothing
encoder_no_smooth = TargetEncoder(smooth=0)
x_encoded_no_smooth = encoder_no_smooth.fit_transform(x_need_encode, y)

x_encoded = pd.DataFrame(x_encoded, columns=["encoded_category"])
data_target = pd.concat([x, x_encoded], axis=1)

print("Dimension before encoding:", df.shape)
print("Dimension after encoding:", data_target.shape)
print("---------")
print("Encoding")
print(encoder.encodings_[0][:5])
print(encoder.categories_[0][:5])
print(" ")
print("Encoding with no smooth")
print(encoder_no_smooth.encodings_[0][:5])
print(encoder_no_smooth.categories_[0][:5])
print("---------")
print("Mean by Category")
print(df.groupby("Category")["Price"].mean().head())
print("---------")
print("dataset:")
print(data_target.head())

After the encoding, you’ll notice that, despite the variable having many classes, the dataset’s dimension remains unchanged (1000 x 5). You can also observe how each class is encoded. Although I mentioned that the encoding for each class is based on the mean of the target variable for that class, you’ll find that the actual mean differs slightly from the encoding with the default settings. This discrepancy arises because, by default, the encoder automatically selects a smoothing parameter. This parameter blends the local category mean with the overall global mean, which is particularly useful to prevent overfitting in categories with limited samples. If we set `smooth=0`, the encoded values align precisely with the actual means.
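The blending can be sketched by hand. The version below uses a fixed smoothing strength `m` and the usual shrinkage formula (n × category mean + m × global mean) / (n + m); `m` and the toy data are assumptions chosen for illustration. To my understanding this is the same idea a numeric `smooth` value applies, whereas the default `smooth="auto"` picks the strength from the data, so treat this as a sketch of the mechanism rather than a reproduction of the default.

```python
import pandas as pd

# Hypothetical toy data, used only for illustration
toy = pd.DataFrame({
    "Category": ["A", "A", "B", "B", "B"],
    "Price": [10.0, 20.0, 30.0, 40.0, 50.0],
})
m = 2.0  # hypothetical smoothing strength

global_mean = toy["Price"].mean()
stats = toy.groupby("Category")["Price"].agg(["mean", "count"])

# Shrink each category mean toward the global mean; small categories move the most
smoothed = (stats["count"] * stats["mean"] + m * global_mean) / (stats["count"] + m)
print(smoothed)
```

Setting `m = 0` in this sketch returns the plain category means, which mirrors the `smooth=0` behavior described above.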

Now, let’s consider binary classification. Imagine our goal is to classify whether the quality of a product is satisfactory. In this scenario, the encoded value represents the probability of a category being ‘satisfactory.’

x = df.drop(["Quality"], axis=1)
x_need_encode = df["Category"].to_frame()
y = df["Quality"]

# Define encoder
encoder = TargetEncoder()
x_encoded = encoder.fit_transform(x_need_encode, y)
x_encoded = pd.DataFrame(x_encoded, columns=["encoded_category"])
data_target = pd.concat([x, x_encoded], axis=1)

print("Dimension:", data_target.shape)
print("---------")
print("Encoding")
print(encoder.encodings_[0][:5])
print(encoder.categories_[0][:5])
print("---------")
print(encoder.classes_)
print("---------")
print("dataset:")
print(data_target.head())

You can indeed see that `encoded_category` represents the probability of being “Satisfied” (a float value between 0 and 1). To see how each class is encoded, you can check the `classes_` attribute of the encoder. For binary classification, the first value in the list is usually dropped, meaning that the column here indicates the probability of being satisfied. Conveniently, the encoder automatically detects the type of task, so there’s no need to specify that it’s a binary classification.

Finally, let’s see a multi-class classification example. Suppose we’re predicting which manufacturer produced a product.

x = df.drop(["Manufacturer"], axis=1)
x_need_encode = df["Category"].to_frame()
y = df["Manufacturer"]

# Define encoder
encoder = TargetEncoder()
x_encoded = encoder.fit_transform(x_need_encode, y)
x_encoded = pd.DataFrame(x_encoded, columns=encoder.classes_)
data_target = pd.concat([x, x_encoded], axis=1)

print("Dimension:", data_target.shape)
print("---------")
print("Encoding")
print(encoder.encodings_[0][:5])
print(encoder.categories_[0][:5])
print("---------")
print("dataset:")
print(data_target.head())

After encoding, you’ll see that we now have a column for each manufacturer. These columns indicate the probability that a product in a given category was produced by that manufacturer. Although our dataset has expanded slightly, the number of classes for the dependent variable is usually much smaller, so it’s unlikely to cause issues.

Target encoding is particularly advantageous for tree-based models. These models make splits based on feature values that most effectively separate the target variable. By directly incorporating the mean of the target variable, target encoding provides a clear and efficient means for the model to make these splits, often more so than other encoding methods.

However, caution is needed with target encoding. If there are only a few observations for a class, and these don’t represent the true mean for that class, there’s a risk of overfitting.

This leads to another crucial point: it’s essential to perform target encoding after splitting your data into training and testing sets. Doing it beforehand can lead to data leakage, as the encoding would be influenced by the outcomes in the test dataset. This could make the model appear to perform exceptionally well, giving you a false impression of its efficacy. Therefore, to accurately assess your model’s performance, ensure target encoding is done after the train-test split.

Here’s a quick summary of target encoding:

Pros:

– Keeps Cardinality in Check: It’s highly effective for high-cardinality features as it doesn’t increase the feature space.

– Can Capture Information Within Labels: By incorporating target data, it often enhances predictive performance.

– Useful for Tree-Based Models: Particularly advantageous for complex models such as random forests or gradient boosting machines.

Cons:

– Risk of Overfitting: There’s a heightened risk of overfitting, especially when categories have a limited number of observations.

– Target Leakage: It may inadvertently introduce future information into the model, i.e., details from the target variable that wouldn’t be available during actual predictions.

– Less Interpretable: Since the transformations are based on the target, they can be more challenging to interpret compared to methods like one-hot or label encoding.

Final Tip

To wrap up, I’d like to offer a practical tip. Throughout this discussion, we’ve looked at different encoding techniques, but in reality, you might want to apply various encodings to different variables within a dataset. This is where `make_column_transformer` from sklearn.compose comes in handy. For example, suppose you’re predicting product prices and decide to use target encoding for ‘Category’ because of its high cardinality, while applying one-hot encoding to ‘Manufacturer’ and ‘Quality’. To do this, you’d define lists containing the names of the variables for each encoding type and apply the function as shown below. This approach lets you handle the transformed data seamlessly, leading you to an efficiently encoded dataset ready for your analyses!

from sklearn.compose import make_column_transformer

# One-hot encode the low-cardinality columns, target encode the high-cardinality one
ohe_cols = ["Manufacturer", "Quality"]
te_cols = ["Category"]

encoding = make_column_transformer(
    (OneHotEncoder(sparse_output=False), ohe_cols),
    (TargetEncoder(), te_cols)
)

x = df.drop(["Price"], axis=1)
y = df["Price"]

# Fit the transformer
x_encoded = encoding.fit_transform(x, y)
x_encoded = pd.DataFrame(x_encoded, columns=encoding.get_feature_names_out())
x_rest = x.drop(ohe_cols + te_cols, axis=1)
print(pd.concat([x_rest, x_encoded], axis=1).head())

Thank you so much for taking the time to read through this! When I first embarked on my machine learning journey, choosing the right encoding techniques and understanding their implementation was quite a maze for me. I genuinely hope this article has shed some light for you and made your path a bit clearer!

Source:

Scikit-learn: Machine Learning in Python, Pedregosa et al., JMLR 12, pp. 2825–2830, 2011.

Scikit-learn documentation:

Ordinal encoder: https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.OrdinalEncoder.html#sklearn.preprocessing.OrdinalEncoder

Target encoder: https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.TargetEncoder.html#sklearn.preprocessing.TargetEncoder

One-hot encoder: https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.OneHotEncoder.html#sklearn.preprocessing.OneHotEncoder
