Generative AI is rapidly ushering in a new era of computing for productivity, content creation, gaming and more. It takes the form of large language model (LLM) applications like ChatGPT, image generators such as Stable Diffusion and Adobe Firefly, and game rendering techniques like NVIDIA DLSS 3 Frame Generation.

At the Microsoft Build developer conference, NVIDIA and Microsoft today showcased a suite of advancements in Windows 11 PCs and workstations with NVIDIA RTX GPUs to meet the demands of generative AI.

More than 400 Windows apps and games already employ AI technology, accelerated by dedicated processors on RTX GPUs called Tensor Cores. Today’s announcements, which include tools to develop AI on Windows PCs, frameworks to optimize and deploy AI, and driver performance and efficiency improvements, will empower developers to build the next generation of Windows apps with generative AI at their core.

“AI will be the single largest driver of innovation for Windows customers in the coming years,” said Pavan Davuluri, corporate vice president of Windows silicon and system integration at Microsoft. “By working in concert with NVIDIA on hardware and software optimizations, we’re equipping developers with a transformative, high-performance, easy-to-deploy experience.”

Develop Models With Windows Subsystem for Linux

AI development has traditionally taken place on Linux, requiring developers to either dual-boot their systems or use multiple PCs to work in their AI development OS while still accessing the breadth and depth of the Windows ecosystem.

Over the past few years, Microsoft has been building a powerful capability to run Linux directly within the Windows OS, called Windows Subsystem for Linux (WSL). NVIDIA has been working closely with Microsoft to deliver GPU acceleration and support for the entire NVIDIA AI software stack inside WSL. Now developers can use a Windows PC for all their local AI development needs with support for GPU-accelerated deep learning frameworks on WSL.
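As a quick sanity check before starting local development, a developer might confirm that a shell is actually running inside WSL and that the NVIDIA driver tooling is visible there. The following is a minimal sketch using only the Python standard library. The function names are hypothetical, and the kernel-string test is a common heuristic rather than an official detection method.

```python
import platform
import shutil
from typing import Optional


def running_in_wsl() -> bool:
    """Heuristic (an assumption): WSL kernels usually include
    'microsoft' in their release string."""
    return "microsoft" in platform.uname().release.lower()


def nvidia_smi_path() -> Optional[str]:
    """Return the path to the nvidia-smi tool if it is on PATH, else None."""
    return shutil.which("nvidia-smi")


if __name__ == "__main__":
    print("Running in WSL:", running_in_wsl())
    print("nvidia-smi found at:", nvidia_smi_path())
```

If `nvidia-smi` is not found, the GPU may still be usable, so treat the result as a hint rather than proof either way.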

With NVIDIA RTX GPUs delivering up to 48GB of RAM in desktop workstations, developers can now work with models on Windows that were previously only available on servers. The large memory also improves the performance and quality for local fine-tuning of AI models, enabling designers to customize them to their own style or content. And because the same NVIDIA AI software stack runs on NVIDIA data center GPUs, it’s easy for developers to push their models to Microsoft Azure Cloud for large training runs.
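To get a rough feel for what 48GB allows, the memory needed just to hold a model’s weights can be estimated as parameter count times bytes per parameter. The sketch below is a back-of-the-envelope calculation, not a sizing tool. It assumes decimal gigabytes, ignores activations, optimizer state and framework overhead, and uses hypothetical 12-billion and 70-billion-parameter models as examples.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Approximate memory for the weights alone, in decimal GB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_in_gpu(num_params: float, dtype: str = "fp16",
                gpu_memory_gb: float = 48.0) -> bool:
    """True if the weights alone fit in the given GPU memory.
    Real workloads need extra headroom beyond this."""
    return weight_memory_gb(num_params, dtype) <= gpu_memory_gb


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only for illustration.
    for params in (12e9, 70e9):
        gb = weight_memory_gb(params, "fp16")
        print(f"{params / 1e9:.0f}B params in fp16: {gb:.1f} GB, "
              f"fits in 48 GB: {fits_in_gpu(params)}")
```

Fine-tuning typically needs several times the weight memory, so the estimate is most useful as a lower bound.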

Rapidly Optimize and Deploy Models

With trained models in hand, developers need to optimize and deploy AI for target devices.

Microsoft released the Microsoft Olive toolchain for optimization and conversion of PyTorch models to ONNX, enabling developers to automatically tap into GPU hardware acceleration such as RTX Tensor Cores. Developers can optimize models via Olive and ONNX, and deploy Tensor Core-accelerated models to PC or cloud. Microsoft continues to invest in making PyTorch and related tools and frameworks work seamlessly with WSL to provide the best AI model development experience.
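Once a model is in ONNX form, an application typically chooses which hardware backend runs it. The sketch below illustrates one way that choice might look with ONNX Runtime execution providers. It is an assumption about how an app could be structured, not part of the Olive toolchain. The preference order and the helper names are hypothetical, and the code falls back gracefully if the `onnxruntime` package is not installed.

```python
from typing import List, Optional, Sequence

# Assumed preference order: DirectML first, then CUDA, then CPU.
PREFERRED_PROVIDERS = (
    "DmlExecutionProvider",
    "CUDAExecutionProvider",
    "CPUExecutionProvider",
)


def pick_provider(available: Sequence[str]) -> Optional[str]:
    """Return the most preferred provider that is available, or None."""
    for name in PREFERRED_PROVIDERS:
        if name in available:
            return name
    return None


def available_providers() -> List[str]:
    """Ask ONNX Runtime which providers this install supports.
    Returns an empty list if onnxruntime is not installed."""
    try:
        import onnxruntime as ort
    except ImportError:
        return []
    return list(ort.get_available_providers())


if __name__ == "__main__":
    provider = pick_provider(available_providers())
    print("Selected execution provider:", provider)
    # With a real model file (the path here is a placeholder), a session
    # could then be created along these lines:
    #   ort.InferenceSession("model.onnx", providers=[provider])
```

Keeping the selection logic separate from session creation makes it easy to test on machines without a GPU.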

Improved AI Performance, Power Efficiency

Once deployed, generative AI models demand incredible inference performance. RTX Tensor Cores deliver up to 1,400 Tensor TFLOPS for AI inferencing. Over the last year, NVIDIA has worked to improve DirectML performance to take full advantage of RTX hardware.

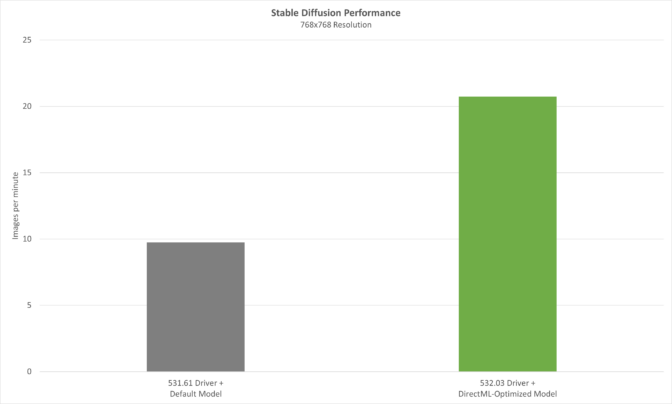

On May 24, we’ll release our latest optimizations in Release 532.03 drivers that combine with Olive-optimized models to deliver big boosts in AI performance. Using an Olive-optimized version of the Stable Diffusion text-to-image generator with the popular Automatic1111 distribution, performance is improved over 2x with the new driver.

With AI coming to nearly every Windows application, efficiently delivering inference performance is critical, especially for laptops. Coming soon, NVIDIA will introduce new Max-Q low-power inferencing for AI-only workloads on RTX GPUs. It optimizes Tensor Core performance while keeping power consumption of the GPU as low as possible, extending battery life and maintaining a cool, quiet system. The GPU can then dynamically scale up for maximum AI performance when the workload demands it.

Join the PC AI Revolution Now

Top software developers, like Adobe, DxO, ON1 and Topaz, have already incorporated NVIDIA AI technology with more than 400 Windows applications and games optimized for RTX Tensor Cores.

“AI, machine learning and deep learning power all Adobe applications and drive the future of creativity,” said Ely Greenfield, CTO of digital media at Adobe. “Working with NVIDIA we continuously optimize AI model performance to deliver the best possible experience for our Windows users on RTX GPUs.”

“NVIDIA helps to optimize our WinML model performance on RTX GPUs, which is accelerating the AI in DxO DeepPRIME, as well as providing better denoising and demosaicing, faster,” said Renaud Capolunghi, senior vice president of engineering at DxO.

“Working with NVIDIA and Microsoft to accelerate our AI models running in Windows on RTX GPUs is providing a huge benefit to our audience,” said Dan Harlacher, vice president of products at ON1. “We’re already seeing 1.5x performance gains in our suite of AI-powered photography editing software.”

“Our extensive work with NVIDIA has led to improvements across our suite of photo- and video-editing applications,” said Suraj Raghuraman, head of AI engine development at Topaz Labs. “With RTX GPUs, AI performance has improved drastically, enhancing the experience for users on Windows PCs.”

NVIDIA and Microsoft are making several resources available for developers to test drive top generative AI models on Windows PCs. An Olive-optimized version of the Dolly 2.0 large language model is available on Hugging Face. And a PC-optimized version of the NVIDIA NeMo large language model for conversational AI is coming soon to Hugging Face.

Developers can also learn how to optimize their applications end-to-end to take full advantage of GPU acceleration via the NVIDIA AI for accelerating applications developer site.

The complementary technologies behind Microsoft’s Windows platform and NVIDIA’s dynamic AI hardware and software stack will help developers quickly and easily develop and deploy generative AI on Windows 11.

Microsoft Build runs through Thursday, May 25. Tune in to learn more on shaping the future of work with AI.
