Background

Much recent work on large language models (LLMs) has explored the phenomenon of in-context learning (ICL). In this paradigm, an LLM learns to solve a new task at inference time (without any change to its weights) by being fed a prompt with examples of that task. For example, a prompt might give an LLM examples of translations, word corrections, or arithmetic, then ask it to translate a new sentence, correct a new word, or solve a new arithmetic problem.
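To make the prompt structure concrete, here is a minimal sketch of how such a few-shot prompt might be assembled. The function name `build_few_shot_prompt`, the `Input`/`Output` field names, and the arithmetic demonstrations are all illustrative assumptions, not a format taken from any particular paper.

```python
def build_few_shot_prompt(examples, query, input_label="Input", output_label="Output"):
    """Format (input, output) demonstrations followed by an unanswered query."""
    lines = []
    for source, target in examples:
        lines.append(f"{input_label}: {source}")
        lines.append(f"{output_label}: {target}")
        lines.append("")  # blank line between demonstrations
    # The final query has no output; the model is expected to complete it.
    lines.append(f"{input_label}: {query}")
    lines.append(f"{output_label}:")
    return "\n".join(lines)


# Hypothetical arithmetic demonstrations; any task with input-output pairs works.
demos = [("2 + 3", "5"), ("7 + 1", "8"), ("4 + 4", "8")]
prompt = build_few_shot_prompt(demos, "6 + 2")
print(prompt)
```

The model's weights never change here: everything it knows about the task at inference time is carried by the text of `prompt`.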

In-context learning has an important relationship with prompting. If you ask ChatGPT to categorize different pieces of writing by theme, you might first give it example pieces with their correct categorizations. This essentially achieves the same thing, but is presented in a more “fluent” format. Recent works have explored how we can manipulate prompts to allow LLMs to perform certain tasks more easily, such as “Teaching Algorithmic Reasoning via In-Context Learning” by Zhou et al.

The GPT-4 technical report includes examples of questions, answers, and answer explanations when asking the model to answer new questions. The (clipped) example from Section A.8 gives the model a few example questions, answers, and explanations for the multiple choice section of an AP Art History Exam. At the end, the prompt provides just a question and asks the model to provide its (multiple choice) answer and an explanation.

The crux of why ICL has been so interesting since it was introduced in the original GPT-3 paper is that, without any further fine-tuning, a pre-trained model is able to learn to do something entirely new by merely being shown a few input-output examples. As this Stanford blog notes, ICL is competitive on many NLP benchmarks in comparison with models trained on much more labeled data.

This piece reviews literature that has attempted to understand ICL, and is supplemented by two paper summaries and author Q&As with researchers who’ve published on the topic.

What’s happening in in-context learning? The Literature

ICL was defined in “Language Models are Few-Shot Learners” by Brown et al., the paper that introduced GPT-3:

During unsupervised pre-training, a language model develops a broad set of skills and pattern recognition abilities. It then uses these abilities at inference time to rapidly adapt to or recognize the desired task. We use the term “in-context learning” to describe the inner loop of this process, which occurs within the forward-pass upon each sequence.

However, the mechanisms underlying ICL (an understanding of why LLMs are able to rapidly adapt to new tasks “without further training”) remain the subject of competing explanations. These attempts at explanation are the subject of this section.

Early Analyses

In-context learning was first seriously contended with in Brown et al., which both observed GPT-3’s capability for ICL and observed that larger models made “increasingly efficient use of in-context information,” hypothesizing that further scaling would result in additional gains for ICL abilities. While the ICL abilities GPT-3 displayed were impressive, it’s worth noting that GPT-3 showed no clear ICL on the Winograd dataset and mixed results from ICL on commonsense reasoning tasks.

One early work, “An Explanation of In-context Learning as Implicit Bayesian Inference” by Xie et al., attempted to develop a mathematical framework for understanding how ICL emerges during pre-training. At a high level, the authors’ framework understands ICL as “locating” latent concepts the LM has acquired from its training data: all components of the prompt (format, inputs, outputs, and the input-output mapping) may be used to locate a concept. In more detail, the LM might infer that the task demonstrated by the prompt’s training examples is sentiment classification (the “concept” may be more detailed than this, e.g. sentiment classification of financial news), then apply that mapping to the test input. The authors give more detail on their approach:

In this paper, we study how in-context learning can emerge when pretraining documents have long-range coherence. Here, the LM must infer a latent document-level concept to generate coherent next tokens during pretraining. At test time, in-context learning occurs when the LM also infers a shared latent concept between examples in a prompt. We prove when this occurs despite a distribution mismatch between prompts and pretraining data in a setting where the pretraining distribution is a mixture of HMMs.

To study the phenomenon, the authors introduce a simple pretraining distribution where ICL emerges:

To generate a document, we first draw a latent concept $\theta$, which parameterizes the transitions of a Hidden Markov Model (HMM), then sample a sequence of tokens from this HMM… During pretraining, the LM must infer the latent concept across multiple sentences to generate coherent continuations… in-context learning occurs when the LM also infers a shared prompt concept across examples to make a prediction.

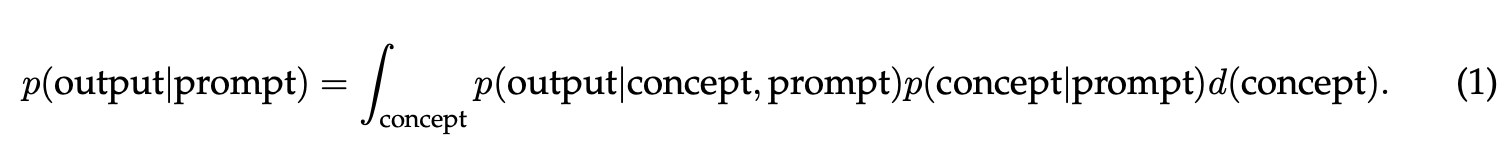

The authors then argue that, when the LM fits its pretraining distribution p exactly, ICL characterizes the conditional distribution of completions given prompts p(output|prompt) under the pretraining distribution; the prompt is generated from another distribution (since the prompt is not drawn directly from the training data). This conditional posterior predictive distribution marginalizes out latent concepts.
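Written out, marginalizing over latent concepts takes the standard posterior predictive form. The notation here follows this article's p(output|prompt) and p(concept|prompt) and is my own rendering, not copied from the paper:

$$
p(\text{output} \mid \text{prompt}) = \int_{\text{concept}} p(\text{output} \mid \text{concept}, \text{prompt}) \, p(\text{concept} \mid \text{prompt}) \, d(\text{concept})
$$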

If p(concept|prompt) concentrates on the prompt concept with more examples, then the LM learns via marginalization by “selecting” the prompt concept; this is equivalent to performing Bayesian inference.

In Section 2, the authors characterize their theoretical setting: the pretraining distribution is a mixture of HMMs (MoHMM). It involves a latent concept $\theta$ from a family of concepts, which defines a distribution over observed tokens from a vocabulary. They generate a document by sampling a concept from a prior $p(\theta)$ and then sampling the document given the concept. The probability of the document (a length $T$ sequence) given the concept is defined by an HMM, and $\theta$ determines the transition probability matrix of the HMM hidden states. Their in-context predictor, a Bayesian predictor, outputs the most likely prediction over the pretraining distribution conditioned on the prompt from the prompt distribution.
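A toy version of this setting can help build intuition. The sketch below is my own drastic simplification, not the authors' construction: it assumes a family of just two concepts, two hidden states, two tokens, and shared emissions, and every name and number in it is hypothetical. It samples documents from a mixture of HMMs, computes the posterior over concepts with the forward algorithm, and marginalizes over concepts to predict the next token.

```python
import random

# Two hypothetical "concepts"; each theta is the transition matrix of a 2-state HMM.
# Emissions and the initial state distribution are shared to keep the sketch small.
CONCEPTS = {
    "sticky": [[0.9, 0.1], [0.1, 0.9]],    # hidden state tends to persist
    "flipping": [[0.1, 0.9], [0.9, 0.1]],  # hidden state tends to alternate
}
EMISSION = [[0.9, 0.1], [0.1, 0.9]]  # EMISSION[state][token]; tokens are 0 or 1
INITIAL = [0.5, 0.5]
PRIOR = {"sticky": 0.5, "flipping": 0.5}  # p(theta)


def sample_document(transition, length, rng):
    """Sample a length-T token sequence from the HMM defined by `transition`."""
    state = rng.choices([0, 1], weights=INITIAL)[0]
    tokens = []
    for _ in range(length):
        tokens.append(rng.choices([0, 1], weights=EMISSION[state])[0])
        state = rng.choices([0, 1], weights=transition[state])[0]
    return tokens


def forward(transition, tokens):
    """Forward algorithm: alpha[s] = p(tokens, last hidden state = s | theta)."""
    alpha = [INITIAL[s] * EMISSION[s][tokens[0]] for s in range(2)]
    for tok in tokens[1:]:
        alpha = [
            sum(alpha[prev] * transition[prev][s] for prev in range(2)) * EMISSION[s][tok]
            for s in range(2)
        ]
    return alpha


def concept_posterior(tokens):
    """p(theta | tokens) via Bayes' rule over the finite concept family."""
    joint = {name: PRIOR[name] * sum(forward(t, tokens)) for name, t in CONCEPTS.items()}
    total = sum(joint.values())
    return {name: value / total for name, value in joint.items()}


def predict_next_token(tokens):
    """Bayesian predictor: marginalize the next-token distribution over concepts."""
    posterior = concept_posterior(tokens)
    probs = [0.0, 0.0]
    for name, transition in CONCEPTS.items():
        alpha = forward(transition, tokens)
        likelihood = sum(alpha)
        for tok in range(2):
            next_joint = sum(
                alpha[prev] * transition[prev][s] * EMISSION[s][tok]
                for prev in range(2)
                for s in range(2)
            )
            probs[tok] += posterior[name] * next_joint / likelihood
    return probs


rng = random.Random(0)
document = sample_document(CONCEPTS["flipping"], 30, rng)
print(concept_posterior(document))
print(predict_next_token(document))
```

As the sequence grows, the posterior tends to pile up on whichever concept generated it, which is the “selecting the prompt concept” behavior described above. Note that this toy has no mismatch between prompt and pretraining distributions, which is the hard part the paper actually analyzes.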

Gabriel Poesia, though he finds the take on ICL convincing, noted that the paper’s argument might miss a link between the authors’ Bayesian predictor and Transformers trained via maximum likelihood: that a Bayesian predictor would perform ICL and achieve optimal 0-1 loss doesn’t imply any model achieving optimal 0-1 loss behaves equivalently to the Bayesian predictor.

Ferenc Huszár further notes that the authors’ analysis makes very strong assumptions about how the ICL task embedded in the sequence is related to the MoHMM distribution, and comments that the in-context task under study is more like a few-shot sequence completion task than a classification task. I think this isn’t entirely damning for the paper’s insights, and Bayesian inference may yet explain some forms of extrapolation.

A second work, “Rethinking the Role of Demonstrations: What Makes In-Context Learning Work?” by Min et al., the subject of one of this article’s Q&As, analyzed which aspects of a prompt affect downstream task performance in in-context learners. The authors identify four aspects of the demonstrations (considered as input-output pairs $(x_1,y_1), \dots, (x_k,y_k)$) that could provide learning signal:

- The input-label mapping

- The distribution of the input text

- The label space

- The format

In particular, the authors find that ground truth demonstrations are not required to achieve improved performance on a range of classification and multi-choice tasks: demonstrations with random labels achieve similar improvement to demonstrations with gold labels, and both outperform a zero-shot baseline that asks an LM to perform these tasks with no demonstrations. The authors also find that conditioning on the label space significantly contributes to performance gains when using demonstrations. The format also appears to be critical to improvements from demonstrations: removing the format, or providing demonstrations with labels only, performs close to or worse than no demonstrations.
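The gold-versus-random comparison is easy to picture in code. This is a minimal sketch under my own assumptions: the `Review`/`Sentiment` template, the example reviews, and the helper names are hypothetical, and labels are drawn uniformly from the label space. It is meant to show what stays fixed (inputs, label space, format) and what changes (the input-label mapping), not to reproduce the paper's experimental code.

```python
import random


def randomize_labels(demonstrations, label_space, rng):
    """Keep inputs and format, but draw each label uniformly from the label space."""
    return [(text, rng.choice(label_space)) for text, _ in demonstrations]


def format_demonstrations(demonstrations, query):
    """Render demonstrations plus an unanswered query in one shared template."""
    blocks = [f"Review: {text}\nSentiment: {label}" for text, label in demonstrations]
    blocks.append(f"Review: {query}\nSentiment:")
    return "\n\n".join(blocks)


LABEL_SPACE = ["positive", "negative"]
gold = [
    ("A delightful film.", "positive"),
    ("Dull and overlong.", "negative"),
    ("I loved every minute.", "positive"),
]
random_demos = randomize_labels(gold, LABEL_SPACE, random.Random(0))

query = "The plot made no sense."
gold_prompt = format_demonstrations(gold, query)
random_prompt = format_demonstrations(random_demos, query)
print(random_prompt)
```

Both prompts expose the model to the same input distribution, the same label space, and the same format; only the correctness of the pairing differs.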

In summary, the three things that matter for in-context demonstrations are the input distribution (the underlying distribution inputs in ICL examples come from), the output space (the set of outputs, that is, classes or answer choices, in the task), and the format of the demonstration. A Stanford AI Lab blog post connects the empirical findings in Min et al. to the hypothesis presented in Xie et al.: since LMs do not rely on the input-output correspondence in a prompt, they may already have been exposed to notions of this correspondence during pretraining that ICL then leverages. The other components of the prompt may be seen as providing evidence that allows the model to locate concepts (defined by Xie as latent variables that contain document-level statistics) it has already learned.

Induction Heads and Gradient Descent

When studying transformers under the lens of mechanistic interpretability, researchers at Anthropic studied a circuit they dubbed an induction head. Studying simplified, attention-only transformers, the researchers found that two-layer models use attention head composition to create these induction heads, which perform in-context learning.

In simple terms, induction heads search over their context for previous examples of the token that a model is currently processing. If they find the current token in the context, they look at the following token and copy it. This allows them to repeat previous sequences of tokens in order to form new completions. Essentially, the idea is similar to copy-pasting from previous outputs of the model. For instance, continuing “Harry” with “Potter” may be done by looking at a previous output of “Harry Potter”.
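The copy rule itself fits in a few lines. The sketch below is a behavioral caricature of what an induction head computes, written as an ordinary loop over tokens. It says nothing about how attention heads compose to implement it, and the function name `induction_copy` is my own.

```python
def induction_copy(context):
    """Predict the next token by copying whatever followed the most recent
    earlier occurrence of the current (last) token; None if there is none."""
    if not context:
        return None
    current = context[-1]
    # Scan backwards over earlier positions, skipping the current token itself.
    for i in range(len(context) - 2, -1, -1):
        if context[i] == current:
            return context[i + 1]
    return None


tokens = ["Harry", "Potter", "went", "home", ".", "Harry"]
print(induction_copy(tokens))  # prints "Potter"
```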

There’s much more detail about induction heads, and a full exegesis would likely also require some detail from the lengthy Mathematical Framework for Transformer Circuits, which introduces Anthropic’s mechanistic framework for understanding transformer models. I’ll refer readers to Neel Nanda’s explainer videos on the Mathematical Framework and Induction Heads papers and this lecture by Chris Olah on Induction Heads.

The upshot, as far as we’re concerned, is that induction heads appear in transformer models just as transformers improve in their ability to do ICL. Since researchers can fully reverse engineer how these components of transformers work, they present a promising avenue for developing a fuller understanding of how and why ICL works.

More recent work contextualizes (no pun intended) ICL in the light of gradient descent. Naturally, the word learning would suggest that ICL implements some sort of optimization process.

In “What Learning Algorithm is In-Context Learning,” Akyürek et al., using linear regression as a toy problem, provide evidence for the hypothesis that transformer-based in-context learners indeed implement standard learning algorithms implicitly.

“Transformers learn in-context by gradient descent” makes precisely the claim suggested by its title. The authors hypothesize that a transformer forward pass implements ICL “by gradient-based optimization of an implicit auto-regressive inner loss constructed from its in-context data.” As we discussed in a recent Gradient Update, this work shows that transformer architectures of modest size can technically implement gradient descent.
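To see what “gradient descent on in-context data” means operationally, here is a sketch of the computation a forward pass would have to reproduce: one gradient step on a squared-error loss built from the in-context examples, followed by a prediction on the query. This is plain Python for the update itself, not the authors' transformer construction, and the zero initialization, loss scaling, and function name are my assumptions.

```python
def gradient_step_predict(examples, query, learning_rate):
    """One gradient descent step on squared loss from zero weights, then predict.

    Loss: L(w) = 1/(2N) * sum_i (w . x_i - y_i)^2, so the gradient at w = 0 is
    -(1/N) * sum_i y_i * x_i.
    """
    n = len(examples)
    dim = len(query)
    weights = [0.0] * dim
    grad = [0.0] * dim
    for x, y in examples:
        residual = sum(w * xi for w, xi in zip(weights, x)) - y
        for j in range(dim):
            grad[j] += residual * x[j] / n
    weights = [w - learning_rate * g for w, g in zip(weights, grad)]
    return sum(w * q for w, q in zip(weights, query))


# In-context examples generated by the hypothetical rule y = 2*x1 - x2.
examples = [([1.0, 0.0], 2.0), ([0.0, 1.0], -1.0)]
print(gradient_step_predict(examples, [1.0, 1.0], learning_rate=1.0))  # 0.5
print(gradient_step_predict(examples, [1.0, 1.0], learning_rate=2.0))  # 1.0
```

With these orthonormal inputs, a learning rate of 2 happens to land exactly on the generating weights; in general a single step only moves part of the way there.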

Learnability and ICL in Larger Language Models

Two recent papers from March explore a few interesting properties of in-context learning, which shed some light and raise questions on the “learning” aspect of ICL.

First, “The Learnability of In-Context Learning” presents a first-of-its-kind PAC-based framework for in-context learnability. With a mild assumption on the number of pretraining examples for a model and the number of downstream task examples, the authors claim that when the pretraining distribution is a mixture of latent tasks, these tasks can be efficiently learned via ICL. The authors justify a number of interesting results in this paper, but the key takeaway is that this work further primes the intuition that ICL locates a concept that a language model has already learned. The idea of a “mixture of latent tasks” might also remind one of MetaICL, which explored downstream performance after tuning a pretrained model to do ICL on a large set of training tasks.

“Larger Language Models Do In-Context Learning Differently” further studies which facets of ICL affect performance, shedding additional light on whether “learning” actually occurs in ICL. “Rethinking the Role of Demonstrations” showed that presenting a model with random mappings instead of correct input-output values did not substantially affect performance, indicating that language models primarily rely on semantic prior knowledge while following the format of in-context examples. Meanwhile, the works on ICL and gradient descent show that transformers in simple settings learn the input-label mappings from in-context examples.

In this paper, Wei et al. study how semantic priors and input-label mappings interact in three settings:

- Regular ICL: both semantic priors and input-label mappings can allow a model to perform ICL successfully.

- Flipped-label ICL: all labels in examples are flipped, producing a disagreement between semantic prior knowledge and input-label examples. Performance above 50% on an evaluation set with ground-truth labels indicates a model was unable to override semantic priors. Performance below 50% indicates that a model successfully learned from the (incorrect) input-label mappings, overriding its priors.

- Semantically-unrelated label ICL (SUL-ICL): the labels are semantically unrelated to the task (e.g., “foo/bar” are used as labels for a sentiment classification task instead of “positive/negative”). In this setting, a model can only perform ICL by using input-label mappings.
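The three settings differ only in how demonstration labels are remapped, which the sketch below makes explicit. The demonstrations, the two “model behaviors”, and all names are hypothetical. The point is to show why accuracy against ground-truth labels falls below 50% when a model follows flipped demonstrations.

```python
def remap_labels(demonstrations, mapping):
    """Apply a label mapping to every demonstration, leaving inputs untouched."""
    return [(text, mapping[label]) for text, label in demonstrations]


def accuracy(predictions, gold_labels):
    """Fraction of predictions that match the ground-truth labels."""
    correct = sum(p == g for p, g in zip(predictions, gold_labels))
    return correct / len(gold_labels)


demos = [("Great acting.", "positive"), ("A waste of time.", "negative")]
SETTINGS = {
    "regular": {"positive": "positive", "negative": "negative"},
    "flipped": {"positive": "negative", "negative": "positive"},
    "sul": {"positive": "foo", "negative": "bar"},
}
prompts = {name: remap_labels(demos, mapping) for name, mapping in SETTINGS.items()}

# Hypothetical model outputs on a four-example evaluation set with true labels.
gold = ["positive", "negative", "positive", "negative"]
follows_prior = list(gold)                                   # ignores the flipped demos
follows_flipped = [SETTINGS["flipped"][g] for g in gold]     # learns the flipped mapping

print(accuracy(follows_prior, gold))    # 1.0: semantic priors were not overridden
print(accuracy(follows_flipped, gold))  # 0.0: the flipped mapping was learned
```

Real models fall somewhere between these two extremes, which is why the 50% line is the useful reference point for binary tasks.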

The key takeaway from this paper is that LLMs demonstrate an emergent ability to override their semantic priors and learn from input-label mappings. In other words, a sufficiently large model asked to in-context learn on examples with flipped labels will then show degraded (below 50%) performance on an evaluation set whose examples have correct labels. Since small models do not demonstrate this ability and instead rely on semantic priors (as in “Rethinking the Role of Demonstrations”), this ability appears to emerge with scale.

In the SUL-ICL setting, the authors demonstrate a similar shift in capabilities from small to large models. They report performance differences between regular ICL and SUL-ICL, where models are asked to in-context learn using examples whose labels are semantically unrelated to the task.

While increasing model size improves performance in both regular ICL and SUL-ICL, the drop in performance from regular ICL to SUL-ICL motivates an interesting observation. Small models experience a greater performance drop between regular ICL and SUL-ICL, indicating that these models’ semantic priors, which rely on the actual names of labels, prevent them from learning from examples with semantically-unrelated labels. Large models, on the other hand, experience a much smaller performance drop; this suggests that they can still learn input-label mappings in-context without semantic priors.

What’s Next for In-Context Learning?

In a question from our Q&A, Sewon Min raised an interesting point about how she thinks about in-context learning. She states:

if we define “learning” as obtaining a new intrinsic ability it has not had previously, then I think learning has to be with gradient updates. I believe whatever that is happening at test time is a consequence of “learning” that happened at pre-training time. That’s related to our claim in the paper that “in-context learning is mainly for better location (activation) of the intrinsic abilities LMs have learned during training”, which has been claimed by other papers as well (Xie et al. 2021, Reynolds & McDonell 2021).

The idea that in-context learning merely locates some latent ability or piece of knowledge a language model has already imbibed from its training data feels intuitive and makes the phenomenon a bit more plausible. A few months ago, I wondered whether the recent works connecting ICL to gradient descent problematized this view: if there is a connection between ICL and gradient descent, perhaps language models do learn something new when presented with in-context examples?

The recent finding that larger language models can override their semantic priors and, perhaps, “learn” something seems to point to the conclusion that the answer to “Do language models actually learn something in context, as opposed to merely locating concepts learned from pretraining?” is not yes or no. Smaller models do not seem to learn from in-context examples, and larger ones do.

However, Akyürek et al. make another observation that might encourage us to read these results as degrees of learning. Section 4.3 observes that ICL exhibits algorithmic phase transitions as model depth increases: one-layer transformers’ ICL behavior approximates a single step of gradient descent, while wider and deeper transformers match ordinary least squares or ridge regression solutions. Akyürek et al. did not study transformers at the scale of Wei et al., but it is possible to imagine that if small models implement simple learning algorithms in-context, larger models might implement more sophisticated functions during ICL.
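For readers who want the three reference algorithms side by side, here is a one-dimensional sketch (no intercept) of a single gradient step, ridge regression, and ordinary least squares fit to the same in-context examples. The data, learning rate, and penalty are made-up values, and the code says nothing about which one a given transformer actually matches.

```python
def one_step_gd(xs, ys, learning_rate):
    """Weight after one gradient step from w = 0 on 1/(2N) * sum (w*x - y)^2."""
    n = len(xs)
    return learning_rate * sum(x * y for x, y in zip(xs, ys)) / n


def ridge(xs, ys, penalty):
    """Closed-form minimizer of sum (w*x - y)^2 + penalty * w^2."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + penalty)


def ols(xs, ys):
    """Ordinary least squares is ridge regression with zero penalty."""
    return ridge(xs, ys, 0.0)


# In-context examples from the hypothetical rule y = 2x.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

w_gd = one_step_gd(xs, ys, learning_rate=0.1)
w_ridge = ridge(xs, ys, penalty=1.0)
w_ols = ols(xs, ys)
print(w_gd, w_ridge, w_ols)
```

On this data the single gradient step recovers the least of the true slope, ridge shrinks it slightly, and OLS recovers it exactly, which is one way to picture the “degrees of learning” reading.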

To conclude, a number of works seem to problematize the notion that ICL does not involve learning and merely locates concepts present in a pre-trained model. Wei et al. indicate that there is not a binary answer here: large models seem to “learn” more from in-context examples than small models. But the results connecting ICL to gradient descent suggest that there is some reason to believe learning might occur when small models perform ICL as well.

I won’t make a strong claim here: essentially nothing is “out of distribution” for a sufficiently large model, and the capacity these models have makes it difficult to form a strong intuition around what they are “doing” with the information they have gained through pretraining. If everything is really in-distribution, then what is left but to recombine? For now, I’ll avoid taking a stance on whether “learning” is actually happening in ICL; I do think there are interesting arguments on all sides. I think our understanding of ICL will continue to evolve, and I hope we better understand when and how “learning” occurs in ICL.
