NVIDIA today introduced a wave of cutting-edge AI research that will enable developers and artists to bring their ideas to life, whether still or moving, in 2D or 3D, hyperrealistic or fantastical.

Around 20 NVIDIA Research papers advancing generative AI and neural graphics, including collaborations with over a dozen universities in the U.S., Europe and Israel, are headed to SIGGRAPH 2023, the premier computer graphics conference, taking place Aug. 6-10 in Los Angeles.

The papers include generative AI models that turn text into personalized images; inverse rendering tools that transform still images into 3D objects; neural physics models that use AI to simulate complex 3D elements with stunning realism; and neural rendering models that unlock new capabilities for generating real-time, AI-powered visual details.

Innovations by NVIDIA researchers are regularly shared with developers on GitHub and incorporated into products, including the NVIDIA Omniverse platform for building and operating metaverse applications and NVIDIA Picasso, a recently announced foundry for custom generative AI models for visual design. Years of NVIDIA graphics research helped bring film-style rendering to games, like the recently released Cyberpunk 2077 Ray Tracing: Overdrive Mode, the world’s first path-traced AAA title.

The research advancements presented this year at SIGGRAPH will help developers and enterprises rapidly generate synthetic data to populate virtual worlds for robotics and autonomous vehicle training. They’ll also enable creators in art, architecture, graphic design, game development and film to more quickly produce high-quality visuals for storyboarding, previsualization and even production.

AI With a Personal Touch: Customized Text-to-Image Models

Generative AI models that transform text into images are powerful tools to create concept art or storyboards for films, video games and 3D virtual worlds. Text-to-image AI tools can turn a prompt like “children’s toys” into nearly infinite visuals a creator can use for inspiration, generating images of stuffed animals, blocks or puzzles.

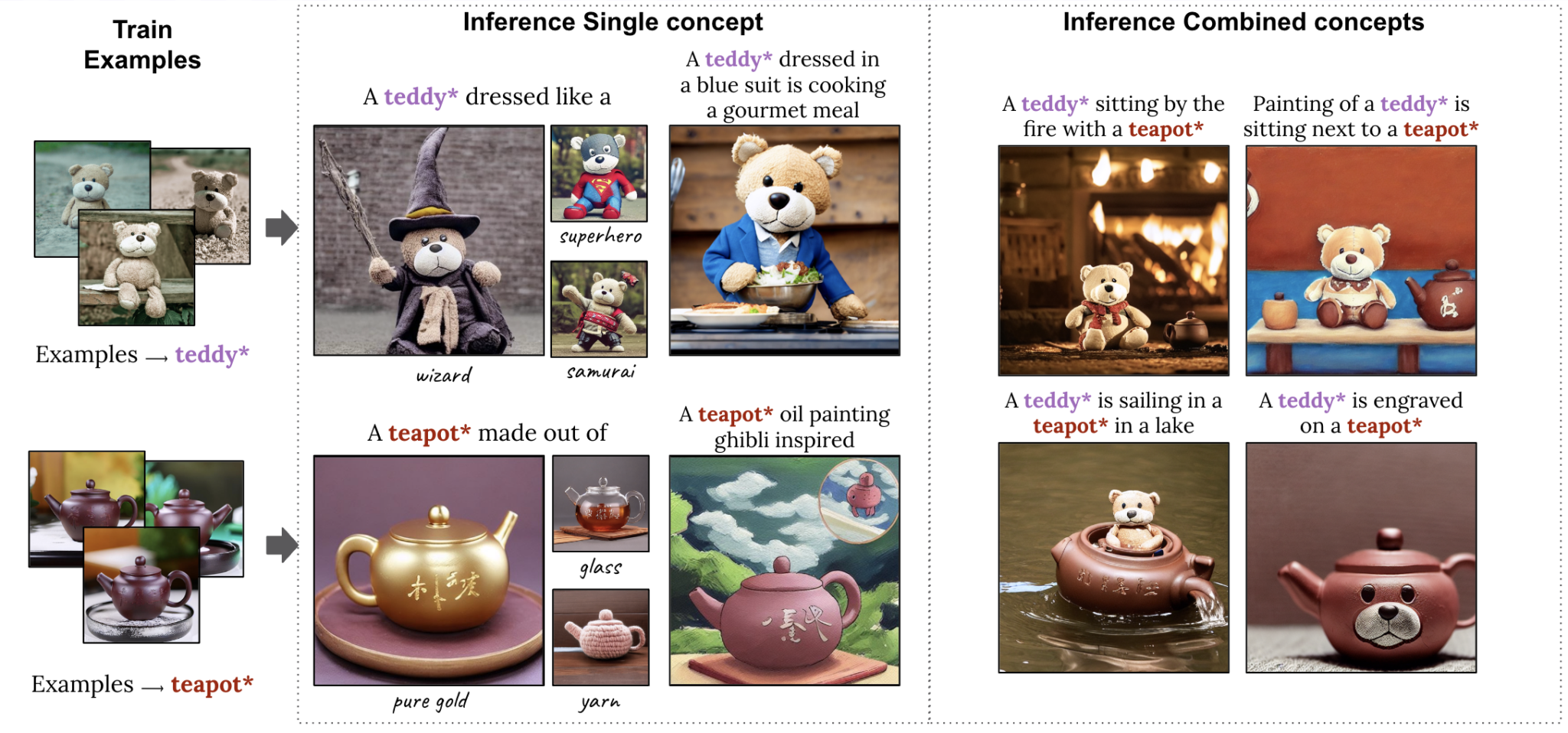

However, artists may have a particular subject in mind. A creative director for a toy brand, for example, could be planning an ad campaign around a new teddy bear and want to visualize the toy in different situations, such as a teddy bear tea party. To enable this level of specificity in the output of a generative AI model, researchers from Tel Aviv University and NVIDIA have two SIGGRAPH papers that enable users to provide image examples that the model quickly learns from.

One paper describes a technique that needs a single example image to customize its output, accelerating the personalization process from minutes to roughly 11 seconds on a single NVIDIA A100 Tensor Core GPU, more than 60x faster than previous personalization approaches.

A second paper introduces a highly compact model called Perfusion, which takes a handful of concept images to allow users to combine multiple personalized elements, such as a specific teddy bear and teapot, into a single AI-generated visual.

Serving in 3D: Advances in Inverse Rendering and Character Creation

Once a creator comes up with concept art for a virtual world, the next step is to render the environment and populate it with 3D objects and characters. NVIDIA Research is inventing AI techniques to accelerate this time-consuming process by automatically transforming 2D images and videos into 3D representations that creators can import into graphics applications for further editing.

A third paper, created with researchers at the University of California, San Diego, discusses tech that can generate and render a photorealistic 3D head-and-shoulders model based on a single 2D portrait, a major breakthrough that makes 3D avatar creation and 3D video conferencing accessible with AI. The method runs in real time on a consumer desktop, and can generate a photorealistic or stylized 3D telepresence using only conventional webcams or smartphone cameras.

A fourth project, a collaboration with Stanford University, brings lifelike motion to 3D characters. The researchers created an AI system that can learn a range of tennis skills from 2D video recordings of real tennis matches and apply this motion to 3D characters. The simulated tennis players can accurately hit the ball to target positions on a virtual court, and even play extended rallies with other characters.

Beyond the test case of tennis, this SIGGRAPH paper addresses the difficult challenge of producing 3D characters that can perform diverse skills with realistic movement, without the use of expensive motion-capture data.

Not a Hair Out of Place: Neural Physics Enables Realistic Simulations

Once a 3D character is generated, artists can layer in realistic details such as hair, which is a complex, computationally expensive challenge for animators.

Humans have an average of 100,000 hairs on their heads, with each reacting dynamically to an individual’s motion and the surrounding environment. Traditionally, creators have used physics formulas to calculate hair movement, simplifying or approximating its motion based on the resources available. That’s why virtual characters in a big-budget film sport much more detailed heads of hair than real-time video game avatars.

A fifth paper showcases a method that can simulate tens of thousands of hairs in high resolution and in real time using neural physics, an AI technique that teaches a neural network to predict how an object would move in the real world.

The team’s novel approach for accurate simulation of full-scale hair is specifically optimized for modern GPUs. It offers significant performance leaps compared to state-of-the-art, CPU-based solvers, reducing simulation times from multiple days to merely hours, while also boosting the quality of hair simulations possible in real time. This technique finally enables both accurate and interactive physically based hair grooming.

Neural Rendering Brings Film-Quality Detail to Real-Time Graphics

After an environment is filled with animated 3D objects and characters, real-time rendering simulates the physics of light reflecting through the virtual scene. Recent NVIDIA research shows how AI models for textures, materials and volumes can deliver film-quality, photorealistic visuals in real time for video games and digital twins.

NVIDIA invented programmable shading over two decades ago, enabling developers to customize the graphics pipeline. In these latest neural rendering inventions, researchers extend programmable shading code with AI models that run deep inside NVIDIA’s real-time graphics pipelines.

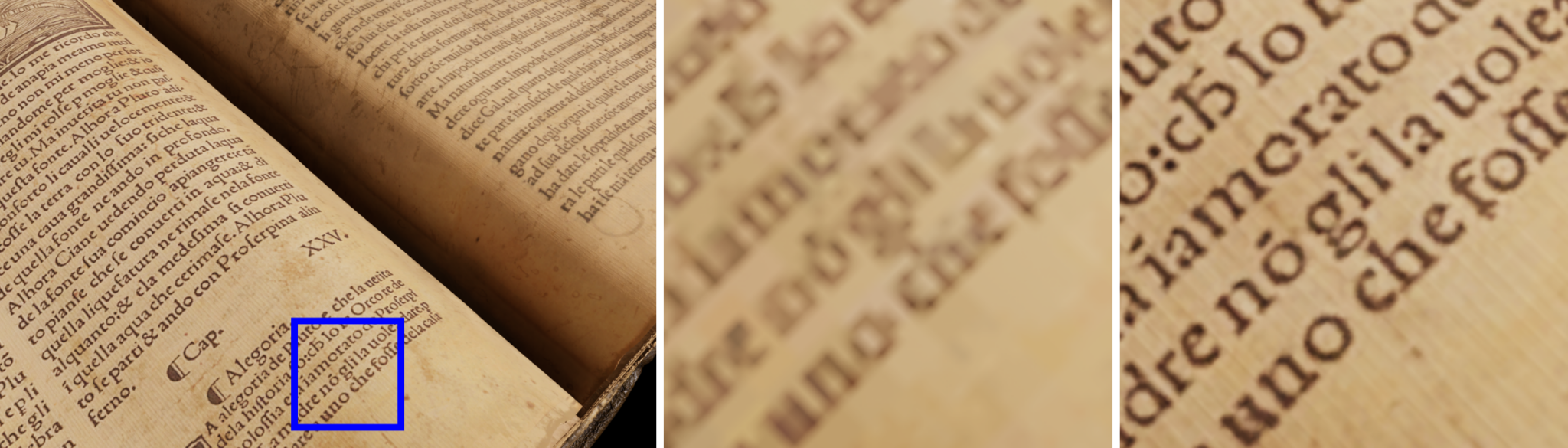

In a sixth SIGGRAPH paper, NVIDIA will present neural texture compression that delivers up to 16x more texture detail without taking additional GPU memory. Neural texture compression can substantially increase the realism of 3D scenes, as seen in the accompanying image, which demonstrates how neural-compressed textures (right) capture sharper detail than previous formats, where the text remains blurry (center).

A related paper announced last year is now available in early access as NeuralVDB, an AI-enabled data compression technique that decreases by 100x the memory needed to represent volumetric data such as smoke, fire, clouds and water.

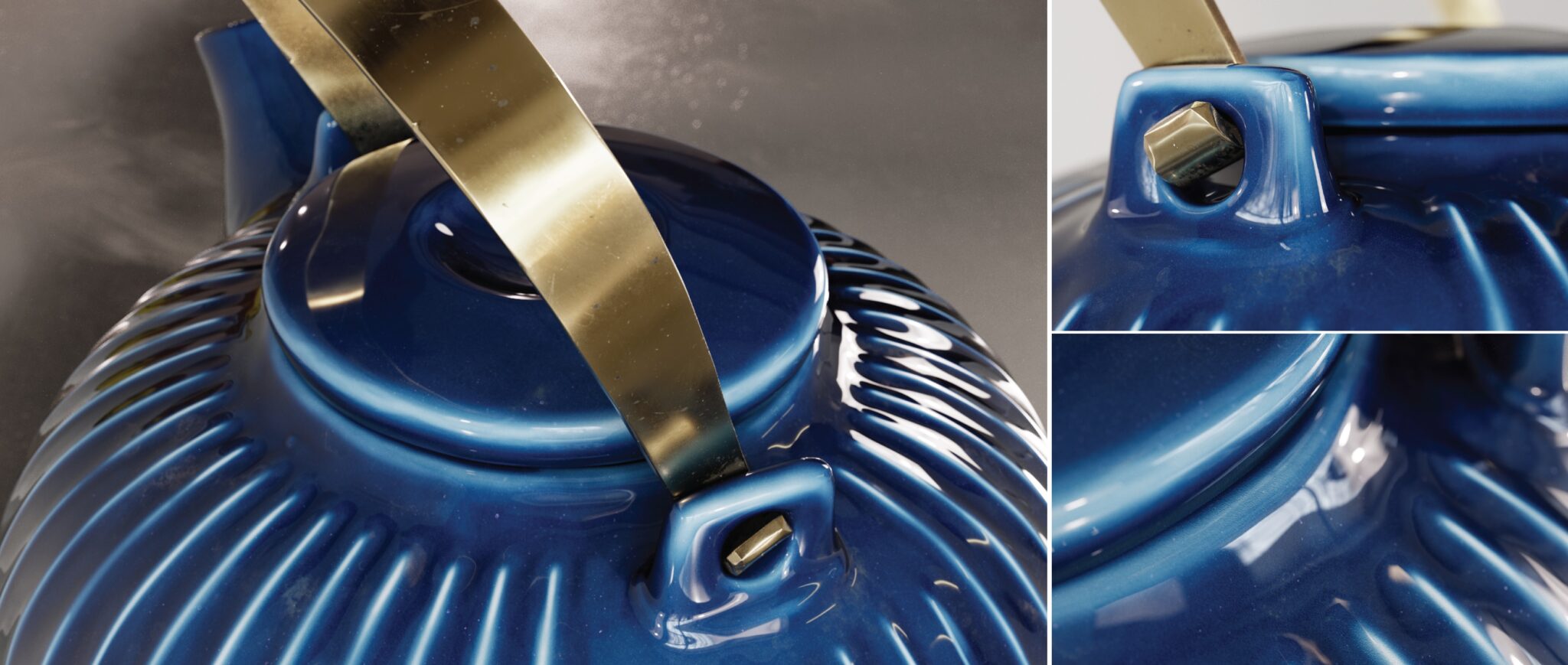

NVIDIA also released today more details about neural materials research that was shown in the most recent NVIDIA GTC keynote. The paper describes an AI system that learns how light reflects from photoreal, many-layered materials, reducing the complexity of these assets down to small neural networks that run in real time, enabling up to 10x faster shading.

The level of realism can be seen in this neural-rendered teapot, which accurately represents the ceramic, the imperfect clear-coat glaze, fingerprints, smudges and even dust.

More Generative AI and Graphics Research

These are just the highlights. Read more about all the NVIDIA papers at SIGGRAPH. NVIDIA will also present six courses, four talks and two Emerging Technology demos at the conference, with topics including path tracing, telepresence and diffusion models for generative AI.

NVIDIA Research has hundreds of scientists and engineers worldwide, with teams focused on topics including AI, computer graphics, computer vision, self-driving cars and robotics.
