[ad_1]

By David Wendt and Gregory Kimball

Environment friendly processing of string information is important for a lot of information science purposes. To extract worthwhile data from string information, RAPIDS libcudf gives highly effective instruments for accelerating string information transformations. libcudf is a C++ GPU DataFrame library used for loading, becoming a member of, aggregating, and filtering information.

In information science, string information represents speech, textual content, genetic sequences, logging, and lots of different sorts of data. When working with string information for machine studying and have engineering, the info should often be normalized and reworked earlier than it may be utilized to particular use instances. libcudf gives each normal function APIs in addition to device-side utilities to allow a variety of customized string operations.

This submit demonstrates how one can skillfully remodel strings columns with the libcudf normal function API. You’ll achieve new data on how one can unlock peak efficiency utilizing customized kernels and libcudf device-side utilities. This submit additionally walks you thru examples of how one can greatest handle GPU reminiscence and effectively assemble libcudf columns to hurry up your string transformations.

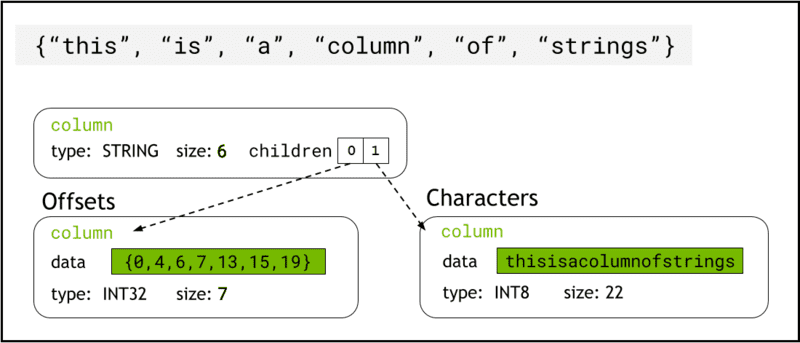

libcudf shops string information in machine reminiscence utilizing Arrow format, which represents strings columns as two little one columns: chars and offsets (Determine 1).

The chars column holds the string information as UTF-8 encoded character bytes which are saved contiguously in reminiscence.

The offsets column comprises an growing sequence of integers that are byte positions figuring out the beginning of every particular person string inside the chars information array. The ultimate offset aspect is the overall variety of bytes within the chars column. This implies the dimensions of a person string at row i is outlined as (offsets[i+1]-offsets[i]).

Determine 1. Schematic displaying how Arrow format represents strings columns with

Determine 1. Schematic displaying how Arrow format represents strings columns with chars and offsets little one columns

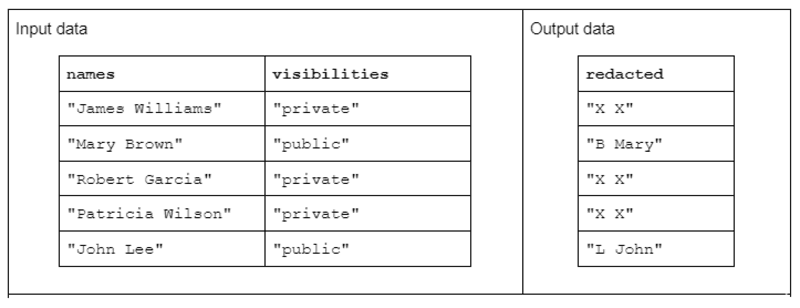

As an example an instance string transformation, take into account a operate that receives two enter strings columns and produces one redacted output strings column.

The enter information has the next kind: a “names” column containing first and final names separated by an area and a “visibilities” column containing the standing of “public” or “non-public.”

We suggest the “redact” operate that operates on the enter information to provide output information consisting of the primary preliminary of the final title adopted by an area and the complete first title. Nonetheless, if the corresponding visibility column is “non-public” then the output string must be absolutely redacted as “X X.”

Desk 1. Instance of a “redact” string transformation that receives names and visibilities strings columns as enter and partially or absolutely redacted information as output

Desk 1. Instance of a “redact” string transformation that receives names and visibilities strings columns as enter and partially or absolutely redacted information as output

First, string transformation might be completed utilizing the libcudf strings API. The overall function API is a superb place to begin and an excellent baseline for evaluating efficiency.

The API features function on a complete strings column, launching no less than one kernel per operate and assigning one thread per string. Every thread handles a single row of information in parallel throughout the GPU and outputs a single row as a part of a brand new output column.

To finish the redact instance operate utilizing the overall function API, comply with these steps:

- Convert the “visibilities” strings column right into a Boolean column utilizing

comprises - Create a brand new strings column from the names column by copying “X X” at any time when the corresponding row entry within the boolean column is “false”

- Break up the “redacted” column into first title and final title columns

- Slice the primary character of the final names because the final title initials

- Construct the output column by concatenating the final initials column and the primary names column with area (” “) separator.

// convert the visibility label right into a boolean

auto const seen = cudf::string_scalar(std::string("public"));

auto const allowed = cudf::strings::comprises(visibilities, seen);

// redact names

auto const redaction = cudf::string_scalar(std::string("X X"));

auto const redacted = cudf::copy_if_else(names, redaction, allowed->view());

// cut up the primary title and final preliminary into two columns

auto const sv = cudf::strings_column_view(redacted->view())

auto const first_last = cudf::strings::cut up(sv);

auto const first = first_last->view().column(0);

auto const final = first_last->view().column(1);

auto const last_initial = cudf::strings::slice_strings(final, 0, 1);

// assemble a outcome column

auto const television = cudf::table_view({last_initial->view(), first});

auto outcome = cudf::strings::concatenate(television, std::string(" "));

This method takes about 3.5 ms on an A6000 with 600K rows of information. This instance makes use of comprises, copy_if_else, cut up, slice_strings and concatenate to perform a customized string transformation. A profiling evaluation with Nsight Systems reveals that the cut up operate takes the longest period of time, adopted by slice_strings and concatenate.

Determine 2 reveals profiling information from Nsight Methods of the redact instance, displaying end-to-end string processing at as much as ~600 million parts per second. The areas correspond to NVTX ranges related to every operate. Gentle blue ranges correspond to intervals the place CUDA kernels are working.

Determine 2. Profiling information from Nsight Methods of the redact instance

Determine 2. Profiling information from Nsight Methods of the redact instance

The libcudf strings API is a quick and environment friendly toolkit for reworking strings, however generally performance-critical features must run even sooner. A key supply of additional work within the libcudf strings API is the creation of no less than one new strings column in international machine reminiscence for every API name, opening up the chance to mix a number of API calls right into a customized kernel.

Efficiency limitations in kernel malloc calls

First, we’ll construct a customized kernel to implement the redact instance transformation. When designing this kernel, we should remember that libcudf strings columns are immutable.

Strings columns can’t be modified in place as a result of the character bytes are saved contiguously, and any adjustments to the size of a string would invalidate the offsets information. Due to this fact the redact_kernel customized kernel generates a brand new strings column by utilizing a libcudf column manufacturing facility to construct each offsets and chars little one columns.

On this first method, the output string for every row is created in dynamic device memory utilizing a malloc name contained in the kernel. The customized kernel output is a vector of machine pointers to every row output, and this vector serves as enter to a strings column manufacturing facility.

The customized kernel accepts a cudf::column_device_view to entry the strings column information and makes use of the aspect methodology to return a cudf::string_view representing the string information on the specified row index. The kernel output is a vector of sort cudf::string_view that holds tips to the machine reminiscence containing the output string and the dimensions of that string in bytes.

The cudf::string_view class is just like the std::string_view class however is applied particularly for libcudf and wraps a hard and fast size of character information in machine reminiscence encoded as UTF-8. It has lots of the similar options (discover and substr features, for instance) and limitations (no null terminator) because the std counterpart. A cudf::string_view represents a personality sequence saved in machine reminiscence and so we are able to use it right here to report the malloc’d reminiscence for an output vector.

Malloc kernel

// word the column_device_view inputs to the kernel

__global__ void redact_kernel(cudf::column_device_view const d_names,

cudf::column_device_view const d_visibilities,

cudf::string_view redaction,

cudf::string_view* d_output)

{

// get index for this thread

auto index = threadIdx.x + blockIdx.x * blockDim.x;

if (index >= d_names.dimension()) return;

auto const seen = cudf::string_view("public", 6);

auto const title = d_names.aspect<:string_view>(index);

auto const vis = d_visibilities.aspect<:string_view>(index);

if (vis == seen) {

auto const space_idx = title.discover(' ');

auto const first = title.substr(0, space_idx);

auto const last_initial = title.substr(space_idx + 1, 1);

auto const output_size = first.size_bytes() + last_initial.size_bytes() + 1;

char* output_ptr = static_cast(malloc(output_size));

// construct output string

d_output[index] = cudf::string_view{output_ptr, output_size};

memcpy(output_ptr, last_initial.information(), last_initial.size_bytes());

output_ptr += last_initial.size_bytes();

*output_ptr++ = ' ';

memcpy(output_ptr, first.information(), first.size_bytes());

} else {

d_output[index] = cudf::string_view{redaction.information(), redaction.size_bytes()};

}

}

__global__ void free_kernel(cudf::string_view redaction, cudf::string_view* d_output, int depend)

{

auto index = threadIdx.x + blockIdx.x * blockDim.x;

if (index >= depend) return;

auto ptr = const_cast(d_output[index].information());

if (ptr != redaction.information()) free(ptr); // free all the pieces that does match the redaction string

}

This would possibly appear to be an inexpensive method, till the kernel efficiency is measured. This method takes about 108 ms on an A6000 with 600K rows of information—greater than 30x slower than the answer offered above utilizing the libcudf strings API.

redact_kernel 60.3ms

free_kernel 45.5ms

make_strings_column 0.5ms

The principle bottleneck is the malloc/free calls inside the 2 kernels right here. The CUDA dynamic machine reminiscence requires malloc/free calls in a kernel to be synchronized, inflicting parallel execution to degenerate into sequential execution.

Pre-allocating working reminiscence to get rid of bottlenecks

Eradicate the malloc/free bottleneck by changing the malloc/free calls within the kernel with pre-allocated working reminiscence earlier than launching the kernel.

For the redact instance, the output dimension of every string on this instance must be no bigger than the enter string itself, for the reason that logic solely removes characters. Due to this fact, a single machine reminiscence buffer can be utilized with the identical dimension because the enter buffer. Use the enter offsets to find every row place.

Accessing the strings column’s offsets entails wrapping the cudf::column_view with a cudf::strings_column_view and calling its offsets_begin methodology. The dimensions of the chars little one column can be accessed utilizing the chars_size methodology. Then a rmm::device_uvector is pre-allocated earlier than calling the kernel to retailer the character output information.

auto const scv = cudf::strings_column_view(names);

auto const offsets = scv.offsets_begin();

auto working_memory = rmm::device_uvector(scv.chars_size(), stream);

Pre-allocated kernel

__global__ void redact_kernel(cudf::column_device_view const d_names,

cudf::column_device_view const d_visibilities,

cudf::string_view redaction,

char* working_memory,

cudf::offset_type const* d_offsets,

cudf::string_view* d_output)

{

auto index = threadIdx.x + blockIdx.x * blockDim.x;

if (index >= d_names.dimension()) return;

auto const seen = cudf::string_view("public", 6);

auto const title = d_names.aspect<:string_view>(index);

auto const vis = d_visibilities.aspect<:string_view>(index);

if (vis == seen) {

auto const space_idx = title.discover(' ');

auto const first = title.substr(0, space_idx);

auto const last_initial = title.substr(space_idx + 1, 1);

auto const output_size = first.size_bytes() + last_initial.size_bytes() + 1;

// resolve output string location

char* output_ptr = working_memory + d_offsets[index];

d_output[index] = cudf::string_view{output_ptr, output_size};

// construct output string into output_ptr

memcpy(output_ptr, last_initial.information(), last_initial.size_bytes());

output_ptr += last_initial.size_bytes();

*output_ptr++ = ' ';

memcpy(output_ptr, first.information(), first.size_bytes());

} else {

d_output[index] = cudf::string_view{redaction.information(), redaction.size_bytes()};

}

}

The kernel outputs a vector of cudf::string_view objects which is handed to the cudf::make_strings_column manufacturing facility operate. The second parameter to this operate is used for figuring out null entries within the output column. The examples on this submit should not have null entries, so a nullptr placeholder cudf::string_view{nullptr,0} is used.

auto str_ptrs = rmm::device_uvector<:string_view>(names.dimension(), stream);

redact_kernel<<>>(*d_names,

*d_visibilities,

d_redaction.worth(),

working_memory.information(),

offsets,

str_ptrs.information());

auto outcome = cudf::make_strings_column(str_ptrs, cudf::string_view{nullptr,0}, stream);

This method takes about 1.1 ms on an A6000 with 600K rows of information and due to this fact beats the baseline by greater than 2x. The approximate breakdown is proven under:

redact_kernel 66us

make_strings_column 400us

The remaining time is spent in cudaMalloc, cudaFree, cudaMemcpy, which is typical of the overhead for managing short-term cases of rmm::device_uvector. This methodology works properly if all the output strings are assured to be the identical dimension or smaller because the enter strings.

Total, switching to a bulk working reminiscence allocation with RAPIDS RMM is a major enchancment and an excellent answer for a customized strings operate.

Optimizing column creation for sooner compute occasions

Is there a approach to enhance this even additional? The bottleneck is now the cudf::make_strings_column manufacturing facility operate which builds the 2 strings column parts, offsets and chars, from the vector of cudf::string_view objects.

In libcudf, many manufacturing facility features are included for constructing strings columns. The manufacturing facility operate used within the earlier examples takes a cudf::device_span of cudf::string_view objects after which constructs the column by performing a collect on the underlying character information to construct the offsets and character little one columns. A rmm::device_uvector is mechanically convertible to a cudf::device_span with out copying any information.

Nonetheless, if the vector of characters and the vector of offsets are constructed straight, then a unique manufacturing facility operate can be utilized, which merely creates the strings column with out requiring a collect to repeat the info.

The sizes_kernel makes a primary move over the enter information to compute the precise output dimension of every output row:

Optimized kernel: Half 1

__global__ void sizes_kernel(cudf::column_device_view const d_names,

cudf::column_device_view const d_visibilities,

cudf::size_type* d_sizes)

{

auto index = threadIdx.x + blockIdx.x * blockDim.x;

if (index >= d_names.dimension()) return;

auto const seen = cudf::string_view("public", 6);

auto const redaction = cudf::string_view("X X", 3);

auto const title = d_names.aspect<:string_view>(index);

auto const vis = d_visibilities.aspect<:string_view>(index);

cudf::size_type outcome = redaction.size_bytes(); // init to redaction dimension

if (vis == seen) {

auto const space_idx = title.discover(' ');

auto const first = title.substr(0, space_idx);

auto const last_initial = title.substr(space_idx + 1, 1);

outcome = first.size_bytes() + last_initial.size_bytes() + 1;

}

d_sizes[index] = outcome;

}

The output sizes are then transformed to offsets by performing an in-place exclusive_scan. Notice that the offsets vector was created with names.dimension()+1 parts. The final entry would be the whole variety of bytes (all of the sizes added collectively) whereas the primary entry shall be 0. These are each dealt with by the exclusive_scan name. The dimensions of the chars column is retrieved from the final entry of the offsets column to construct the chars vector.

// create offsets vector

auto offsets = rmm::device_uvector<:size_type>(names.dimension() + 1, stream);

// compute output sizes

sizes_kernel<<>>(

*d_names, *d_visibilities, offsets.information());

thrust::exclusive_scan(rmm::exec_policy(stream), offsets.start(), offsets.finish(), offsets.start());

The redact_kernel logic remains to be very a lot the identical besides that it accepts the output d_offsets vector to resolve every row’s output location:

Optimized kernel: Half 2

__global__ void redact_kernel(cudf::column_device_view const d_names,

cudf::column_device_view const d_visibilities,

cudf::size_type const* d_offsets,

char* d_chars)

{

auto index = threadIdx.x + blockIdx.x * blockDim.x;

if (index >= d_names.dimension()) return;

auto const seen = cudf::string_view("public", 6);

auto const redaction = cudf::string_view("X X", 3);

// resolve output_ptr utilizing the offsets vector

char* output_ptr = d_chars + d_offsets[index];

auto const title = d_names.aspect<:string_view>(index);

auto const vis = d_visibilities.aspect<:string_view>(index);

if (vis == seen) {

auto const space_idx = title.discover(' ');

auto const first = title.substr(0, space_idx);

auto const last_initial = title.substr(space_idx + 1, 1);

auto const output_size = first.size_bytes() + last_initial.size_bytes() + 1;

// construct output string

memcpy(output_ptr, last_initial.information(), last_initial.size_bytes());

output_ptr += last_initial.size_bytes();

*output_ptr++ = ' ';

memcpy(output_ptr, first.information(), first.size_bytes());

} else {

memcpy(output_ptr, redaction.information(), redaction.size_bytes());

}

}

The dimensions of the output d_chars column is retrieved from the final entry of the d_offsets column to allocate the chars vector. The kernel launches with the pre-computed offsets vector and returns the populated chars vector. Lastly, the libcudf strings column manufacturing facility creates the output strings columns.

This cudf::make_strings_column manufacturing facility operate builds the strings column with out making a duplicate of the info. The offsets information and chars information are already within the appropriate, anticipated format and this manufacturing facility merely strikes the info from every vector and creates the column construction round it. As soon as accomplished, the rmm::device_uvectors for offsets and chars are empty, their information having been moved into the output column.

cudf::size_type output_size = offsets.back_element(stream);

auto chars = rmm::device_uvector(output_size, stream);

redact_kernel<<>>(

*d_names, *d_visibilities, offsets.information(), chars.information());

// from pre-assembled offsets and character buffers

auto outcome = cudf::make_strings_column(names.dimension(), std::transfer(offsets), std::transfer(chars));

This method takes about 300 us (0.3 ms) on an A6000 with 600K rows of information and improves over the earlier method by greater than 2x. You would possibly discover that sizes_kernel and redact_kernel share a lot of the identical logic: as soon as to measure the dimensions of the output after which once more to populate the output.

From a code high quality perspective, it’s helpful to refactor the transformation as a tool operate referred to as by each the sizes and redact kernels. From a efficiency perspective, you is perhaps shocked to see the computational value of the transformation being paid twice.

The advantages for reminiscence administration and extra environment friendly column creation usually outweigh the computation value of performing the transformation twice.

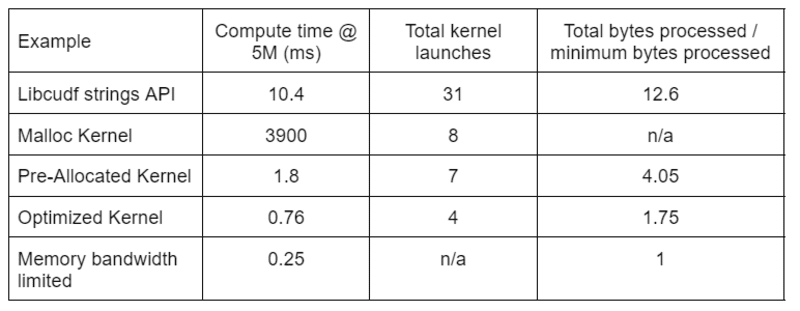

Desk 2 reveals the compute time, kernel depend, and bytes processed for the 4 options mentioned on this submit. “Complete kernel launches” displays the overall variety of kernels launched, together with each compute and helper kernels. “Complete bytes processed” is the cumulative DRAM learn plus write throughput and “minimal bytes processed” is a mean of 37.9 bytes per row for our take a look at inputs and outputs. The perfect “reminiscence bandwidth restricted” case assumes 768 GB/s bandwidth, the theoretical peak throughput of the A6000.

Desk 2. Compute time, kernel depend, and bytes processed for the 4 options mentioned on this submit

Desk 2. Compute time, kernel depend, and bytes processed for the 4 options mentioned on this submit“Optimized Kernel” gives the very best throughput as a result of decreased variety of kernel launches and the less whole bytes processed. With environment friendly customized kernels, the overall kernel launches drop from 31 to 4 and the overall bytes processed from 12.6x to 1.75x of the enter plus output dimension.

Because of this, the customized kernel achieves >10x greater throughput than the overall function strings API for the redact transformation.

The pool reminiscence useful resource in RAPIDS Memory Manager (RMM) is one other instrument you should use to extend efficiency. The examples above use the default “CUDA reminiscence useful resource” for allocating and releasing international machine reminiscence. Nonetheless, the time wanted to allocate working reminiscence provides important latency in between steps of the string transformations. The “pool reminiscence useful resource” in RMM reduces latency by allocating a big pool of reminiscence up entrance, and assigning suballocations as wanted throughout processing.

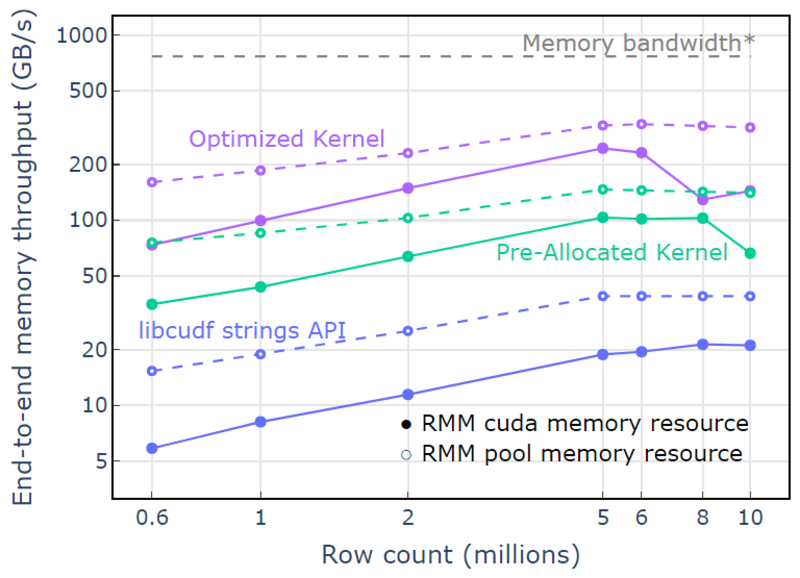

With the CUDA reminiscence useful resource, “Optimized Kernel” reveals a 10x-15x speedup that begins to drop off at greater row counts as a result of growing allocation dimension (Determine 3). Utilizing the pool reminiscence useful resource mitigates this impact and maintains 15x-25x speedups over the libcudf strings API method.

Determine 3. Speedup from the customized kernels “Pre-Allotted Kernel” and “Optimized Kernel” with the default CUDA reminiscence useful resource (strong) and the pool reminiscence useful resource (dashed), versus the libcudf string API utilizing the default CUDA reminiscence useful resource

Determine 3. Speedup from the customized kernels “Pre-Allotted Kernel” and “Optimized Kernel” with the default CUDA reminiscence useful resource (strong) and the pool reminiscence useful resource (dashed), versus the libcudf string API utilizing the default CUDA reminiscence useful resourceWith the pool reminiscence useful resource, an end-to-end reminiscence throughput approaching the theoretical restrict for a two-pass algorithm is demonstrated. “Optimized Kernel” reaches 320-340 GB/s throughput, measured utilizing the dimensions of inputs plus the dimensions of outputs and the compute time (Determine 4).

The 2-pass method first measures the sizes of the output parts, allocates reminiscence, after which units the reminiscence with the outputs. Given a two-pass processing algorithm, the implementation in “Optimized Kernel” performs near the reminiscence bandwidth restrict. “Finish-to-end reminiscence throughput” is outlined because the enter plus output dimension in GB divided by the compute time. *RTX A6000 reminiscence bandwidth (768 GB/s).

Determine 4. Reminiscence throughput for “Optimized Kernel,” “Pre-Allotted Kernel,” and “libcudf strings API” as a operate of enter/output row depend

Determine 4. Reminiscence throughput for “Optimized Kernel,” “Pre-Allotted Kernel,” and “libcudf strings API” as a operate of enter/output row depend

This submit demonstrates two approaches for writing environment friendly string information transformations in libcudf. The libcudf normal function API is quick and simple for builders, and delivers good efficiency. libcudf additionally gives device-side utilities designed to be used with customized kernels, on this instance unlocking >10x sooner efficiency.

Apply your data

To get began with RAPIDS cuDF, go to the rapidsai/cudf GitHub repo. When you have not but tried cuDF and libcudf in your string processing workloads, we encourage you to check the newest launch. Docker containers are offered for releases in addition to nightly builds. Conda packages are additionally out there to make testing and deployment simpler. For those who’re already utilizing cuDF, we encourage you to run the brand new strings transformation instance by visiting rapidsai/cudf/tree/HEAD/cpp/examples/strings on GitHub.

David Wendt is a senior programs software program engineer at NVIDIA growing C++/CUDA code for RAPIDS. David holds a grasp’s diploma in electrical engineering from Johns Hopkins College.

Gregory Kimball is a software program engineering supervisor at NVIDIA engaged on the RAPIDS group. Gregory leads improvement for libcudf, the CUDA/C++ library for columnar information processing that powers RAPIDS cuDF. Gregory holds a PhD in utilized physics from the California Institute of Know-how.

Original. Reposted with permission.

[ad_2]

Source link