Responsible AI

The Responsible AI initiative looks at how organizations define and approach responsible AI practices, policies, and standards. Drawing on global executive surveys and smaller, curated expert panels, the program gathers perspectives from diverse sectors and geographies with the aim of delivering actionable insights on this nascent yet important focus area for leaders across industry.

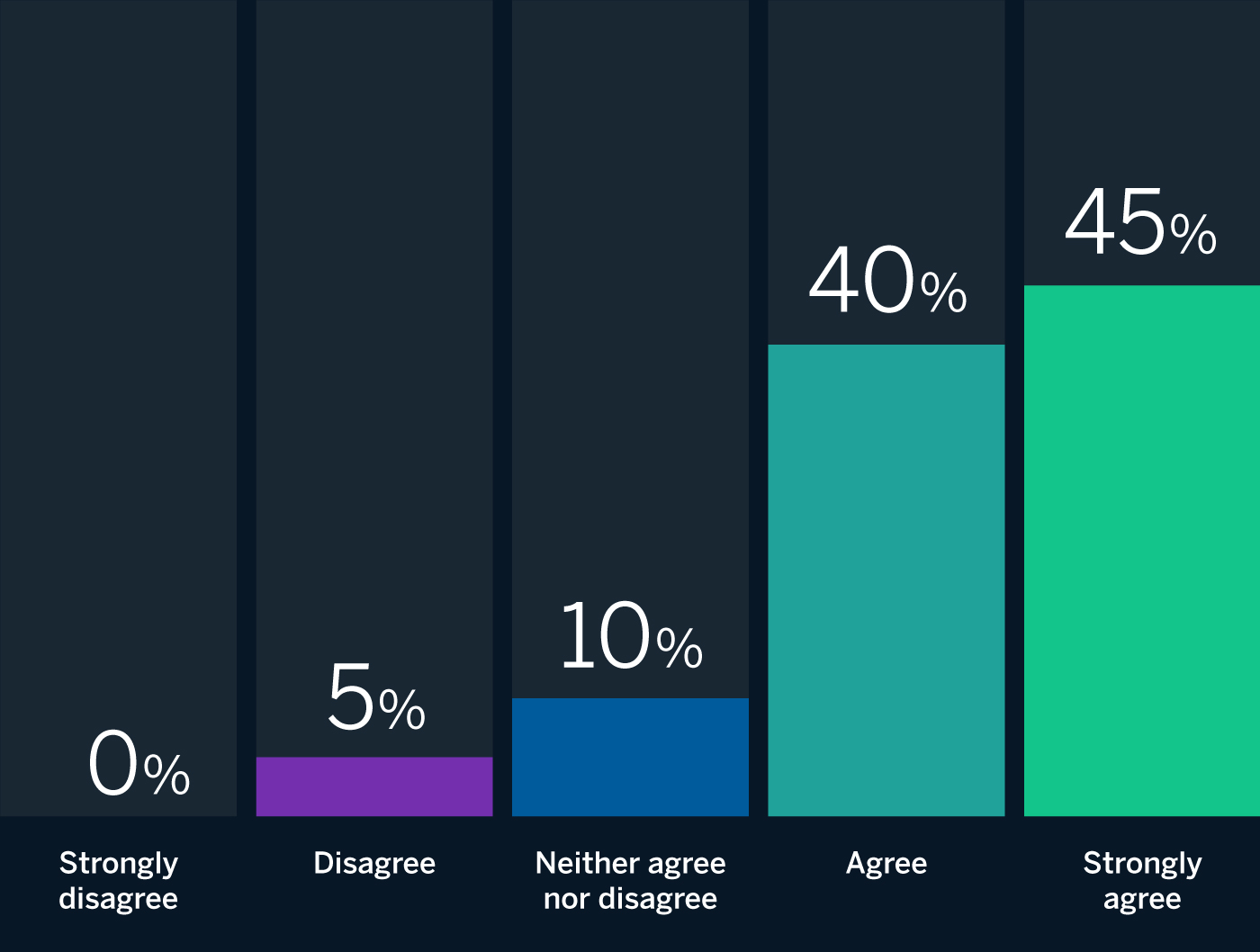

MIT Sloan Management Review and Boston Consulting Group have assembled an international panel of AI experts that includes academics and practitioners to help us gain insights into how responsible artificial intelligence (RAI) is being implemented in organizations worldwide. In our global survey, 70% of respondents acknowledged having had at least one AI system failure to date. This month, we asked our panelists to react to the following provocation on the topic: Mature RAI programs minimize AI system failures. The respondents overwhelmingly supported this proposition, with 85% (17 out of 20) either agreeing or strongly agreeing with it. That said, even the panelists who agree that mature RAI helps minimize AI system failures caution that it is not by itself a silver bullet. Other factors play a role as well, including how “failure” and “mature RAI” are defined, governance standards, and a host of technological, social, and environmental considerations. Below, we share insights from our panelists and draw on our own observations and experience working on RAI initiatives to offer recommendations for organizations seeking to minimize AI system failures through their RAI efforts.

Source: Responsible AI panel of 20 experts in artificial intelligence strategy.

Putting Maturity and Failure in Context

For many experts, the extent to which mature RAI programs can minimize AI system failures depends on what we mean by a “mature RAI program” and “failure.” Some failures are simple and easy to detect. For example, one of the most visible categories of AI failure involves bias. As Salesforce’s chief ethical and humane use officer Paula Goldman observes, “Some of AI’s greatest failures to date have been the product of bias, whether it’s recruiting tools that favor men over women or facial recognition programs that misidentify people of color. Embedding ethics and inclusion in the design and delivery of AI not only helps to mitigate bias; it also helps to increase the accuracy and relevancy of our models, and to increase their performance in all kinds of situations once deployed.” Mature RAI programs are designed to preempt, detect, and rectify bias and other visible challenges with AI systems.

But as AI systems grow more complex, failures can become harder to pinpoint and more difficult to address. As Nitzan Mekel-Bobrov, eBay’s chief AI officer, explains, “AI systems are often the product of many different components, inputs, and outputs, resulting in a fragmentation of decision-making across agents. So, when failures occur, it’s difficult to determine the precise reason and point of failure. A mature responsible AI framework accounts for these challenges by incorporating into the system development life cycle end-to-end monitoring and measurement, governance over the role played by each component, and integration into the final decision output, and it provides the enabling capabilities for preventing AI system failures ahead of the technology’s decision-making.”

To tackle both more and less visible failures, a mature RAI program should be broad in scale and/or scope, addressing the full life cycle of an AI system and its potential for negative outcomes. As Linda Leopold, head of responsible AI and data at H&M, argues, “For a responsible AI program to be considered mature, it should be both comprehensive and widely adopted across an organization. It has to cover several dimensions of responsibility, including fairness, transparency, accountability, security, privacy, robustness, and human agency. And it has to be implemented on both a strategic (policy) and operational level.” She adds, “If it ticks all these boxes, it should prevent a wide range of potential AI system failures.” Similarly, David Hardoon, chief data and AI officer at UnionBank of the Philippines, explains, “A mature RAI program should cover the breadth of AI in terms of data and modeling for both development and operationalization, thus minimizing potential AI system failures.”

In fact, the most mature RAI programs are embedded in an organization’s culture, leading to better outcomes. As Slawek Kierner, senior vice president of data, platforms, and machine learning at Intuitive, explains, “Mature responsible AI programs work on many levels to increase the robustness of resulting solutions that include AI components. This starts with the influence RAI has on the culture of data science and engineering teams, provides executive oversight and clear accountability for every step of the process, and on the technical side ensures that algorithms as well as data used for training and predictions are audited and monitored for drift or abnormal behavior. All of these steps greatly increase the robustness of the whole AI DevOps process and minimize risks of AI system failures.”

The Role of Emerging Standards

Some experts caution that without formal or widely adopted RAI standards, the impact of mature RAI programs on AI system failures is harder to establish. The Responsible AI Institute’s executive director, Ashley Casovan, argues, “In the absence of a globally adopted definition or standard framework for what responsible AI involves, the answer should really be ‘It depends.’” According to Oarabile Mudongo, a researcher at the Center for AI and Digital Policy in Johannesburg, such standards are on their way: “Companies are becoming more involved in shaping AI-related laws and engaging with regulators at the country level. Regulators are taking notice as well, lobbying for AI regulatory frameworks that include enhanced data safeguards, governance, and accountability mechanisms. Mature RAI programs and regulations based on these standards will not only ensure the safe, resilient, and ethical use of AI but also help minimize AI system failure.”

Philip Dawson, head of policy at Armilla AI, cautions that a superficial approach to AI testing across industry has also meant that most AI projects either fail in development or risk contributing to real-world harms after they’re released. “To reduce failures,” he notes, “mature RAI programs must take a comprehensive approach to AI testing and validation.” Jaya Kolhatkar, chief data officer at Hulu, agrees that “to run an RAI program correctly, there needs to be a mechanism that catches failures faster and a strong quality assurance program to minimize overall issues.” In sum, standards for AI testing and quality assurance would help organizations evaluate the extent to which RAI programs minimize AI system failures.

Acknowledging Maturity’s Limitations

Even with the most mature RAI programs in place, failures can still occur. As Aisha Naseer, director of research at Huawei Technologies (UK), explains, “The maturity level of RAI programs plays a vital role in considering AI system failures; however, there is no guarantee that such calamities will not happen. This is due to several potential causes of AI system failures, including environmental constraints and contextual factors.”

Likewise, Boeing’s vice president and chief engineer for sustainability and future mobility, Brian Yutko, notes, “RAI programs may eliminate some types of unintended behaviors from certain learned models, but overall success or failure at automating a function within a system will be determined by other factors.” Steven Vosloo, digital policy specialist in UNICEF’s Office of Global Insight and Policy, similarly argues that RAI programs can reduce but not minimize AI system failures: “Even if developed responsibly and not to do harm, AI systems can still fail. This could be due to limitations in algorithmic models, poorly scoped system goals, or problems with integration with other systems.” In other words, RAI is just one of several potential factors that affect how AI systems function, and sometimes fail, in the real world.

Recommendations

A mature RAI program goes a long way toward minimizing or at least reducing AI system failures, since a mature RAI program is broad in scale and/or scope and addresses the full life cycle of an AI system, including its design and, critically, its deployment in the real world. However, the absence of formal or widely adopted RAI standards can make it hard to assess the extent to which an RAI program mitigates such failures. In particular, there is a need for better testing and quality assurance frameworks. But even with the most mature RAI program in place, AI system failures can still happen.

In sum, organizations hoping that their RAI efforts will help reduce or minimize AI system failures should focus on the following:

- Ensure the maturity of your RAI program. Mature RAI is broad in scale and/or scope, addressing a wide array of substantive areas and spanning broad swaths of the organization. The more mature your program, the likelier it is that your efforts will prevent potential failures and mitigate the effects of any that do occur.

- Embrace standards. RAI standardization, whether through new laws or industry codes, is a good thing. Embrace these standards, including those around testing and quality assurance, as a way to ensure that your efforts are helping to minimize AI system failures.

- Let go of perfection. Even with the most mature RAI program in place, AI system failures can happen due to other factors. That’s OK. The goal is to minimize AI system failures, not necessarily eliminate them. Turn failures into opportunities to learn and grow.
