What are computers for?

Historically, different answers to this question (that is, different visions of computing) have helped inspire and determine the computing systems humanity has ultimately built. Consider the early electronic computers. ENIAC, the world’s first general-purpose electronic computer, was commissioned to compute artillery firing tables for the United States Army. Other early computers were also used to solve numerical problems, such as simulating nuclear explosions, predicting the weather, and planning the motion of rockets. The machines operated in a batch mode, using crude input and output devices, and without any real-time interaction. It was a vision of computers as number-crunching machines, used to speed up calculations that would formerly have taken weeks, months, or more for a team of humans.

In the 1950s a different vision of what computers are for began to develop. That vision was crystallized in 1962, when Douglas Engelbart proposed that computers could be used as a way of augmenting the human intellect. In this view, computers were not primarily tools for solving number-crunching problems. Rather, they were real-time interactive systems, with rich inputs and outputs, that humans could work with to support and expand their own problem-solving process. This vision of intelligence augmentation (IA) deeply influenced many others, including researchers such as Alan Kay at Xerox PARC and entrepreneurs such as Steve Jobs at Apple, and led to many of the key ideas of modern computing systems. Its ideas have also deeply influenced digital art and music, and fields such as interaction design, data visualization, computational creativity, and human-computer interaction.

Research on IA has often been in competition with research on artificial intelligence (AI): competition for funding, competition for the interest of talented researchers. Although there has always been overlap between the fields, IA has typically focused on building systems which put humans and machines to work together, while AI has focused on complete outsourcing of intellectual tasks to machines. In particular, problems in AI are often framed in terms of matching or surpassing human performance: beating humans at chess or Go; learning to recognize speech and images or translating language as well as humans; and so on.

This essay describes a new field, emerging today out of a synthesis of AI and IA. For this field, we suggest the name artificial intelligence augmentation (AIA): the use of AI systems to help develop new methods for intelligence augmentation. This new field introduces important new fundamental questions, questions not associated with either parent field. We believe the principles and systems of AIA will be radically different to most existing systems.

Our essay begins with a survey of recent technical work hinting at artificial intelligence augmentation, including work on generative interfaces, that is, interfaces which can be used to explore and visualize generative machine learning models. Such interfaces develop a kind of cartography of generative models, ways for humans to explore and make meaning from those models, and to incorporate what those models “know” into their creative work.

Our essay is not just a survey of technical work. We believe now is a good time to identify some of the broad, fundamental questions at the foundation of this emerging field. To what extent are these new tools enabling creativity? Can they be used to generate ideas which are truly surprising and new, or are the ideas cliches, based on trivial recombinations of existing ideas? Can such systems be used to develop fundamental new interface primitives? How will those new primitives change and expand the way humans think?

Using generative models to invent meaningful creative operations

Let’s look at an example where a machine learning model makes a new type of interface possible. To understand the interface, imagine you’re a type designer, working on creating a new font. (We blur the distinction between a font and a typeface here; apologies to any type designers who may be reading.) You want to experiment with bold, italic, and condensed variations. Let’s examine a tool to generate and explore such variations, from any initial design. For reasons that will soon be explained, the quality of results is quite crude; please bear with us.

Of course, varying the bolding (i.e., the weight), italicization and width are just three ways you can vary a font. Imagine that instead of building specialized tools, users could build their own tool merely by choosing examples of existing fonts. For instance, suppose you wanted to vary the degree of serifing on a font. In the following, please select 5 to 10 sans-serif fonts from the top box, and drag them to the box on the left. Select 5 to 10 serif fonts and drag them to the box on the right. As you do this, a machine learning model running in your browser will automatically infer from these examples how to interpolate your starting font in either the serif or sans-serif direction:

In fact, we used this same technique to build the earlier bolding, italicization, and condensing tool. To do so, we used the following examples of bold and non-bold fonts, of italic and non-italic fonts, and of condensed and non-condensed fonts:

To build these tools, we used what’s called a generative model. To understand generative models, consider that a priori describing a font appears to require a lot of data. For instance, if each glyph is drawn on a grid of pixels, then we’d expect to need one parameter per pixel to describe a single glyph. But we can use a generative model to find a much simpler description.

We do this by building a neural network which takes a small number of input variables, called latent variables, and produces as output the entire glyph. For the particular model we use, a low-dimensional latent space is mapped into the much higher-dimensional space describing all the pixels in the glyph. In other words, the idea is to map a low-dimensional space into a higher-dimensional space:

The generative model we use is a type of neural network known as a variational autoencoder (VAE). The details of the model aren’t so important. The important thing is that by changing the latent variables used as input, it’s possible to get different fonts as output. So one choice of latent variables will give one font, while another choice will give a different font:

You can think of the latent variables as a compact, high-level representation of the font. The neural network takes that high-level representation and converts it into the full pixel data. It’s remarkable that just a handful of numbers can capture the apparent complexity in a glyph, which originally required one variable per pixel.
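The mapping from latent variables to pixels can be sketched in a few lines. This is only an illustration under stated assumptions: the sizes `LATENT_DIM`, `GLYPH_SIDE` and `HIDDEN_DIM` are made up, the weights are random rather than trained, and the two-layer network stands in for the decoder half of a real variational autoencoder.

```python
import numpy as np

# Hypothetical sizes chosen for illustration only; they are not the
# dimensions of the model described in the essay.
LATENT_DIM = 8
GLYPH_SIDE = 16          # glyph drawn on a GLYPH_SIDE x GLYPH_SIDE grid
HIDDEN_DIM = 32

rng = np.random.default_rng(0)

# Random weights stand in for a trained decoder network.
W1 = rng.normal(scale=0.5, size=(LATENT_DIM, HIDDEN_DIM))
b1 = np.zeros(HIDDEN_DIM)
W2 = rng.normal(scale=0.5, size=(HIDDEN_DIM, GLYPH_SIDE * GLYPH_SIDE))
b2 = np.zeros(GLYPH_SIDE * GLYPH_SIDE)


def decode(z):
    """Map a latent vector to a glyph image with pixel values between 0 and 1."""
    hidden = np.tanh(z @ W1 + b1)
    logits = hidden @ W2 + b2
    pixels = 1.0 / (1.0 + np.exp(-logits))   # sigmoid squashes to (0, 1)
    return pixels.reshape(GLYPH_SIDE, GLYPH_SIDE)


# Two different choices of latent variables give two different glyphs.
z_a = rng.normal(size=LATENT_DIM)
z_b = rng.normal(size=LATENT_DIM)
glyph_a = decode(z_a)
glyph_b = decode(z_b)
```

Here 8 numbers determine all 256 pixel values. With trained weights, nearby latent vectors would decode to similar-looking glyphs, which is what makes the latent space worth exploring.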

The generative model we use is learnt from a training set of thousands of fonts scraped from the open web. During training, the weights and biases in the network are adjusted so that the network can output a close approximation to any desired font from the training set, provided a suitable choice of latent variables is made. In some sense, the model is learning a highly compressed representation of all the training fonts.

In fact, the model doesn’t just reproduce the training fonts. It can also generalize, producing fonts not seen in training. By being forced to find a compact description of the training examples, the neural net learns an abstract, higher-level model of what a font is. That higher-level model makes it possible to generalize beyond the training examples already seen, to produce realistic-looking fonts.

Ideally, a good generative model would be exposed to a relatively small number of training examples, and use that exposure to generalize to the space of all possible human-readable fonts. That is, for any conceivable font, whether existing or perhaps even imagined in the future, it would be possible to find latent variables corresponding exactly to that font. Of course, the model we’re using falls far short of this ideal. A particularly egregious failure is that many fonts generated by the model omit the tail on the capital “Q” (you can see this in the examples above). Still, it’s useful to keep in mind what an ideal generative model would do.

Such generative models are similar in some ways to how scientific theories work. Scientific theories often greatly simplify the description of what appear to be complex phenomena, reducing large numbers of variables to just a few variables from which many aspects of system behavior can be deduced. Furthermore, good scientific theories sometimes enable us to generalize to discover new phenomena.

As an example, consider ordinary material objects. Such objects have what physicists call a phase: they may be a liquid, a solid, a gas, or perhaps something more exotic, like a superconductor or Bose-Einstein condensate. A priori, such systems seem immensely complex, involving an enormous number of molecules. But the laws of thermodynamics and statistical mechanics enable us to find a simpler description, reducing that complexity to just a few variables (temperature, pressure, and so on), which encompass much of the behavior of the system. Furthermore, sometimes it’s possible to generalize, predicting unexpected new phases of matter. For example, in 1924, physicists used thermodynamics and statistical mechanics to predict a remarkable new phase of matter, Bose-Einstein condensation, in which a collection of atoms may all occupy identical quantum states, leading to surprising large-scale quantum interference effects. We’ll come back to this predictive ability in our later discussion of creativity and generative models.

Returning to the nuts and bolts of generative models, how can we use such models to do example-based reasoning like that in the tool shown above? Let’s consider the case of the bolding tool. In that instance, we take the average of all the latent vectors for the user-specified bold fonts, and the average for all the user-specified non-bold fonts. We then compute the difference between these two average vectors:

We’ll refer to this as the bolding vector. To make some given font bolder, we simply add a little of the bolding vector to the corresponding latent vector, with the amount of bolding vector added controlling the boldness of the result. In practice, sometimes a slightly different procedure is used. In some generative models the latent vectors satisfy some constraints; for instance, they may all be of the same length. When that’s the case, as in our model, a more sophisticated “adding” operation must be used, to ensure the length remains the same. But conceptually, the picture of adding the bolding vector is the right way to think.
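The averaging-and-adding recipe above can be written down directly. The sketch below uses tiny made-up two-dimensional latent vectors; the function names are hypothetical, and the length-preserving variant (add, then rescale) is just one simple way to respect a fixed-length constraint, not necessarily the operation used in the model described here.

```python
import numpy as np


def attribute_vector(with_attr, without_attr):
    """Difference between the mean latent vectors of two example sets."""
    return np.mean(with_attr, axis=0) - np.mean(without_attr, axis=0)


def apply_attribute(z, direction, amount):
    """Move a latent vector along the attribute direction."""
    return z + amount * direction


def apply_attribute_fixed_length(z, direction, amount):
    """Add the attribute vector, then rescale back to the original length.

    An assumed stand-in for the "more sophisticated adding operation";
    it is undefined if the moved vector happens to be exactly zero.
    """
    moved = z + amount * direction
    return moved * (np.linalg.norm(z) / np.linalg.norm(moved))


# Made-up latent vectors for user-chosen bold and non-bold example fonts.
bold_latents = np.array([[1.0, 2.0], [3.0, 2.0]])
regular_latents = np.array([[0.0, 0.0], [0.0, 2.0]])

bolding_vector = attribute_vector(bold_latents, regular_latents)

z = np.array([1.0, 1.0])                       # latent vector of the font to edit
slightly_bolder = apply_attribute(z, bolding_vector, 0.5)
bolder_same_length = apply_attribute_fixed_length(z, bolding_vector, 0.5)
```

The `amount` argument plays the role of the slider in the interface: larger values move further along the bolding direction, and negative values move toward lighter weights.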

This technique was introduced in earlier work, and vectors like the bolding vector are sometimes called attribute vectors. The same idea is used to implement all the tools we’ve shown. That is, we use example fonts to create a bolding vector, an italicizing vector, a condensing vector, and a user-defined serif vector. The interface thus provides a way of exploring the latent space in those four directions.

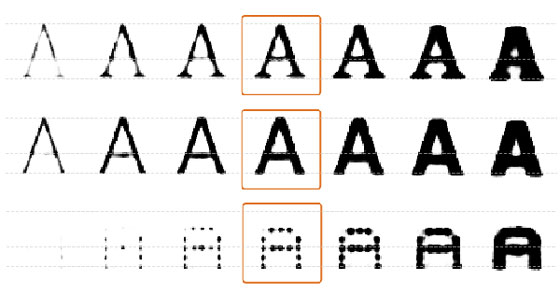

The tools we’ve shown have many drawbacks. Consider the following example, where we start with an example glyph, in the middle, and either increase or decrease the bolding (on the right and left, respectively):

Examining the glyphs on the left and right we see many unfortunate artifacts. Particularly for the rightmost glyph, the edges start to get rough, and the serifs begin to disappear. A better generative model would reduce those artifacts. That’s a good long-term research program, posing many intriguing problems. But even with the model we have, there are also some striking benefits to the use of the generative model.

To understand these benefits, consider a naive approach to bolding, in which we simply add some extra pixels around a glyph’s edges, thickening it up. While this thickening perhaps matches a non-expert’s way of thinking about type design, an expert does something much more involved. In the following we show the results of this naive thickening procedure versus what is actually done, for Georgia and Helvetica:

As you can see, the naive bolding procedure produces quite different results, in both cases. For example, in Georgia, the left stroke is only changed slightly by bolding, while the right stroke is greatly enlarged, but only on one side. In both fonts, bolding doesn’t change the height of the font, while the naive approach does.
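To make the naive procedure concrete, here is a minimal sketch that thickens a binary glyph by one pixel in every direction (a 3x3 dilation). The function names and the toy 9x9 "glyph" are illustrative assumptions, not the code behind the figures.

```python
import numpy as np


def naive_bold(glyph):
    """Thicken a binary glyph by one pixel in every direction (3x3 dilation)."""
    glyph = np.asarray(glyph, dtype=bool)
    padded = np.pad(glyph, 1, mode="constant", constant_values=False)
    height, width = glyph.shape
    out = np.zeros_like(glyph)
    # A pixel is inked if any pixel in its 3x3 neighbourhood was inked.
    for dy in range(3):
        for dx in range(3):
            out |= padded[dy:dy + height, dx:dx + width]
    return out


def ink_height(img):
    """Number of rows between the topmost and bottommost inked pixels."""
    rows = np.flatnonzero(img.any(axis=1))
    return int(rows[-1] - rows[0] + 1)


# A vertical stroke, five pixels tall, on a 9x9 canvas.
stroke = np.zeros((9, 9), dtype=bool)
stroke[2:7, 4] = True

bolded = naive_bold(stroke)
height_before = ink_height(stroke)
height_after = ink_height(bolded)
```

The stroke grows taller as well as wider, which is exactly the height change that professionally designed bold fonts avoid.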

As these examples show, good bolding is not a trivial process of thickening up a font. Expert type designers have many heuristics for bolding, heuristics inferred from much previous experimentation, and careful study of historical examples. Capturing all those heuristics in a conventional program would involve immense work. The benefit of using the generative model is that it automatically learns many such heuristics.

For example, a naive bolding tool would rapidly fill in the enclosed negative space in the upper region of the letter “A”. The font tool doesn’t do this. Instead, it goes to some trouble to preserve the enclosed negative space, moving the A’s bar down, and filling out the interior strokes more slowly than the exterior. This principle is evident in the examples shown above, especially Helvetica, and it can also be seen in the operation of the font tool:

The heuristic of preserving enclosed negative space is not a priori obvious. However, it’s done in many professionally designed fonts. If you examine examples like those shown above it’s easy to see why: it improves legibility. During training, our generative model has automatically inferred this principle from the examples it’s seen. And our bolding interface then makes this available to the user.

In fact, the model captures many other heuristics. For instance, in the above examples the heights of the fonts are (roughly) preserved, which is the norm in professional font design. Again, what’s going on isn’t just a thickening of the font, but rather the application of a more subtle heuristic inferred by the generative model. Such heuristics can be used to create fonts with properties which would otherwise be unlikely to occur to users. Thus, the tool expands ordinary people’s ability to explore the space of meaningful fonts.

The font tool is an example of a kind of cognitive technology. In particular, the primitive operations it contains can be internalized as part of how a user thinks. In this it resembles a program such as Photoshop or a spreadsheet or 3D graphics programs. Each provides a novel set of interface primitives, primitives which can be internalized by the user as fundamental new elements in their thinking. This act of internalization of new primitives is fundamental to much work on intelligence augmentation.

The ideas shown in the font tool can be extended to other domains. Using the same interface, we can use a generative model to manipulate images of human faces using qualities such as expression, gender, or hair color. Or to manipulate sentences using length, sarcasm, or tone. Or to manipulate molecules using chemical properties:

Images from Sampling Generative Networks.

Sentence from Pride and Prejudice by Jane Austen. Interpolated by the authors. Inspired by experiments done by a novelist.

Images from Automatic chemical design using a data-driven continuous representation of molecules.

Such generative interfaces provide a kind of cartography of generative models, ways for humans to explore and make meaning using those models.

We saw earlier that the font model automatically infers relatively deep principles about font design, and makes them available to users. While it’s great that such deep principles can be inferred, sometimes such models infer other things that are wrong, or undesirable. For example, it has been pointed out that, in some face models, the operation that makes faces smile more also makes them appear more feminine. Why? Because in the training data more women than men were smiling. So these models may not just learn deep facts about the world, they may also internalize prejudices or erroneous beliefs. Once such a bias is known, it’s often possible to make corrections. But to find those biases requires careful auditing of the models, and it’s not yet clear how we can ensure such audits are exhaustive.
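As one hedged illustration of what a correction might look like once a bias is known: if a "smile" attribute vector is entangled with a known "gender" attribute vector, we can subtract the part of the first that lies along the second. This projection trick is an assumption introduced here for illustration, with made-up three-dimensional vectors; it is not a method described in the essay, and orthogonality in latent space does not guarantee that the decoded images are free of the bias.

```python
import numpy as np


def remove_component(vector, unwanted):
    """Subtract from `vector` its projection onto `unwanted`."""
    vector = np.asarray(vector, dtype=float)
    unwanted = np.asarray(unwanted, dtype=float)
    scale = np.dot(vector, unwanted) / np.dot(unwanted, unwanted)
    return vector - scale * unwanted


# Made-up attribute vectors: the smile direction leaks into the gender direction.
smile = np.array([1.0, 1.0, 0.0])
gender = np.array([0.0, 2.0, 0.0])

corrected_smile = remove_component(smile, gender)
```

After the correction, moving along `corrected_smile` no longer moves along `gender` at all, at least in this linear sense. Finding which directions need correcting in the first place is the auditing problem described above.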

More broadly, we can ask why attribute vectors work, when they work, and when they fail. At the moment, the answers to these questions are poorly understood.

For the attribute vector to work requires that, taking any starting font, we can construct the corresponding bold version by adding the same vector in the latent space. However, a priori there is no reason using a single constant vector to displace will work. It may be that we should displace in many different ways. For instance, the heuristics used to bold serif and sans-serif fonts are quite different, and so it seems likely that very different displacements would be involved:

Of course, we could do something more sophisticated than using a single constant attribute vector. Given pairs of example fonts (unbold, bold) we could train a machine learning algorithm to take as input the latent vector for the unbolded version and output the latent vector for the bolded version. With additional training data about font weights, the machine learning algorithm could learn to generate fonts of arbitrary weight. Attribute vectors are just an extremely simple approach to doing these kinds of operation.
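A minimal sketch of that idea, assuming we already have latent vectors for matched (unbold, bold) pairs: fit a linear map plus offset from one to the other by least squares. The data below is synthetic and the function names are hypothetical; a real system would likely use a richer model than a linear one.

```python
import numpy as np


def fit_latent_map(z_regular, z_bold):
    """Least-squares fit of z_bold ~ z_regular @ weights + bias."""
    ones = np.ones((z_regular.shape[0], 1))
    design = np.hstack([z_regular, ones])          # extra column learns the bias
    params, *_ = np.linalg.lstsq(design, z_bold, rcond=None)
    return params[:-1], params[-1]


def apply_latent_map(z, weights, bias):
    """Predict the bold latent vector for a given unbold latent vector."""
    return z @ weights + bias


# Synthetic training pairs generated from a known map, for illustration only.
rng = np.random.default_rng(0)
z_regular = rng.normal(size=(50, 3))
true_weights = np.array([[1.0, 0.2, 0.0],
                         [0.0, 1.0, 0.0],
                         [0.0, 0.0, 0.5]])
true_bias = np.array([0.5, 0.0, -0.1])
z_bold = z_regular @ true_weights + true_bias

weights, bias = fit_latent_map(z_regular, z_bold)
predicted = apply_latent_map(z_regular[0], weights, bias)
```

A constant attribute vector is the special case where `weights` is the identity matrix and `bias` is the bolding vector. Letting `weights` vary allows the displacement to depend on the starting font, for example differing between serif and sans-serif fonts.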

For these reasons, it seems unlikely that attribute vectors will last as an approach to manipulating high-level features. Over the next few years much better approaches will be developed. However, we can still expect interfaces offering operations broadly similar to those sketched above, allowing access to high-level and potentially user-defined concepts. That interface pattern doesn’t depend on the technical details of attribute vectors.

Interactive Generative Adversarial Models

Let’s look at another example using machine learning models to augment human creativity. It’s the interactive generative adversarial networks, or iGANs, introduced by Zhu et al.

One of the examples of Zhu et al is the use of iGANs in an interface to generate images of consumer products such as shoes. Conventionally, such an interface would require the programmer to write a program containing a great deal of knowledge about shoes: soles, laces, heels, and so on. Instead of doing this, Zhu et al train a generative model using thousands of images of shoes, downloaded from Zappos. They then use that generative model to build an interface that lets a user roughly sketch the shape of a shoe, the sole, the laces, and so on:

The visual quality is low, in part because the generative model Zhu et al used is outdated by modern (2017) standards; with more modern models, the visual quality would be much higher.

But the visual quality is not the point. Many interesting things are going on in this prototype. For instance, notice how the overall shape of the shoe changes considerably when the sole is filled in: it becomes narrower and sleeker. Many small details are filled in, like the black piping on the top of the white sole, and the pink coloring filled in everywhere on the shoe’s upper. These and other facts are automatically deduced from the underlying generative model, in a way we’ll describe shortly.

The same interface may be used to sketch landscapes. The only difference is that the underlying generative model has been trained on landscape images rather than images of shoes. In this case it becomes possible to sketch in just the colors associated to a landscape. For example, here’s a user sketching in some green grass, the outline of a mountain, some blue sky, and snow on the mountain:

The generative models used in these interfaces are different than for our font model. Rather than using variational autoencoders, they’re based on generative adversarial networks (GANs). But the underlying idea is still to find a low-dimensional latent space which can be used to represent (say) all landscape images, and map that latent space to a corresponding image. Again, we can think of points in the latent space as a compact way of describing landscape images.

Roughly speaking, the way the iGANs works is as follows. Whatever the current image is, it corresponds to some point in the latent space:

Suppose, as happened in the earlier video, the user now sketches in a stroke outlining the mountain shape. We can think of the stroke as a constraint on the image, picking out a subspace of the latent space, consisting of all points in the latent space whose image matches that outline:

The way the interface works is to find a point in the latent space which is near to the current image, so the image is not changed too much, but also coming close to satisfying the imposed constraints. This is done by optimizing an objective function which combines the distance to each of the imposed constraints, as well as the distance moved from the current point. If there’s just a single constraint, say, corresponding to the mountain stroke, this looks something like the following:

We can think of this, then, as a way of applying constraints to the latent space to move the image around in meaningful ways.
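The optimization described above can be sketched with a toy model. Everything here is an assumption made for illustration: the "generator" is a fixed linear map from a 2-dimensional latent space to 4 pixels (a real iGAN uses a trained, nonlinear GAN generator), the constraint is a binary mask with target pixel values, and plain gradient descent minimizes the squared constraint error plus a weighted squared distance from the starting point.

```python
import numpy as np


def objective(z, z0, generator, mask, target, weight):
    """Constraint error on masked pixels plus a penalty for moving away from z0."""
    constraint_error = np.sum((mask * (generator @ z - target)) ** 2)
    distance_moved = np.sum((z - z0) ** 2)
    return constraint_error + weight * distance_moved


def edit_latent(z0, generator, mask, target, weight=0.1, lr=0.05, steps=500):
    """Gradient descent on the objective, starting from the current latent point."""
    z = z0.copy()
    for _ in range(steps):
        residual = mask * (generator @ z - target)     # error on constrained pixels only
        grad = 2.0 * generator.T @ (mask * residual) + 2.0 * weight * (z - z0)
        z -= lr * grad
    return z


# Toy linear "generator": each row maps the latent vector to one pixel value.
generator = np.array([[1.0, 0.0],
                      [0.0, 1.0],
                      [1.0, 1.0],
                      [1.0, -1.0]])

z_current = np.zeros(2)                       # latent point of the current image
mask = np.array([0.0, 0.0, 1.0, 0.0])         # the user constrains only pixel 2
target = np.array([0.0, 0.0, 2.0, 0.0])       # and wants its value to be 2

z_edited = edit_latent(z_current, generator, mask, target)
```

The result moves most of the way toward satisfying the constraint but stops slightly short, because the penalty on distance moved pulls it back toward the current image. Raising `weight` keeps the edit closer to the original; lowering it satisfies the stroke more exactly.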

The iGANs have much in common with the font tool we showed earlier. Both make available operations that encode much subtle knowledge about the world, whether it be learning to understand what a mountain looks like, or inferring that enclosed negative space should be preserved when bolding a font. Both the iGANs and the font tool provide ways of understanding and navigating a high-dimensional space, keeping us on the natural space of fonts or shoes or landscapes. As Zhu et al remark:

[F]or most of us, even a simple image manipulation in Photoshop presents insurmountable difficulties… any less-than-perfect edit immediately makes the image look completely unrealistic. To put another way, classic visual manipulation paradigm does not prevent the user from “falling off” the manifold of natural images.

Like the font tool, the iGANs is a cognitive technology. Users can internalize the interface operations as new primitive elements in their thinking. In the case of shoes, for example, they can learn to think in terms of the difference they want to apply, adding a heel, or a higher top, or a special highlight. This is richer than the traditional way non-experts think about shoes (“Size 11, black” etc). To the extent that non-experts do think in more sophisticated ways (“make the top a little higher and sleeker”) they get little practice in thinking this way, or seeing the consequences of their choices. Having an interface like this enables easier exploration, the ability to develop idioms and the ability to plan, to swap ideas with friends, and so on.

Two models of computation

Let’s revisit the question we began the essay with, the question of what computers are for, and how this relates to intelligence augmentation.

One common conception of computers is that they’re problem-solving machines: “computer, what is the result of firing this artillery shell in such-and-such a wind [and so on]?”; “computer, what will the maximum temperature in Tokyo be in 5 days?”; “computer, what is the best move to take when the Go board is in this position?”; “computer, how should this image be classified?”; and so on.

This is a conception common to both the early view of computers as number-crunchers, and also in much work on AI, both historically and today. It’s a model of a computer as a way of outsourcing cognition. In speculative depictions of possible future AI, this cognitive outsourcing model often shows up in the view of an AI as an oracle, able to solve some large class of problems with better-than-human performance.

But a very different conception of what computers are for is possible, a conception much more congruent with work on intelligence augmentation.

To understand this alternate view, consider our subjective experience of thought. For many people, that experience is verbal: they think using language, forming chains of words in their heads, similar to sentences in speech or written on a page. For other people, thinking is a more visual experience, incorporating representations such as graphs and maps. Still other people mix mathematics into their thinking, using algebraic expressions or diagrammatic techniques, such as Feynman diagrams and Penrose diagrams.

In each case, we’re thinking using representations invented by other people: words, graphs, maps, algebra, mathematical diagrams, and so on. We internalize these cognitive technologies as we grow up, and come to use them as a kind of substrate for our thinking.

For most of history, the range of available cognitive technologies has changed slowly and incrementally. A new word will be introduced, or a new mathematical symbol. More rarely, a radical new cognitive technology will be developed. For example, in 1637 Descartes published his “Discourse on Method”, explaining how to represent geometric ideas using algebra, and vice versa:

This enabled a radical change and expansion in how we think about both geometry and algebra.

Historically, lasting cognitive technologies have been invented only rarely. But modern computers are a meta-medium enabling the rapid invention of many new cognitive technologies. Consider a relatively banal example, such as Photoshop. Adept Photoshop users routinely have formerly impossible thoughts such as: “let’s apply the clone stamp to the such-and-such layer.” That’s an instance of a more general class of thought: “computer, [new type of action] this [new type of representation for a newly imagined class of object]”. When that happens, we’re using computers to expand the range of thoughts we can think.

It’s this kind of cognitive transformation model which underlies much of the deepest work on intelligence augmentation. Rather than outsourcing cognition, it’s about changing the operations and representations we use to think; it’s about changing the substrate of thought itself. And so while cognitive outsourcing is important, this cognitive transformation view offers a much more profound model of intelligence augmentation. It’s a view in which computers are a means to change and expand human thought itself.

Historically, cognitive technologies were developed by human inventors, ranging from the invention of writing in Sumeria and Mesoamerica, to the modern interfaces of designers such as Douglas Engelbart, Alan Kay, and others.

Examples such as those described in this essay suggest that AI systems can enable the creation of new cognitive technologies. Things like the font tool aren’t just oracles to be consulted when you want a new font. Rather, they can be used to explore and discover, to provide new representations and operations, which can be internalized as part of the user’s own thinking. And while these examples are in their early stages, they suggest AI is not just about cognitive outsourcing. A different view of AI is possible, one where it helps us invent new cognitive technologies which transform the way we think.

In this essay we’ve focused on a small number of examples, mostly involving exploration of the latent space. There are many other examples of artificial intelligence augmentation. To give some flavor, without being comprehensive:

neural network assisted drawing; systems enabling users to rapidly build new musical instruments and artistic systems; animations developed by exploring latent spaces; machine learning models for designing layout; and interpolation between phrases. Each aims to enable new primitives which can be integrated into the user’s thinking. More broadly, artificial intelligence augmentation will draw on fields such as computational creativity and machine learning.

Finding powerful new primitives of thought

We’ve argued that machine learning systems can help create representations and operations which serve as new primitives in human thought. What properties should we look for in such new primitives? This is too large a question to be answered comprehensively in a short essay. But we will explore it briefly.

Historically, important new media forms often seem strange when introduced. Many such stories have passed into popular culture: the near riot at the premiere of Stravinsky and Nijinsky’s “Rite of Spring”; the consternation caused by the early cubist paintings, leading The New York Times to comment: “[Have those] responsible for them taken leave of their senses? Is it art or madness? Who knows?”

Another example comes from physics. In the 1940s, different formulations of the theory of quantum electrodynamics were developed independently by the physicists Julian Schwinger, Shin’ichirō Tomonaga, and Richard Feynman. In their work, Schwinger and Tomonaga used a conventional algebraic approach, along lines similar to the rest of physics. Feynman used a more radical approach, based on what are now known as Feynman diagrams, for depicting the interaction of light and matter:

Holdsworth), licensed under a Creative Commons Attribution-Share Alike 3.0 Unported license

Initially, the Schwinger-Tomonaga approach was easier for other physicists to understand. When Feynman and Schwinger presented their work at a 1948 workshop, Schwinger was immediately acclaimed. By contrast, Feynman left his audience mystified. As James Gleick put it in his biography of Feynman:

It struck Feynman that everybody had a favourite precept or

theorem and he was violating all of them… Feynman knew he had

failed. On the time, he was in anguish. Later he mentioned merely:

“I had an excessive amount of stuff. My machines got here from too far

away.”

Of course, strangeness for strangeness’s sake alone is not

useful. But these examples suggest that breakthroughs in

representation often appear strange at first. Is there any

underlying reason that is true?

Part of the reason is that if some representation is truly new,

then it will appear different from anything you’ve ever seen

before. Feynman’s diagrams, Picasso’s paintings, Stravinsky’s

music: all revealed genuinely new ways of making meaning. Good

representations sharpen up such insights, eliding the familiar to

show that which is new as vividly as possible. But because of

that emphasis on unfamiliarity, the representation will seem

strange: it shows relationships you’ve never seen before. In some

sense, the task of the designer is to identify that core

strangeness, and to amplify it as much as possible.

Strange representations are often difficult to understand. At

first, physicists preferred Schwinger-Tomonaga to Feynman. But as

Feynman’s approach was slowly understood by physicists, they

realized that although Schwinger-Tomonaga and Feynman were

mathematically equivalent, Feynman was more powerful. As Gleick

puts it:

Schwinger’s students at Harvard were put at a competitive

disadvantage, or so it seemed to their fellows elsewhere, who

suspected them of surreptitiously using the diagrams anyway. This

was sometimes true… Murray Gell-Mann later spent a semester

staying in Schwinger’s house and loved to say afterward that he

had searched everywhere for the Feynman diagrams. He had not

found any, but one room had been locked…

These ideas are true not just of historical representations, but

also of computer interfaces. However, our advocacy of strangeness

in representation contradicts much conventional wisdom about

interfaces, especially the widely-held belief that they should be

“user friendly”, i.e., simple and immediately useable

by novices. That most often means the interface is cliched, built

from conventional elements combined in standard ways. But while

using a cliched interface may be easy and fun, it’s an ease

similar to reading a formulaic romance novel. It means the

interface does not reveal anything truly surprising about its

subject area. And so it will do little to deepen the user’s

understanding, or to change the way they think. For mundane tasks

that’s fine, but for deeper tasks, and for the long term, you

want a better interface.

Ideally, an interface will surface the deepest principles

underlying a subject, revealing a new world to the user. When you

learn such an interface, you internalize those principles, giving

you more powerful ways of reasoning about that world. Those

principles are the diffs in your understanding. They’re all you

really want to see; everything else is at best support, at worst

unimportant dross. The purpose of the best interfaces isn’t to be

user-friendly in some shallow sense. It’s to be user-friendly in

a much stronger sense,

principles

conditions in which users live and create. At that point what once

appeared strange can instead become comfortable and familiar,

part of the pattern of thought

these ideas is when an interface reifies general-purpose

principles. An example is an

interface

based on the principle of conservation of energy. Such

general-purpose principles generate multiple unexpected

relationships between the entities of a subject, and so are a

particularly rich source of insights when reified in an

interface.

What does this mean for the use of AI models for intelligence

augmentation?

Aspirationally, as we’ve seen, our machine learning models will

help us build interfaces which reify deep principles in ways

meaningful to the user. For that to happen, the models have to

discover deep principles about the world, recognize those

principles, and then surface them as vividly as possible in an

interface, in a way comprehensible by the user.

Of course, this is a tall order! The examples we’ve shown are just

barely beginning to do this. It’s true that our models do

sometimes discover relatively deep principles, like the

preservation of enclosed negative space when bolding a font. But

this is merely implicit in the model. And while we’ve built a tool

which takes advantage of such principles, it would be better if the

model automatically inferred the important principles learned, and

found ways of explicitly surfacing them through the interface.

(Encouraging progress toward this has been made

by

information-theoretic ideas to find structure in the latent

space.) Ideally, such models would start to get at true

explanations, not just in a static form, but in a dynamic form,

manipulable by the user. But we’re a long way from that point.

Do these interfaces inhibit creativity?

It’s tempting to be skeptical of the expressiveness of the

interfaces we’ve described. If an interface constrains us to

explore only the natural space of images, does that mean we’re

merely doing the expected? Does it mean these interfaces can only

be used to generate visual cliches? Does it prevent us from

generating anything truly new, from doing truly creative work?

To answer these questions, it’s helpful to identify two different

modes of creativity. This two-mode model is over-simplified:

creativity doesn’t fit so neatly into two distinct categories. Yet

the model nonetheless clarifies the role of new interfaces in

creative work.

The first mode of creativity is the everyday creativity of a

craftsperson engaged in their craft. Much of the work of a font

designer, for example, consists of competent recombination of the

best existing practices. Such work typically involves many

creative choices to meet the intended design goals, but not

developing key new underlying principles.

For such work, the generative interfaces we’ve been discussing are

promising. While they currently have many limitations, future

research will identify and fix many deficiencies. This is

happening rapidly with GANs: the original

GANs

but models soon appeared that were better adapted to

images

resolution, reduced artifacts

done on improving resolution and reducing artifacts it seems

unfair to single out any small set of papers, and to omit the many

others.

plausible these generative interfaces will become powerful tools

for craft work.

The second mode of creativity aims toward developing new

principles that fundamentally change the range of creative

expression. One sees this in the work of artists such as Picasso

or Monet, who violated existing principles of painting, developing

new principles which enabled people to see in new ways.

Is it possible to do such creative work, while using a generative

interface? Don’t such interfaces constrain us to the space of

natural images, or natural fonts, and thus actively prevent us

from exploring the most interesting new directions in creative

work?

The situation is more complex than this.

In part, this is a question about the power of our generative

models. In some cases, the model can only generate recombinations

of existing ideas. This is a limitation of an ideal GAN, since a

perfectly trained GAN generator will reproduce the training

distribution. Such a model can’t directly generate an image based

on new fundamental principles, because such an image wouldn’t look

anything like what it has seen in its training data.

Artists such as Mario

Klingemann and Mike

Tyka are now using GANs to create interesting

artwork. They’re doing that using “imperfect” GAN

models, which they seem to be able to use to explore interesting

new principles; it’s perhaps the case that bad GANs may be more

artistically interesting than ideal GANs. Furthermore, nothing

says an interface must only help us explore the latent space.

Perhaps operations can be added which deliberately take us out

of the latent space, or to less probable (and so more

surprising) parts of the space of natural images.
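As a rough illustration of the last suggestion, here is a toy sketch of one operation that moves a latent vector to a less probable region. It assumes the latent prior is a standard normal distribution, which the essay does not specify, and the helper names (`log_density`, `sample_latent`, `push_outward`) are hypothetical. No generator is included; in a real interface the vectors would be fed to a trained model.

```python
import math
import random


def log_density(z):
    """Log-probability of z under a standard normal prior (assumed here)."""
    dim = len(z)
    return -0.5 * sum(x * x for x in z) - 0.5 * dim * math.log(2.0 * math.pi)


def sample_latent(dim, rng):
    """Draw a typical latent vector from the assumed prior."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def push_outward(z, factor):
    """Scale z away from the origin; factor > 1 lowers its prior density."""
    return [factor * x for x in z]


rng = random.Random(0)                # fixed seed so the run is repeatable
z = sample_latent(8, rng)             # a "typical" point in latent space
z_surprising = push_outward(z, 2.0)   # same direction, less probable

print(log_density(z), log_density(z_surprising))
```

Scaling is only one possible choice of operation. The point is simply that an interface can expose controls that deliberately leave the high-probability region rather than staying within it.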

Of course, GANs are not the only generative models. In a

sufficiently powerful generative model, the generalizations

discovered by the model may contain ideas going beyond what humans

have discovered. In that case, exploration of the latent space may

enable us to discover new fundamental principles. The model would

have discovered stronger abstractions than human experts. Imagine

a generative model trained on paintings up until just before the

time of the cubists; might it be that by exploring that model it

would be possible to discover cubism? It would be an analogue to

something like the prediction of Bose-Einstein condensation, as

discussed earlier in the essay. Such invention is beyond today’s

generative models, but seems a worthwhile aspiration for future

models.

Our examples so far have all been based on generative models. But

there are some illuminating examples which are not based on

generative models. Consider the pix2pix system developed

by

system is trained on pairs of images, e.g., pairs showing the

edges of a cat, and the actual corresponding cat. Once trained,

it can be shown a set of edges and asked to generate an image for

an actual corresponding cat. It often does this quite well:

When supplied with unusual constraints, pix2pix can produce

striking images:

This is perhaps not high creativity of a Picasso-esque level. But

it is still surprising. It’s certainly unlike images most of us

have ever seen before. How do pix2pix and its human user achieve

this kind of result?

Unlike our earlier examples, pix2pix is not a generative model.

This means it does not have a latent space or a corresponding

space of natural images. Instead, there is a neural network,

called, confusingly, a generator (this is not meant in the

same sense as our earlier generative models) that takes as

input the constraint image, and produces as output the filled-in

image.

The generator is trained adversarially against a discriminator

network, whose job is to distinguish between pairs of images

generated from real data, and pairs of images generated by the

generator.

While this sounds similar to a conventional GAN, there is a

crucial difference: there is no latent vector input to the

generator. (The pix2pix authors experimented with adding such an input to

the generator, but found it made little difference to the

resulting images.) Instead, the generator’s only input is the

constraint. When a human inputs a constraint unlike anything seen

in training, the network is forced to improvise, doing the best it

can to interpret that constraint according to the rules it has

previously learned. The creativity is the result of a forced

merger of knowledge inferred from the training data, together with

novel constraints provided by the user. As a result, even

relatively simple ideas, like the bread- and beholder-cats,

can result in striking new types of images, images not

within what we would previously have considered the space of

natural images.
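To make the structure described above concrete, here is a toy sketch of an adversarial setup in which the discriminator scores (constraint, image) pairs and the generator takes only the constraint as input. The `generator` and `discriminator` below are hypothetical stand-in functions on short lists of numbers, not neural networks and not the pix2pix implementation; the loss is an ordinary binary cross-entropy, chosen here for illustration, and nothing is actually trained.

```python
import math


def generator(edges):
    """Stand-in generator: a deterministic map from constraint to image.

    Note there is no latent vector argument, only the constraint.
    """
    return [min(1.0, 0.5 * e + 0.25) for e in edges]


def discriminator(edges, image):
    """Stand-in discriminator: probability that the pair is real."""
    score = sum(e * p for e, p in zip(edges, image)) / len(edges)
    return 1.0 / (1.0 + math.exp(-score))


def discriminator_loss(edges, real_image):
    """Cross-entropy for telling a real pair from a generated pair."""
    p_real = discriminator(edges, real_image)
    p_fake = discriminator(edges, generator(edges))
    return -math.log(p_real) - math.log(1.0 - p_fake)


def generator_loss(edges):
    """The generator is rewarded when its pair is scored as real."""
    return -math.log(discriminator(edges, generator(edges)))


edges = [0.0, 1.0, 1.0, 0.0]       # toy "edge map" constraint
real_image = [0.1, 0.9, 0.8, 0.2]  # toy matching "photo"

print(discriminator_loss(edges, real_image), generator_loss(edges))
```

Because the stand-in generator has no random input, the same constraint always yields the same output, so any novelty in the result has to come from the constraint the user supplies.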

Conclusion

It’s conventional wisdom that AI will change how we interact with

computers. Unfortunately, many in the AI community greatly

underestimate the depth of interface design, often regarding it as

a simple problem, mostly about making things pretty or

easy-to-use. In this view, interface design is a problem to be

handed off to others, while the hard work is to train some machine

learning system.

This view is incorrect. At its deepest, interface design means

developing the fundamental primitives human beings think and

create with. This is a problem whose intellectual genesis goes

back to the inventors of the alphabet, of cartography, and of

musical notation, as well as modern giants such as Descartes,

Playfair, Feynman, Engelbart, and Kay. It is one of the hardest,

most important and most fundamental problems humanity grapples

with.

As discussed earlier, in one common view of AI our computers will

continue to get better at solving problems, but human beings will

remain largely unchanged. In a second common view, human beings

will be modified at the hardware level, perhaps directly through

neural interfaces, or indirectly through whole brain emulation.

We’ve described a third view, in which AIs actually change

humanity, helping us invent new cognitive technologies, which

expand the range of human thought. Perhaps one day those

cognitive technologies will, in turn, speed up the development of

AI, in a virtuous feedback cycle:

It would not be a Singularity in machines. Rather, it would be a

Singularity in humanity’s range of thought. Of course, this loop

is at present extremely speculative. The systems we’ve described

can help develop more powerful ways of thinking, but there’s at

most an indirect sense in which those ways of thinking are being

used in turn to develop new AI systems.

Of course, over the long run it’s possible that machines will

exceed humans on all or most cognitive tasks. Even if that’s the

case, cognitive transformation will still be a valuable end, worth

pursuing in its own right. There is pleasure and value involved

in learning to play chess or Go well, even if machines do it

better. And in activities such as story-telling the benefit often

isn’t so much the artifact produced as the process of construction

itself, and the relationships forged. There is intrinsic value in

personal change and growth, apart from instrumental benefits.

The interface-oriented work we’ve discussed is outside the

narrative used to judge most existing work in artificial

intelligence. It doesn’t involve beating some benchmark for a

classification or regression problem. It doesn’t involve

impressive feats like beating human champions at games such as

Go. Rather, it involves a much more subjective and

difficult-to-measure criterion: is it helping humans think and

create in new ways?

This creates difficulties for doing this kind of work,

particularly in a research setting. Where should one publish?

What community does one belong to? What standards should be

applied to judge such work? What distinguishes good work from

bad?

We believe that over the next few years a community will emerge

which answers these questions. It will run workshops and

conferences. It will publish work in venues such as Distill. Its

standards will draw from many different communities: from the

artistic and design and musical communities; from the mathematical

community’s taste in abstraction and good definition; as well as

from the existing AI and IA communities, including work on

computational creativity and human-computer interaction. The

long-term test of success will be the development of tools which

are widely used by creators. Are artists using these tools to

develop remarkable new styles? Are scientists in other fields

using them to develop understanding in ways not otherwise

possible? These are great aspirations, and require an approach

that builds on conventional AI work, but also incorporates very

different norms.
