
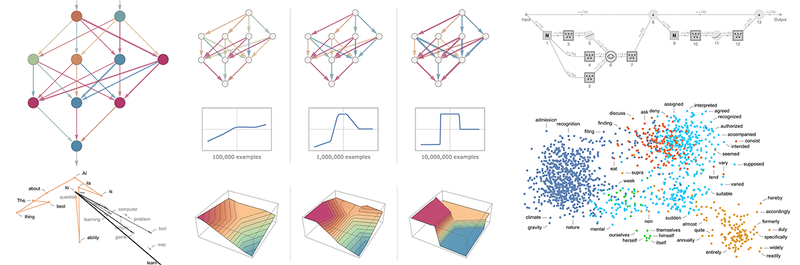

That ChatGPT can automatically generate something that reads even superficially like human-written text is remarkable, and unexpected. But how does it do it? And why does it work? My purpose here is to give a rough outline of what’s going on inside ChatGPT, and then to explore why it is that it can do so well in producing what we might consider to be meaningful text. I should say at the outset that I’m going to concentrate on the big picture of what’s going on, and while I’ll mention some engineering details, I won’t get deeply into them. (And the essence of what I’ll say applies just as well to other current “large language models” [LLMs] as to ChatGPT.)

The first thing to explain is that what ChatGPT is always fundamentally trying to do is to produce a “reasonable continuation” of whatever text it’s got so far, where by “reasonable” we mean “what one might expect someone to write after seeing what people have written on billions of webpages, etc.”

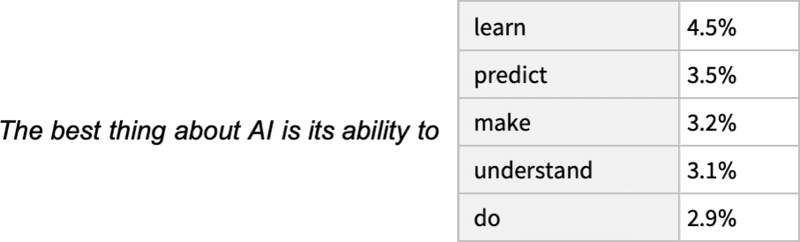

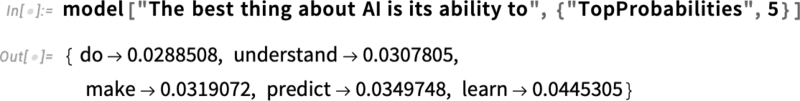

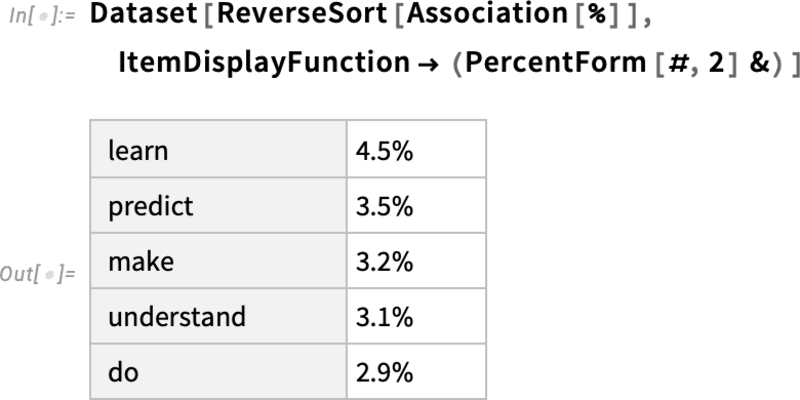

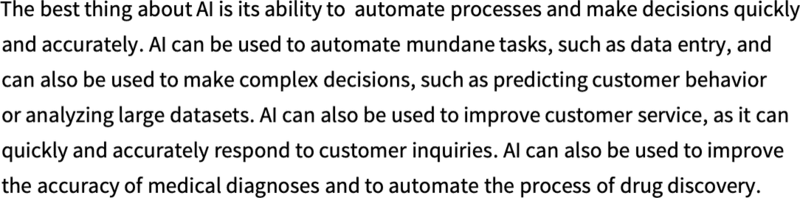

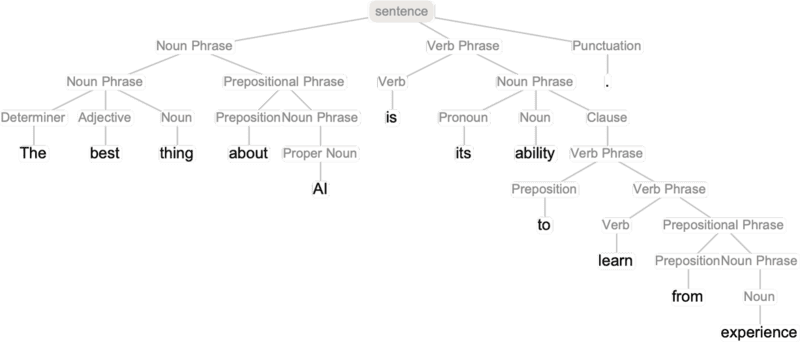

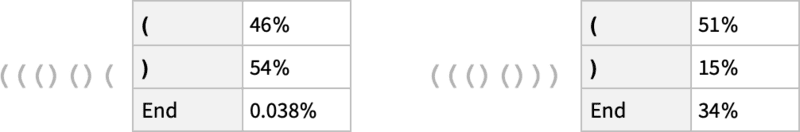

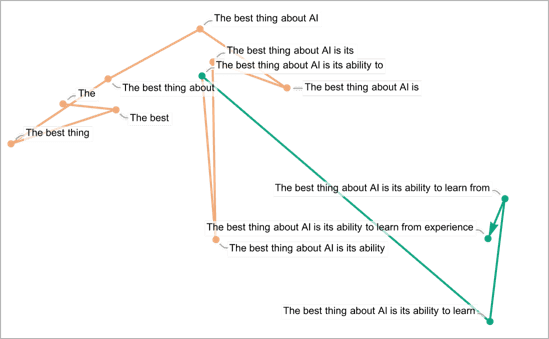

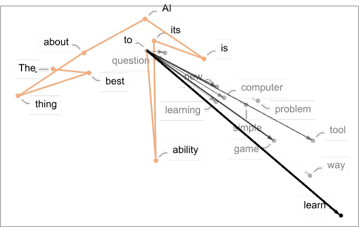

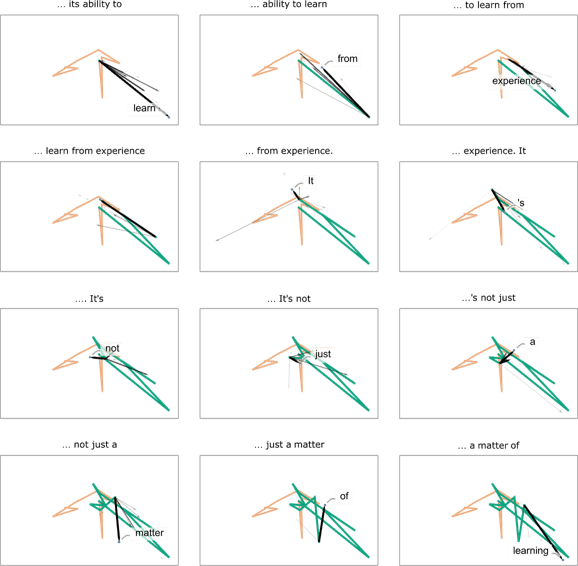

So let’s say we’ve got the text “The best thing about AI is its ability to”. Imagine scanning billions of pages of human-written text (say on the web and in digitized books) and finding all instances of this text, then seeing what word comes next what fraction of the time. ChatGPT effectively does something like this, except that (as I’ll explain) it doesn’t look at literal text; it looks for things that in a certain sense “match in meaning”. But the end result is that it produces a ranked list of words that might follow, together with “probabilities”:

And the remarkable thing is that when ChatGPT does something like write an essay, what it’s essentially doing is just asking over and over again “given the text so far, what should the next word be?”, and each time adding a word. (More precisely, as I’ll explain, it’s adding a “token”, which could be just a part of a word, which is why it can sometimes “make up new words”.)
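Here is a minimal Python sketch of the literal counting procedure described above, without ChatGPT’s “match in meaning” refinement. It is only an illustration and not how ChatGPT works internally. The function name `next_word_probabilities` and the tiny corpus are made up for this example, and words are split on whitespace.

```python
from collections import Counter

def next_word_probabilities(corpus_text, prompt, top_k=5):
    """Rank the words that follow every literal occurrence of `prompt` in the corpus.

    Returns (word, probability) pairs, most frequent first. Matching is on exact,
    lowercased, whitespace-separated words, so a prompt never seen gives [].
    """
    words = corpus_text.lower().split()
    prefix = prompt.lower().split()
    n = len(prefix)
    followers = Counter(
        words[i + n]
        for i in range(len(words) - n)
        if words[i:i + n] == prefix
    )
    total = sum(followers.values())
    return [(word, count / total) for word, count in followers.most_common(top_k)]

# Tiny made-up corpus standing in for "billions of webpages".
corpus = (
    "the best thing about ai is its ability to learn . "
    "the best thing about ai is its ability to adapt . "
    "the best thing about ai is its ability to learn quickly ."
)
print(next_word_probabilities(corpus, "The best thing about AI is its ability to"))
```

With this corpus, “learn” follows the prompt in two of three cases and “adapt” in one, so they get probabilities 2/3 and 1/3. Literal matching like this fails as soon as the prompt is one the corpus has never seen, and that gap is exactly what a model has to fill.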

But, OK, at each step it gets a list of words with probabilities. But which one should it actually pick to add to the essay (or whatever) that it’s writing? One might think it should be the “highest-ranked” word (i.e. the one to which the highest “probability” was assigned). But this is where a bit of voodoo begins to creep in. Because for some reason (that maybe one day we’ll have a scientific-style understanding of), if we always pick the highest-ranked word, we’ll typically get a very “flat” essay that never seems to “show any creativity” (and even sometimes repeats word for word). But if sometimes (at random) we pick lower-ranked words, we get a “more interesting” essay.

The fact that there’s randomness here means that if we use the same prompt multiple times, we’re likely to get different essays each time. And, in keeping with the idea of voodoo, there’s a particular so-called “temperature” parameter that determines how often lower-ranked words will be used, and for essay generation, it turns out that a “temperature” of 0.8 seems best. (It’s worth emphasizing that there’s no “theory” being used here; it’s just a matter of what’s been found to work in practice. And for example the concept of “temperature” is there because exponential distributions familiar from statistical physics happen to be being used, but there’s no “physical” connection, at least so far as we know.)
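Here is a minimal Python sketch of what “temperature” does, under stated assumptions. It takes candidate words with positive probabilities (the numbers below are invented) and reweights them as pᵢ^(1/T). That is the same as a softmax of log pᵢ / T, the exponential, Boltzmann-style form borrowed from statistical physics. It then samples one word. Temperature 0 is treated as always taking the top word. The function names are hypothetical, and this shows the general technique, not ChatGPT’s actual sampling code.

```python
import math
import random

def apply_temperature(probs, temperature):
    """Reweight positive probabilities p_i to p_i ** (1 / T), then renormalize.

    This is the same as a softmax of log(p_i) / T: T < 1 sharpens the
    distribution toward the top word, T > 1 flattens it.
    """
    logits = [math.log(p) / temperature for p in probs]
    top = max(logits)
    weights = [math.exp(z - top) for z in logits]  # shift by the max for numerical stability
    total = sum(weights)
    return [w / total for w in weights]

def pick_next_word(words, probs, temperature=0.8, rng=random):
    """Temperature 0 means 'always take the top word'; otherwise sample."""
    if temperature == 0:
        return words[probs.index(max(probs))]
    return rng.choices(words, weights=apply_temperature(probs, temperature), k=1)[0]

# Made-up candidate words and probabilities, for illustration only.
words = ["learn", "predict", "make", "understand"]
probs = [0.4, 0.3, 0.2, 0.1]
rng = random.Random(42)
print([pick_next_word(words, probs, 0.8, rng) for _ in range(10)])
```

At T = 1 the probabilities are used unchanged. At T = 0.8 the top word is favored a little more than its raw probability but is not chosen every time, so repeated runs produce different text.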

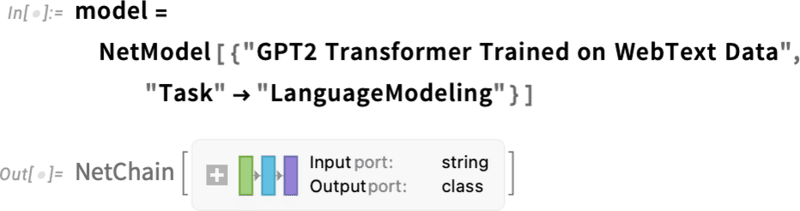

Before we go on I should explain that for purposes of exposition I’m mostly not going to use the full system that’s in ChatGPT; instead I’ll usually work with a simpler GPT-2 system, which has the nice feature that it’s small enough to be able to run on a standard desktop computer. And so for essentially everything I show I’ll be able to include explicit Wolfram Language code that you can immediately run on your computer.

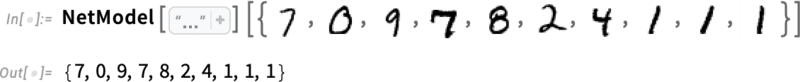

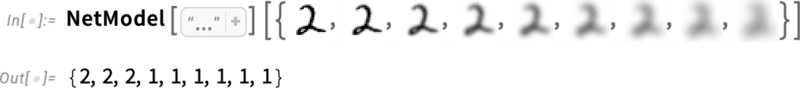

For example, here’s how to get the table of probabilities above. First, we have to retrieve the underlying “language model” neural net:

Later on, we’ll look inside this neural net, and talk about how it works. But for now we can just apply this “net model” as a black box to our text so far, and ask for the top 5 words by probability that the model says should follow:

This takes that result and makes it into an explicit formatted “dataset”:

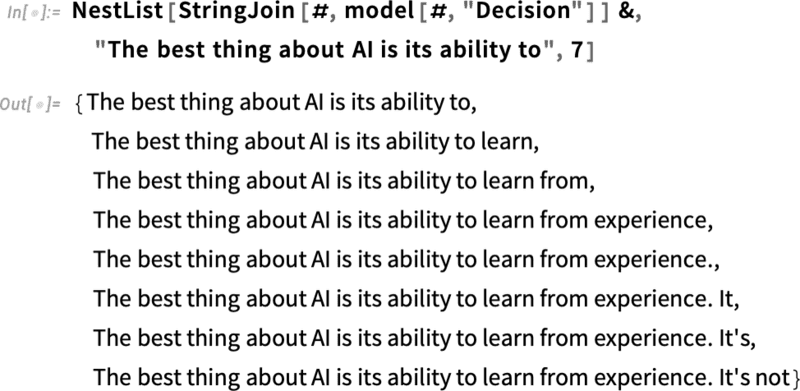

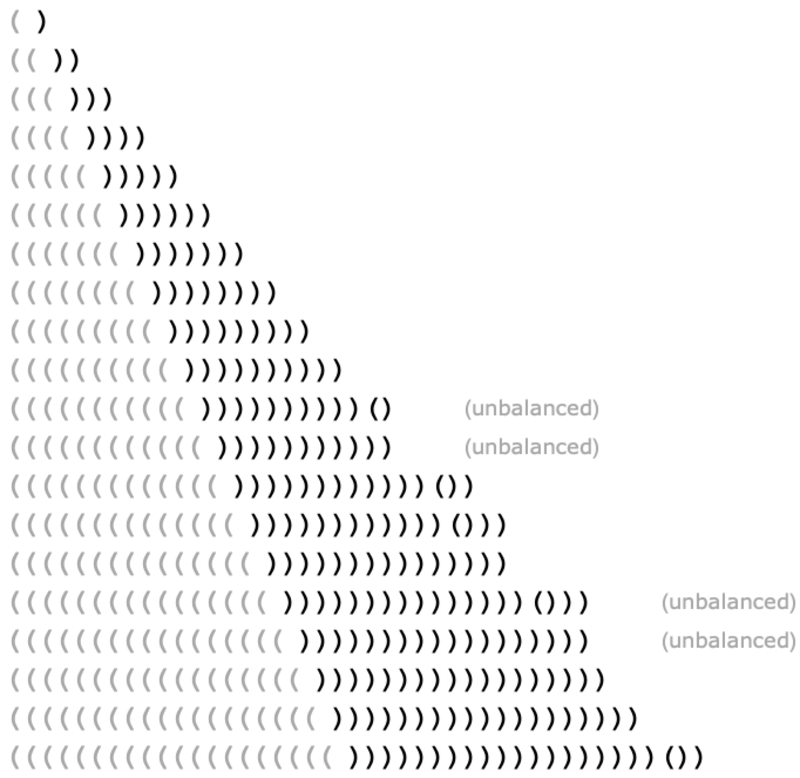

Here’s what happens if one repeatedly “applies the model”, at each step adding the word that has the top probability (specified in this code as the “decision” from the model):

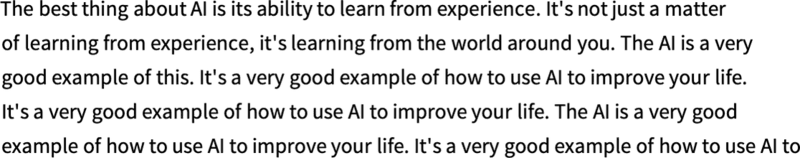

What happens if one goes on longer? In this (“zero temperature”) case what comes out soon gets rather confused and repetitive:

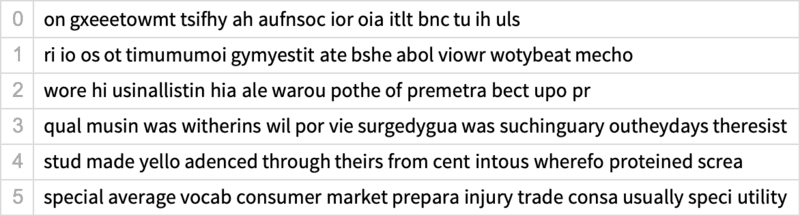
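A toy next-word table is enough to reproduce the tendency of “zero temperature” generation to get stuck. The sketch below assumes a hand-written, entirely made-up table of probabilities and always appends the top-ranked word. Once the text returns to a word it has already produced, it is forced into the same cycle.

```python
def greedy_generate(next_word_table, start, steps):
    """Zero-temperature generation: always append the single most probable next word."""
    text = [start]
    for _ in range(steps):
        options = next_word_table.get(text[-1])
        if not options:
            break  # no known continuation for this word
        text.append(max(options, key=options.get))
    return " ".join(text)

# Hypothetical next-word probabilities (invented for this example).
table = {
    "the": {"cat": 0.5, "dog": 0.3, "end": 0.2},
    "cat": {"sat": 0.6, "ran": 0.4},
    "sat": {"on": 0.9, "down": 0.1},
    "on": {"the": 0.7, "a": 0.3},
}
print(greedy_generate(table, "the", 9))  # the cat sat on the cat sat on the cat
```

The loop can never escape this cycle because each choice is deterministic and depends only on the previous word. Real models condition on much longer context, but the same pull toward repetition shows up at zero temperature.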

But what if instead of always picking the “top” word one sometimes randomly picks “non-top” words (with the “randomness” corresponding to “temperature” 0.8)? Again one can build up text:

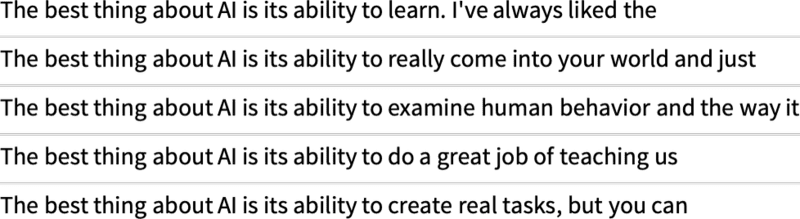

And every time one does this, different random choices will be made, and the text will be different, as in these 5 examples:

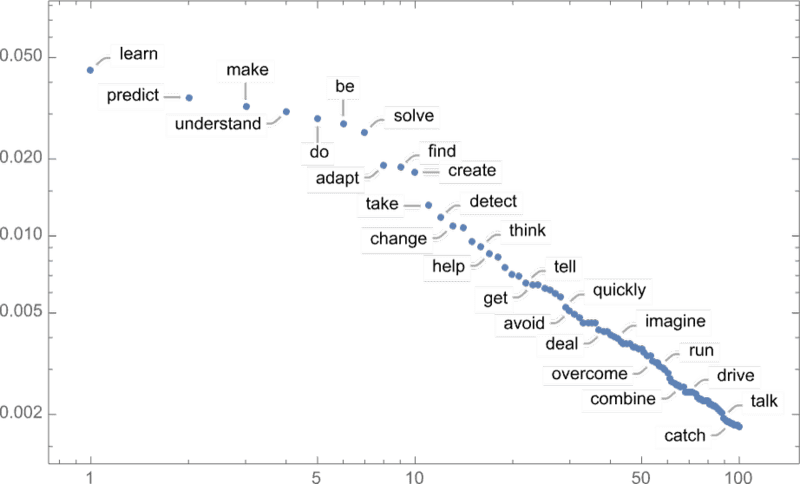

It’s worth pointing out that even at the first step there are a lot of possible “next words” to choose from (at temperature 0.8), though their probabilities fall off quite quickly (and, yes, the straight line on this log-log plot corresponds to an n⁻¹ “power-law” decay that’s very characteristic of the general statistics of language):
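One simple way to check for the n⁻¹ power law is to fit a straight line to log probability against log rank. A slope near −1 indicates n⁻¹ decay. The sketch below uses a hypothetical function name and runs on synthetic probabilities proportional to 1/n as a sanity check. These are not actual GPT-2 outputs.

```python
import math

def loglog_slope(probs):
    """Least-squares slope of log(p_n) versus log(n), where n = 1, 2, ... is the rank."""
    xs = [math.log(n) for n in range(1, len(probs) + 1)]
    ys = [math.log(p) for p in probs]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var

# Synthetic check: probabilities proportional to 1/n should give a slope of -1.
ranks = range(1, 1001)
norm = sum(1 / n for n in ranks)
zipf = [(1 / n) / norm for n in ranks]
print(round(loglog_slope(zipf), 6))
```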

So what happens if one goes on longer? Here’s a random example. It’s better than the top-word (zero temperature) case, but still at best a bit weird:

This was done with the simplest GPT-2 model (from 2019). With the newer and bigger GPT-3 models the results are better. Here’s the top-word (zero temperature) text produced with the same “prompt”, but with the biggest GPT-3 model:

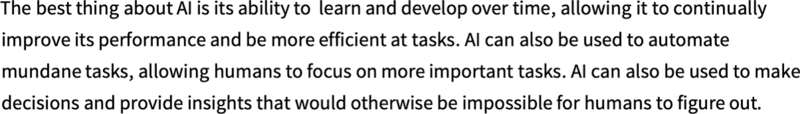

And here’s a random example at “temperature 0.8”:

OK, so ChatGPT always picks its next word based on probabilities. But where do those probabilities come from? Let’s start with a simpler problem. Let’s consider generating English text one letter (rather than word) at a time. How can we work out what the probability for each letter should be?

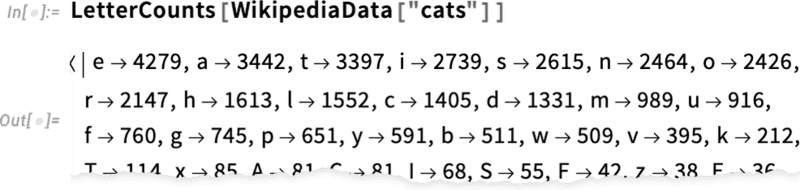

A very minimal thing we could do is just take a sample of English text, and calculate how often different letters occur in it. So, for example, this counts letters in the Wikipedia article on “cats”:

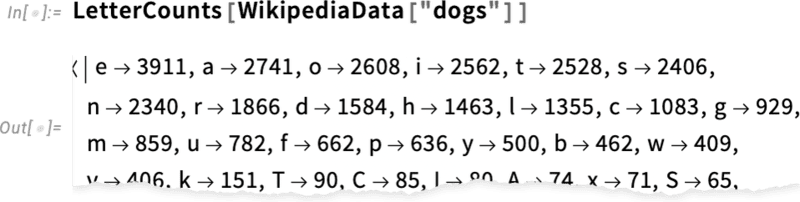

And this does the same thing for “dogs”:

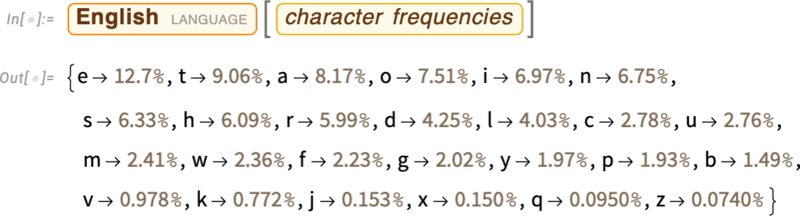

The results are similar, but not the same (“o” is no doubt more common in the “dogs” article because, after all, it occurs in the word “dog” itself). Still, if we take a large enough sample of English text we can expect to eventually get at least fairly consistent results:
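Counting letters this way produces a frequency table. Here is a minimal Python sketch. It assumes that only the ASCII letters a to z matter and that case is ignored. The short sample sentence is just an illustration, not one of the Wikipedia articles.

```python
from collections import Counter

def letter_frequencies(text):
    """Fraction of all a..z letters (case-insensitive) accounted for by each letter."""
    letters = [c for c in text.lower() if "a" <= c <= "z"]
    counts = Counter(letters)
    return {c: counts[c] / len(letters) for c in sorted(counts)}

sample = "The dog chased the cat, and the cat ignored the dog."
for letter, freq in letter_frequencies(sample).items():
    print(letter, round(freq, 3))
```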

Here’s a sample of what we get if we just generate a sequence of letters with these probabilities:

We can break this into “words” by adding in spaces as if they were letters with a certain probability:

We can do a slightly better job of making “words” by forcing the distribution of “word lengths” to agree with what it is in English:
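Here is a sketch of generating “words” from such tables. It assumes two inputs that you would estimate from a corpus: letter frequencies and word-length frequencies. The weights in the example are made up. Each word’s length is drawn first, and then its letters are drawn independently.

```python
import random

def random_letters(letter_freqs, n, rng=random):
    """n letters drawn independently with the given (relative) frequencies."""
    return "".join(rng.choices(list(letter_freqs), weights=list(letter_freqs.values()), k=n))

def random_words(letter_freqs, length_freqs, n_words, rng=random):
    """Draw each word's length from length_freqs, then fill it with independent random letters."""
    lengths = list(length_freqs)
    words = []
    for _ in range(n_words):
        length = rng.choices(lengths, weights=list(length_freqs.values()), k=1)[0]
        words.append(random_letters(letter_freqs, length, rng))
    return " ".join(words)

# Made-up relative weights; in practice both tables would be measured from English text.
letter_freqs = {"e": 3, "t": 2, "a": 2, "o": 2, "n": 1, "s": 1}
length_freqs = {2: 1, 3: 2, 4: 2, 5: 1}
print(random_words(letter_freqs, length_freqs, 8, random.Random(0)))
```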


We didn’t happen to get any “actual words” here, but the results are looking slightly better. To go further, though, we need to do more than just pick each letter separately at random. And, for example, we know that if we have a “q”, the next letter basically has to be “u”.

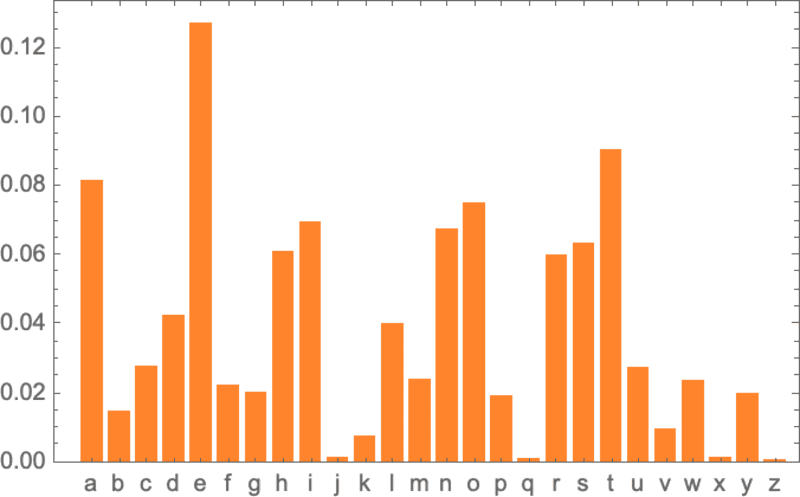

Here’s a plot of the probabilities for letters on their own:

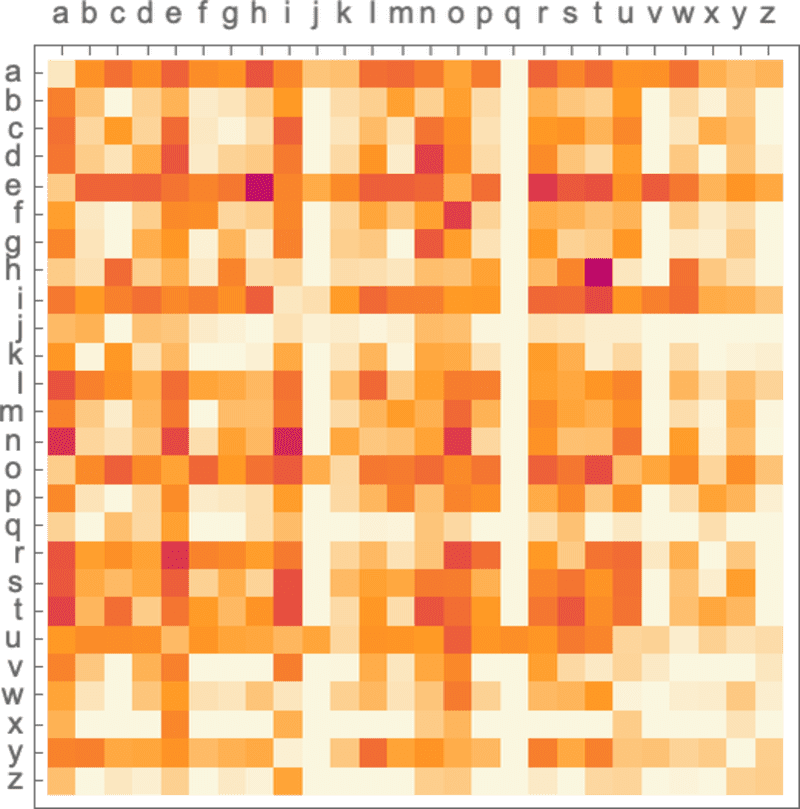

And here’s a plot that shows the probabilities of pairs of letters (“2-grams”) in typical English text. The possible first letters are shown across the page, the second letters down the page:

And we see here, for example, that the “q” column is blank (zero probability) except on the “u” row. OK, so now instead of generating our “words” a single letter at a time, let’s generate them two letters at a time, using these “2-gram” probabilities. Here’s a sample of the result, which happens to include a few “actual words”:
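A 2-gram table like the one plotted above can be estimated by counting adjacent letter pairs inside words. The table can then be used to grow a word one letter at a time, with each letter conditioned on the one before. Here is a minimal sketch; the function names and the sample text are illustrative assumptions.

```python
import random
from collections import Counter, defaultdict

def letter_2gram_probabilities(text):
    """P(second letter | first letter), from adjacent letter pairs inside words."""
    counts = defaultdict(Counter)
    for word in text.lower().split():
        letters = [c for c in word if "a" <= c <= "z"]
        for first, second in zip(letters, letters[1:]):
            counts[first][second] += 1
    probs = {}
    for first, followers in counts.items():
        total = sum(followers.values())
        probs[first] = {second: n / total for second, n in followers.items()}
    return probs

def grow_word(probs, first_letter, length, rng=random):
    """Extend a word letter by letter; stop early if the last letter has no known follower."""
    word = first_letter
    while len(word) < length and word[-1] in probs:
        options = probs[word[-1]]
        word += rng.choices(list(options), weights=list(options.values()), k=1)[0]
    return word

probs = letter_2gram_probabilities("queen quick quiet aqua equal")
print(probs["q"])  # {'u': 1.0}
print(grow_word(probs, "q", 5, random.Random(0)))
```

Every “q” in the sample is followed by “u”, so the estimated probability of “u” after “q” is 1, which matches the blank “q” column in the plot.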


With enough English text we can get pretty good estimates not just for probabilities of single letters or pairs of letters (2-grams), but also for longer runs of letters. And if we generate “random words” with progressively longer n-gram probabilities, we see that they get progressively “more realistic”:
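The same idea extends to longer n-grams: each new letter is conditioned on the previous n − 1 letters. In this sketch the function names are hypothetical and `^` is a made-up start-of-text marker. Setting `n = 1` gives independent letters, `n = 2` gives the 2-gram model, and larger `n` gives output that looks progressively more like the training text.

```python
import random
from collections import Counter, defaultdict

START = "^"  # made-up start-of-text padding symbol

def train_letter_ngrams(text, n):
    """Map each (n-1)-character context to counts of the character that follows it."""
    model = defaultdict(Counter)
    padded = START * (n - 1) + text
    for i in range(len(text)):
        model[padded[i:i + n - 1]][padded[i + n - 1]] += 1
    return model

def generate_letters(model, n, length, rng=random):
    """Sample up to `length` characters, each conditioned on the previous n-1 characters."""
    out = START * (n - 1)
    for _ in range(length):
        followers = model.get(out[len(out) - (n - 1):])  # empty context when n == 1
        if not followers:
            break  # this context never appeared in the training text
        out += rng.choices(list(followers), weights=list(followers.values()), k=1)[0]
    return out[n - 1:]

text = "the cat sat on the mat and the rat sat on the hat"
for n in (1, 2, 3, 4):
    print(n, generate_letters(train_letter_ngrams(text, n), n, 30, random.Random(n)))
```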

But let’s now assume (more or less as ChatGPT does) that we’re dealing with whole words, not letters. There are about 40,000 reasonably commonly used words in English. And by looking at a large corpus of English text (say a few million books, with altogether a few hundred billion words), we can get an estimate of how common each word is. And using this we can start generating “sentences”, in which each word is independently picked at random, with the same probability that it appears in the corpus. Here’s a sample of what we get:


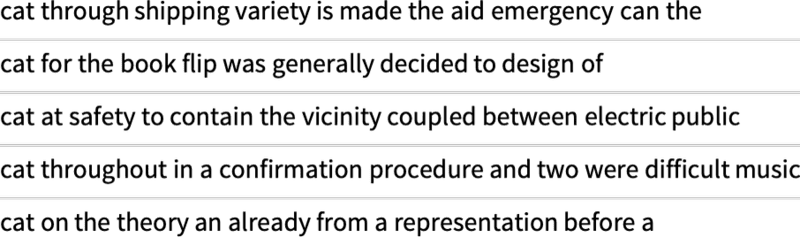

Not surprisingly, this is nonsense. So how can we do better? Just like with letters, we can start taking into account not just probabilities for single words but probabilities for pairs or longer n-grams of words. Doing this for pairs, here are 5 examples of what we get, in all cases starting from the word “cat”:
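Word-level 2-grams work the same way, with words in place of letters. The sketch below counts word pairs in a toy corpus and then samples five chains starting from “cat”. The toy corpus is an assumption; the article’s estimates come from a corpus of hundreds of billions of words.

```python
import random
from collections import Counter, defaultdict

def train_word_2grams(text):
    """Count, for each word, which words follow it."""
    words = text.lower().split()
    model = defaultdict(Counter)
    for first, second in zip(words, words[1:]):
        model[first][second] += 1
    return model

def generate_sentence(model, start, n_words, rng=random):
    """Start from one word and keep sampling a next word given only the previous word."""
    sentence = [start]
    while len(sentence) < n_words:
        followers = model.get(sentence[-1])
        if not followers:
            break
        sentence.append(rng.choices(list(followers), weights=list(followers.values()), k=1)[0])
    return " ".join(sentence)

corpus = "the cat sat on the mat . the dog sat on the cat . a cat is a small animal ."
model = train_word_2grams(corpus)
for seed in range(5):
    print(generate_sentence(model, "cat", 8, random.Random(seed)))
```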

It’s getting slightly more “sensible looking”. And we might imagine that if we were able to use sufficiently long n-grams we’d basically “get a ChatGPT”, in the sense that we’d get something that would generate essay-length sequences of words with the “correct overall essay probabilities”. But here’s the problem: there just isn’t even close to enough English text that’s ever been written to be able to deduce those probabilities.

In a crawl of the web there might be a few hundred billion words; in books that have been digitized there might be another hundred billion words. But with 40,000 common words, even the number of possible 2-grams is already 1.6 billion, and the number of possible 3-grams is about 64 trillion. So there’s no way we can estimate the probabilities even for all of these from text that’s out there. And by the time we get to “essay fragments” of 20 words, the number of possibilities is larger than the number of particles in the universe, so in a sense they could never all be written down.
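The combinatorics in the previous paragraph are easy to check directly. This sketch assumes the round figure of 40,000 common words and the commonly quoted estimate of roughly 10⁸⁰ particles in the observable universe.

```python
COMMON_WORDS = 40_000
PARTICLES_IN_UNIVERSE = 10 ** 80  # commonly quoted order-of-magnitude estimate

def possible_ngrams(vocabulary_size, n):
    """Number of distinct length-n word sequences over the vocabulary."""
    return vocabulary_size ** n

for n in (2, 3, 20):
    count = possible_ngrams(COMMON_WORDS, n)
    print(f"{n:>2}-grams: {count:.2e}  exceeds particles: {count > PARTICLES_IN_UNIVERSE}")
```

The three counts come out to 1.6 billion, 64 trillion, and about 1.1 × 10⁹², respectively.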

So what can we do? The big idea is to make a model that lets us estimate the probabilities with which sequences should occur, even though we’ve never explicitly seen those sequences in the corpus of text we’ve looked at. And at the core of ChatGPT is precisely a so-called “large language model” (LLM) that’s been built to do a good job of estimating those probabilities.

Say you want to know (as Galileo did back in the late 1500s) how long it’s going to take a cannon ball dropped from each floor of the Tower of Pisa to hit the ground. Well, you could just measure it in each case and make a table of the results. Or you could do what is the essence of theoretical science: make a model that gives some kind of procedure for computing the answer rather than just measuring and remembering each case.

Let’s imagine we have (somewhat idealized) data for how long the cannon ball takes to fall from various floors:

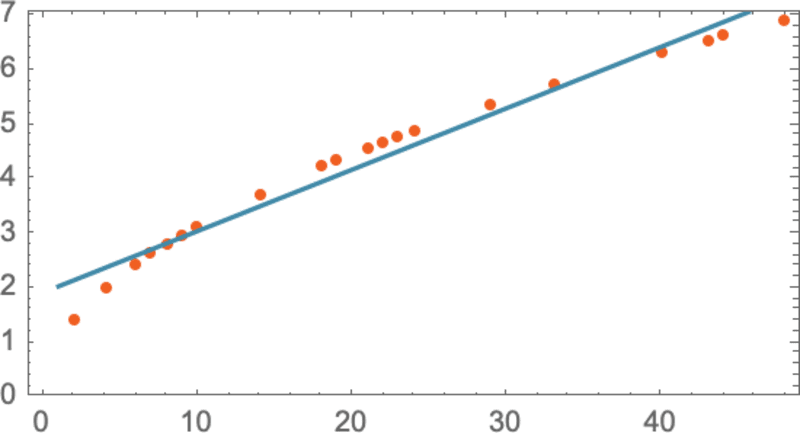

How do we figure out how long it’s going to take to fall from a floor we don’t explicitly have data about? In this particular case, we can use known laws of physics to work it out. But say all we’ve got is the data, and we don’t know what underlying laws govern it. Then we might make a mathematical guess, like that perhaps we should use a straight line as a model:

We could pick different straight lines. But this is the one that’s on average closest to the data we’re given. And from this straight line we can estimate the time to fall for any floor.
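The line that is “on average closest” to the data is the least-squares fit. Here is a minimal sketch. It assumes idealized drop times t = √(2h/g) and a made-up floor height of 7 m. These are not the data shown in the figure.

```python
import math

G = 9.81            # gravitational acceleration, m/s^2
FLOOR_HEIGHT = 7.0  # assumed height per floor in metres (illustrative only)

floors = list(range(1, 9))
fall_times = [math.sqrt(2 * FLOOR_HEIGHT * f / G) for f in floors]  # idealized t = sqrt(2h/g)

def fit_line(xs, ys):
    """Least-squares line y = a + b x: minimizes the sum of squared vertical errors."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    b = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum((x - mean_x) ** 2 for x in xs)
    a = mean_y - b * mean_x
    return a, b

a, b = fit_line(floors, fall_times)
print(f"t = {a:.3f} + {b:.3f} * floor; estimate for floor 5.5: {a + b * 5.5:.3f} s")
```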

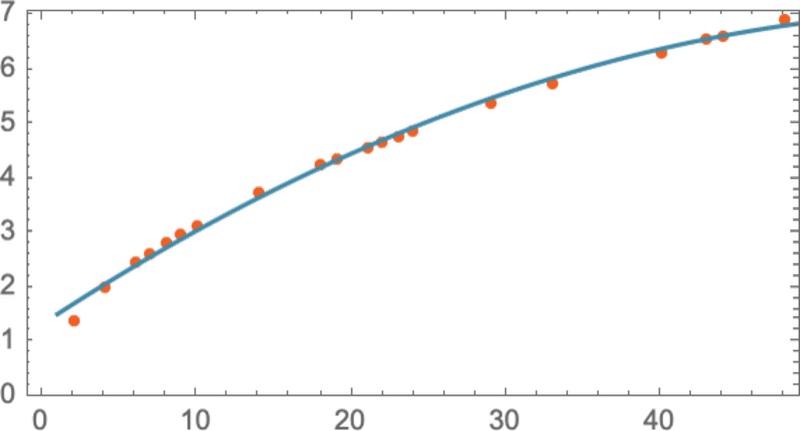

How did we know to try using a straight line here? At some level we didn’t. It’s just something that’s mathematically simple, and we’re used to the fact that lots of data we measure turns out to be well fit by mathematically simple things. We could try something mathematically more complicated, say a + b x + c x², and then in this case we do better:

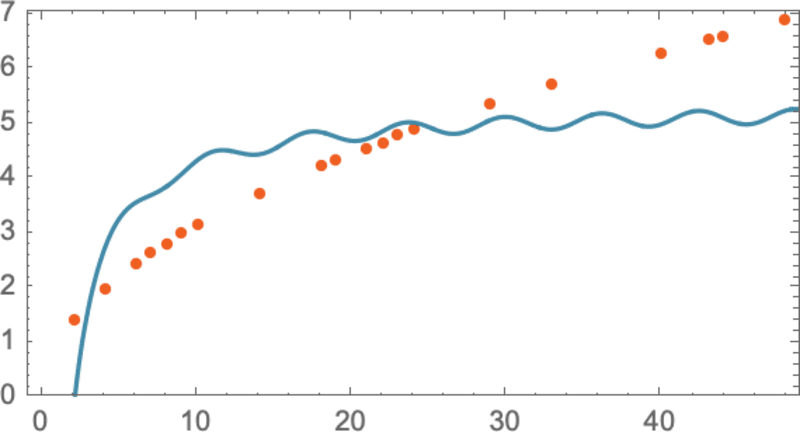

Things can go quite wrong, though. Like here’s the best we can do with a + b/x + c sin(x):

It’s worth understanding that there’s never a “model-less model”. Any model you use has some particular underlying structure, then a certain set of “knobs you can turn” (i.e. parameters you can set) to fit your data. And in the case of ChatGPT, lots of such “knobs” are used: actually, 175 billion of them.
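The idea of a fixed structure plus adjustable knobs can be made concrete. Each model below is a fixed list of basis functions, and least squares sets one coefficient (“knob”) per function. The sketch assumes idealized fall times t = √(2h/g) with a made-up floor height of 7 m, and uses NumPy’s `np.linalg.lstsq`. It reports the squared error of each model rather than claiming which one does best on the article’s data.

```python
import numpy as np

G, FLOOR_HEIGHT = 9.81, 7.0            # illustrative assumptions: m/s^2 and metres per floor
x = np.arange(1, 9, dtype=float)       # floor numbers
y = np.sqrt(2 * FLOOR_HEIGHT * x / G)  # idealized fall times

# Each model is a fixed structure (a list of basis functions); fitting picks one knob per function.
MODELS = {
    "a + b x": [np.ones_like, lambda v: v],
    "a + b x + c x^2": [np.ones_like, lambda v: v, lambda v: v ** 2],
    "a + b/x + c sin(x)": [np.ones_like, lambda v: 1 / v, np.sin],
}

def fit(basis, xs, ys):
    """Least-squares coefficients for the given basis, plus the resulting squared error."""
    A = np.column_stack([f(xs) for f in basis])  # design matrix, one column per knob
    coeffs, *_ = np.linalg.lstsq(A, ys, rcond=None)
    squared_error = float(np.sum((A @ coeffs - ys) ** 2))
    return coeffs, squared_error

for name, basis in MODELS.items():
    coeffs, err = fit(basis, x, y)
    print(f"{name:<20} knobs={np.round(coeffs, 3)}  squared error={err:.2e}")
```

The straight-line model is the quadratic model with c fixed at 0, so the quadratic fit can never have a larger squared error. A structure like a + b/x + c sin(x) can only do as well as its shape allows, however its knobs are set.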

But the remarkable thing is that the underlying structure of ChatGPT, with “just” that many parameters, is sufficient to make a model that computes next-word probabilities “well enough” to give us reasonable essay-length pieces of text.

The example we gave above involves making a model for numerical data that essentially comes from simple physics, where we’ve known for several centuries that “simple mathematics applies”. But for ChatGPT we have to make a model of human-language text of the kind produced by a human brain. And for something like that we don’t (at least yet) have anything like “simple mathematics”. So what might a model of it be like?

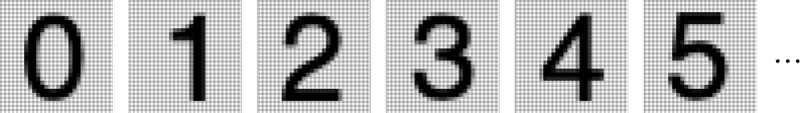

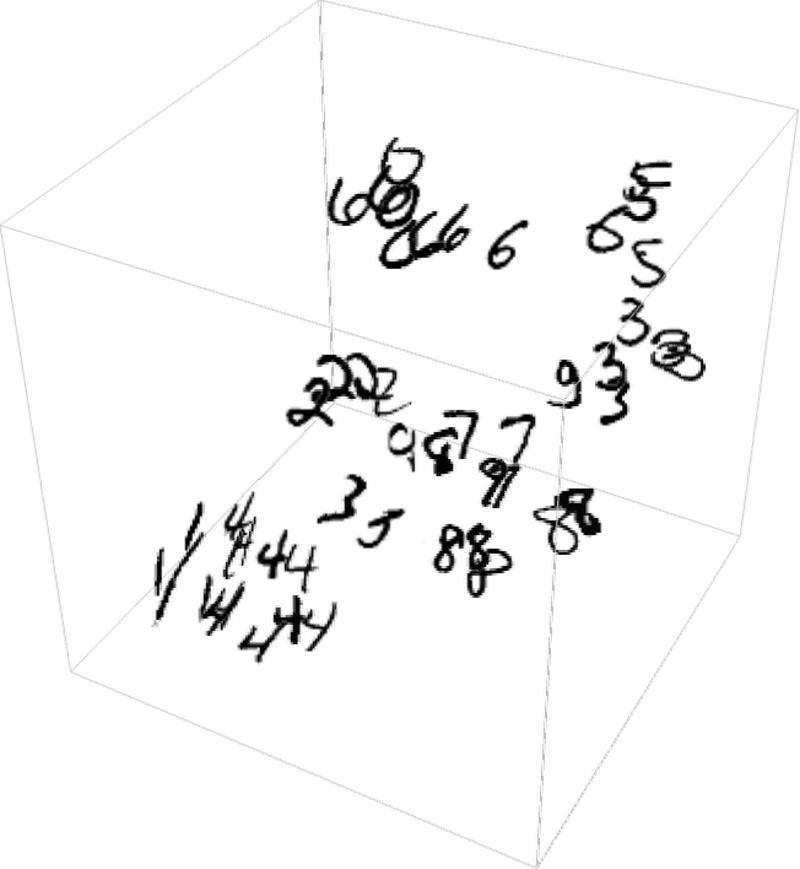

Before we talk about language, let’s talk about another human-like task: recognizing images. And as a simple example of this, let’s consider images of digits (and, yes, this is a classic machine learning example):

One thing we could do is get a bunch of sample images for each digit:

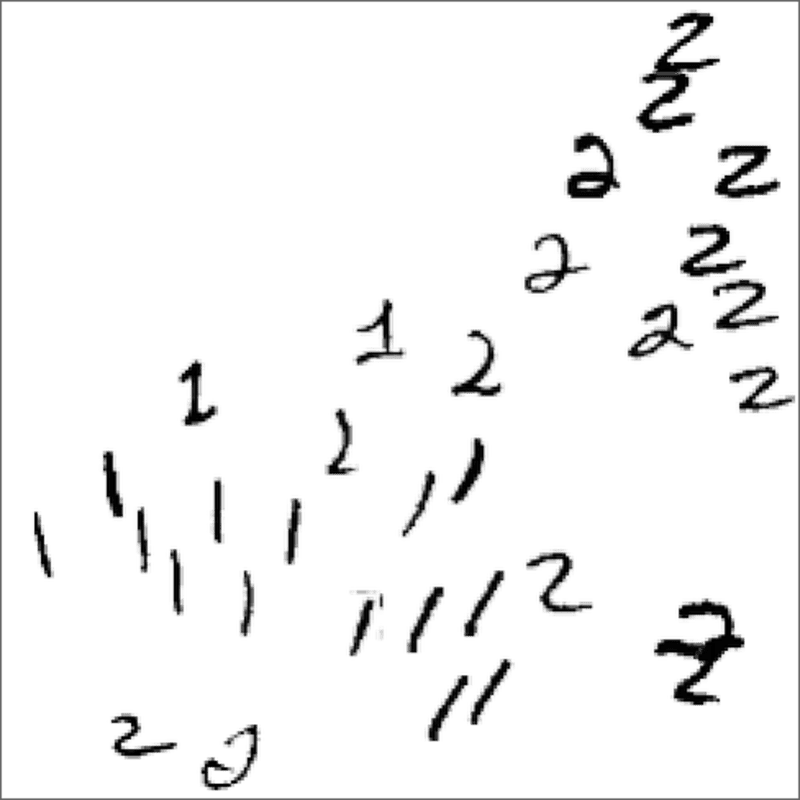

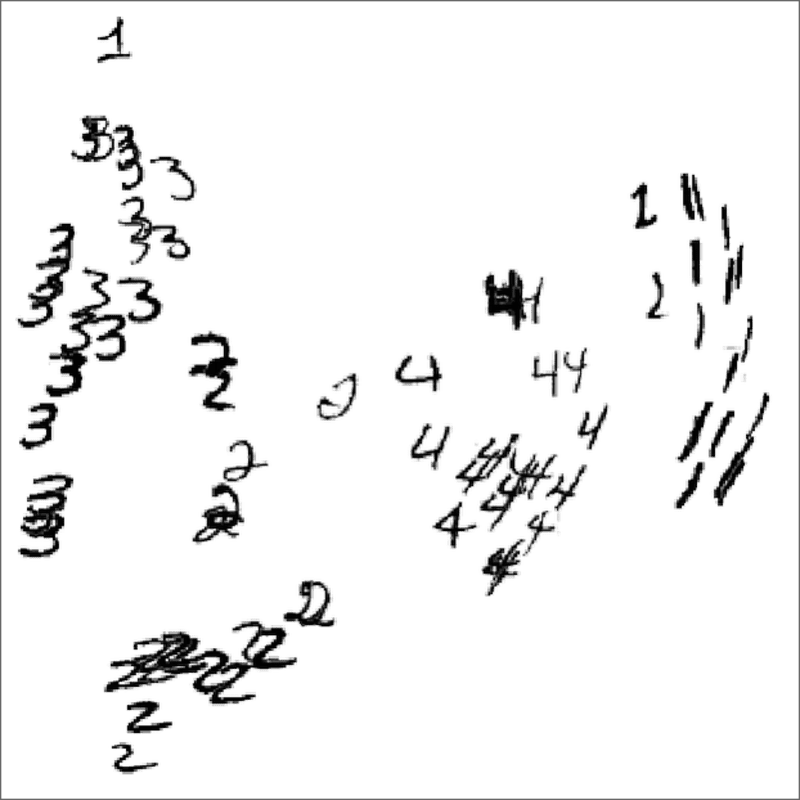

Then to find out if an image we’re given as input corresponds to a particular digit we could just do an explicit pixel-by-pixel comparison with the samples we have. But as humans we certainly seem to do something better, because we can still recognize digits even when they’re, for example, handwritten, and have all sorts of modifications and distortions:

When we made a model for our numerical data above, we were able to take a numerical value x that we were given, and just compute a + b x for particular a and b. So if we treat the gray-level value of each pixel here as some variable xᵢ, is there some function of all those variables that, when evaluated, tells us what digit the image is of? It turns out that it’s possible to construct such a function. Not surprisingly, it’s not particularly simple, though. And a typical example might involve perhaps half a million mathematical operations.

But the end result is that if we feed the collection of pixel values for an image into this function, out will come the number specifying which digit we have an image of. Later, we’ll talk about how such a function can be constructed, and the idea of neural nets. But for now let’s treat the function as a black box, where we feed in images of, say, handwritten digits (as arrays of pixel values) and we get out the numbers these correspond to:

But what’s really going on here? Let’s say we progressively blur a digit. For a little while our function still “recognizes” it, here as a “2”. But soon it “loses it”, and starts giving the “wrong” result:

But why do we say it’s the “wrong” result? In this case, we know we got all the images by blurring a “2”. But if our goal is to produce a model of what humans can do in recognizing images, the real question to ask is what a human would have done if presented with one of those blurred images, without knowing where it came from.

And we have a “good model” if the results we get from our function typically agree with what a human would say. And the nontrivial scientific fact is that for an image-recognition task like this we now basically know how to construct functions that do this.

Can we “mathematically prove” that they work? Well, no. Because to do that we’d have to have a mathematical theory of what we humans are doing. Take the “2” image and change a few pixels. We might imagine that with only a few pixels “out of place” we should still consider the image a “2”. But how far should that go? It’s a question of human visual perception. And, yes, the answer would no doubt be different for bees or octopuses, and potentially utterly different for putative aliens.

OK, so how do our typical models for tasks like image recognition actually work? The most popular (and successful) current approach uses neural nets. Invented in the 1940s, in a form remarkably close to their use today, neural nets can be thought of as simple idealizations of how brains seem to work.

In human brains there are about 100 billion neurons (nerve cells), each capable of producing an electrical pulse up to perhaps a thousand times a second. The neurons are connected in a complicated net, with each neuron having tree-like branches allowing it to pass electrical signals to perhaps thousands of other neurons. And in a rough approximation, whether any given neuron produces an electrical pulse at a given moment depends on what pulses it’s received from other neurons, with different connections contributing with different “weights”.

When we “see an image” what’s happening is that when photons of light from the image fall on (“photoreceptor”) cells at the back of our eyes they produce electrical signals in nerve cells. These nerve cells are connected to other nerve cells, and eventually the signals go through a whole sequence of layers of neurons. And it’s in this process that we “recognize” the image, eventually “forming the thought” that we’re “seeing a 2” (and maybe in the end doing something like saying the word “two” out loud).

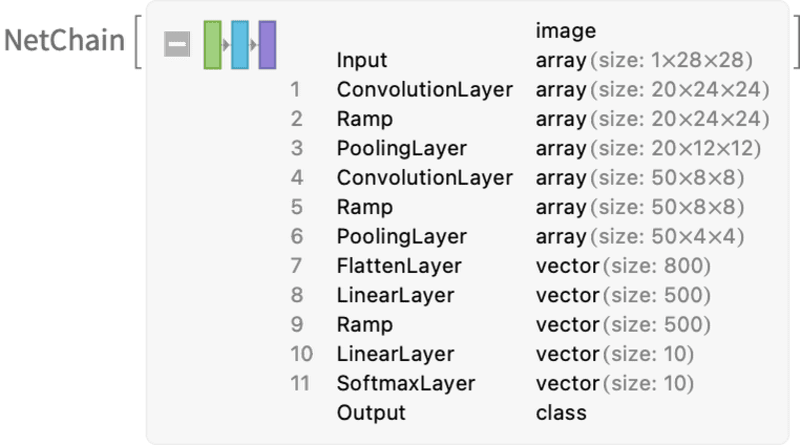

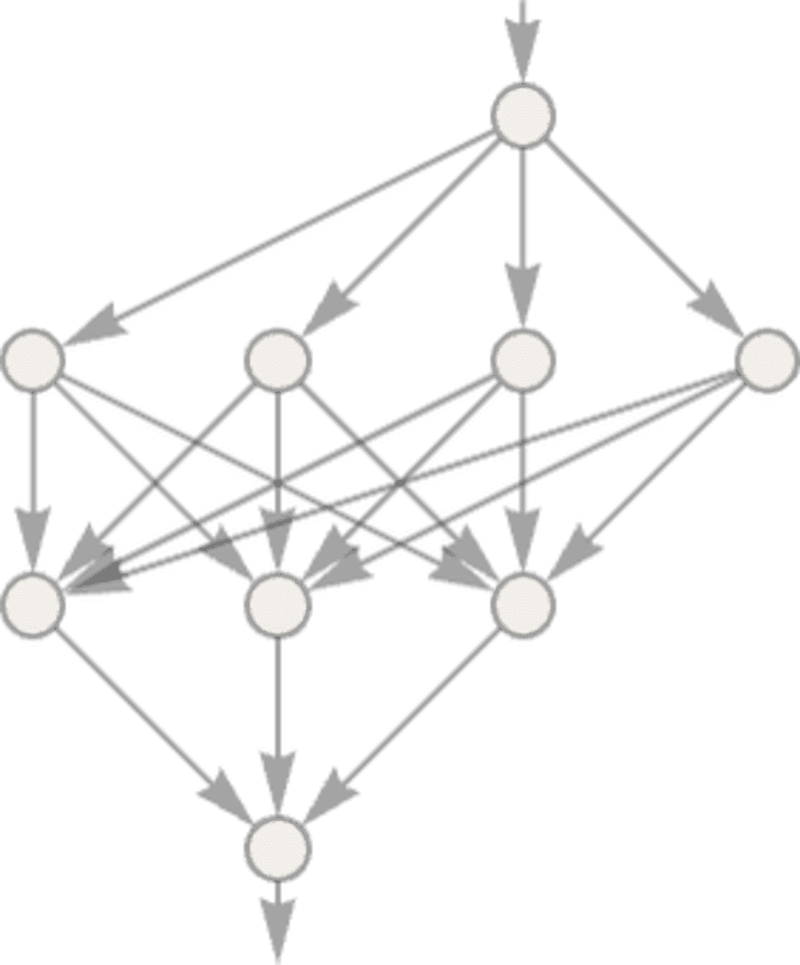

The “black-box” function from the previous section is a “mathematicized” version of such a neural net. It happens to have 11 layers (though only 4 “core layers”):

There’s nothing particularly “theoretically derived” about this neural net; it’s just something that, back in 1998, was constructed as a piece of engineering, and found to work. (Of course, that’s not much different from how we might describe our brains as having been produced through the process of biological evolution.)

OK, but how does a neural net like this “recognize things”? The key is the notion of attractors. Imagine we’ve got handwritten images of 1’s and 2’s:

We somehow want all the 1’s to “be attracted to one place”, and all the 2’s to “be attracted to another place”. Or, put a different way, if an image is somehow “closer to being a 1” than to being a 2, we want it to end up in the “1 place” and vice versa.

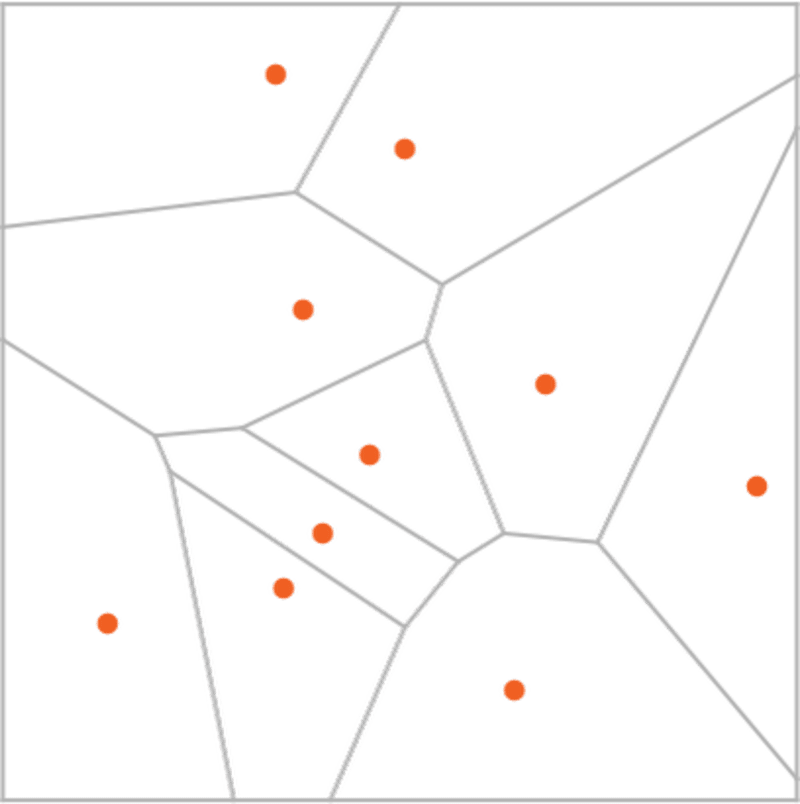

As a straightforward analogy, let’s say we have certain positions in the plane, indicated by dots (in a real-life setting they might be positions of coffee shops). Then we might imagine that starting from any point on the plane we’d always want to end up at the closest dot (i.e. we’d always go to the closest coffee shop). We can represent this by dividing the plane into regions (“attractor basins”) separated by idealized “watersheds”:

We can think of this as implementing a kind of “recognition task” in which we’re not doing something like identifying what digit a given image “looks most like”, but rather we’re just, quite directly, seeing what dot a given point is closest to. (The “Voronoi diagram” setup we’re showing here separates points in 2D Euclidean space; the digit recognition task can be thought of as doing something very similar, but in a 784-dimensional space formed from the gray levels of all the pixels in each image.)
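The “go to the nearest dot” rule takes only a few lines of code, and the same function works unchanged in 2 dimensions or in 784. Here is a minimal sketch; the dot positions are arbitrary assumptions, and `math.dist` requires Python 3.8 or later.

```python
import math

def nearest_dot(point, dots):
    """Index of the dot closest (in Euclidean distance) to point, i.e. the basin it falls in."""
    return min(range(len(dots)), key=lambda i: math.dist(point, dots[i]))

dots = [(0, 0), (4, 0), (0, 4)]  # arbitrary "coffee shop" positions
print([nearest_dot(p, dots) for p in [(1, 1), (3.5, 0.2), (0.1, 3)]])  # [0, 1, 2]
```

This computes the exact answer directly. The neural nets discussed next only approximate a function like this one.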

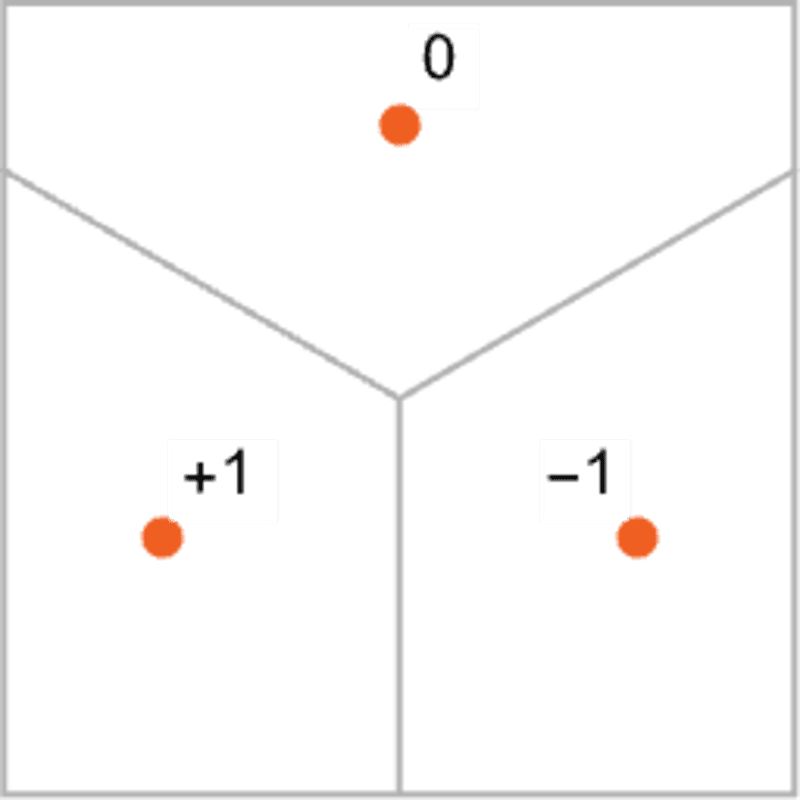

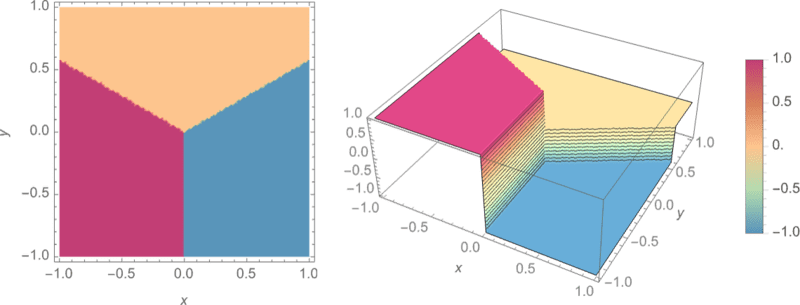

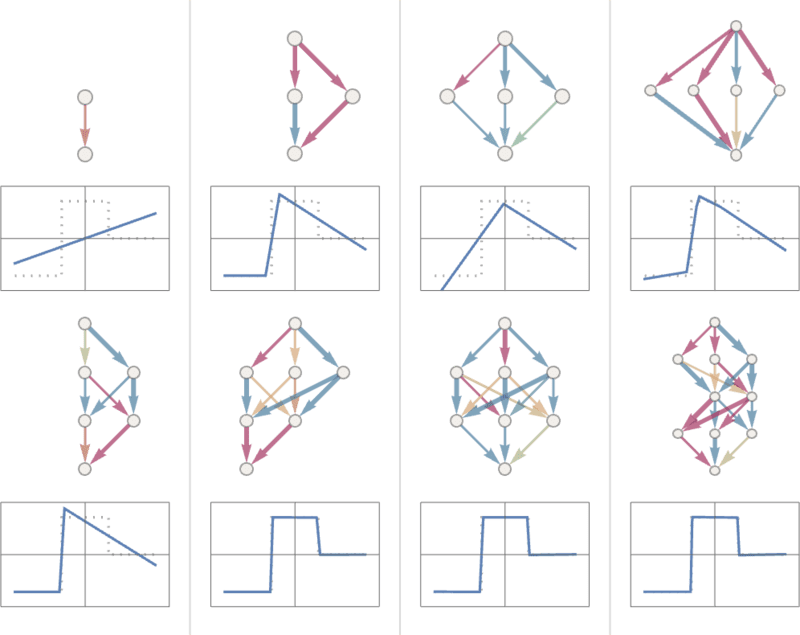

So how do we make a neural net “do a recognition task”? Let’s consider this very simple case:

Our goal is to take an “input” corresponding to a position {x,y}, and then to “recognize” it as whichever of the three points it’s closest to. Or, in other words, we want the neural net to compute a function of {x,y} like:

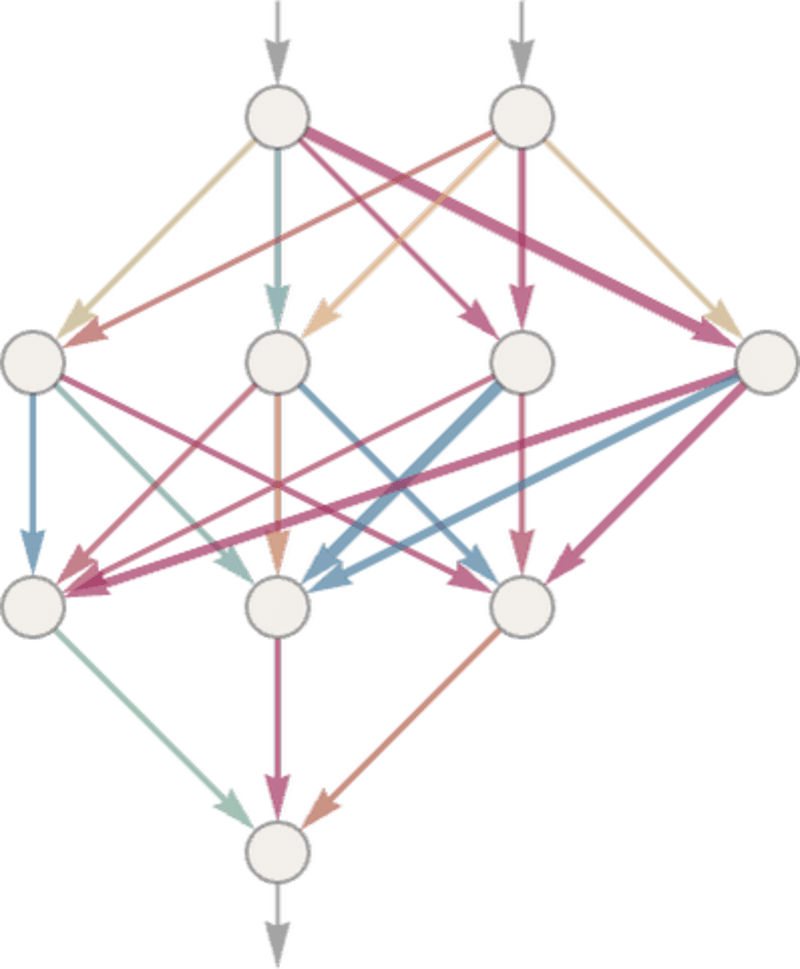

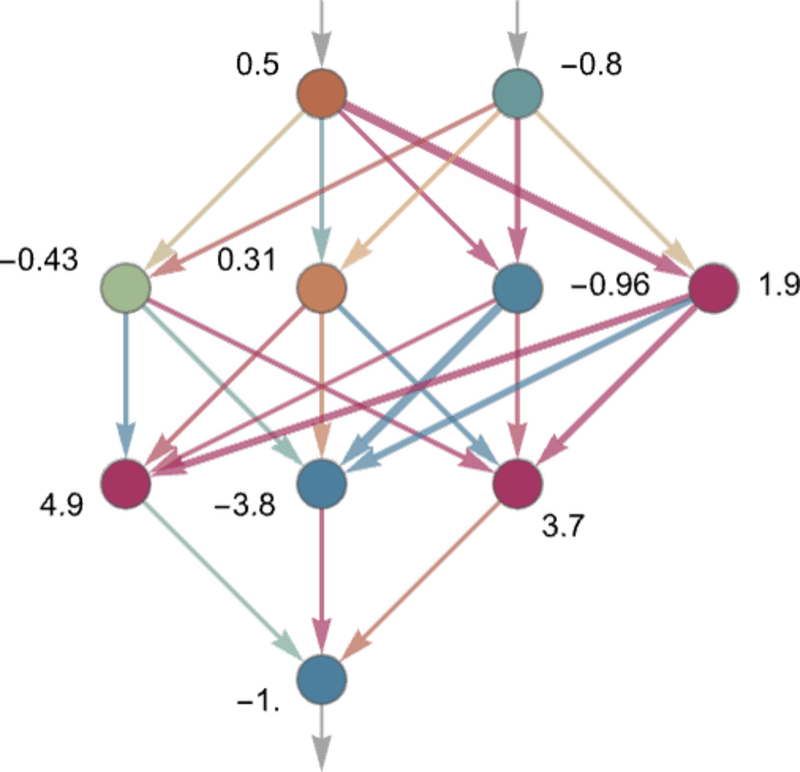

So how do we do this with a neural net? Ultimately a neural net is a connected collection of idealized “neurons”, usually arranged in layers, with a simple example being:

Each “neuron” is effectively set up to evaluate a simple numerical function. And to “use” the network, we simply feed numbers (like our coordinates x and y) in at the top, then have neurons on each layer “evaluate their functions” and feed the results forward through the network, eventually producing the final result at the bottom:

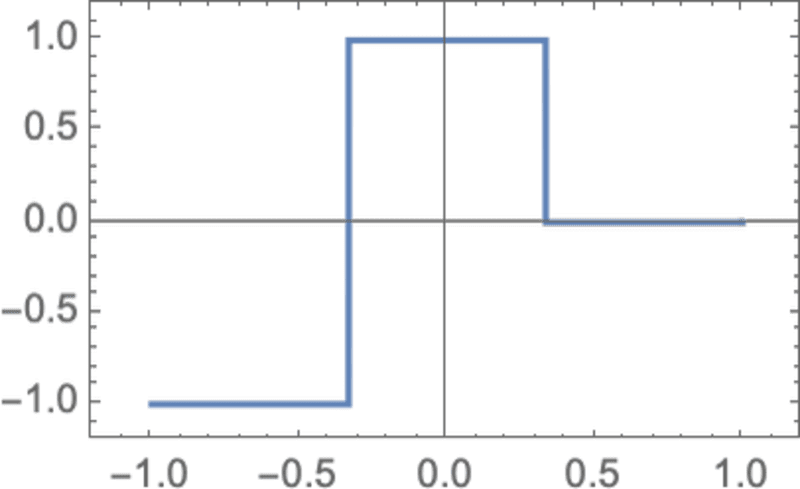

In the traditional (biologically inspired) setup each neuron effectively has a certain set of “incoming connections” from the neurons on the previous layer, with each connection being assigned a certain “weight” (which can be a positive or negative number). The value of a given neuron is determined by multiplying the values of “previous neurons” by their corresponding weights, then adding these up and adding a constant, and finally applying a “thresholding” (or “activation”) function. In mathematical terms, if a neuron has inputs x = {x₁, x₂ …} then we compute f[w . x + b], where the weights w and constant b are generally chosen differently for each neuron in the network; the function f is usually the same.

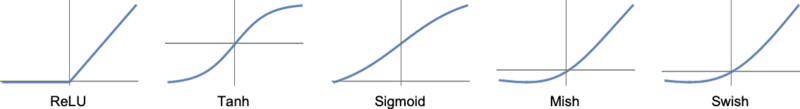

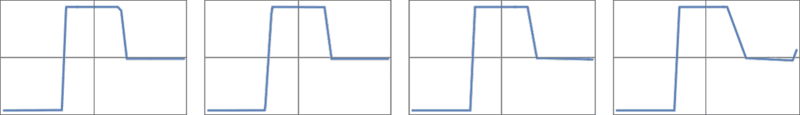

Computing w . x + b is just a matter of matrix multiplication and addition. The “activation function” f introduces nonlinearity (and ultimately is what leads to nontrivial behavior). Various activation functions commonly get used; here we’ll just use Ramp (or ReLU):
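Here is a minimal NumPy sketch of this computation. A whole layer evaluates f[w . x + b] for every neuron at once, as a matrix product plus a bias vector followed by Ramp (ReLU). A network is just such layers applied in sequence. The weights are hand-picked for illustration, not trained. Leaving the final layer without an activation is an assumption of this sketch, not something stated in the text.

```python
import numpy as np

def ramp(z):
    """Ramp (ReLU): max(0, z), elementwise."""
    return np.maximum(z, 0.0)

def layer(x, W, b, f=ramp):
    """Every neuron in the layer at once: neuron j outputs f(W[j] . x + b[j])."""
    return f(W @ x + b)

def forward(x, params):
    """Feed the inputs through each (W, b) layer in turn.

    Assumption of this sketch: the final layer is left linear (no activation).
    """
    for W, b in params[:-1]:
        x = layer(x, W, b)
    W_out, b_out = params[-1]
    return W_out @ x + b_out

# Hand-picked (not trained) weights for a 2 -> 2 -> 1 network.
params = [
    (np.array([[1.0, -1.0], [-1.0, 1.0]]), np.array([0.0, 0.0])),
    (np.array([[1.0, 1.0]]), np.array([0.5])),
]
print(forward(np.array([3.0, 1.0]), params))  # [2.5]
```

Written out by hand, this tiny net computes Ramp(x − y) + Ramp(y − x) + 0.5, which is |x − y| + 0.5. That shows the point made below: every net, however large, is just some overall mathematical function of its inputs.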

For each task we want the neural net to perform (or, equivalently, for each overall function we want it to evaluate) we’ll have different choices of weights. (And, as we’ll discuss later, these weights are normally determined by “training” the neural net using machine learning from examples of the outputs we want.)

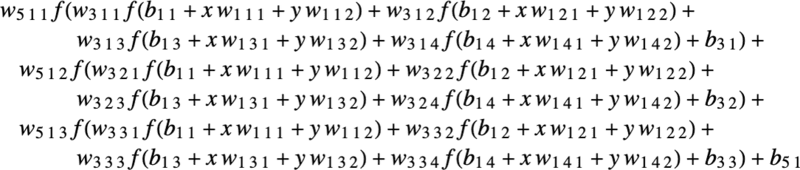

Ultimately, every neural net just corresponds to some overall mathematical function, though it may be messy to write out. For the example above, it would be:

The neural net of ChatGPT also just corresponds to a mathematical function like this, but effectively with billions of terms.

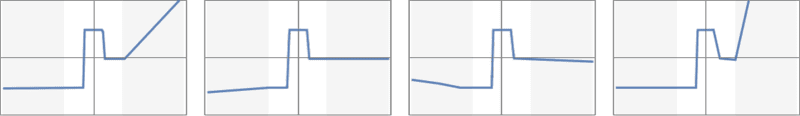

But let’s go back to individual neurons. Here are some examples of the functions a neuron with two inputs (representing coordinates x and y) can compute with various choices of weights and constants (and Ramp as activation function):

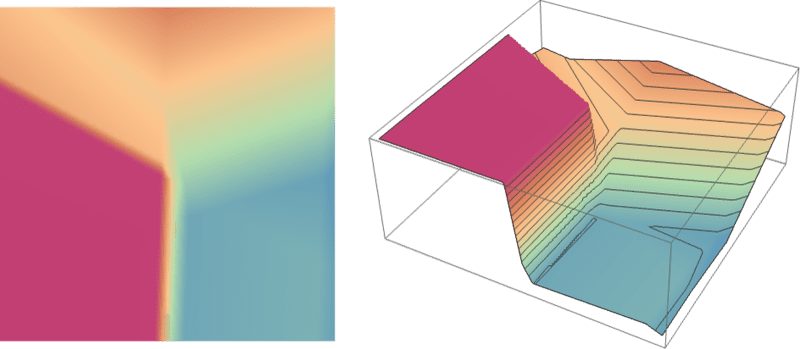

But what about the larger network from above? Well, here’s what it computes:

It’s not quite “right”, but it’s close to the “nearest point” function we showed above.

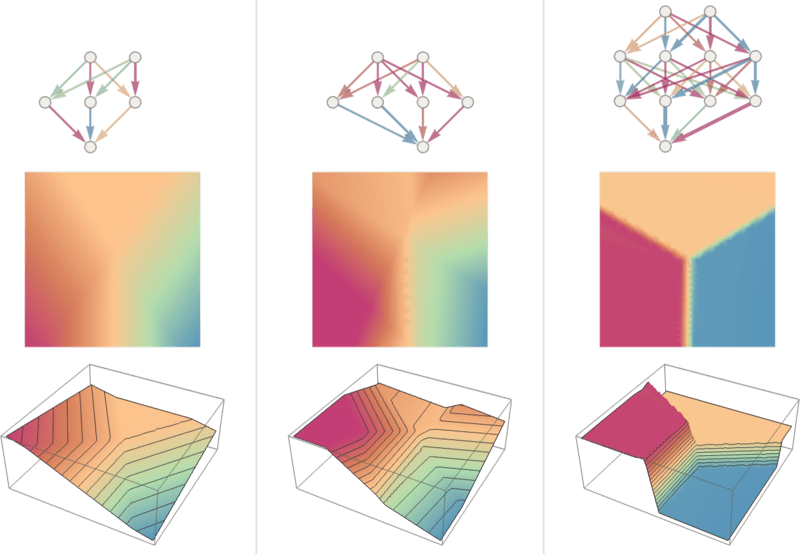

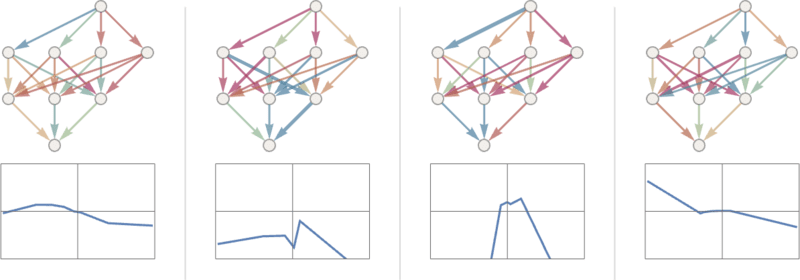

Let’s see what happens with some other neural nets. In each case, as we’ll explain later, we’re using machine learning to find the best choice of weights. Then we’re showing here what the neural net with those weights computes:

Bigger networks generally do better at approximating the function we’re aiming for. And in the “middle of each attractor basin” we typically get exactly the answer we want. But at the boundaries, where the neural net “has a hard time making up its mind”, things can be messier.

With this simple mathematical-style “recognition task” it’s clear what the “right answer” is. But in the problem of recognizing handwritten digits, it’s not so clear. What if someone wrote a “2” so badly it looked like a “7”, etc.? Still, we can ask how a neural net distinguishes digits, and this gives an indication:

Can we say “mathematically” how the network makes its distinctions? Not really. It’s just “doing what the neural net does”. But it turns out that that normally seems to agree fairly well with the distinctions we humans make.

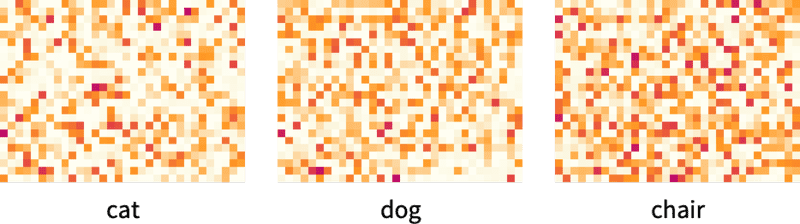

Let’s take a more elaborate example. Let’s say we have images of cats and dogs. And we have a neural net that’s been trained to distinguish them. Here’s what it might do on some examples:

Now it’s even less clear what the “right answer” is. What about a dog dressed in a cat suit? Etc. Whatever input it’s given, the neural net will generate an answer, and in a way reasonably consistent with how humans might. As I’ve said above, that’s not a fact we can “derive from first principles”. It’s just something that’s empirically been found to be true, at least in certain domains. But it’s a key reason why neural nets are useful: that they somehow capture a “human-like” way of doing things.

Show yourself a picture of a cat, and ask “Why is that a cat?”. Maybe you’d start saying “Well, I see its pointy ears, etc.” But it’s not very easy to explain how you recognized the image as a cat. It’s just that somehow your brain figured that out. But for a brain there’s no way (at least yet) to “go inside” and see how it figured it out. What about for an (artificial) neural net? Well, it’s straightforward to see what each “neuron” does when you show a picture of a cat. But even to get a basic visualization is usually very difficult.

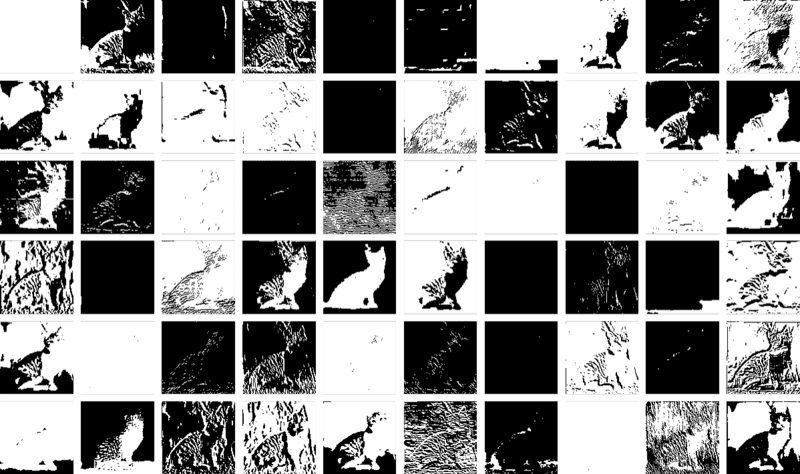

In the final net that we used for the “nearest point” problem above there are 17 neurons. In the net for recognizing handwritten digits there are 2190. And in the net we’re using to recognize cats and dogs there are 60,650. Normally it would be pretty difficult to visualize what amounts to 60,650-dimensional space. But because this is a network set up to deal with images, many of its layers of neurons are organized into arrays, like the arrays of pixels it’s looking at.

And if we take a typical cat picture

then we can represent the states of neurons at the first layer by a collection of derived images, many of which we can readily interpret as being things like “the cat without its background”, or “the outline of the cat”:

By the 10th layer it’s harder to interpret what’s going on:

But in general we might say that the neural net is “picking out certain features” (maybe pointy ears are among them), and using these to determine what the image is of. But are those features ones for which we have names, like “pointy ears”? Mostly not.

Are our brains using similar features? Mostly we don’t know. But it’s notable that the first few layers of a neural net like the one we’re showing here seem to pick out aspects of images (like edges of objects) that seem to be similar to ones we know are picked out by the first level of visual processing in brains.

But let’s say we want a “theory of cat recognition” in neural nets. We can say: “Look, this particular net does it”, and immediately that gives us some sense of “how hard a problem” it is (and, for example, how many neurons or layers might be needed). But at least as of now we don’t have a way to “give a narrative description” of what the network is doing. And maybe that’s because it truly is computationally irreducible, and there’s no general way to find what it does except by explicitly tracing each step. Or maybe it’s just that we haven’t “figured out the science”, and identified the “natural laws” that allow us to summarize what’s going on.

We’ll encounter the same kinds of issues when we talk about generating language with ChatGPT. And again it’s not clear whether there are ways to “summarize what it’s doing”. But the richness and detail of language (and our experience with it) may allow us to get further than with images.

We’ve been talking so far about neural nets that “already know” how to do particular tasks. But what makes neural nets so useful (presumably also in brains) is that not only can they in principle do all sorts of tasks, but they can be incrementally “trained from examples” to do those tasks.

When we make a neural net to distinguish cats from dogs we don’t effectively have to write a program that (say) explicitly finds whiskers; instead we just show lots of examples of what’s a cat and what’s a dog, and then have the network “machine learn” from these how to distinguish them.

And the point is that the trained network “generalizes” from the particular examples it’s shown. Just as we’ve seen above, it isn’t simply that the network recognizes the particular pixel pattern of an example cat image it was shown; rather it’s that the neural net somehow manages to distinguish images on the basis of what we consider to be some kind of “general catness”.

So how does neural net training actually work? Essentially what we’re always trying to do is to find weights that make the neural net successfully reproduce the examples we’ve given. And then we’re relying on the neural net to “interpolate” (or “generalize”) “between” these examples in a “reasonable” way.

Let’s look at a problem even simpler than the nearest-point one above. Let’s just try to get a neural net to learn the function:

For this task, we’ll need a network that has just one input and one output, like:

But what weights, etc. should we be using? With every possible set of weights the neural net will compute some function. And, for example, here’s what it does with a few randomly chosen sets of weights:

And, yes, we can plainly see that in none of these cases does it get even close to reproducing the function we want. So how do we find weights that will reproduce the function?

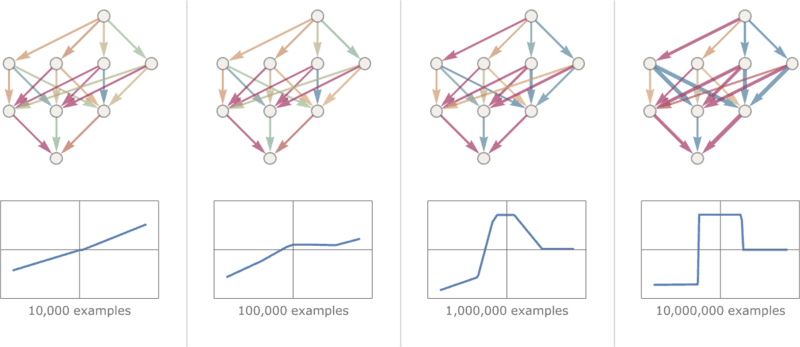

The basic idea is to supply lots of “input → output” examples to “learn from”, and then to try to find weights that will reproduce these examples. Here’s the result of doing that with progressively more examples:

At each stage in this “training” the weights in the network are progressively adjusted, and we see that eventually we get a network that successfully reproduces the function we want. So how do we adjust the weights? The basic idea is at each stage to see “how far away we are” from getting the function we want, and then to update the weights in such a way as to get closer.

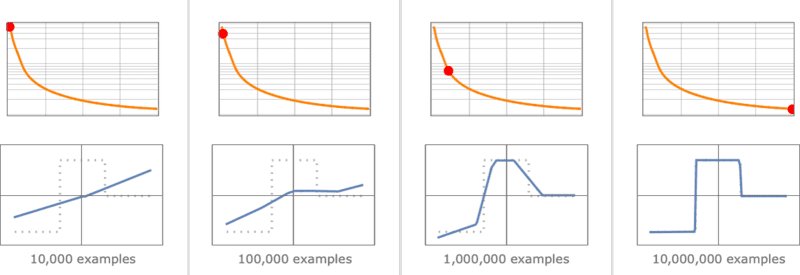

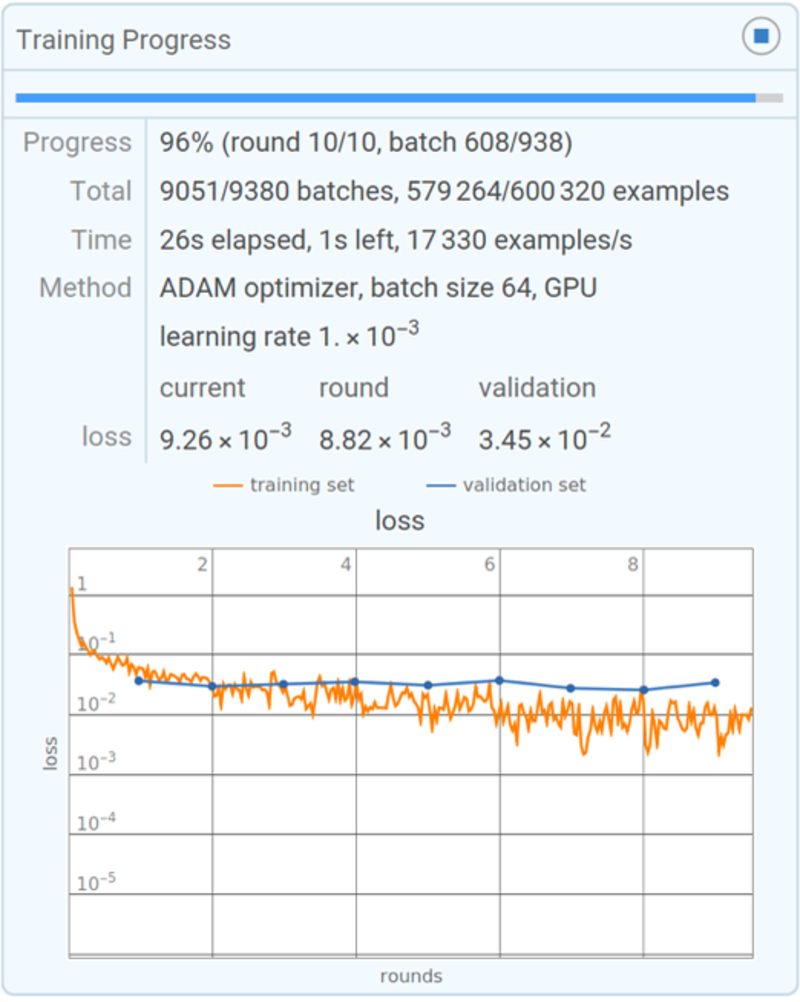

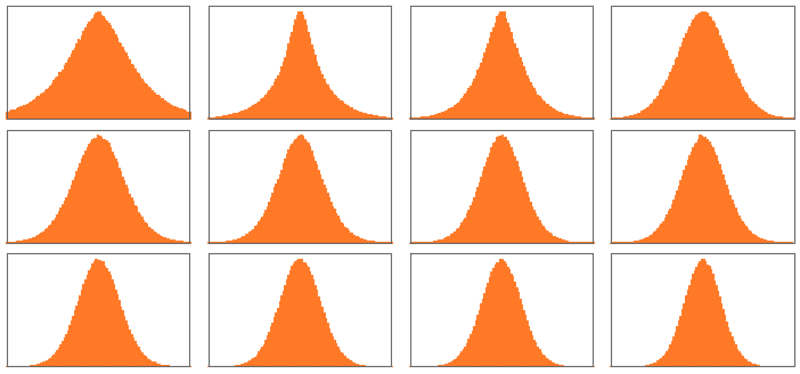

To find out “how far away we are” we compute what’s usually called a “loss function” (or sometimes “cost function”). Here we’re using a simple (L2) loss function that’s just the sum of the squares of the differences between the values we get and the true values. And what we see is that as our training process progresses, the loss function progressively decreases (following a certain “learning curve” that’s different for different tasks) until we reach a point where the network (at least to a good approximation) successfully reproduces the function we want:
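As a minimal sketch (in Python, used for all the illustrative snippets here), the L2 loss just described is a single sum over the examples. The function name `l2_loss` and the sample values are hypothetical.

```python
def l2_loss(predicted, target):
    """Sum of squared differences between the net's outputs and the true values."""
    if len(predicted) != len(target):
        raise ValueError("predicted and target must have the same length")
    return sum((p - t) ** 2 for p, t in zip(predicted, target))


# A perfect fit gives zero loss; each mismatch adds its squared error.
print(l2_loss([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))  # 0.0
print(l2_loss([1.0, 2.5, 2.0], [1.0, 2.0, 3.0]))  # 0.25 + 1.0 = 1.25
```

Squaring makes every error count as positive and penalizes large misses more than small ones, which is one reason this loss is a common default.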

Alright, so the last essential piece to explain is how the weights are adjusted to reduce the loss function. As we’ve said, the loss function gives us a “distance” between the values we’ve got and the true values. But the “values we’ve got” are determined at each stage by the current version of the neural net, and by the weights in it. Now imagine that the weights are variables, say wᵢ. We want to find out how to adjust the values of these variables to minimize the loss that depends on them.

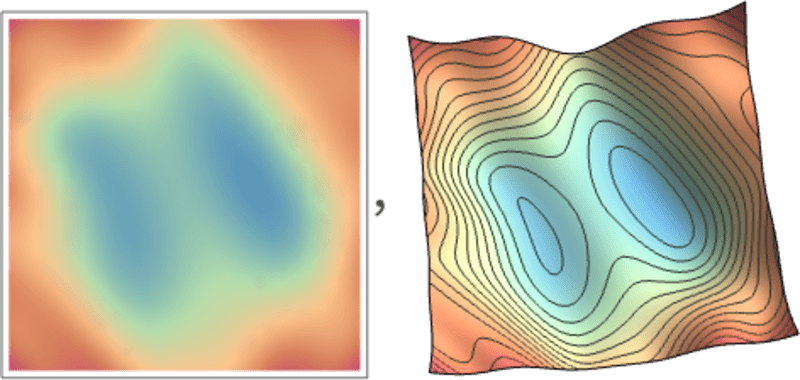

For example, imagine (in an incredible simplification of typical neural nets used in practice) that we have just two weights w₁ and w₂. Then we might have a loss that as a function of w₁ and w₂ looks like this:

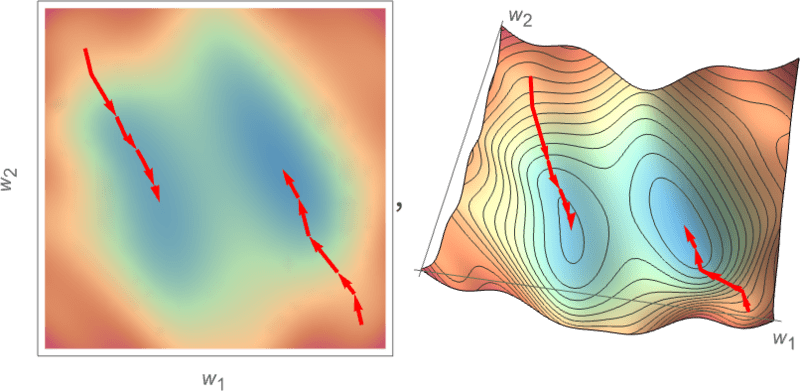

Numerical analysis provides a variety of techniques for finding the minimum in cases like this. But a typical approach is just to progressively follow the path of steepest descent from whatever previous w₁, w₂ we had:

Like water flowing down a mountain, all that’s guaranteed is that this procedure will end up at some local minimum of the surface (“a mountain lake”); it might well not reach the ultimate global minimum.
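To make the “steepest descent” picture concrete, here is a small Python sketch on a made-up two-weight loss surface with one shallow and one deeper minimum. The surface, the step size and the starting points are our own assumptions, chosen only so that two runs end up in different “lakes”.

```python
def loss(w1, w2):
    """Hypothetical two-weight loss surface with a shallow and a deep minimum."""
    return (w1 ** 2 - 1) ** 2 + 0.3 * w1 + w2 ** 2


def gradient(w1, w2):
    """Partial derivatives of the loss with respect to w1 and w2."""
    return 4 * w1 * (w1 ** 2 - 1) + 0.3, 2 * w2


def descend(w1, w2, step=0.05, iterations=500):
    """Repeatedly take a small step in the direction of steepest descent."""
    for _ in range(iterations):
        g1, g2 = gradient(w1, w2)
        w1, w2 = w1 - step * g1, w2 - step * g2
    return w1, w2


for start in [(0.5, 1.0), (-0.5, 1.0)]:
    w1, w2 = descend(*start)
    print(f"start={start} -> w=({w1:.3f}, {w2:.3f}), loss={loss(w1, w2):.3f}")
```

Started at w₁ = 0.5, the descent settles in the shallower minimum near w₁ ≈ 0.96; started at w₁ = −0.5, it reaches the deeper one near w₁ ≈ −1.04. Nothing in the procedure itself tells the first run that a better lake exists elsewhere.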

It’s not obvious that it would be feasible to find the path of steepest descent on the “weight landscape”. But calculus comes to the rescue. As we mentioned above, one can always think of a neural net as computing a mathematical function that depends on its inputs and its weights. Now consider differentiating with respect to these weights. It turns out that the chain rule of calculus in effect lets us “unravel” the operations done by successive layers in the neural net. And the result is that we can, at least in some local approximation, “invert” the operation of the neural net, and progressively find weights that minimize the loss associated with the output.
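Here is a rough Python sketch of that idea for a net with one input, one output and a single hidden layer of `tanh` units. The chain rule is applied by hand, layer by layer, to get the gradient of the L2 loss with respect to every weight, and each step moves all the weights a little way downhill. The layer size, learning rate and target function x² are hypothetical choices; real frameworks compute these derivatives automatically rather than with hand-written loops.

```python
import math
import random


def forward(params, x):
    """One input -> hidden layer of tanh units -> one linear output."""
    w_in, b_in, w_out, b_out = params
    hidden = [math.tanh(w * x + b) for w, b in zip(w_in, b_in)]
    return sum(v * h for v, h in zip(w_out, hidden)) + b_out, hidden


def train_step(params, xs, ys, rate):
    """One gradient-descent step on the L2 loss, with gradients from the chain rule."""
    w_in, b_in, w_out, b_out = params
    n = len(w_in)
    g_w_in, g_b_in, g_w_out, g_b_out = [0.0] * n, [0.0] * n, [0.0] * n, 0.0
    loss = 0.0
    for x, target in zip(xs, ys):
        y, hidden = forward(params, x)
        loss += (y - target) ** 2
        d_y = 2 * (y - target)                         # d(loss)/dy
        g_b_out += d_y
        for i in range(n):
            g_w_out[i] += d_y * hidden[i]              # dy/dw_out[i] = hidden[i]
            d_pre = d_y * w_out[i] * (1 - hidden[i] ** 2)  # back through tanh
            g_w_in[i] += d_pre * x
            g_b_in[i] += d_pre
    m = len(xs)                                        # average over the examples
    updated = (
        [w - rate * g / m for w, g in zip(w_in, g_w_in)],
        [b - rate * g / m for b, g in zip(b_in, g_b_in)],
        [w - rate * g / m for w, g in zip(w_out, g_w_out)],
        b_out - rate * g_b_out / m,
    )
    return updated, loss


rng = random.Random(0)
units = 8
params = (
    [rng.uniform(-1, 1) for _ in range(units)],
    [rng.uniform(-1, 1) for _ in range(units)],
    [rng.uniform(-1, 1) for _ in range(units)],
    0.0,
)
xs = [i / 10 for i in range(-10, 11)]
ys = [x * x for x in xs]                               # hypothetical target function
losses = []
for _ in range(2000):
    params, loss = train_step(params, xs, ys, rate=0.05)
    losses.append(loss)
print(f"loss before training: {losses[0]:.3f}, after: {losses[-1]:.4f}")
```

Recording `losses` at each step gives exactly the kind of “learning curve” described earlier.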

The picture above shows the kind of minimization we might need to do in the unrealistically simple case of just 2 weights. But it turns out that even with many more weights (ChatGPT uses 175 billion) it’s still possible to do the minimization, at least to some level of approximation. And in fact the big breakthrough in “deep learning” that occurred around 2011 was associated with the discovery that in some sense it can be easier to do (at least approximate) minimization when there are lots of weights involved than when there are fairly few.

In other words, somewhat counterintuitively, it can be easier to solve more complicated problems with neural nets than simpler ones. And the rough reason for this seems to be that when one has a lot of “weight variables” one has a high-dimensional space with “lots of different directions” that can lead one to the minimum, whereas with fewer variables it’s easier to end up getting stuck in a local minimum (“mountain lake”) from which there’s no “direction to get out”.

It’s worth pointing out that in typical cases there are many different collections of weights that will all give neural nets with pretty much the same performance. And usually in practical neural net training there are lots of random choices made, which lead to “different-but-equivalent solutions”, like these:

But each such “different solution” will have at least slightly different behavior. And if we ask, say, for an “extrapolation” outside the region where we gave training examples, we can get dramatically different results:

But which of these is “right”? There’s really no way to say. They’re all “consistent with the observed data”. But they all correspond to different “innate” ways to “think about” what to do “outside the box”. And some may seem “more reasonable” to us humans than others.

Particularly over the past decade, there have been many advances in the art of training neural nets. And, yes, it is basically an art. Sometimes, especially in retrospect, one can see at least a glimmer of a “scientific explanation” for something that’s being done. But mostly things have been discovered by trial and error, adding ideas and tricks that have progressively built up a significant lore about how to work with neural nets.

There are several key parts. First, there’s the matter of what architecture of neural net one should use for a particular task. Then there’s the critical issue of how one’s going to get the data on which to train the neural net. And increasingly one isn’t dealing with training a net from scratch: instead a new net can either directly incorporate another already-trained net, or at least can use that net to generate more training examples for itself.

One might have thought that for every particular kind of task one would need a different architecture of neural net. But what’s been found is that the same architecture often seems to work even for apparently quite different tasks. At some level this reminds one of the idea of universal computation (and my Principle of Computational Equivalence), but, as I’ll discuss later, I think it’s more a reflection of the fact that the tasks we’re typically trying to get neural nets to do are “human-like” ones, and neural nets can capture quite general “human-like processes”.

In earlier days of neural nets, there tended to be the idea that one should “make the neural net do as little as possible”. For example, in converting speech to text it was thought that one should first analyze the audio of the speech, break it into phonemes, etc. But what was found is that, at least for “human-like tasks”, it’s usually better just to try to train the neural net on the “end-to-end problem”, letting it “discover” the necessary intermediate features, encodings, etc. for itself.

There was also the idea that one should introduce complicated individual components into the neural net, to let it in effect “explicitly implement particular algorithmic ideas”. But once again, this has mostly turned out not to be worthwhile; instead, it’s better just to deal with very simple components and let them “organize themselves” (albeit usually in ways we can’t understand) to achieve (presumably) the equivalent of those algorithmic ideas.

That’s not to say that there are no “structuring ideas” that are relevant for neural nets. Thus, for example, having 2D arrays of neurons with local connections seems at least very useful in the early stages of processing images. And having patterns of connectivity that concentrate on “looking back in sequences” seems useful (as we’ll see later) in dealing with things like human language, for example in ChatGPT.

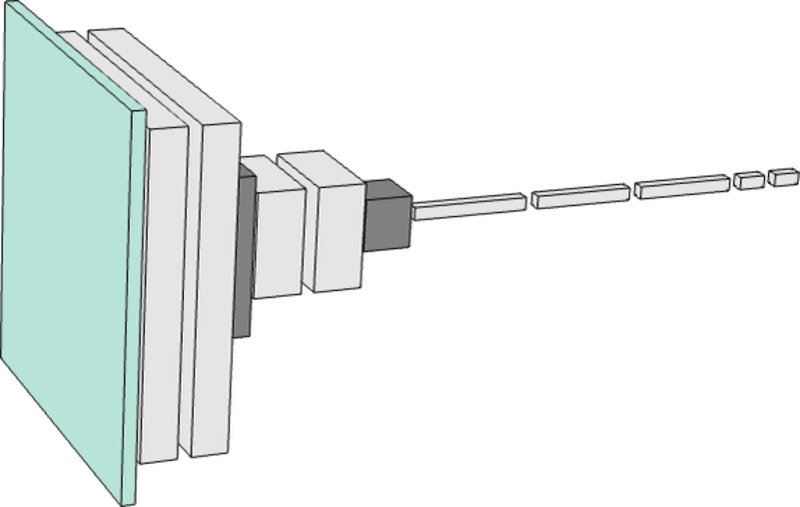

But an important feature of neural nets is that, like computers in general, they’re ultimately just dealing with data. And current neural nets, with current approaches to neural net training, specifically deal with arrays of numbers. But in the course of processing, those arrays can be completely rearranged and reshaped. For example, the network we used for identifying digits above starts with a 2D “image-like” array, quickly “thickening” to many channels, but then “concentrating down” into a 1D array that will ultimately contain elements representing the different possible output digits:

But, OK, how can one tell how big a neural net one will need for a particular task? It’s something of an art. At some level the key thing is to know “how hard the task is”. But for human-like tasks that’s typically very hard to estimate. Yes, there may be a systematic way to do the task very “mechanically” by computer. But it’s hard to know if there are what one might think of as tricks or shortcuts that allow one to do the task at least at a “human-like level” vastly more easily. It might take enumerating a giant game tree to “mechanically” play a certain game; but there might be a much easier (“heuristic”) way to achieve “human-level play”.

When one’s dealing with tiny neural nets and simple tasks one can sometimes explicitly see that one “can’t get there from here”. For example, here’s the best one seems to be able to do on the task from the previous section with a few small neural nets:

And what we see is that if the net is too small, it just can’t reproduce the function we want. But above some size, it has no problem, at least if one trains it for long enough, with enough examples. And, by the way, these pictures illustrate a piece of neural net lore: that one can often get away with a smaller network if there’s a “squeeze” in the middle that forces everything to go through a smaller intermediate number of neurons. (It’s also worth mentioning that networks with no intermediate layer, so-called “perceptrons”, can only learn essentially linear functions, but as soon as there’s even one intermediate layer it’s always in principle possible to approximate any function arbitrarily well, at least if one has enough neurons, though to make it feasibly trainable one typically has some kind of regularization or normalization.)
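The perceptron remark can be illustrated with the classic XOR function, which is 1 exactly when its two inputs differ. No single linear threshold unit can compute it, since no straight line separates its 1s from its 0s, but one hidden layer is enough. In this Python sketch the weights are set by hand rather than trained, purely to show that such a net exists.

```python
def step(z):
    """Threshold activation: 1 if the weighted input is positive, else 0."""
    return 1 if z > 0 else 0


def xor_net(x1, x2):
    """Two hidden units (an OR and an AND) feeding one output: OR and not AND."""
    h_or = step(x1 + x2 - 0.5)
    h_and = step(x1 + x2 - 1.5)
    return step(h_or - h_and - 0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_net(a, b))
```

The hidden layer re-describes the inputs (“at least one is on”, “both are on”) in a way that makes the final decision linear again.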

OK, so let’s say one’s settled on a certain neural net architecture. Now there’s the issue of getting data to train the network with. And many of the practical challenges around neural nets, and machine learning in general, center on acquiring or preparing the necessary training data. In many cases (“supervised learning”) one wants to get explicit examples of inputs and the outputs one is expecting from them. Thus, for example, one might want images tagged by what’s in them, or some other attribute. And maybe one will have to explicitly go through, usually with great effort, and do the tagging. But very often it turns out to be possible to piggyback on something that’s already been done, or use it as some kind of proxy. And so, for example, one might use alt tags that have been provided for images on the web. Or, in a different domain, one might use closed captions that have been created for videos. Or, for language translation training, one might use parallel versions of webpages or other documents that exist in different languages.

How much data do you need to show a neural net to train it for a particular task? Again, it’s hard to estimate from first principles. Certainly the requirements can be dramatically reduced by using “transfer learning” to “transfer in” things like lists of important features that have already been learned in another network. But generally neural nets need to “see a lot of examples” to train well. And at least for some tasks it’s an important piece of neural net lore that the examples can be incredibly repetitive. Indeed it’s a standard strategy to just show a neural net all the examples one has, over and over again. In each of these “training rounds” (or “epochs”) the neural net will be in at least a slightly different state, and somehow “reminding it” of a particular example is useful in getting it to “remember that example”. (And, yes, perhaps this is analogous to the usefulness of repetition in human memorization.)

But often just repeating the same example over and over again isn’t enough. It’s also necessary to show the neural net variations of the example. And it’s a feature of neural net lore that those “data augmentation” variations don’t have to be sophisticated to be useful. Just slightly modifying images with basic image processing can make them essentially “as good as new” for neural net training. And, similarly, when one’s run out of actual video, etc. for training self-driving cars, one can go on and just get data from running simulations in a model videogame-like environment without all the detail of actual real-world scenes.
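As a rough illustration of augmentation by “basic image processing”, this Python sketch makes variants of a tiny image (stored as a list of rows) by shifting it by up to one pixel and adding a little noise. The particular transformations, the shift range and the noise level are assumptions for illustration; practical pipelines use many other variations, such as rotations, crops and brightness changes.

```python
import random


def shift(image, dx, dy):
    """Shift a 2D image (list of rows) by (dx, dy) pixels, filling the gap with 0."""
    height, width = len(image), len(image[0])
    return [
        [image[r - dy][c - dx] if 0 <= r - dy < height and 0 <= c - dx < width else 0
         for c in range(width)]
        for r in range(height)
    ]


def augment(image, rng, max_shift=1, noise=0.05):
    """Make a slightly modified copy: a small random shift plus a little pixel noise."""
    dx = rng.randint(-max_shift, max_shift)
    dy = rng.randint(-max_shift, max_shift)
    shifted = shift(image, dx, dy)
    # Add noise, then clamp each pixel back into the valid range [0, 1].
    return [[min(1.0, max(0.0, p + rng.uniform(-noise, noise))) for p in row]
            for row in shifted]


rng = random.Random(42)
image = [[0, 1, 0],
         [0, 1, 0],
         [0, 1, 0]]                       # a tiny hypothetical "1"
variants = [augment(image, rng) for _ in range(3)]
for v in variants:
    print([[round(p, 2) for p in row] for row in v])
```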

How about something like ChatGPT? Well, it has the nice feature that it can do “unsupervised learning”, making it much easier to get it examples to train from. Recall that the basic task for ChatGPT is to figure out how to continue a piece of text that it’s been given. So to get it “training examples” all one has to do is get a piece of text, mask out the end of it, and then use this as the “input to train from”, with the “output” being the complete, unmasked piece of text. We’ll discuss this more later, but the main point is that, unlike, say, for learning what’s in images, there’s no “explicit tagging” needed; ChatGPT can in effect just learn directly from whatever examples of text it’s given.
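A minimal Python sketch of this masking idea: each prefix of a text becomes an input, and the word that was masked off right after it becomes the target. Splitting on spaces is a simplification we are assuming here; ChatGPT actually works with tokens rather than whole words.

```python
def make_training_examples(text):
    """Turn raw text into (context, next-word) pairs by masking off the end.

    Words stand in for tokens here, which is a simplification.
    """
    words = text.split()
    return [(words[:i], words[i]) for i in range(1, len(words))]


for context, target in make_training_examples("the cat sat on the mat"):
    print(" ".join(context), "->", target)
```

No human labeling is involved: the “answers” are simply the parts of the text that were hidden.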

OK, so what about the actual learning process in a neural net? In the end it’s all about determining what weights will best capture the training examples that have been given. And there are all sorts of detailed choices and “hyperparameter settings” (so called because the weights can be thought of as “parameters”) that can be used to tweak how this is done. There are different choices of loss function (sum of squares, sum of absolute values, etc.). There are different ways to do loss minimization (how far in weight space to move at each step, etc.). And then there are questions like how big a “batch” of examples to show to get each successive estimate of the loss one’s trying to minimize. And, yes, one can apply machine learning (as we do, for example, in Wolfram Language) to automate machine learning, and to automatically set things like hyperparameters.
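To see where these choices show up, here is a Python sketch of minibatch gradient descent for the simplest possible model, a straight line y ≈ w·x + b. The names `learning_rate`, `batch_size` and `epochs` stand for the hyperparameters just mentioned; their values, the mean-squared loss and the target line are illustrative assumptions. Each epoch shows every example once, shuffled into a new order, which is the “over and over again” repetition described earlier.

```python
import random


def train_linear(xs, ys, learning_rate=0.1, batch_size=4, epochs=200, seed=0):
    """Fit y ~ w*x + b by minibatch gradient descent on the mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):                 # each epoch shows every example once
        rng.shuffle(indices)                # in a different order each time
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            grad_w = grad_b = 0.0
            for i in batch:
                err = (w * xs[i] + b) - ys[i]
                grad_w += 2 * err * xs[i]
                grad_b += 2 * err
            # Step size in weight space is set by the learning rate.
            w -= learning_rate * grad_w / len(batch)
            b -= learning_rate * grad_b / len(batch)
    return w, b


xs = [i / 10 for i in range(-10, 11)]
ys = [3 * x - 1 for x in xs]                # hypothetical target: w = 3, b = -1
w, b = train_linear(xs, ys)
print(f"w = {w:.3f}, b = {b:.3f}")
```

Smaller batches give noisier but cheaper loss estimates per step; too large a learning rate makes the updates overshoot and diverge.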

But in the end the whole process of training can be characterized by seeing how the loss progressively decreases (as in this Wolfram Language progress monitor for a small training run):

And what one typically sees is that the loss decreases for a while, but eventually flattens out at some constant value. If that value is sufficiently small, then the training can be considered successful; otherwise it’s probably a sign one should try changing the network architecture.
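One simple way to automate that judgement, sketched in Python: call the curve flat when the loss has improved by less than a small fraction over the last few rounds, then compare the final value with a target. The window size, tolerance and target are arbitrary assumptions, not standard settings.

```python
def has_flattened(losses, window=10, tolerance=1e-3):
    """True if, over the last `window` rounds, the loss dropped by less than
    `tolerance` times its earlier value."""
    if len(losses) <= window:
        return False
    earlier, latest = losses[-window - 1], losses[-1]
    return (earlier - latest) <= tolerance * abs(earlier)


def training_verdict(losses, target=0.01, **kwargs):
    """Combine the two checks from the text: has it flattened, and is it small?"""
    if not has_flattened(losses, **kwargs):
        return "still improving"
    return "success" if losses[-1] <= target else "consider another architecture"


# Two synthetic curves that level off at different heights.
print(training_verdict([0.2 + 1 / (1 + n) for n in range(1000)]))
print(training_verdict([0.005 + 1 / (1 + n) for n in range(10000)]))
```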

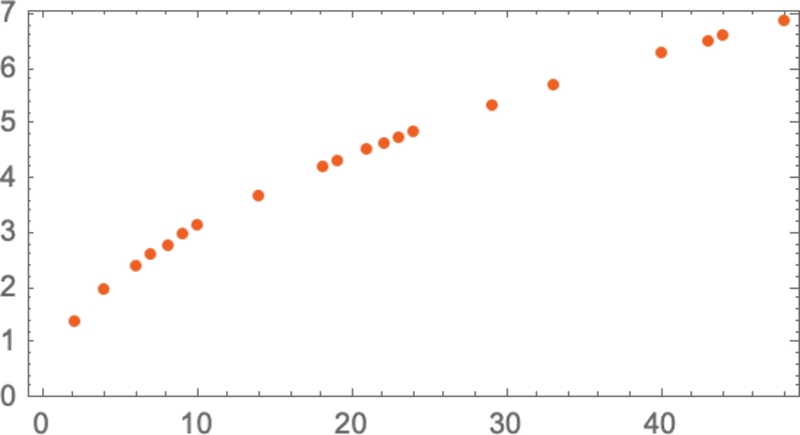

Can one tell how long it should take for the “learning curve” to flatten out? As with so many other things, there seem to be approximate power-law scaling relationships that depend on the size of neural net and amount of data one’s using. But the general conclusion is that training a neural net is hard, and takes a lot of computational effort. And as a practical matter, the vast majority of that effort is spent doing operations on arrays of numbers, which is what GPUs are good at, and which is why neural net training is typically limited by the availability of GPUs.

In the future, will there be fundamentally better ways to train neural nets, or generally do what neural nets do? Almost certainly, I think. The fundamental idea of neural nets is to create a flexible “computing fabric” out of a large number of simple (essentially identical) components, and to have this “fabric” be one that can be incrementally modified to learn from examples. In current neural nets, one’s essentially using the ideas of calculus, applied to real numbers, to do that incremental modification. But it’s increasingly clear that having high-precision numbers doesn’t matter; 8 bits or less might be enough even with current methods.
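As a sketch of what “8 bits” can mean, this Python snippet maps real-valued weights onto integers from −127 to 127 using one shared scale factor (symmetric per-tensor quantization, one common simple scheme among several) and then maps them back. The weights are made-up numbers. Because each weight is rounded to the nearest multiple of the scale, the error per weight is at most half the scale.

```python
def quantize_int8(weights):
    """Map real-valued weights to integers in [-127, 127] with one shared scale."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(codes, scale):
    """Recover approximate real-valued weights from the 8-bit codes."""
    return [c * scale for c in codes]


weights = [0.731, -0.052, 0.004, -1.270, 0.388]    # hypothetical weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes)
print(f"largest rounding error: {max_error:.4f} (at most scale/2 = {scale / 2:.4f})")
```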

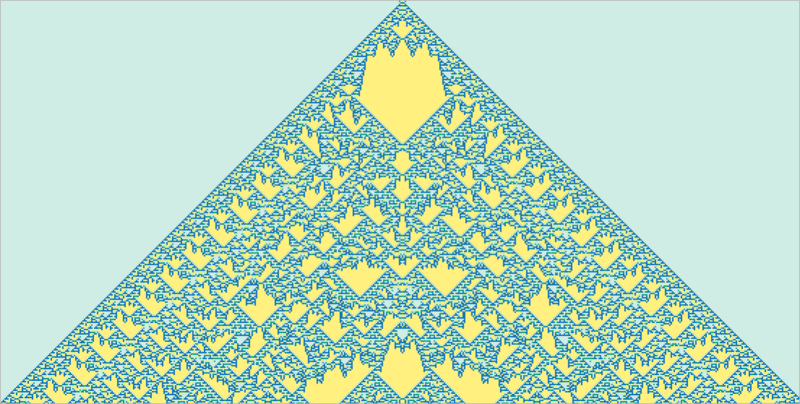

With computational systems like cellular automata that basically operate in parallel on many individual bits it’s never been clear how to do this kind of incremental modification, but there’s no reason to think it isn’t possible. And in fact, much as with the “deep-learning breakthrough of 2012”, it may be that such incremental modification will effectively be easier in more complicated cases than in simple ones.

Neural nets, perhaps a bit like brains, are set up to have an essentially fixed network of neurons, with what’s modified being the strength (“weight”) of connections between them. (Perhaps in at least young brains significant numbers of wholly new connections can also grow.) But while this might be a convenient setup for biology, it’s not at all clear that it’s even close to the best way to achieve the functionality we need. And something that involves the equivalent of progressive network rewriting (perhaps reminiscent of our Physics Project) might well ultimately be better.

But even within the framework of existing neural nets there’s currently a crucial limitation: neural net training as it’s now done is fundamentally sequential, with the effects of each batch of examples being propagated back to update the weights. And indeed with current computer hardware, even taking into account GPUs, most of a neural net is “idle” most of the time during training, with just one part at a time being updated. In a sense this is because our current computers tend to have memory that is separate from their CPUs (or GPUs). But in brains it’s presumably different, with every “memory element” (i.e. neuron) also being a potentially active computational element. And if we could set up our future computer hardware this way it might become possible to do training much more efficiently.

The capabilities of something like ChatGPT seem so impressive that one might imagine that if one could just “keep going” and train larger and larger neural networks, then they’d eventually be able to “do everything”. And if one’s concerned with things that are readily accessible to immediate human thinking, it’s quite possible that this is the case. But the lesson of the past several hundred years of science is that there are things that can be figured out by formal processes, but aren’t readily accessible to immediate human thinking.

Nontrivial mathematics is one big example. But the general case is really computation. And ultimately the issue is the phenomenon of computational irreducibility. There are some computations which one might think would take many steps to do, but which can in fact be “reduced” to something quite immediate. But the discovery of computational irreducibility implies that this doesn’t always work. Instead there are processes, probably like the one below, where working out what happens inevitably requires essentially tracing each computational step:
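As an example of such a process, here is a Python sketch of the rule 30 cellular automaton (our choice of a standard example). Each new cell is the XOR of its left neighbor with the OR of itself and its right neighbor. As far as is known, there is no shortcut for finding row n other than computing every row before it.

```python
def rule30_step(cells):
    """Apply rule 30 once: new cell = left XOR (center OR right); 0s beyond the edges."""
    padded = [0] + cells + [0]
    return [padded[i - 1] ^ (padded[i] | padded[i + 1]) for i in range(1, len(padded) - 1)]


def run_rule30(width=31, steps=15):
    """Start from a single black cell in the middle and record every row."""
    cells = [0] * width
    cells[width // 2] = 1
    rows = [cells]
    for _ in range(steps):
        cells = rule30_step(cells)
        rows.append(cells)
    return rows


for row in run_rule30():
    print("".join("#" if c else "." for c in row))
```

With `width=31` and 15 steps the pattern just reaches the edges, so the zero padding does not distort it; longer runs need a wider row.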

The kinds of things that we normally do with our brains are presumably specifically chosen to avoid computational irreducibility. It takes special effort to do math in one’s brain. And it’s in practice largely impossible to “think through” the steps in the operation of any nontrivial program just in one’s brain.

But of course for that we have computers. And with computers we can readily do long, computationally irreducible things. And the key point is that there’s in general no shortcut for these.

Yes, we could memorize lots of specific examples of what happens in some particular computational system. And maybe we could even see some (“computationally reducible”) patterns that would allow us to do a little generalization. But the point is that computational irreducibility means that we can never guarantee that the unexpected won’t happen, and it’s only by explicitly doing the computation that you can tell what actually happens in any particular case.

And in the end there’s just a fundamental tension between learnability and computational irreducibility. Learning involves in effect compressing data by leveraging regularities. But computational irreducibility implies that ultimately there’s a limit to what regularities there may be.

As a practical matter, one can imagine building little computational devices, like cellular automata or Turing machines, into trainable systems like neural nets. And indeed such devices can serve as good “tools” for the neural net, just as Wolfram|Alpha can be a good tool for ChatGPT. But computational irreducibility implies that one can’t expect to “get inside” those devices and have them learn.

Or, put another way, there’s an ultimate tradeoff between capability and trainability: the more you want a system to make “true use” of its computational capabilities, the more it’s going to show computational irreducibility, and the less it’s going to be trainable. And the more it’s fundamentally trainable, the less it’s going to be able to do sophisticated computation.

(For ChatGPT as it currently is, the situation is actually much more extreme, because the neural net used to generate each token of output is a pure “feed-forward” network, without loops, and therefore has no ability to do any kind of computation with nontrivial “control flow”.)

Of course, one might wonder whether it’s actually important to be able to do irreducible computations. And indeed for much of human history it wasn’t particularly important. But our modern technological world has been built on engineering that makes use of at least mathematical computations, and increasingly also more general computations. And if we look at the natural world, it’s full of irreducible computation that we’re slowly understanding how to emulate and use for our technological purposes.

Yes, a neural net can certainly notice the kinds of regularities in the natural world that we might also readily notice with “unaided human thinking”. But if we want to work out things that are in the purview of mathematical or computational science the neural net isn’t going to be able to do it, unless it effectively “uses as a tool” an “ordinary” computational system.

But there’s something potentially confusing about all of this. In the past there were plenty of tasks, including writing essays, that we’ve assumed were somehow “fundamentally too hard” for computers. And now that we see them done by the likes of ChatGPT we tend to suddenly think that computers must have become vastly more powerful, in particular surpassing things they were already basically able to do (like progressively computing the behavior of computational systems like cellular automata).

But this isn’t the right conclusion to draw. Computationally irreducible processes are still computationally irreducible, and are still fundamentally hard for computers, even if computers can readily compute their individual steps. Instead, what we should conclude is that tasks like writing essays, which we humans could do but didn’t think computers could do, are actually in some sense computationally easier than we thought.

In other words, the reason a neural net can be successful in writing an essay is that writing an essay turns out to be a “computationally shallower” problem than we thought. And in a sense this takes us closer to “having a theory” of how we humans manage to do things like writing essays, or in general deal with language.

If you had a big enough neural net then, yes, you might be able to do whatever humans can readily do. But you wouldn’t capture what the natural world in general can do, or what the tools that we’ve fashioned from the natural world can do. And it’s the use of those tools, both practical and conceptual, that has allowed us in recent centuries to transcend the boundaries of what’s accessible to “pure unaided human thought”, and capture for human purposes more of what’s out there in the physical and computational universe.

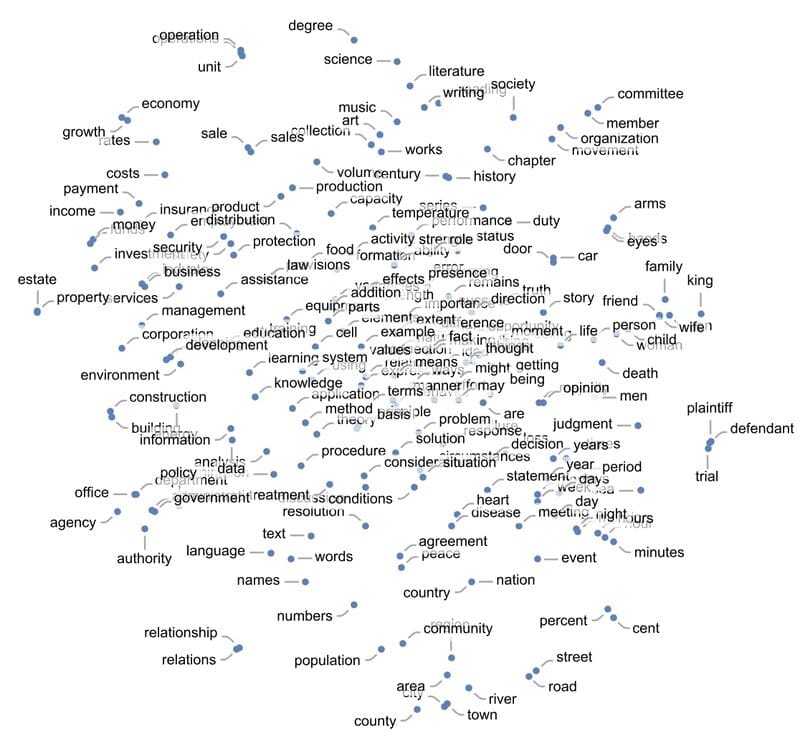

Neural nets, at least as they’re currently set up, are fundamentally based on numbers. So if we’re going to use them to work on something like text we’ll need a way to represent our text with numbers. And certainly we could start (essentially as ChatGPT does) by just assigning a number to every word in the dictionary. But there’s an important idea, central for example to ChatGPT, that goes beyond that: the idea of “embeddings”. One can think of an embedding as a way to try to represent the “essence” of something by an array of numbers, with the property that “nearby things” are represented by nearby numbers.
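To make “nearby numbers” concrete: one common way to compare two embedding vectors is cosine similarity (Euclidean distance is another). In this Python sketch the three-number vectors are invented for illustration; real embeddings are learned from data and are much longer.

```python
import math


def cosine_similarity(u, v):
    """1.0 for vectors pointing the same way, near 0 for unrelated directions."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


# Hypothetical 3-number embeddings, made up purely for illustration.
embedding = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "turnip": [0.1, 0.0, 0.9],
}
print(cosine_similarity(embedding["cat"], embedding["dog"]))     # close to 1
print(cosine_similarity(embedding["cat"], embedding["turnip"]))  # much smaller
```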

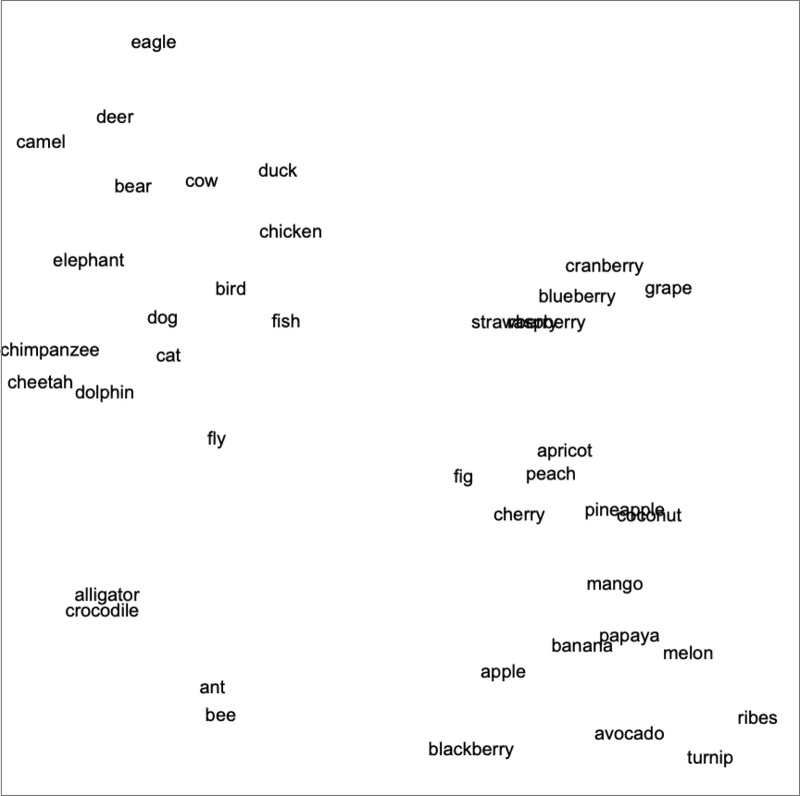

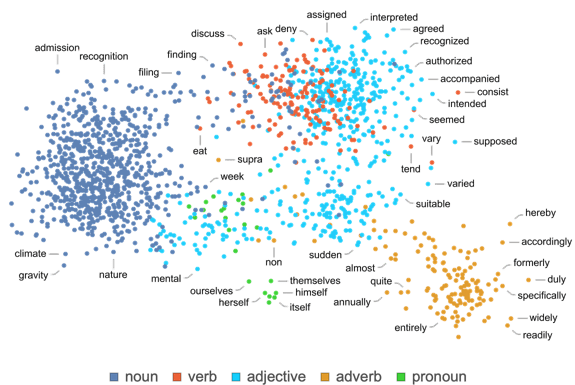

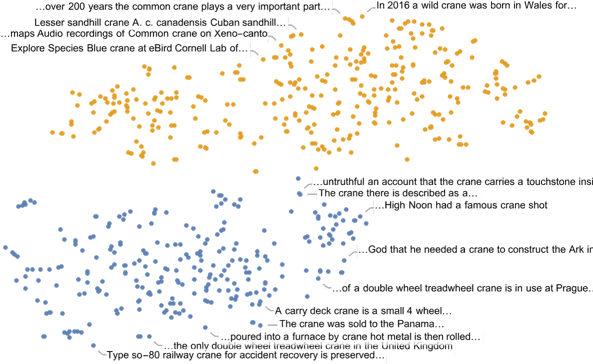

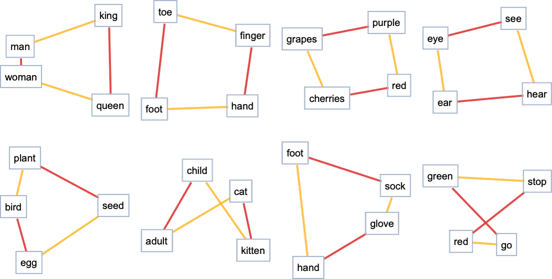

And so, for example, we can think of a word embedding as trying to lay out words in a kind of “meaning space” in which words that are somehow “nearby in meaning” appear nearby in the embedding. The actual embeddings that are used (say in ChatGPT) tend to involve large lists of numbers. But if we project down to 2D, we can show examples of how words are laid out by the embedding:

And, yes, what we see does remarkably well in capturing typical everyday impressions. But how can we construct such an embedding? Roughly the idea is to look at large amounts of text (here 5 billion words from the web) and then see “how similar” the “environments” are in which different words appear. So, for example, “alligator” and “crocodile” will often appear almost interchangeably in otherwise similar sentences, and that means they’ll be placed nearby in the embedding. But “turnip” and “eagle” won’t tend to appear in otherwise similar sentences, so they’ll be placed far apart in the embedding.
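The “similar environments” idea can be sketched without any neural net at all, by counting which words appear near each word and comparing those counts. This Python sketch does that on a tiny invented corpus. It is a count-based stand-in for intuition only, not the method used for ChatGPT’s embeddings.

```python
import math
from collections import Counter


def context_vectors(sentences, window=2):
    """For each word, count which words appear within `window` positions of it."""
    vectors = {}
    for sentence in sentences:
        words = sentence.lower().split()
        for i, word in enumerate(words):
            neighbors = words[max(0, i - window):i] + words[i + 1:i + 1 + window]
            vectors.setdefault(word, Counter()).update(neighbors)
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse count vectors (missing keys count as 0)."""
    dot = sum(count * v[key] for key, count in u.items())
    norm = math.sqrt(sum(c * c for c in u.values())) * math.sqrt(sum(c * c for c in v.values()))
    return dot / norm if norm else 0.0


corpus = [  # a tiny made-up corpus; real embeddings use billions of words
    "the alligator swam in the river",
    "the crocodile swam in the river",
    "a hungry alligator ate the fish",
    "a hungry crocodile ate the fish",
    "the farmer grew a turnip in the field",
    "the eagle flew over the mountain",
]
vectors = context_vectors(corpus)
print(cosine(vectors["alligator"], vectors["crocodile"]))   # identical contexts
print(cosine(vectors["turnip"], vectors["eagle"]))          # only "the" in common
```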

But how does one actually implement something like this using neural nets? Let’s start by talking about embeddings not for words, but for images. We want to find some way to characterize images by lists of numbers in such a way that “images we consider similar” are assigned similar lists of numbers.

How do we tell if we should “consider images similar”? Well, if our images are, say, of handwritten digits we might “consider two images similar” if they are of the same digit. Earlier we discussed a neural net that was trained to recognize handwritten digits. And we can think of this neural net as being set up so that in its final output it puts images into 10 different bins, one for each digit.

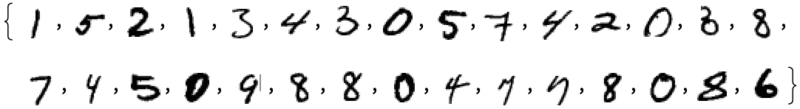

But what if we “intercept” what’s going on inside the neural net before the final “it’s a ‘4’” decision is made? We might expect that inside the neural net there are numbers that characterize images as being “mostly 4-like but a bit 2-like” or some such. And the idea is to pick up such numbers to use as elements in an embedding.

So here’s the concept. Rather than directly trying to characterize “what image is near what other image”, we instead consider a well-defined task (in this case digit recognition) for which we can get explicit training data, then use the fact that in doing this task the neural net implicitly has to make what amount to “nearness decisions”. So instead of us ever explicitly having to talk about “nearness of images” we’re just talking about the concrete question of what digit an image represents, and then we’re “leaving it to the neural net” to implicitly determine what that implies about “nearness of images”.

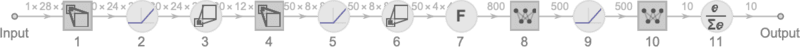

So how in more detail does this work for the digit recognition network? We can think of the network as consisting of 11 successive layers, which we might summarize iconically like this (with activation functions shown as separate layers):

At the beginning we’re feeding into the first layer actual images, represented by 2D arrays of pixel values. And at the end, from the last layer, we’re getting out an array of 10 values, which we can think of as saying “how certain” the network is that the image corresponds to each of the digits 0 through 9.

Feed in an image of a handwritten 4, and the values of the neurons in that last layer are:

In other words, the neural net is by this point “incredibly certain” that this image is a 4, and to actually get the output “4” we just have to pick out the position of the neuron with the largest value.
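Picking out the winning digit is just an “argmax”. This Python sketch also applies a softmax (exponentiate, then normalize so the values sum to 1) to show that it keeps the ordering while pushing almost all the weight onto the largest value. The ten raw values are invented, not real output from the digit network.

```python
import math


def softmax(values):
    """Exponentiate and normalize so the outputs are positive and sum to 1."""
    peak = max(values)                       # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def argmax(values):
    """Index of the largest value, i.e. the digit the network picks."""
    return max(range(len(values)), key=values.__getitem__)


# Hypothetical pre-softmax values for the digits 0-9 (not real network output).
raw = [-2.1, -0.7, 1.3, -1.5, 9.8, -0.2, -3.0, 0.4, -1.1, 0.9]
probs = softmax(raw)
print(argmax(raw), argmax(probs))           # softmax keeps the ordering: both give 4
print(f"{probs[4]:.4f}")                    # almost all the weight lands on "4"
```

Because softmax only rescales monotonically, the choice of digit is the same before and after it; what it changes is how much the other values still stand out.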

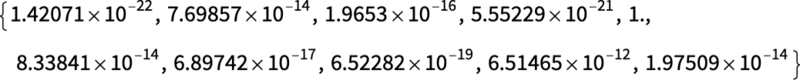

But what if we look one step earlier? The very last operation in the network is a so-called softmax, which tries to “force certainty”. But before that’s been applied the values of the neurons are:


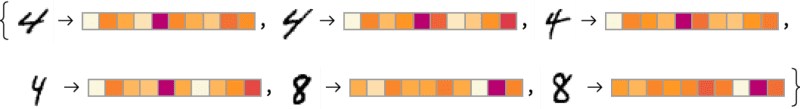

The neuron representing “4” still has the highest numerical value. But there’s also information in the values of the other neurons. And we can expect that this list of numbers can in a sense be used to characterize the “essence” of the image, and thus to provide something we can use as an embedding. And so, for example, each of the 4’s here has a slightly different “signature” (or “feature embedding”), all very different from the 8’s:

Here we’re essentially using 10 numbers to characterize our images. But it’s often better to use many more than that. For example, in our digit recognition network we can get an array of 500 numbers by tapping into the preceding layer. And this is probably a reasonable array to use as an “image embedding”.

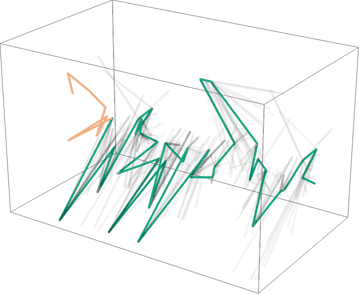

If we want to make an explicit visualization of “image space” for handwritten digits, we need to “reduce the dimension”, effectively by projecting the 500-dimensional vector we’ve got into, say, 3D space:

We’ve just talked about creating a characterization (and thus embedding) for images based effectively on identifying the similarity of images by determining whether (according to our training set) they correspond to the same handwritten digit. And we can do the same thing much more generally for images if we have a training set that identifies, say, which of 5000 common types of object (cat, dog, chair, …) each image is of. In this way we can make an image embedding that’s “anchored” by our identification of common objects, but then “generalizes around that” according to the behavior of the neural net. And the point is that insofar as that behavior aligns with how we humans perceive and interpret images, this will end up being an embedding that “seems right to us”, and is useful in practice for doing “human-judgement-like” tasks.
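The text doesn’t say which method produced the 3D picture of “image space”. As one standard choice (an assumption here), the sketch below uses principal component analysis (PCA): it finds the three directions along which a set of embedding vectors varies most and gives each vector’s coordinates along them. It works with the small n×n matrix of dot products between the centered points, which is cheap when there are fewer points than dimensions. `pca_project` and its parameters are hypothetical names.

```python
import math
import random

def pca_project(points, k=3, iterations=200, seed=0):
    """Project each point onto its top-k principal components.

    Uses power iteration on the n-by-n Gram matrix of the centered data:
    if u is a unit eigenvector with eigenvalue lam, the points' coordinates
    along that principal direction are u * sqrt(lam).
    """
    n, d = len(points), len(points[0])
    means = [sum(p[j] for p in points) / n for j in range(d)]
    centered = [[p[j] - means[j] for j in range(d)] for p in points]
    gram = [[sum(a * b for a, b in zip(centered[i], centered[j])) for j in range(n)]
            for i in range(n)]
    rng = random.Random(seed)
    coords = [[0.0] * k for _ in range(n)]
    for c in range(k):
        u = [rng.gauss(0.0, 1.0) for _ in range(n)]
        for _ in range(iterations):
            w = [sum(gram[i][j] * u[j] for j in range(n)) for i in range(n)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0.0:  # nothing left to explain
                break
            u = [x / norm for x in w]
        gu = [sum(gram[i][j] * u[j] for j in range(n)) for i in range(n)]
        lam = sum(a * b for a, b in zip(u, gu))  # eigenvalue estimate
        scale = math.sqrt(max(lam, 0.0))
        for i in range(n):
            coords[i][c] = u[i] * scale
        # Deflate: remove this component before finding the next one.
        for i in range(n):
            for j in range(n):
                gram[i][j] -= lam * u[i] * u[j]
    return coords

# Usage with made-up data: 10 random 500-dimensional "embeddings".
rng = random.Random(42)
embeddings = [[rng.gauss(0.0, 1.0) for _ in range(500)] for _ in range(10)]
points_3d = pca_project(embeddings, k=3)
```

When the data really lies near a 3D subspace, the projection keeps pairwise distances almost unchanged; otherwise it keeps as much of the spread as three dimensions allow.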

OK, so how can we follow the same kind of approach to find embeddings for words? The key is to start from a task about words for which we can readily do training. And the standard such task is “word prediction”. Imagine we’re given “the ___ cat”. Based on a large corpus of text (say, the text content of the web), what are the probabilities for different words that might “fill in the blank”? Or, alternatively, given “___ black ___”, what are the probabilities for different “flanking words”?
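The counting idea behind “fill in the blank” can be sketched directly: scan a corpus for every “the ___ cat” pattern and tally what sits in the middle, or for every word flanking “black”. The tiny corpus below is made up; a neural net is trained precisely so that it doesn’t need an explicit count for every possible context.

```python
from collections import Counter

def fill_in_probabilities(words, left, right):
    """Estimate P(middle word | left ___ right) by counting matches."""
    counts = Counter(
        words[i + 1]
        for i in range(len(words) - 2)
        if words[i] == left and words[i + 2] == right
    )
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def flanking_probabilities(words, middle):
    """Estimate P(left, right | ___ middle ___) by counting matches."""
    counts = Counter(
        (words[i - 1], words[i + 1])
        for i in range(1, len(words) - 1)
        if words[i] == middle
    )
    total = sum(counts.values())
    return {pair: c / total for pair, c in counts.items()}

corpus = "the black cat sat . the big cat ran . the black cat slept . the old dog barked"
words = corpus.split()
blank = fill_in_probabilities(words, "the", "cat")   # black: 2/3, big: 1/3
flank = flanking_probabilities(words, "black")       # ("the", "cat"): 1.0
```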

How do we set this problem up for a neural net? Ultimately we have to formulate everything in terms of numbers. And one way to do this is just to assign a unique number to each of the 50,000 or so common words in English. So, for example, “the” might be 914, and “ cat” (with a space before it) might be 3542. (And these are the actual numbers used by GPT-2.) So for the “the ___ cat” problem, our input might be {914, 3542}. What should the output be like? Well, it should be a list of 50,000 or so numbers that effectively give the probabilities for each of the possible “fill-in” words. And once again, to find an embedding, we want to “intercept” the “insides” of the neural net just before it “reaches its conclusion”, and then pick up the list of numbers that occur there, which we can think of as “characterizing each word”.
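Here’s a toy version of that setup, loosely in the style of word2vec’s “continuous bag of words” model rather than GPT-2’s actual architecture: each context token ID selects a small vector, the vectors are averaged, and the average is mapped to one probability per vocabulary entry. The averaged vector is the “intercepted” layer one would treat as an embedding. Only the IDs 914 and 3542 come from the text; the vocabulary size, the width of 8, and the random (untrained) weights are assumptions.

```python
import math
import random

VOCAB_SIZE = 50_000  # "50,000 or so" words or tokens
DIM = 8              # toy embedding width; real models use hundreds or more

rng = random.Random(0)
# Untrained, randomly initialized weights; training would adjust these.
input_table = [[rng.gauss(0, 0.1) for _ in range(DIM)] for _ in range(VOCAB_SIZE)]
output_weights = [[rng.gauss(0, 0.1) for _ in range(DIM)] for _ in range(VOCAB_SIZE)]

def hidden_vector(context_ids):
    """Average the context tokens' vectors: the layer we 'intercept'."""
    vecs = [input_table[i] for i in context_ids]
    return [sum(v[k] for v in vecs) / len(vecs) for k in range(DIM)]

def fill_in_distribution(context_ids):
    """Return one probability per vocabulary entry for the blank."""
    h = hidden_vector(context_ids)
    logits = [sum(w[k] * h[k] for k in range(DIM)) for w in output_weights]
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

context = [914, 3542]  # "the", " cat" (IDs as given in the text)
probs = fill_in_distribution(context)
print(len(probs))                   # 50000
print(len(hidden_vector(context)))  # 8
```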

OK, so what do these characterizations look like? Over the past 10 years there’s been a sequence of different systems developed (word2vec, GloVe, BERT, GPT, …), each based on a different neural net approach. But ultimately all of them take words and characterize them by lists of hundreds to thousands of numbers.

In their raw form, these “embedding vectors” are quite uninformative. For example, here’s what GPT-2 produces as the raw embedding vectors for three specific words:

If we do things like measure distances between these vectors, then we can find things like “nearnesses” of words. Later we’ll discuss in more detail what we might consider the “cognitive” significance of such embeddings. But for now the main point is that we have a way to usefully turn words into “neural-net-friendly” collections of numbers.
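Measuring “nearness” can be as simple as cosine similarity (the normalized dot product of two vectors) or ordinary Euclidean distance. The three-dimensional vectors below are made up for illustration (real embeddings have hundreds of dimensions), so only the mechanics carry over, not the numbers.

```python
import math

def cosine_similarity(u, v):
    """1.0 for vectors pointing the same way, near 0 for unrelated directions."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def euclidean_distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def nearest_word(word, embeddings):
    """Return the other word whose vector is most similar to this one's."""
    target = embeddings[word]
    others = (w for w in embeddings if w != word)
    return max(others, key=lambda w: cosine_similarity(target, embeddings[w]))

# Hypothetical toy embeddings.
embeddings = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "chair": [0.0, 0.1, 0.9],
}
print(nearest_word("cat", embeddings))  # dog
```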

But actually we can go further than just characterizing words by collections of numbers; we can also do this for sequences of words, or indeed whole blocks of text. And that’s how ChatGPT deals with things internally. It takes the text it’s got so far, and generates an embedding vector to represent it. Then its goal is to find the probabilities for different words that might occur next. And it represents its answer for this as a list of numbers that essentially give the probabilities for each of the 50,000 or so possible words.

(Strictly, ChatGPT does not deal with words, but rather with “tokens”: convenient linguistic units that might be whole words, or might just be pieces like “pre” or “ing” or “ized”. Working with tokens makes it easier for ChatGPT to handle rare, compound and non-English words, and, sometimes, for better or worse, to invent new words.)
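GPT-2 actually builds its tokens with byte pair encoding. As a simpler stand-in that still shows the idea of splitting words into reusable pieces, this sketch greedily takes the longest vocabulary entry at each position and falls back to single characters, so any word can be encoded. The vocabulary is hypothetical.

```python
def tokenize(word, vocab):
    """Greedy longest-match split of a word into vocabulary pieces.

    Not GPT-2's byte pair encoding; a simplified illustration only.
    """
    pieces = []
    i = 0
    while i < len(word):
        # Try the longest candidate first; a single character always succeeds.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab or j == i + 1:
                pieces.append(word[i:j])
                i = j
                break
    return pieces

vocab = {"pre", "process", "ing", "token", "ized", "the", "cat"}
print(tokenize("preprocessing", vocab))  # ['pre', 'process', 'ing']
print(tokenize("tokenized", vocab))      # ['token', 'ized']
```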

OK, so we’re finally ready to discuss what’s inside ChatGPT. And, yes, ultimately, it’s a giant neural net: currently a version of the so-called GPT-3 network with 175 billion weights. In many ways this is a neural net very much like the other ones we’ve discussed. But it’s a neural net that’s particularly set up for dealing with language. And its most notable feature is a piece of neural net architecture called a “transformer”.

In the first neural nets we discussed above, every neuron at any given layer was basically connected (at least with some weight) to every neuron on the layer before. But this kind of fully connected network is (presumably) overkill if one’s working with data that has particular, known structure. And thus, for example, in the early stages of dealing with images, it’s typical to use so-called convolutional neural nets (“convnets”) in which neurons are effectively laid out on a grid analogous to the pixels in the image, and connected only to neurons nearby on the grid.
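The difference in connectivity is easy to quantify. Assuming 28×28 digit images (an assumption; the text doesn’t give the size), a fully connected layer mapping the image to another 28×28 grid needs a weight for every input/output pair, while a convolutional layer reuses one small kernel everywhere. The `conv2d` helper below computes a “valid” convolution (strictly, the cross-correlation that convnets use), so each output depends only on a 3×3 patch of nearby pixels.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution (cross-correlation, as in convnets).

    Each output value depends only on the kernel-sized patch of the
    input beneath it, i.e. on nearby pixels.
    """
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(image), len(image[0])
    return [
        [
            sum(kernel[a][b] * image[i + a][j + b] for a in range(kh) for b in range(kw))
            for j in range(w - kw + 1)
        ]
        for i in range(h - kh + 1)
    ]

H = W = 28  # assumed image size
dense_weights = (H * W) * (H * W)  # every input pixel to every output neuron
conv_weights = 3 * 3               # one shared 3x3 kernel
print(dense_weights, conv_weights)  # 614656 9
```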

The idea of transformers is to do something at least somewhat similar for sequences of tokens that make up a piece of text. But instead of just defining a fixed region in the sequence over which there can be connections, transformers introduce the notion of “attention”: the idea of “paying attention” more to some parts of the sequence than others. Maybe one day it’ll make sense to just start with a generic neural net and do all customization through training. But at least as of now it seems to be critical in practice to “modularize” things, as transformers do, and probably as our brains also do.

OK, so what does ChatGPT (or, rather, the GPT-3 network on which it’s based) actually do? Recall that its overall goal is to continue text in a “reasonable” way, based on what it’s seen from the training it’s had (which consists of looking at billions of pages of text from the web, etc.). So at any given point, it’s got a certain amount of text, and its goal is to come up with an appropriate choice for the next token to add.

It operates in three basic stages. First, it takes the sequence of tokens that corresponds to the text so far, and finds an embedding (i.e. an array of numbers) that represents these. Then it operates on this embedding in a “standard neural net way”, with values “rippling through” successive layers in a network, to produce a new embedding (i.e. a new array of numbers). It then takes the last part of this array and generates from it an array of about 50,000 values that turn into probabilities for different possible next tokens. (And, yes, it so happens that there are about the same number of tokens used as there are common words in English, though only about 3000 of the tokens are whole words, and the rest are fragments.)
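The three stages can be laid out as a skeleton. Everything below is a toy: sizes are tiny, weights are random, and stage 2 is a deliberately simple stand-in (each vector is mixed with the average of the vectors up to and including it) where a real model has its stack of attention blocks. What carries over is the shape of the computation: token IDs in, vectors through layers, and the last vector turned into probabilities over the vocabulary.

```python
import math
import random

VOCAB, DIM = 50, 4  # toy sizes; GPT-2 uses about 50,000 tokens and 768 dimensions
rng = random.Random(0)
embed_table = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(VOCAB)]
unembed = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(VOCAB)]

def stage1_embed(token_ids):
    """Stage 1: one vector per token."""
    return [list(embed_table[t]) for t in token_ids]

def stage2_layers(vectors, n_layers=3):
    """Stage 2 (stand-in for the transformer): values ripple through layers.

    Each position only sees itself and earlier positions.
    """
    for _ in range(n_layers):
        mixed = []
        running = [0.0] * DIM
        for i, v in enumerate(vectors):
            running = [r + x for r, x in zip(running, v)]
            mean_so_far = [r / (i + 1) for r in running]
            mixed.append([math.tanh(x + m) for x, m in zip(v, mean_so_far)])
        vectors = mixed
    return vectors

def stage3_next_token_probs(vectors):
    """Stage 3: turn the last vector into one probability per token."""
    last = vectors[-1]
    logits = [sum(w * x for w, x in zip(row, last)) for row in unembed]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

probs = stage3_next_token_probs(stage2_layers(stage1_embed([3, 17, 42])))
```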

A critical point is that every part of this pipeline is implemented by a neural network, whose weights are determined by end-to-end training of the network. In other words, in effect nothing except the overall architecture is “explicitly engineered”; everything is just “learned” from training data.

There are, however, plenty of details in the way the architecture is set up, reflecting all sorts of experience and neural net lore. And, even though this is definitely going into the weeds, I think it’s useful to talk about some of those details, not least to get a sense of just what goes into building something like ChatGPT.

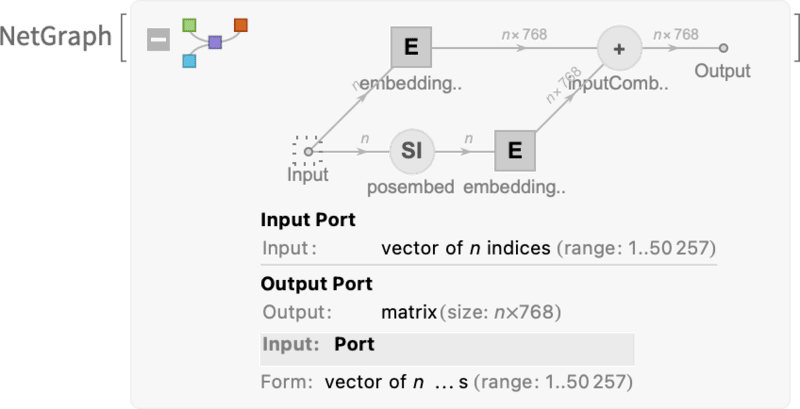

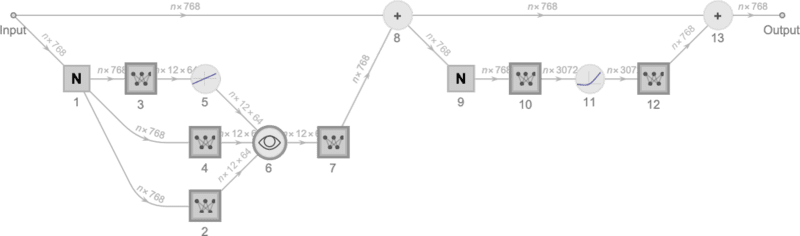

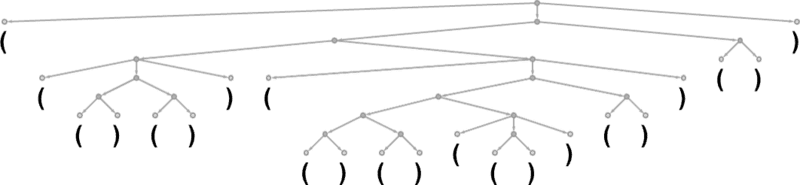

First comes the embedding module. Here’s a schematic Wolfram Language representation of it for GPT-2:

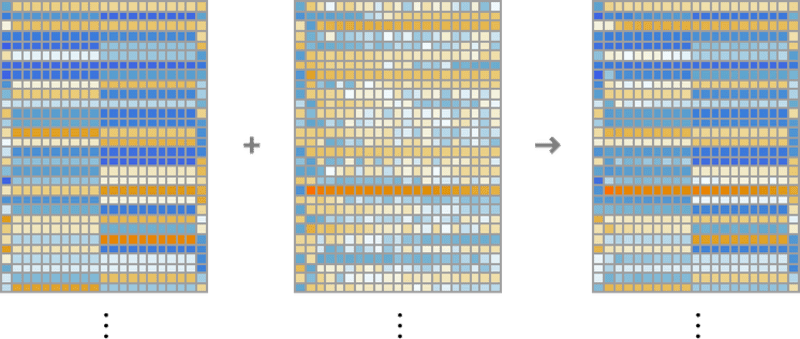

The input is a vector of n tokens (represented as in the previous section by integers from 1 to about 50,000). Each of these tokens is converted (by a single-layer neural net) into an embedding vector (of length 768 for GPT-2 and 12,288 for ChatGPT’s GPT-3). Meanwhile, there’s a “secondary pathway” that takes the sequence of (integer) positions for the tokens, and from these integers creates another embedding vector. And finally the embedding vectors from the token value and the token position are added together to produce the final sequence of embedding vectors from the embedding module.
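The embedding module’s arithmetic is just two table lookups and an addition. Looking up row `t` of a table is the same as multiplying a one-hot vector by a weight matrix, which is why it counts as a single-layer neural net. In this sketch the sizes and the random values (standing in for learned ones) are assumptions, and token IDs are 0-based list indices rather than the 1-based integers mentioned above.

```python
import random

VOCAB_SIZE = 1000   # toy; GPT-2 has about 50,000 tokens
MAX_POSITIONS = 64  # toy; assumed maximum sequence length
DIM = 16            # toy; 768 in GPT-2, 12,288 in GPT-3

rng = random.Random(0)
# Random stand-ins for the learned token-value and token-position tables.
token_table = [[rng.gauss(0, 0.02) for _ in range(DIM)] for _ in range(VOCAB_SIZE)]
position_table = [[rng.gauss(0, 0.02) for _ in range(DIM)] for _ in range(MAX_POSITIONS)]

def embed(token_ids):
    """Token-value embedding plus token-position embedding, added elementwise."""
    if len(token_ids) > MAX_POSITIONS:
        raise ValueError("sequence longer than the positional table")
    return [
        [t + p for t, p in zip(token_table[tok], position_table[pos])]
        for pos, tok in enumerate(token_ids)
    ]

# Ten copies of one token followed by ten of another, echoing the
# "hello ... bye ..." example; IDs 7 and 9 are arbitrary stand-ins.
seq = [7] * 10 + [9] * 10
vectors = embed(seq)
```

Because position is added in, the ten copies of the same token end up with ten different vectors.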

Why does one just add the token-value and token-position embedding vectors together? I don’t think there’s any particular science to this. It’s just that various different things have been tried, and this is one that seems to work. And it’s part of the lore of neural nets that, in some sense, so long as the setup one has is “roughly right”, it’s usually possible to home in on details just by doing sufficient training, without ever really needing to “understand at an engineering level” quite how the neural net has ended up configuring itself.

Here’s what the embedding module does, operating on the string hello hello hello hello hello hello hello hello hello hello bye bye bye bye bye bye bye bye bye bye:

The elements of the embedding vector for each token are shown down the page, and across the page we see first a run of “hello” embeddings, followed by a run of “bye” ones. The second array above is the positional embedding, with its somewhat-random-looking structure being just what “happened to be learned” (in this case in GPT-2).

OK, so after the embedding module comes the “main event” of the transformer: a sequence of so-called “attention blocks” (12 for GPT-2, 96 for ChatGPT’s GPT-3). It’s all pretty complicated, and reminiscent of typical large hard-to-understand engineering systems, or, for that matter, biological systems. But anyway, here’s a schematic representation of a single “attention block” (for GPT-2):

Within each such attention block there is a collection of “attention heads” (12 for GPT-2, 96 for ChatGPT’s GPT-3), each of which operates independently on different chunks of values in the embedding vector. (And, yes, we don’t know any particular reason why it’s a good idea to split up the embedding vector, or what the different parts of it “mean”; this is just one of those things that’s been “found to work”.)
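The split itself is simple bookkeeping. In GPT-2-style implementations, the vector is first multiplied by learned matrices (for “queries”, “keys” and “values”), and it’s those results that get cut into per-head chunks; this sketch shows only the chunking. The numbers work out evenly: 768 / 12 = 64 values per head in GPT-2, and 12,288 / 96 = 128 in GPT-3.

```python
def split_heads(vector, n_heads):
    """Cut an embedding vector into n_heads equal, contiguous chunks."""
    if len(vector) % n_heads:
        raise ValueError("embedding length must be divisible by the number of heads")
    size = len(vector) // n_heads
    return [vector[h * size:(h + 1) * size] for h in range(n_heads)]

def merge_heads(chunks):
    """Concatenate per-head chunks back into one vector."""
    return [x for chunk in chunks for x in chunk]

vec = list(range(768))
heads = split_heads(vec, 12)
print(len(heads), len(heads[0]))  # 12 64
```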

OK, so what do the attention heads do? Basically they’re a way of “looking back” in the sequence of tokens (i.e. in the text produced so far), and “packaging up the past” in a form that’s useful for finding the next token. In the first section above we talked about using 2-gram probabilities to pick words based on their immediate predecessors. What the “attention” mechanism in transformers does is to allow “attention to” even much earlier words, thus potentially capturing the way, say, verbs can refer to nouns that appear many words before them in a sentence.
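Here’s a minimal single-head sketch in the standard “scaled dot-product” form. Each token scores every earlier token (and itself), turns the scores into weights with a softmax, and outputs a weighted recombination of their vectors; later tokens get zero weight, so a position can only look back. In a real head the queries, keys and values are produced by learned matrices; here, as a simplifying assumption, the raw vectors are used for all three. The returned `weights` matrix holds the “recombination weights”: each row sums to 1, and entries above the diagonal are zero.

```python
import math

def causal_attention(queries, keys, values):
    """One attention head with a causal mask.

    Token i may only look back at tokens 0..i. Returns the recombined
    vectors and the matrix of attention weights.
    """
    d = len(queries[0])
    n = len(queries)
    outputs, weights = [], []
    for i, q in enumerate(queries):
        # Similarity of this token's query to each earlier key, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d) for j in range(i + 1)]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        row = [e / total for e in exps] + [0.0] * (n - i - 1)
        weights.append(row)
        # Weighted recombination of the earlier value vectors.
        outputs.append([sum(row[j] * values[j][k] for j in range(i + 1))
                        for k in range(len(values[0]))])
    return outputs, weights

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, w = causal_attention(x, x, x)
```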

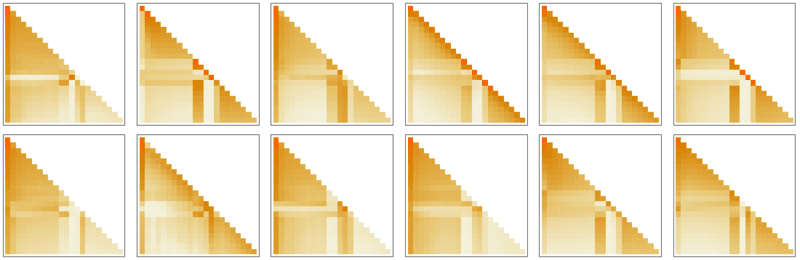

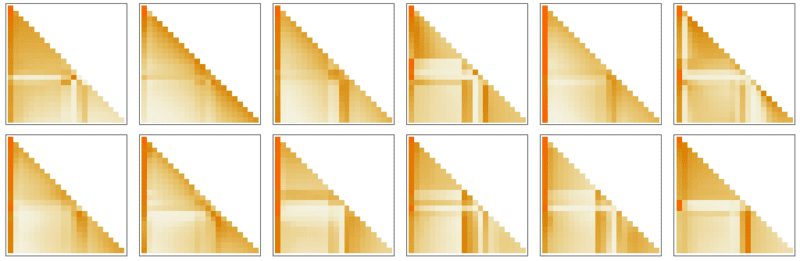

At a more detailed level, what an attention head does is to recombine chunks in the embedding vectors associated with different tokens, with certain weights. And so, for example, the 12 attention heads in the first attention block (in GPT-2) have the following (“look-back-all-the-way-to-the-beginning-of-the-sequence-of-tokens”) patterns of “recombination weights” for the “hello, bye” string above:

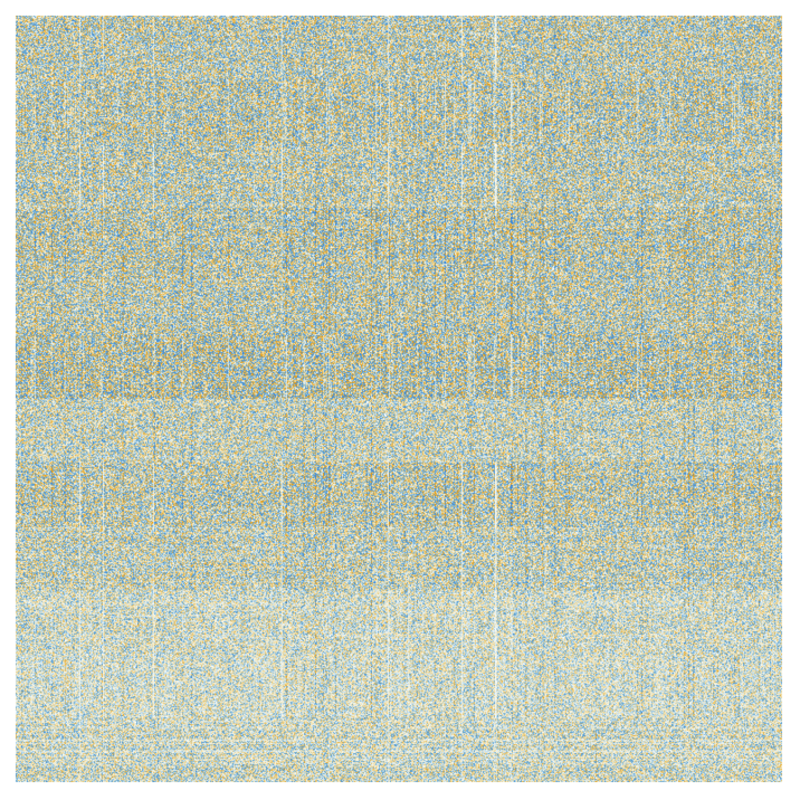

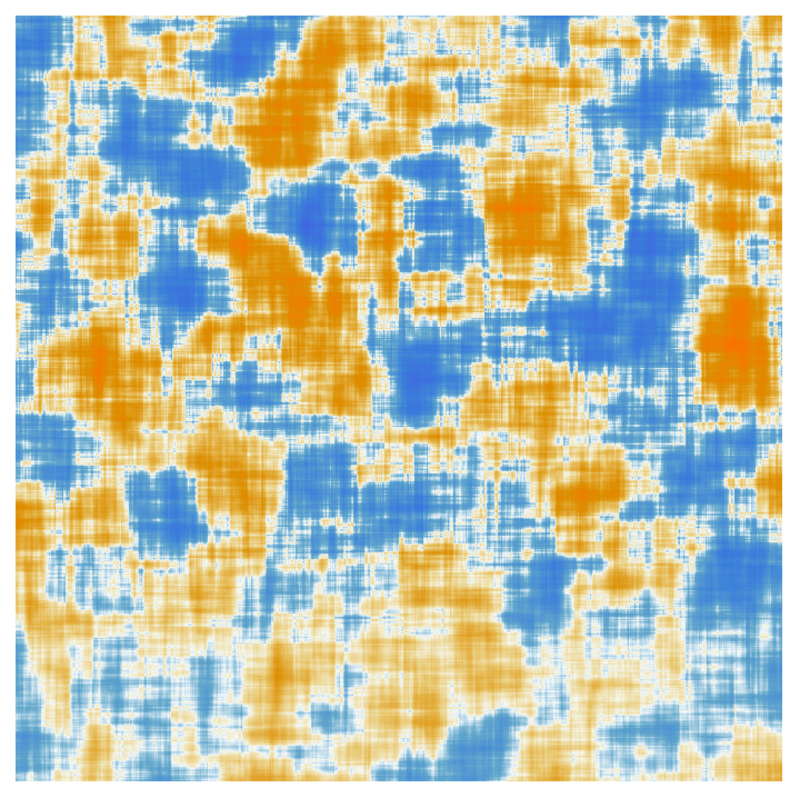

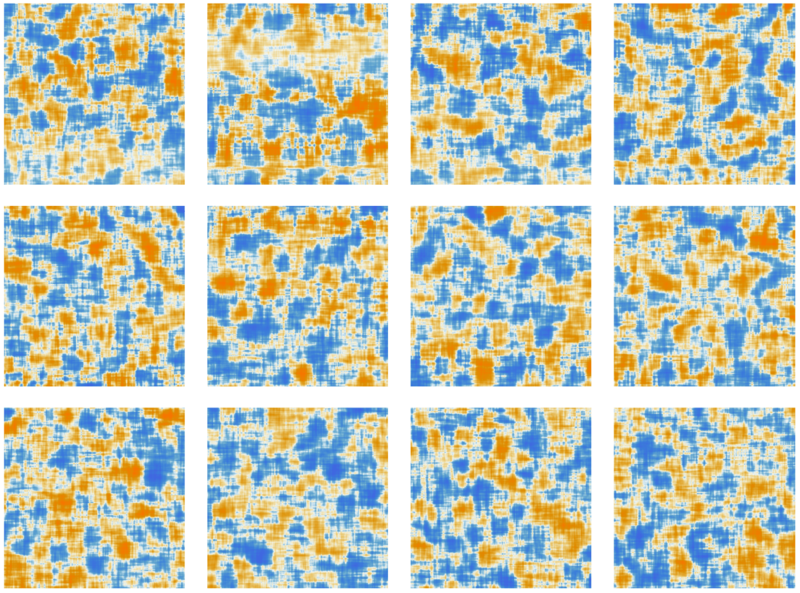

After being processed by the attention heads, the resulting “re-weighted embedding vector” (of length 768 for GPT-2 and length 12,288 for ChatGPT’s GPT-3) is passed through a standard “fully connected” neural net layer. It’s hard to get a handle on what this layer is doing. But here’s a plot of the 768×768 matrix of weights it’s using (here for GPT-2):

Taking 64×64 moving averages, some (random-walk-ish) structure begins to emerge:

What determines this structure? Ultimately it’s presumably some “neural net encoding” of features of human language. But as of now, what those features might be is quite unknown. In effect, we’re “opening up the brain of ChatGPT” (or at least GPT-2) and discovering, yes, it’s complicated in there, and we don’t understand it, even though in the end it’s producing recognizable human language.
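The smoothing used for these weight plots is easy to reproduce in outline. Assuming “64×64 moving average” means averaging every 64×64 window at stride 1 (an assumption about the exact procedure), a 768×768 matrix shrinks to 705×705. The sketch uses a 2D prefix-sum table so that each window average costs a constant number of operations.

```python
def moving_average_2d(matrix, k):
    """Average every k-by-k window (stride 1) of a matrix."""
    n, m = len(matrix), len(matrix[0])
    # S[i][j] holds the sum of matrix[0..i-1][0..j-1].
    S = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(n):
        for j in range(m):
            S[i + 1][j + 1] = matrix[i][j] + S[i][j + 1] + S[i + 1][j] - S[i][j]
    return [
        [(S[i + k][j + k] - S[i][j + k] - S[i + k][j] + S[i][j]) / (k * k)
         for j in range(m - k + 1)]
        for i in range(n - k + 1)
    ]

print(moving_average_2d([[1, 2, 3], [4, 5, 6], [7, 8, 9]], 2))  # [[3.0, 4.0], [6.0, 7.0]]
```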

OK, so after going through one attention block, we’ve got a new embedding vector, which is then successively passed through additional attention blocks (a total of 12 for GPT-2; 96 for GPT-3). Each attention block has its own particular pattern of “attention” and “fully connected” weights. Here, for GPT-2, is the sequence of attention weights for the “hello, bye” input, for the first attention head:

And here are the (moving-averaged) “matrices” for the fully connected layers:

Curiously, even though these “matrices of weights” in different attention blocks look quite similar, the distributions of the sizes of weights can be somewhat different (and are not always Gaussian):

So after going through all these attention blocks, what is the net effect of the transformer? Essentially it’s to transform the original collection of embeddings for the sequence of tokens into a final collection. And the particular way ChatGPT works is then to pick up the last embedding in this collection, and “decode” it to produce a list of probabilities for what token should come next.

So that’s in outline what’s inside ChatGPT. It may seem complicated (not least because of its many inevitably somewhat arbitrary “engineering choices”), but actually the ultimate elements involved are remarkably simple. Because in the end what we’re dealing with is just a neural net made of “artificial neurons”, each doing the simple operation of taking a collection of numerical inputs, and then combining them with certain weights.
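A single artificial neuron fits in one line: a weighted sum of its inputs, plus a bias, passed through a nonlinear “activation function”. The bias and the choice of activation are standard details the text doesn’t spell out; GPT-2 uses a GELU-type activation, so an exact GELU is included alongside ReLU and the default tanh.

```python
import math

def relu(z):
    return max(0.0, z)

def gelu(z):
    # Exact GELU via the Gaussian CDF (GPT-2 uses a GELU-type activation).
    return 0.5 * z * (1.0 + math.erf(z / math.sqrt(2.0)))

def neuron(inputs, weights, bias, activation=math.tanh):
    """One artificial neuron: weighted sum of inputs plus a bias, then a nonlinearity."""
    return activation(sum(w * x for w, x in zip(weights, inputs)) + bias)

def layer(inputs, weight_rows, biases, activation=math.tanh):
    """A fully connected layer is just many neurons reading the same inputs."""
    return [neuron(inputs, w, b, activation) for w, b in zip(weight_rows, biases)]

out = neuron([1.0, 2.0], [0.5, -0.25], 0.1, activation=relu)
print(out)  # 0.1
```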

The original input to ChatGPT is an array of numbers (the embedding vectors for the tokens so far), and what happens when ChatGPT “runs” to produce a new token is just that these numbers “ripple through” the layers of the neural net, with each neuron “doing its thing” and passing the result to neurons on the next layer. There’s no looping or “going back”. Everything just “feeds forward” through the network.

It’s a very different setup from a typical computational system (like a Turing machine) in which results are repeatedly “reprocessed” by the same computational elements. Here, at least in generating a given token of output, each computational element (i.e. neuron) is used only once.

But there is in a sense still an “outer loop” that reuses computational elements even in ChatGPT. Because when ChatGPT is going to generate a new token, it always “reads” (i.e. takes as input) the whole sequence of tokens that come before it, including tokens that ChatGPT itself has “written” previously. And we can think of this setup as meaning that ChatGPT does, at least at its outermost level, involve a “feedback loop”, albeit one in which every iteration is explicitly visible as a token that appears in the text that it generates.
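The outer loop can be sketched in a few lines. The `toy_next_token` function is a hypothetical stand-in for the whole feed-forward network: it maps the entire sequence so far to one next token. A real model would produce probabilities and sample from them, but the loop structure, re-reading everything including its own earlier output, is the same.

```python
def generate(next_token, prompt, n_new):
    """Outer loop: each step re-reads the whole sequence so far,
    including tokens the loop itself appended earlier."""
    tokens = list(prompt)
    for _ in range(n_new):
        tokens.append(next_token(tokens))  # one feed-forward pass per new token
    return tokens

# Hypothetical stand-in "network": a fixed rule from the whole sequence
# to one next token (a real model returns probabilities instead).
def toy_next_token(tokens):
    return (sum(tokens) + len(tokens)) % 10

print(generate(toy_next_token, [1, 2], 3))  # [1, 2, 5, 1, 3]
```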

However let’s come again to the core of ChatGPT: the neural web that’s being repeatedly used to generate every token. At some stage it’s quite simple: a complete assortment of an identical synthetic neurons. And a few elements of the community simply encompass (“fully connected”) layers of neurons wherein each neuron on a given layer is related (with some weight) to each neuron on the layer earlier than. However notably with its transformer structure, ChatGPT has elements with extra construction, wherein solely particular neurons on completely different layers are related. (After all, one may nonetheless say that “all neurons are related”—however some simply have zero weight.)